Introduction to Clustering

Clustering is a fundamental technique in the realms of data analysis and machine learning, serving as a catalyst for extracting meaningful insights from complex datasets. At its core, clustering involves the automatic grouping of similar data points, enabling analysts to categorize items based on inherent characteristics without prior knowledge of the data labels.

In various domains, such as market research, biology, and social science, clustering plays a pivotal role in identifying patterns and establishing relationships among data entities. For instance, marketers employ clustering techniques to segment their customer base into distinct groups, which allows for targeted marketing strategies and enhanced customer satisfaction. Similarly, in biological research, clustering helps scientists identify groups of genes with similar functions, contributing to our understanding of cellular processes.

The primary goal of clustering is to minimize the variance within each cluster while maximizing the variance between different clusters. This drive towards homogeneity within clusters enhances the interpretability of the data and aids in discovering the underlying structure of the dataset. Various algorithms exist to tackle clustering tasks, with K-Means being one of the most widely used due to its simplicity and efficiency.

Clustering also finds application in diverse fields to improve decision-making processes. For example, in the realm of customer relationship management, businesses employ clustering to predict customer behavior, thereby crafting personalized experiences. As companies increasingly harness the power of big data, understanding clustering becomes essential for driving strategic initiatives and promoting effective data-driven decision-making.

The Importance of Clustering in Data Science

Clustering is a significant technique in data science, primarily because it allows analysts to identify structures and patterns within unlabeled data. This capability is particularly beneficial across various fields, including marketing, biology, and image processing. By applying clustering methods, professionals can segment data points into distinct groups, leading to insights that inform strategic decisions and improve operational outcomes.

For instance, in marketing, businesses leverage clustering to perform customer segmentation. By grouping consumers based on purchasing behaviors and preferences, companies can tailor their marketing strategies to fit the distinct needs of each segment. This targeted approach not only enhances customer satisfaction but also boosts conversion rates and customer loyalty.

In the realm of biology, clustering techniques are pivotal in analyzing genetic data or understanding ecological systems. Researchers use clustering to classify species or to identify genetic markers that show similar characteristics. Such insights can lead to advancements in conservation efforts and improved health outcomes by identifying potential genetic predispositions to diseases.

Furthermore, in image processing, clustering algorithms are utilized to enhance the processing of visual data. Techniques such as K-Means clustering make it possible to segment images into regions that share common attributes, thereby enabling tasks such as image compression, object recognition, and scene classification. This application is especially critical in fields like medical imaging, where precise segmentation can significantly impact diagnostic accuracy.

Overall, the importance of clustering in data science cannot be overstated. By facilitating the interpretation of complex data sets and enabling the identification of underlying patterns, clustering serves as a foundational tool across various sectors. Its versatility and efficacy in extracting meaningful insights ensure that it remains an integral part of the data analysis toolkit.

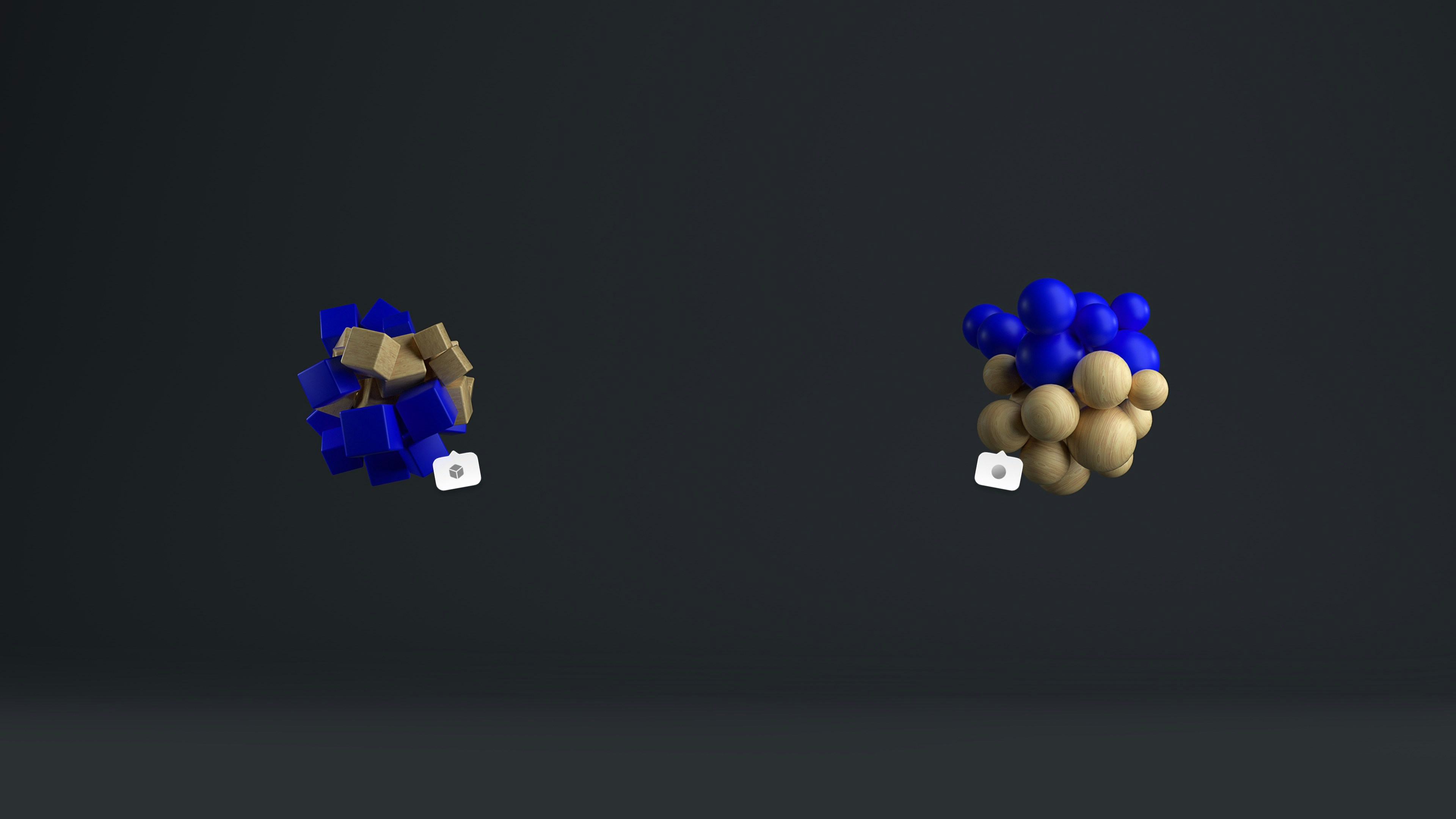

Types of Clustering Algorithms

Clustering algorithms are fundamental tools in data mining and machine learning, as they enable the grouping of data points based on their similarities. Various clustering techniques exist, each tailored to meet specific needs in clustering analysis. This section explores three primary types of clustering algorithms: hierarchical, partitioning, and density-based methods.

Hierarchical clustering is a method that builds a hierarchy of clusters, either by starting with each data point as a separate cluster and merging them (agglomerative approach) or by starting with one all-encompassing cluster and splitting it (divisive approach). This technique provides a clear tree-like representation, known as a dendrogram, that illustrates the relationships and similarities between clusters, making it particularly useful for exploratory data analysis and when the number of clusters is not predetermined.
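To make the agglomerative (bottom-up) approach concrete, here is a minimal sketch using only the Python standard library. The function name `single_linkage`, the choice of single linkage as the merge criterion, and the sample points are all assumptions made for illustration; production libraries use far more efficient algorithms and offer other linkage rules.

```python
import math

def single_linkage(points, n_clusters):
    """Naive agglomerative clustering: merge the two closest clusters
    until only n_clusters remain. Cluster distance is single linkage
    (the distance between the closest pair of members)."""
    clusters = [[i] for i in range(len(points))]  # start: one cluster per point

    def linkage(a, b):
        return min(math.dist(points[i], points[j]) for i in a for j in b)

    while len(clusters) > n_clusters:
        # Find the pair of clusters with the smallest linkage distance.
        _, i, j = min(
            (linkage(clusters[i], clusters[j]), i, j)
            for i in range(len(clusters))
            for j in range(i + 1, len(clusters))
        )
        clusters[i] = clusters[i] + clusters[j]  # merge cluster j into cluster i
        del clusters[j]
    return clusters

# Illustrative data: two small groups and one isolated point.
points = [(0, 0), (0, 1), (1, 0), (8, 8), (8, 9), (20, 20)]
print(single_linkage(points, 3))  # clusters are lists of point indices
```

Recording the order of the merges, rather than stopping at a fixed count, is what would produce the dendrogram described above.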

Partitioning clustering, exemplified by the well-known K-means algorithm, involves dividing the dataset into a predefined number of clusters. The process involves selecting initial centroids and iterating to assign data points to the nearest centroid, followed by recalculating centroids based on these assignments. While K-means is efficient for large datasets, it does require the user to specify the number of clusters beforehand and is sensitive to outliers, which can skew results.

Density-based clustering, such as the DBSCAN (Density-Based Spatial Clustering of Applications with Noise) algorithm, groups together data points that are closely packed while marking as outliers points that lie alone in low-density regions. This method is particularly effective for datasets with irregularly shaped clusters and noise, allowing for the identification of complex patterns without the need for predefined cluster counts. Because it relies on a single density threshold, however, it can struggle when clusters differ widely in density.
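The density idea can be sketched in a few dozen lines of standard-library Python. This is a simplified illustration, not a reference implementation: the parameter names `eps` (neighborhood radius) and `min_samples` (points needed to form a dense region) follow common usage, while the brute-force neighbor search and the sample data are assumptions chosen for readability.

```python
import math

NOISE = -1

def dbscan(points, eps, min_samples):
    """Minimal DBSCAN sketch. Returns one label per point; NOISE marks outliers."""
    labels = [None] * len(points)  # None = not yet visited

    def neighbors(i):
        # Indices of all points within eps of point i (including i itself).
        return [j for j in range(len(points))
                if math.dist(points[i], points[j]) <= eps]

    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_samples:
            labels[i] = NOISE  # may later be claimed as a border point
            continue
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_samples:  # j is a core point: keep expanding
                queue.extend(j_neighbors)
        cluster += 1
    return labels

# Illustrative data: two dense groups and one isolated point.
points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 10), (10, 11), (11, 10), (11, 11),
          (5, 30)]
print(dbscan(points, eps=1.5, min_samples=3))
```

Note that the number of clusters is never passed in; it falls out of the two density parameters.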

Each of these clustering approaches offers distinct advantages and is best suited for different types of datasets and analysis objectives. By understanding these varying methods, practitioners can choose the appropriate algorithm to meet their specific clustering needs.

K-Means Clustering Explained

K-Means clustering is a widely adopted algorithm in machine learning and data mining, known for its simplicity and efficiency in partitioning a dataset into distinct groups. The fundamental objective of K-Means is to minimize the variance within each cluster while maximizing the variance between clusters, thus fostering greater separation. The algorithm operates through a sequence of iterative steps that facilitate the identification of optimal cluster centers or centroids.

The first step in the K-Means algorithm involves the initialization of centroids. This can be achieved by randomly selecting K data points from the dataset or through more sophisticated methods such as K-Means++, which spreads the initial centroids apart to give the algorithm a better starting point. Once the centroids are initialized, each data point is assigned to its nearest centroid, as measured by Euclidean distance.

Subsequently, the algorithm updates the centroids, which involves recalculating the mean of all data points assigned to each cluster. This mean then becomes the new centroid for that cluster. The iterative process of assignment and updating continues until convergence occurs; that is, when the centroids no longer change significantly, indicating that the clusters have stabilized.
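The initialize, assign, update, and converge steps described above can be sketched as follows. This is a minimal standard-library version written for clarity rather than speed; the function name `kmeans`, its parameters (`initial_centroids`, `max_iter`, `tol`, `seed`), and the sample points are assumptions for illustration. It uses plain random initialization, not K-Means++.

```python
import math
import random

def kmeans(points, k, initial_centroids=None, max_iter=100, tol=1e-9, seed=0):
    """Plain K-Means (Lloyd's algorithm). Returns (centroids, labels)."""
    rng = random.Random(seed)
    # Step 1: initialization (random data points unless centroids are given).
    centroids = [tuple(c) for c in (initial_centroids or rng.sample(points, k))]
    labels = [0] * len(points)
    for _ in range(max_iter):
        # Step 2: assign each point to its nearest centroid (Euclidean distance).
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        # Step 3: move each centroid to the mean of its assigned points.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(
                    tuple(sum(x) / len(members) for x in zip(*members)))
            else:
                new_centroids.append(centroids[c])  # empty cluster: keep old centroid
        # Step 4: stop once no centroid moves by more than tol.
        shift = max(math.dist(a, b) for a, b in zip(centroids, new_centroids))
        centroids = new_centroids
        if shift <= tol:
            break
    return centroids, labels

# Illustrative data: two well-separated groups of four points each.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 0), (10, 1), (11, 0), (11, 1)]
centroids, labels = kmeans(points, k=2)
print(centroids, labels)
```

Passing `initial_centroids` explicitly makes a run fully reproducible, which is handy when comparing the effect of different starting points.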

The effectiveness of K-Means clustering heavily relies on the selection of the number of clusters, denoted as K, which can greatly influence the structure of the final output. Techniques such as the Elbow Method or silhouette analysis are commonly employed to determine the optimal K, ensuring the resultant clusters reflect meaningful patterns within the data. Overall, K-Means is a powerful tool for data analysis, allowing for efficient cluster formation and insightful data interpretation.

Choosing the Right Number of Clusters

Determining the optimal number of clusters in K-Means clustering is essential to ensure the effectiveness of the model. An appropriate choice can lead to valuable insights, while a poor selection may create misleading representations of the underlying data structure. Several methods can aid in identifying the best number of clusters, with the elbow method, silhouette score, and cross-validation being among the most widely used.

The elbow method is straightforward: plot the within-cluster sum of squared distances (or, equivalently, the share of variance explained) as a function of the number of clusters. As the number of clusters increases, one typically observes a point where the rate of improvement slows significantly; this point is referred to as the elbow. Selecting the number of clusters at the elbow suggests that adding more clusters does not yield substantial further improvement, indicating a reasonable balance between fit and simplicity. In practice the elbow can be ambiguous, so it is best read alongside another criterion.
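As a rough illustration of the elbow method, the sketch below runs a compact K-Means for several values of K and records the within-cluster sum of squared distances (often called inertia). The helper names `farthest_first` and `kmeans_inertia` are hypothetical, and the deterministic farthest-first seeding is an assumption made here so the example is reproducible; it stands in for the random or K-Means++ initialization normally used.

```python
import math

def farthest_first(points, k):
    """Deterministic seeding (an assumption for reproducibility): start from
    the first point, then repeatedly add the point farthest from all
    centroids chosen so far."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    return centroids

def kmeans_inertia(points, k, max_iter=100):
    """Run K-Means and return the within-cluster sum of squared distances."""
    centroids = farthest_first(points, k)
    for _ in range(max_iter):
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        updated = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            updated.append(tuple(sum(x) / len(members) for x in zip(*members))
                           if members else centroids[c])
        if updated == centroids:  # converged: no centroid moved
            break
        centroids = updated
    # Inertia: squared distance from each point to its nearest centroid.
    return sum(min(math.dist(p, c) for c in centroids) ** 2 for p in points)

# Illustrative data: three well-separated groups of four points each.
points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 0), (10, 1), (11, 0), (11, 1),
          (5, 10), (5, 11), (6, 10), (6, 11)]
inertias = {k: kmeans_inertia(points, k) for k in range(1, 6)}
for k, value in inertias.items():
    print(k, round(value, 2))
```

On this toy data the inertia falls sharply up to K = 3 and barely changes afterwards, which is the elbow. Real datasets rarely show such a clean bend.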

Another robust metric is the silhouette score, which measures how similar an object is to its own cluster compared to other clusters. The silhouette score ranges from -1 to +1, where a score close to +1 indicates that objects are well clustered. By calculating the silhouette coefficient for different values of K, one can ascertain which number yields the highest value, thus identifying the most suitable number of clusters.
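The silhouette computation can be sketched directly from its definition. For each point, a is the mean distance to the other members of its own cluster, b is the smallest mean distance to the members of any other cluster, and the coefficient is (b - a) / max(a, b). The function name `silhouette_score` and the toy data are assumptions for illustration, and scoring a single-member cluster as 0 is one common convention.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (needs at least 2 clusters)."""
    clusters = {}
    for p, label in zip(points, labels):
        clusters.setdefault(label, []).append(p)

    scores = []
    for p, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # convention: a singleton cluster scores 0
            continue
        # a: mean distance to the other members of the point's own cluster
        # (the point's distance to itself contributes 0 to the sum).
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        # b: smallest mean distance to the members of any other cluster.
        b = min(sum(math.dist(p, q) for q in other) / len(other)
                for other_label, other in clusters.items()
                if other_label != label)
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)

# Illustrative data: two tight pairs far apart.
points = [(0, 0), (1, 0), (10, 0), (11, 0)]
good = silhouette_score(points, [0, 0, 1, 1])  # labels match the structure
bad = silhouette_score(points, [0, 1, 0, 1])   # labels cut across it
print(round(good, 3), round(bad, 3))
```

The sensible labeling scores close to +1 while the labeling that splits each pair scores below zero, matching the interpretation given above.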

Cross-validation, adapted to the unsupervised setting as a stability check, can also serve as a useful tool in this context. By repeating the clustering process on different subsets of the data and evaluating how consistently the same clusters form, one can assess the robustness of the chosen K value. A consistent clustering solution across various data subsets increases confidence in the selected number of clusters.

In summary, using techniques such as the elbow method, silhouette scores, and cross-validation assists practitioners in making informed decisions regarding the optimal number of clusters in K-Means clustering, ultimately ensuring the effectiveness of the analysis and application of the model.

Advantages and Limitations of K-Means

K-Means clustering is a widely used algorithm in data analysis due to its numerous advantages. One of its most prominent strengths is simplicity. The algorithm’s basic concept—grouping data points into a predefined number of clusters (k)—is easy to understand and implement. This simplicity makes it accessible for users across various fields, enabling data scientists and researchers to quickly apply the method without needing extensive background knowledge in complex statistics.

Furthermore, K-Means is efficient when dealing with large datasets. Each iteration takes time roughly proportional to the number of points multiplied by the number of clusters and the number of dimensions, which often allows it to process vast amounts of data more quickly than many other clustering algorithms. This efficiency is particularly valuable in real-time applications where speed is critical.

However, despite its advantages, K-Means also has notable limitations. A significant drawback is its sensitivity to the initialization of cluster centroids. Different random initializations can lead to different clustering results, which may not be optimal. This sensitivity necessitates the implementation of multiple runs or the usage of more advanced techniques to achieve consistent results.
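A small deterministic example shows how much the starting centroids can matter. The sketch below runs the same compact K-Means loop from two hand-picked initializations on four points arranged as a wide rectangle. The helper name `lloyd` and the data are assumptions for illustration.

```python
import math

def lloyd(points, centroids, max_iter=100):
    """Run K-Means from the given starting centroids; return (labels, inertia)."""
    centroids = [tuple(c) for c in centroids]
    k = len(centroids)
    labels = []
    for _ in range(max_iter):
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        updated = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            updated.append(tuple(sum(x) / len(members) for x in zip(*members))
                           if members else centroids[c])
        if updated == centroids:  # converged: no centroid moved
            break
        centroids = updated
    inertia = sum(math.dist(p, centroids[label]) ** 2
                  for p, label in zip(points, labels))
    return labels, inertia

# Four corners of a wide rectangle: the natural split is left vs. right.
points = [(0, 0), (0, 1), (4, 0), (4, 1)]

labels_a, inertia_a = lloyd(points, [(0, 0), (4, 0)])  # one seed per side
labels_b, inertia_b = lloyd(points, [(0, 0), (0, 1)])  # both seeds on the left
print(labels_a, inertia_a)
print(labels_b, inertia_b)
```

The second start converges to a top-versus-bottom split with a much higher within-cluster sum of squares, and the algorithm cannot escape it. Running several initializations and keeping the result with the lowest inertia is the usual remedy.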

Additionally, K-Means can be significantly affected by outliers. Since the algorithm relies on the mean of the data points to determine the centroids, the presence of outliers can skew the results, leading to misleading conclusions. This characteristic underscores the importance of pre-processing data to remove or mitigate the impact of outliers before applying K-Means.
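A quick arithmetic check illustrates why the mean is so sensitive. The values below are made up for illustration; the median is shown only for contrast and is not part of K-Means.

```python
import statistics

values = [1.0, 2.0, 3.0, 4.0]
with_outlier = values + [100.0]  # one extreme value added

print(statistics.mean(values))          # 2.5
print(statistics.mean(with_outlier))    # 22.0, dragged far from the bulk of the data
print(statistics.median(with_outlier))  # 3.0, barely affected
```

A centroid computed as a mean behaves the same way in every dimension: a single extreme point can pull it away from where most of the cluster actually sits.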

Another limitation is the algorithm’s assumption of spherical clusters. K-Means generally performs well when clusters are spherical and evenly sized. However, in real-world scenarios, data clusters can have various shapes and densities, which K-Means may struggle to properly identify. These assumptions can lead to poor clustering performance in many practical applications.

Real-World Applications of Clustering

Clustering techniques find numerous practical applications across various fields, illustrating their versatility and importance in data analysis. One major application is customer segmentation in marketing. Businesses often use clustering algorithms to identify distinct groups within their customer base. By analyzing purchasing behaviors and preferences, companies can tailor their marketing strategies to meet the specific needs of each segment. This targeted approach improves customer satisfaction and increases the effectiveness of promotional campaigns, ultimately leading to higher sales conversions.

Another vital application is anomaly detection, particularly in fraud detection within financial systems. Clustering algorithms can analyze transaction data to establish what constitutes typical behavior among users. Any transaction that deviates significantly from these established clusters can then be flagged as potentially fraudulent. Such techniques are essential for financial institutions that need to monitor vast volumes of transactions in real-time while minimizing false positives.
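One simple way to turn clusters into an anomaly detector is to flag any point that lies too far from every cluster center. The sketch below assumes the centroids have already been learned from historical data; the function name `flag_anomalies`, the two-feature points, and the threshold of 3.0 are all hypothetical, and a real system would tune the threshold and use domain-specific features.

```python
import math

def flag_anomalies(points, centroids, threshold):
    """Return the points whose distance to the nearest centroid exceeds threshold."""
    return [p for p in points
            if min(math.dist(p, c) for c in centroids) > threshold]

# Hypothetical centroids describing two kinds of typical behavior.
centroids = [(0.5, 0.5), (10.5, 0.5)]
# Hypothetical new observations to screen.
observations = [(0, 1), (10, 0), (5, 30), (11, 1)]

print(flag_anomalies(observations, centroids, threshold=3.0))
```

Raising the threshold reduces false positives at the cost of missing subtler deviations, which is the trade-off mentioned above.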

In the field of biology, clustering is pivotal in gene sequencing and analysis. Researchers can group genes based on their expression patterns or functional similarities, allowing them to identify relationships and behaviors within biological systems. Clustering techniques facilitate the analysis of complex genomic data, enabling scientists to discover new gene functions, understand diseases better, and even develop personalized medicine approaches.

Additionally, the application of clustering extends to image processing, social network analysis, and even urban planning. For instance, in image processing, clustering can help in object recognition by grouping similar pixel colors together, making it easier to identify different components of an image. In urban planning, clustering data can reveal patterns in population density, assisting policymakers in efficient resource allocation.

Advanced Clustering Techniques Beyond K-Means

While K-Means is a widely used clustering method due to its simplicity and efficiency, it is essential to explore more advanced clustering techniques that can offer improved performance in certain scenarios. Techniques such as DBSCAN, hierarchical clustering, and Gaussian Mixture Models (GMM) provide alternatives that address some of the limitations of K-Means.

DBSCAN (Density-Based Spatial Clustering of Applications with Noise) is a density-based clustering technique that excels at identifying clusters with varied shapes and sizes. Unlike K-Means, which requires pre-defining the number of clusters, DBSCAN identifies clusters based on the density of data points. It groups together closely packed points while marking outlying points as noise. This feature makes DBSCAN particularly effective for datasets with noise and outliers, enabling it to discover meaningful clusters that K-Means might overlook.

Hierarchical clustering is another advanced technique that builds a hierarchy of clusters. This method can be agglomerative (bottom-up) or divisive (top-down) and allows users to create a dendrogram that visualizes the relationships between clusters. Hierarchical clustering does not require the number of clusters to be established beforehand, offering more flexibility than K-Means. It is particularly useful for exploratory data analysis where the goal is to discover the underlying structure of data.

Gaussian Mixture Models (GMM) introduce a probabilistic approach to clustering, treating data as a mixture of multiple Gaussian distributions. This technique allows for the modeling of clusters that may have different shapes and sizes, accommodating the complexities of real-world data more effectively than K-Means. GMM also offers the advantage of estimating probabilistic memberships of data points to clusters, providing a richer understanding of the data structure.
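The probabilistic memberships mentioned above can be illustrated with a one-dimensional mixture. The sketch below computes, for a single value, the probability that it came from each Gaussian component. The function names and the mixture parameters are assumptions for illustration; in a real GMM the weights, means, and standard deviations would be estimated from data, typically with the expectation-maximization algorithm, which is not shown here.

```python
import math

def gaussian_pdf(x, mean, std):
    """Density of a one-dimensional normal distribution at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def responsibilities(x, weights, means, stds):
    """Posterior probability that x was generated by each mixture component."""
    weighted = [w * gaussian_pdf(x, m, s)
                for w, m, s in zip(weights, means, stds)]
    total = sum(weighted)
    return [value / total for value in weighted]

# Hypothetical mixture: two equally weighted components centered at 0 and 4.
weights, means, stds = [0.5, 0.5], [0.0, 4.0], [1.0, 1.0]

print(responsibilities(2.0, weights, means, stds))  # halfway between the means
print(responsibilities(0.5, weights, means, stds))  # close to the first component
```

Where K-Means would assign the midpoint to one cluster or the other, the mixture model reports that it belongs equally to both.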

In summary, while K-Means remains a popular choice for clustering tasks, more advanced techniques such as DBSCAN, hierarchical clustering, and Gaussian Mixture Models should be considered. These methods can better accommodate complex data distributions and enhance clustering accuracy, showcasing the importance of selecting the right technique based on the specific characteristics of the dataset at hand.

Conclusion and Future of Clustering

Throughout this exploration of clustering, we have delved into various methodologies, with a primary focus on K-Means, a staple technique in the data mining domain. K-Means is widely recognized for its simplicity and efficiency, especially in handling large datasets. However, as we unraveled the intricacies of clustering, it became evident that there are numerous algorithms available, each catering to different use cases and types of data.

The discussion highlighted critical considerations such as the selection of the appropriate number of clusters, the nature of the data, and the potential for improved performance through alternatives like DBSCAN, hierarchical clustering, and Gaussian Mixture Models. As data continues to proliferate in modern applications, the demand for nuanced and robust clustering solutions is increasingly evident.

Looking forward, the future of clustering presents exciting opportunities for innovation and enhancement. As artificial intelligence and machine learning techniques advance, clustering methods are expected to evolve, incorporating more sophisticated statistical analyses and deep learning algorithms. Enhanced capacity for handling complex, high-dimensional datasets will likely emerge, allowing for better insights and more accurate segmentation.

Moreover, the integration of clustering in real-time data analysis, particularly in fields like healthcare, marketing, and image recognition, illustrates its vital role in shaping decision-making processes. Initiatives promoting increased collaboration between researchers and industry practitioners can further drive developments in this field. As such, fostering a culture of experimentation with clustering techniques is crucial.

In conclusion, while K-Means and traditional clustering algorithms serve as foundations, the exploration into clustering does not end here. Continuous research and embracing new methodologies promise to unveil further capabilities of clustering, ultimately enhancing our understanding and applications of data analytics.