Introduction to Alignment and Its Importance

Alignment, in various contexts, refers to the degree to which systems, processes, or entities work together toward common objectives. In artificial intelligence (AI) and cognitive science, alignment is especially important for the ethics of AI systems and the safety of their deployment.

Within AI, alignment typically means ensuring that the goals and behaviors of an AI system are consistent with human values and expectations. The objective is AI that not only performs tasks effectively but also does so in ways that respect societal norms and ethical standards. A well-aligned AI system can help solve problems while minimizing the risk of unintended consequences or harmful outputs.

The importance of alignment extends beyond operational efficiency. Misalignment in AI can create serious ethical problems, such as biased decision-making or actions that harm individuals or groups. For example, an AI system designed to raise productivity must operate within ethical constraints that prevent the exploitation of workers or the infringement of personal rights. Alignment is therefore not only a technical goal but also an ethical imperative.

Understanding alignment also requires attention to human cognition, which shapes how people interpret and engage with AI. In cognitive science, alignment is a lens for examining where AI complements or contradicts human reasoning, with the aim of building systems that are transparent and comprehensible. Fostering alignment in these domains therefore improves the technology itself while building the trust and acceptance among users on which responsible AI development and deployment depend.

Understanding Probability in the Context of Alignment

Probability is a critical concept when evaluating the potential for alignment in disciplines ranging from artificial intelligence to organizational behavior. It quantifies uncertainty, allowing stakeholders to assess the likelihood of achieving specific outcomes when they attempt alignment. How probability is interpreted and measured depends on the context and the variables involved.

One common way to quantify probability is through statistical models that analyze historical data to forecast future events. In the context of alignment, this could mean analyzing past organizational initiatives to predict the success of a new alignment strategy. For example, by recording how often previous alignment efforts achieved their intended outcomes, organizations can estimate the probability that a comparable effort will succeed. Expert judgment can also be used to gauge the likelihood of alignment, particularly in novel situations where data are scarce. This adds another layer of assessment, allowing teams to incorporate qualitative insights into their decision-making.
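A minimal Python sketch of combining a data-driven estimate with expert judgment, under stated assumptions: the record of past initiatives is hypothetical, each initiative is reduced to a simple succeeded/failed label, the data-driven estimate uses Laplace smoothing (the rule of succession) so that a short record never yields exactly 0 or 1, and the expert estimate is merged through a weighted average (a linear opinion pool). The weight `data_weight` is an illustrative choice, not a recommended value.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """A past alignment initiative and whether it met its stated goal."""
    name: str
    succeeded: bool


def empirical_success_rate(history: list[Initiative]) -> float:
    """Laplace-smoothed success frequency: (successes + 1) / (n + 2)."""
    successes = sum(1 for item in history if item.succeeded)
    return (successes + 1) / (len(history) + 2)


def blended_estimate(data_estimate: float, expert_estimate: float,
                     data_weight: float) -> float:
    """Linear opinion pool: weighted average of data-driven and expert estimates."""
    if not 0.0 <= data_weight <= 1.0:
        raise ValueError("data_weight must lie in [0, 1]")
    return data_weight * data_estimate + (1.0 - data_weight) * expert_estimate


# Hypothetical records; the names are placeholders, not real initiatives.
history = [
    Initiative("reorg", True),
    Initiative("crm-rollout", False),
    Initiative("okr-adoption", True),
    Initiative("merger-integration", False),
    Initiative("process-redesign", True),
]

p_data = empirical_success_rate(history)
p_final = blended_estimate(p_data, expert_estimate=0.4, data_weight=0.7)
print(f"data-driven: {p_data:.3f}, blended with expert view: {p_final:.3f}")
```

With three successes in five records, the smoothed estimate is 4/7 (about 0.57); giving the data 70% weight against an expert estimate of 0.4 yields 0.52. Lowering `data_weight` is one way to express that the historical record is thin or only loosely comparable to the new effort.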

When interpreting probabilities, it is essential to recognize the risks involved. Risk assessment provides a framework for understanding the potential pitfalls of misalignment, helping organizations prioritize strategies. By weighting the potential benefits and costs of each alignment scenario by its assigned probability, decision-makers can navigate uncertainty more systematically. The variance of a probability distribution is also informative: high variance indicates a wide range of plausible outcomes, so the result of an alignment effort is harder to predict and plan for.
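Weighing benefits against scenario probabilities can be made concrete as an expected value, and the spread of outcomes as a variance. The sketch below assumes two hypothetical alignment strategies, each described by a small set of mutually exclusive scenarios with made-up probabilities and net benefits in arbitrary units; none of these numbers come from real data.

```python
import math

Scenario = tuple[float, float]  # (probability, net benefit in arbitrary units)


def _check(scenarios: list[Scenario]) -> None:
    """Reject scenario sets whose probabilities do not sum to 1."""
    total = sum(p for p, _ in scenarios)
    if not math.isclose(total, 1.0):
        raise ValueError(f"probabilities sum to {total}, expected 1")


def expected_value(scenarios: list[Scenario]) -> float:
    """Probability-weighted mean net benefit."""
    _check(scenarios)
    return sum(p * v for p, v in scenarios)


def variance(scenarios: list[Scenario]) -> float:
    """Probability-weighted squared deviation from the expected value."""
    mu = expected_value(scenarios)
    return sum(p * (v - mu) ** 2 for p, v in scenarios)


# Hypothetical strategies: A is modest but steady, B is ambitious but volatile.
strategy_a = [(0.6, 10.0), (0.3, 2.0), (0.1, -20.0)]
strategy_b = [(0.5, 30.0), (0.2, 0.0), (0.3, -30.0)]

for name, scenarios in (("A", strategy_a), ("B", strategy_b)):
    ev = expected_value(scenarios)
    sd = math.sqrt(variance(scenarios))
    print(f"strategy {name}: expected value={ev:.2f}, standard deviation={sd:.2f}")
```

Strategy B has the higher expected value (6.0 versus 4.6) but a far larger variance (684 versus about 80): its average looks better, yet its range of outcomes is much wider and includes a large loss.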

In summary, probability plays a vital role in the alignment process by providing a structured approach to assess uncertainties and risks. By employing statistical methods alongside expert judgments, organizations can gain valuable insights into their potential for achieving alignment while being mindful of the risks involved.

Factors Influencing Alignment Probability

Several factors shape the overall probability of achieving alignment: technological limitations, the complexity of the systems involved, cognitive biases, and ethical considerations. Each is examined below for its effect on the likelihood of alignment.

Technological limitations can substantially lower the probability of alignment. The current state of technology may not support the functionality needed to establish alignment. Limits in processing power, algorithms, and data integrity can hinder the accurate modeling of the systems being aligned. Furthermore, the pace of technological advancement introduces uncertainty, as existing frameworks may quickly become obsolete.

The complexity of the systems involved also affects the probability of alignment. As systems grow more intricate, interactions between components become less predictable, which makes alignment harder to achieve. The many potential variables, unforeseen interactions, and emergent behaviors introduce uncertainties that can derail alignment efforts. Scale exacerbates this complexity, particularly in environments where many agents interact.
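A toy calculation shows how quickly complexity can erode the chance that everything behaves as intended. It rests on two simplifying assumptions, stated plainly because real systems rarely satisfy them: every component behaves as intended with the same probability `p_single`, and components fail independently. The number of distinct component pairs that could interact is also shown, since it grows quadratically, as n(n-1)/2.

```python
def pairwise_interactions(n_components: int) -> int:
    """Number of distinct component pairs that could interact: n(n-1)/2."""
    return n_components * (n_components - 1) // 2


def prob_all_as_intended(p_single: float, n_components: int) -> float:
    """Probability that every component behaves as intended.

    ASSUMPTION: components behave independently, each with probability p_single.
    """
    return p_single ** n_components


for n in (10, 100, 1000):
    pairs = pairwise_interactions(n)
    p_all = prob_all_as_intended(0.99, n)
    print(f"n={n:5d}  pairs={pairs:7d}  P(all as intended | p=0.99)={p_all:.3g}")
```

With `p_single = 0.99`, the probability that all components behave as intended falls from about 0.90 with 10 components to about 0.37 with 100, while the number of possible pairwise interactions rises from 45 to 4,950. Correlated failures or emergent behaviors would change these numbers, which is why the independence assumption is only illustrative.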

Cognitive biases also affect the probability of alignment. Decision-makers may operate under various biases that skew their perceptions and evaluations of alignment strategies. For instance, confirmation bias may lead individuals to favor information supporting their preexisting beliefs, potentially overlooking critical evidence that could suggest alignment is not feasible. Additionally, overconfidence bias may result in an underestimation of the challenges involved in achieving alignment.

Finally, ethical considerations influence the probability of alignment. Societal norms and values affect whether particular technologies and alignment strategies are accepted. Ethical dilemmas may arise in balancing the benefits of alignment against potential risks and harms to individuals or groups, which in turn affects stakeholder support for alignment efforts. Understanding these factors is essential for realistically assessing the prospects of achieving alignment in complex systems.

Historical Case Studies: Examples of Alignment Success and Failure

The study of alignment, particularly within organizational and technological settings, has been informed by historical case studies of both successful and unsuccessful attempts. A clear example of alignment success is the collaboration between NASA and private aerospace companies in the 21st century, including NASA's commercial cargo and crew programs, which contracted companies such as SpaceX to carry supplies and astronauts to the International Space Station. This partnership accelerated advances in space technology, driven by shared goals, resources, and expertise. The alignment achieved in this case underscores the importance of clear communication, mutual respect, and a shared vision among stakeholders. As a result, both parties were able to innovate faster and more effectively, ultimately enabling missions that benefited the broader scientific community.

Conversely, notable examples of failed alignment serve as cautionary tales. One such case is the Boeing 737 MAX, where corporate neglect of fundamental safety concerns contributed to two fatal crashes, in 2018 and 2019. In this instance, misalignment among Boeing's engineering teams, its management, and regulators opened a gap that ultimately resulted in those tragic accidents. The case illustrates how critical it is for stakeholders to stay aligned not only in vision but also in ethical responsibilities and safety standards. The consequences of misalignment here were devastating, highlighting the need for continuous dialogue and reassessment of goals and practices throughout the project lifecycle.

Reflecting on these case studies reveals patterns in the factors that contribute to alignment success or failure. Key lessons include the need for open communication, shared objectives, and a well-defined governance structure that allows for adaptation. These lessons suggest that future alignment efforts should build in these considerations to foster collaborative environments that drive innovation while minimizing risk.

The Role of Human Judgment in Alignment Probability

Human judgment plays a pivotal role in determining the probability of achieving alignment, particularly in complex systems where uncertainty prevails. Decision-making is often shaped by cognitive biases and heuristics, which can skew perceptions of risk and influence strategic choices. Cognitive biases are systematic patterns of deviation from norms of rationality in judgment, and they often lead to poorly informed decisions. For example, confirmation bias leads individuals to favor information that supports their pre-existing beliefs while discounting evidence that contradicts them. This bias can distort alignment strategies by favoring decisions that reinforce existing assumptions over an objective assessment of the situation.

Heuristics, or mental shortcuts, are frequently used to simplify decision-making. While heuristics can be useful, they can also oversimplify complex issues, leading to suboptimal outcomes, particularly when strict alignment is necessary for success. For instance, the availability heuristic leads people to base their judgments on examples that come easily to mind rather than on a comprehensive analysis. Such oversights can exacerbate alignment challenges by producing misaligned strategies based on incomplete or misinterpreted data.

The balance between expert and layperson perspectives also affects alignment probability. Experts typically have a deeper understanding of specific domains, which often leads to better-informed decisions. However, reliance on experts can create an illusion of certainty, in which laypersons accept expert opinions without critical evaluation. Conversely, laypersons may bring fresh perspectives that challenge conventional wisdom and reveal overlooked aspects of alignment. Understanding the interplay between expert insight and public judgment is therefore essential when designing alignment strategies.

Mathematical Models and Theories Related to Alignment Probability

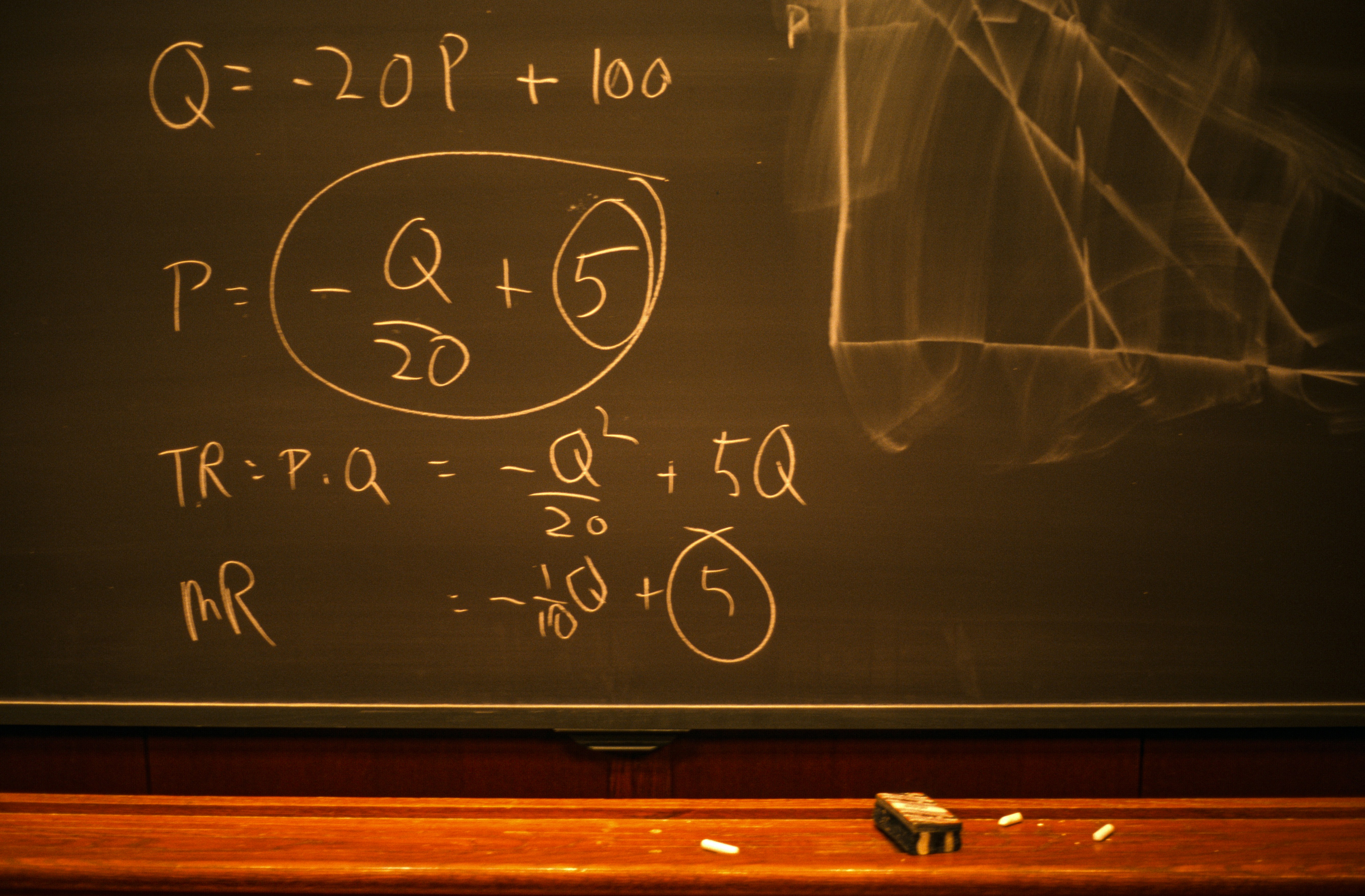

Various mathematical models and theoretical frameworks have been developed to assess the probability of alignment in different contexts. These models aim to quantify the likelihood of alignment or misalignment between multiple entities, whether in evolutionary biology, artificial intelligence, or other domains. Each model is built upon specific assumptions and employs a variety of methodologies to analyze alignment outcomes.

One prominent approach is the use of Bayesian inference, which allows researchers to update the probability of alignment as new evidence becomes available. This method is particularly powerful due to its capability to incorporate prior beliefs and refine predictions through iterative observations. However, it relies heavily on the accuracy of prior distributions and the chosen model structure. If these elements are flawed or biased, the predictions regarding alignment probabilities may also be significantly off.
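Bayesian updating of an alignment probability can be sketched with the standard Beta-Binomial conjugate model, assuming (as a simplification) that each observed attempt is a success-or-failure trial sharing one unknown success probability. The class name `BetaBelief`, the three priors, and the evidence counts are hypothetical. Running the same evidence through an optimistic, a uniform, and a skeptical prior illustrates how strongly the result depends on the prior.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief about an unknown probability of alignment success."""
    alpha: float
    beta: float

    def update(self, successes: int, failures: int) -> "BetaBelief":
        """Conjugate update: add observed successes to alpha and failures to beta."""
        return BetaBelief(self.alpha + successes, self.beta + failures)

    @property
    def mean(self) -> float:
        """Mean of the Beta distribution, i.e. the expected success probability."""
        return self.alpha / (self.alpha + self.beta)


priors = {
    "optimistic": BetaBelief(8, 2),  # prior mean 0.8
    "uniform": BetaBelief(1, 1),     # prior mean 0.5
    "skeptical": BetaBelief(2, 8),   # prior mean 0.2
}

# Hypothetical evidence: 3 successful and 7 failed attempts.
successes, failures = 3, 7
for label, prior in priors.items():
    posterior = prior.update(successes, failures)
    print(f"{label:>10}: prior mean {prior.mean:.2f} -> posterior mean {posterior.mean:.2f}")
```

After ten observations the three posteriors still disagree (0.55, about 0.33, and 0.25); only as evidence accumulates does the data dominate the prior.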

Another notable approach is agent-based modeling, which simulates interactions between autonomous agents within a defined environment. Simulation runs can be visualized, letting researchers observe emergent behaviors and alignment patterns as they develop. Agent-based models are flexible and well suited to capturing complex systems. Nevertheless, they often require substantial computational resources and can be highly sensitive to initial conditions and parameter settings.
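The sketch below is one small agent-based model, chosen for illustration: a Deffuant-style bounded-confidence model of opinion dynamics, in which randomly paired agents move toward each other only if their opinions already differ by less than a threshold `epsilon`. Treating the final spread of opinions as a rough measure of (mis)alignment is an assumption of this example, as are all parameter values. Re-running with different thresholds and random seeds probes the sensitivity to parameters and initial conditions.

```python
import random


def run_bounded_confidence(n_agents: int, epsilon: float, mu: float,
                           steps: int, seed: int) -> list[float]:
    """Simulate a Deffuant-style bounded-confidence model.

    Opinions start uniformly in [0, 1). At each step two distinct agents are
    drawn at random; if their opinions differ by less than epsilon, each moves
    a fraction mu (0 < mu <= 0.5) of the gap toward the other.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    opinions = [rng.random() for _ in range(n_agents)]
    for _ in range(steps):
        i, j = rng.sample(range(n_agents), 2)
        gap = opinions[j] - opinions[i]
        if abs(gap) < epsilon:
            opinions[i] += mu * gap  # agent i moves toward j
            opinions[j] -= mu * gap  # agent j moves toward i; the sum is preserved
    return opinions


def spread(opinions: list[float]) -> float:
    """Range of opinions; 0 means every agent holds the same view."""
    return max(opinions) - min(opinions)


for epsilon in (0.05, 0.2, 0.5):
    for seed in (1, 2):
        final = run_bounded_confidence(n_agents=50, epsilon=epsilon, mu=0.5,
                                       steps=20_000, seed=seed)
        print(f"epsilon={epsilon:.2f} seed={seed} spread={spread(final):.3f}")
```

In this model, small thresholds typically leave several separate opinion clusters, while large ones tend toward consensus; near the boundary between these regimes, the outcome can depend on the seed. These are qualitative tendencies of this particular model, not claims about any real alignment process.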

Game theory has also been widely used to explore alignment, particularly in scenarios involving competing interests. By analyzing the strategies and payoffs of the players in a game, researchers can derive insights into when and why alignment occurs or fails. While game theory provides a structured approach to strategic interaction, it is sometimes criticized for relying on idealized assumptions that may not reflect real-world complexity.
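To make the game-theoretic view concrete, the following sketch enumerates the pure-strategy Nash equilibria of a two-player game given as a payoff table. The two games and their payoffs are standard textbook illustrations chosen for this example, not models of any specific alignment problem: in a stag hunt, mutual cooperation is an equilibrium but so is mutual caution, while in a prisoner's dilemma the only equilibrium is mutual defection even though both players would prefer mutual cooperation.

```python
from itertools import product

# payoffs[(row_action, col_action)] = (row player's payoff, column player's payoff)
Payoffs = dict[tuple[str, str], tuple[float, float]]


def pure_nash_equilibria(payoffs: Payoffs, row_actions: list[str],
                         col_actions: list[str]) -> list[tuple[str, str]]:
    """Return action profiles where neither player gains by deviating unilaterally."""
    equilibria = []
    for r, c in product(row_actions, col_actions):
        row_payoff, col_payoff = payoffs[(r, c)]
        row_best = all(payoffs[(alt, c)][0] <= row_payoff for alt in row_actions)
        col_best = all(payoffs[(r, alt)][1] <= col_payoff for alt in col_actions)
        if row_best and col_best:
            equilibria.append((r, c))
    return equilibria


stag_hunt = {
    ("Stag", "Stag"): (4, 4), ("Stag", "Hare"): (0, 3),
    ("Hare", "Stag"): (3, 0), ("Hare", "Hare"): (3, 3),
}
prisoners_dilemma = {
    ("Cooperate", "Cooperate"): (3, 3), ("Cooperate", "Defect"): (0, 5),
    ("Defect", "Cooperate"): (5, 0), ("Defect", "Defect"): (1, 1),
}

print(pure_nash_equilibria(stag_hunt, ["Stag", "Hare"], ["Stag", "Hare"]))
print(pure_nash_equilibria(prisoners_dilemma, ["Cooperate", "Defect"],
                           ["Cooperate", "Defect"]))
```

The stag hunt yields two equilibria, `("Stag", "Stag")` and `("Hare", "Hare")`, so aligned behavior is stable but not guaranteed; the prisoner's dilemma yields only `("Defect", "Defect")`. This is the kind of idealized result that invites criticism: it assumes known payoffs and fully rational players, and it ignores mixed strategies.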

In summary, various mathematical models exist to study the probability of alignment, each with its inherent strengths and limitations. Understanding these models is essential for advancing the dialogue surrounding alignment outcomes.

Mitigation Strategies for Improving Alignment Probability

Improving the probability of alignment in technological and social systems requires effective mitigation strategies. These strategies draw on several disciplines, including AI ethics, policy frameworks, and collaboration among stakeholders. Combining them yields comprehensive approaches to the complexities of alignment.

AI ethics provides a foundational framework for assessing alignment. By prioritizing ethical considerations in algorithm and model development, practitioners can better align outcomes with human values. This includes fairness and transparency measures, which help people understand automated systems and their decision-making processes. Ethical principles should also be codified in guidelines that govern AI deployment, so that the technology serves society’s best interests.

On the policy side, establishing robust regulatory frameworks is critical. Governments and regulatory bodies can develop legal structures that promote safety, accountability, and transparency within AI operations. This can be achieved through legislation that mandates reporting and auditing requirements for AI systems, thereby fostering greater confidence in their alignment capabilities. Furthermore, engaging a diverse array of stakeholders in policy-making processes is vital. This can ensure that varied perspectives contribute to shaping the laws and guidelines governing AI technologies.

Collaboration is equally important for improving alignment probability. Stakeholders, including technologists, ethicists, and end users, should work together to share insights and best practices. Such cooperation encourages a holistic understanding of the implications of AI, making it possible to identify potential misalignments before they cause harm. Ultimately, proactive measures taken through ethical frameworks, policy initiatives, and collaboration will raise the probability of alignment and make AI systems more beneficial for society.

Future Implications: The Quest for Alignment

The concept of alignment, especially within the context of advanced technologies, remains a subject of intense scrutiny and speculation. As we move toward an era increasingly dominated by artificial intelligence and other transformative technologies, understanding the probability of achieving effective alignment will become crucial. The question of whether alignment can be successfully attained involves evaluating emerging technologies, societal impacts, and an evolving regulatory framework.

Emerging technologies, such as machine learning, robotic systems, and autonomous agents, pose distinctive alignment challenges. Their complexity often leads to unpredictable outcomes, opening a gap between intended and actual effects. Addressing these challenges requires ongoing research to improve both the reliability and the interpretability of AI systems. As these technologies become more prevalent, the need for successful alignment grows, with direct implications for safety and ethics.

Societal impacts will also play a significant role in shaping the future of alignment. As society becomes more intertwined with technology, understanding the effects of misaligned AI systems on jobs, privacy, and interpersonal relationships becomes imperative. A significant aspect of this discussion is the public’s perception of AI and its intended role. Misalignment can lead to a loss of trust in technology, which could hinder its adoption and application in various sectors.

The evolving role of regulation and governance will strongly influence the probability of alignment success. Policymakers must create frameworks that not only promote innovation but also ensure the safe and ethical use of technology. Effective regulatory measures could establish standards that guide the development of technologies toward alignment, fostering an environment where safety and societal benefit can coexist. Ultimately, the future of alignment hinges on collaboration among technologists, regulators, and society at large to create frameworks that anticipate challenges and encourage beneficial technological advancements.

Conclusion: Summarizing the Insights on the Impossibility of Alignment

Examining the probability that alignment is impossible has yielded several insights into the complexity of this issue. Alignment, while an appealing goal in fields such as artificial intelligence and machine learning, may face fundamental and possibly insurmountable challenges. A primary source of this difficulty is the unpredictable behavior of advanced systems and their interactions with human values and ethics.

Moreover, the limits of our understanding of both the systems and the intended alignment objectives play a significant role. The criteria we set for successful alignment often conflict with rapidly evolving technology and shifting human preferences. This changing landscape makes it hard to anticipate how well these systems can align with human interests, strengthening the case that such alignment may be impossible.

Additionally, the consequences of accepting the notion that alignment could be impossible are profound. It prompts a reevaluation of our strategies in developing and deploying technology, urging greater caution and perhaps a shift toward more robust frameworks that emphasize ethical considerations over pure efficiency. This reflection could ultimately lead to greater accountability and foster a deeper discourse on the implications of our technological advancements.

Therefore, while the exploration offered valuable insights into the probability of alignment and its potential impossibility, it also stresses the need for continued investigation and dialogue in the face of such challenging prospects. Progress in this domain demands not only technical advancements but also significant reflection on our societal values and objectives.