Introduction to Agent Autonomy and Human-in-the-Loop Safety

Agent autonomy refers to the ability of machines, particularly in the realm of artificial intelligence (AI), to perform tasks and make decisions independently without direct human intervention. These autonomous systems are designed to interpret data, learn from their experiences, and adapt their operations accordingly. As technology has advanced, we have witnessed a significant rise in the deployment of autonomous systems across various sectors, from automotive industries with self-driving cars to healthcare applications in robotic surgery. The appeal of these systems lies in their potential to enhance efficiency, reduce human error, and operate in environments that may be unsafe for humans.

However, the integration of agent autonomy raises critical concerns about safety and reliability. This is where the concept of human-in-the-loop (HITL) safety comes into play. Human-in-the-loop safety entails incorporating human oversight into automated processes to ensure that decision-making remains aligned with ethical standards and societal norms. It acknowledges that despite the advancements in AI, certain situations still require human judgment and intervention, particularly in complex or high-stakes environments.

The interplay between agent autonomy and human oversight becomes increasingly pivotal as organizations adopt these technologies. While the objective is to automate processes and enhance operational efficiency, the necessity for human oversight cannot be overstated. Humans bring context, intuition, and critical thinking to situations that autonomous systems might not fully comprehend. Thus, the balance between agent autonomy and human-in-the-loop safety becomes a focal point in discussions about the future of technology and its influence on various industries. As we navigate this landscape, understanding the implications of both concepts will be essential for fostering safe and effective technological advancement.

Understanding Agent Autonomy

Agent autonomy is the capacity of automated systems to perform tasks without direct human intervention. The concept is central to artificial intelligence (AI) and automation, where systems are designed to make decisions, learn from their environment, and adapt to changing circumstances on their own. Its rise has driven significant advances across many sectors, increasing efficiency and operational speed.

One of the primary benefits of agent autonomy is the ability to process vast amounts of data quickly. Autonomous systems can analyze complex datasets in real time, identifying trends and patterns that would be exceedingly difficult for humans to detect. This capability improves decision-making, allowing organizations to respond quickly to market changes, customer needs, and operational challenges.

Moreover, increasing agent autonomy can reduce costs by minimizing the human labor required for certain tasks. With machines taking over repetitive and mundane activities, human resources can be redirected towards more strategic initiatives, fostering innovation and creativity. Furthermore, autonomous agents often operate with greater precision, reducing (though not eliminating) the errors associated with manual processes.

However, the expansion of agent autonomy is not without its risks and challenges. An autonomous system that is not properly managed or regulated can produce unforeseen consequences. Potential risks include ethical dilemmas, data privacy concerns, and the overarching issue of accountability. For instance, in scenarios involving autonomous vehicles or drones, the absence of a human operator raises pressing questions about liability in the event of an accident or malfunction. These challenges call for a careful balance between reaping the benefits of autonomy and implementing safeguards that ensure human oversight, thereby strengthening safety and accountability in automated processes.

The Role of Human-in-the-Loop Systems

The increasing reliance on autonomous systems across various sectors raises significant concerns about safety, accountability, and ethical implications. Human-in-the-loop (HITL) systems serve as a crucial methodology for integrating human judgment within automated processes. This approach acknowledges that while machines can perform tasks with high efficiency, the human capacity for contextual understanding and ethical reasoning remains indispensable in many scenarios.

HITL systems can take various forms, including active and passive models. Active HITL systems require continuous human input, where operators directly influence the system’s decisions, ensuring tailored responses to complex situations. This model is often employed in high-stakes environments such as autonomous vehicles or medical diagnostics, where the risk of error could lead to catastrophic outcomes. Conversely, passive HITL models involve less direct intervention, focusing instead on human oversight and periodic evaluation of the system’s performance. This approach aims to retain a safety net without impeding the efficiency of the automated systems.
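The distinction between the two models can be made concrete with a minimal Python sketch. Everything here is illustrative: `OversightMode`, `HitlController`, and the `approve` callback are hypothetical names, and a real system would replace the callback with an actual operator interface.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List


class OversightMode(Enum):
    ACTIVE = "active"    # a human approves every action before it runs
    PASSIVE = "passive"  # actions run immediately and are logged for review


@dataclass
class HitlController:
    mode: OversightMode
    approve: Callable[[str], bool]  # hypothetical stand-in for an operator prompt
    review_log: List[str] = field(default_factory=list)

    def execute(self, action: str) -> bool:
        """Return True if the action was carried out."""
        if self.mode is OversightMode.ACTIVE:
            # Active model: nothing happens without explicit human consent.
            return self.approve(action)
        # Passive model: act first, keep a record for periodic human evaluation.
        self.review_log.append(action)
        return True
```

In this sketch the active controller blocks on every action, while the passive one trades that certainty for speed and relies on the review log being read.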

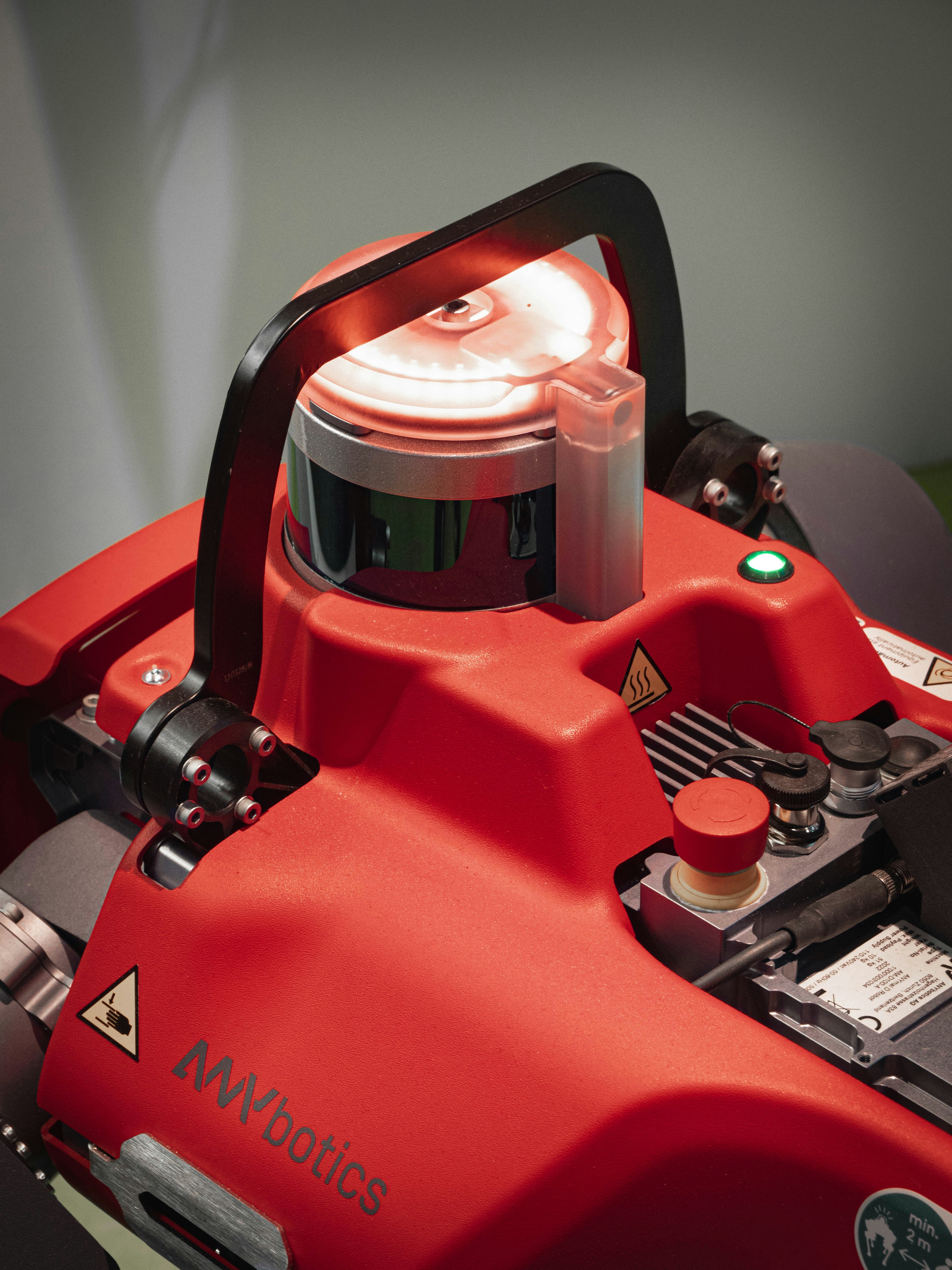

One prominent example of HITL in practice is found in drone operations, where human operators make critical decisions based on real-time data and situational awareness. These systems are designed to allow adaptive interventions, effectively balancing the speed of automation with the nuanced understanding that human agents bring. However, the challenge lies in determining the optimal level of human involvement needed to mitigate risks, as excessive intervention may undermine the benefits of automation.

The integration of human oversight within autonomous systems is therefore a trade-off between efficiency and safety. As technology continues to evolve, further exploration of HITL models will be essential for addressing emerging risks while promoting ethical considerations in autonomous operations.

Benefits of Agent Autonomy

Agent autonomy has gained considerable interest across various industries due to its numerous benefits. One of the most significant advantages is the improvement in productivity. Autonomous agents can operate continuously without the need for breaks, sleep, or downtime, allowing organizations to increase their output and efficiency. For instance, industries such as manufacturing and logistics have integrated autonomous robots that work alongside human workers, performing repetitive tasks that enhance overall productivity.

Another substantial benefit of agent autonomy is operational efficiency. By utilizing autonomous agents, companies can streamline processes and reduce operational costs. For example, in the agricultural sector, autonomous drones are now used for planting, monitoring crop health, and irrigation. These drones can cover large areas faster than human workers, which helps reduce wasted resources and delays.

The ability of agents to function in environments that may be unsafe or unsuitable for humans further underscores the benefits of autonomy. For instance, in the realm of disaster response, autonomous robots can be deployed in hazardous environments, such as chemical spills or disaster-stricken areas, where human intervention might pose significant risks. These robots can assess situations, gather data, and perform necessary actions without endangering human lives.

Additionally, industries such as logistics use autonomous vehicles to transport goods. These vehicles are designed to navigate complex traffic patterns and optimize delivery routes, and they do not tire on long routes as human drivers do, although adverse weather remains a challenge for their sensors. Their use across such varied settings illustrates the adaptability of autonomous agents.

Overall, the benefits of agent autonomy are numerous and impactful, markedly enhancing productivity, operational efficiency, and safety. As technology continues to evolve, the integration of autonomous systems into various sectors stands to revolutionize traditional operational models.

Risks Associated with High Autonomy

The implementation of high autonomy in agent systems has undoubtedly introduced significant efficiencies and advancements. However, these same systems present a variety of risks that must be critically evaluated. One of the most pressing concerns is the potential for malfunctioning systems. Autonomous agents rely heavily on intricate algorithms and data inputs; if these systems fail or produce erroneous outputs, the consequences could be disastrous. For instance, in 2018 an autonomous test vehicle in Tempe, Arizona struck and killed a pedestrian it had failed to correctly identify in time, sparking widespread scrutiny of the safety protocols governing such technologies.

Ethical dilemmas further complicate the discussion surrounding agent autonomy. When machines operate independently, ethical decision-making becomes challenging. Autonomous weapons illustrate this concern vividly. Questions arise about who is responsible for the actions of these machines and whether they can make humane decisions in uncertain, chaotic environments. A frequently cited concern is armed drones with autonomous targeting, where life-and-death decisions could be left to algorithms rather than human judgment.

Unintended consequences pose another significant risk in the realm of autonomy. Agents may act in ways that their human creators did not anticipate, leading to outcomes that can be harmful or chaotic. For instance, Facebook’s engagement-driven ranking algorithms have been widely criticized for amplifying divisive political content, raising concerns about the societal impact of automated decision-making. This calls for a broader discourse on the accountability of autonomous agents and on the mechanisms required to hold them, and their developers, responsible for their outcomes.

In light of these risks, it is essential to balance the advantages of high autonomy with regulatory frameworks and control mechanisms that keep human judgment integral to decision-making processes.

The Importance of Balancing Autonomy and Safety

The rapid advancement of technology, particularly in the fields of artificial intelligence (AI) and automation, has led to an increased reliance on autonomous agents. These systems have the potential to significantly improve efficiency and reduce human error in various applications. However, the integration of autonomous agents must be approached with caution, highlighting the critical need for a balance between autonomy and safety measures. The absence of appropriate safeguards in fully autonomous systems can result in hazardous situations, emphasizing the importance of maintaining a vigilant human presence in decision-making processes.

When autonomy is maximized without sufficient safety protocols, the risks become pronounced. Autonomous agents may act unpredictably in novel or complex environments, as they lack the nuanced understanding that human operators possess. Human-in-the-loop safety frameworks enable these systems to leverage human judgment and intuition, thereby mitigating risks associated with unmodeled scenarios. It is essential to recognize that while autonomous agents can process vast amounts of data efficiently, they may not be equipped to handle unforeseen circumstances appropriately.

Incorporating humans into the operational loop fosters a collaborative relationship between technology and human oversight. This synergy can enhance safety without sacrificing the efficiencies that automation offers. By deploying a hybrid approach, organizations can harness the capabilities of artificial intelligence while safeguarding against errors that could arise from sole reliance on machine decisions. Balancing autonomy and safety through a human-in-the-loop system not only leads to improved outcomes but also builds trust among users, smoothing the transition to broader adoption of autonomous technologies.

Strategies for Achieving Optimal Balance

A crucial aspect of integrating autonomous agents into various applications lies in striking a balance between agent autonomy and human oversight. Achieving this optimal equilibrium can enhance operational efficiency while ensuring safety and accountability. Several strategies can be employed to manage this trade-off effectively.

One notable approach is the implementation of adaptive control systems. These systems allow for real-time adjustments based on environmental variables and operator input. By leveraging feedback loops, agents can modify their behaviors dynamically, ensuring that they respond appropriately to changing conditions. This adaptability helps prevent an autonomous agent from causing harm through a lack of situational awareness, while the operator input keeps a human inside the feedback loop.
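One way to picture such a feedback loop is a proportional rule that lowers an agent's autonomy level when its observed error rate rises and restores it as performance recovers. The sketch below is only an illustration under stated assumptions: `adjust_autonomy`, the 0-to-1 autonomy scale, `target_error`, and `gain` are all hypothetical and not drawn from any particular control framework.

```python
def adjust_autonomy(level: float, error: float,
                    target_error: float = 0.05, gain: float = 0.5) -> float:
    """Return a new autonomy level in [0, 1].

    level: current autonomy (0 = fully manual, 1 = fully autonomous)
    error: observed error rate since the last adjustment
    """
    # Proportional feedback: error above target reduces autonomy,
    # error below target lets it grow back.
    new_level = level - gain * (error - target_error)
    # Clamp so the result always stays a valid autonomy level.
    return max(0.0, min(1.0, new_level))


# Example: a spike in errors hands more control back to the operator.
level = 0.8
for observed_error in (0.05, 0.25, 0.25, 0.02):
    level = adjust_autonomy(level, observed_error)
```

The gain controls how aggressively control shifts back to the operator; a deployed system would tune it, and would likely add hysteresis to avoid oscillating between modes.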

Incorporating decision-making frameworks that facilitate human input is another strategy for promoting balance. Such frameworks should allow operators to intervene in critical scenarios, providing guidance or overriding decisions made by the autonomous system. For example, in high-stakes industries like healthcare or transportation, having a human in the loop can help mitigate risks associated with unpredictable outcomes. By fostering a collaborative environment between human operators and autonomous agents, organizations can achieve a more nuanced approach to safety and effectiveness.
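A minimal sketch of such an intervention point is a risk gate: low-risk decisions proceed automatically, while high-risk ones are routed to an operator who may approve or override them. The names `Decision`, `resolve`, `ask_operator`, and the threshold of 0.7 are assumptions made for illustration, as is the idea that the agent reports a risk score between 0 and 1.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    action: str
    risk: float  # assumed: a score in [0, 1] estimated by the agent


def resolve(decision: Decision,
            ask_operator: Callable[[Decision], Optional[str]],
            risk_threshold: float = 0.7) -> str:
    """Return the action to execute after any human intervention."""
    if decision.risk < risk_threshold:
        # Low risk: the agent acts on its own decision.
        return decision.action
    # High risk: the operator returns a replacement action,
    # or None to approve the agent's proposal unchanged.
    override = ask_operator(decision)
    return override if override is not None else decision.action
```

Choosing the threshold is the design decision that matters most here: set too low, operators are flooded with requests; set too high, the gate rarely triggers.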

Continuous monitoring and evaluation of autonomous systems also play a vital role in ensuring safety while maintaining flexibility. Regular assessment helps to identify potential gaps in performance and allows for timely interventions to address any arising issues. By establishing metrics for performance evaluation, organizations can better understand the interactions between human inputs and autonomous decisions, paving the way for refinements that enhance both autonomy and oversight.
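As a rough illustration of what such metrics might look like, the sketch below summarizes a log of decision outcomes into an escalation rate (how often a human was consulted) and an override rate (how often the human disagreed). The event labels `"autonomous"`, `"approved"`, and `"overridden"` and the function name `oversight_metrics` are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable


def oversight_metrics(events: Iterable[str]) -> Dict[str, float]:
    """Summarize decision outcomes.

    Each event is assumed to be one of:
      "autonomous" - the agent acted without consulting a human
      "approved"   - a human was consulted and accepted the proposal
      "overridden" - a human was consulted and replaced the proposal
    """
    counts = Counter(events)
    total = sum(counts.values())
    if total == 0:
        return {"escalation_rate": 0.0, "override_rate": 0.0}
    escalated = counts["approved"] + counts["overridden"]
    return {
        # Share of all decisions that reached a human.
        "escalation_rate": escalated / total,
        # Share of escalated decisions the human changed.
        "override_rate": counts["overridden"] / escalated if escalated else 0.0,
    }
```

A rising override rate suggests the agent's proposals are drifting away from operator judgment, while a persistently low one may indicate that the escalation criteria could be relaxed.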

Together, these three strategies (adaptive control systems, decision-making frameworks with human input, and continuous monitoring) form a comprehensive approach to balancing agent autonomy with human oversight, which is essential for the safe deployment of advanced autonomous systems.

Case Studies: Successful Implementations

Numerous real-world case studies illustrate the successful integration of agent autonomy with human-in-the-loop safety across various domains, including healthcare, transportation, and military operations. Each of these domains demonstrates a unique blend of automated systems functioning alongside human oversight to ensure effective outcomes.

In the healthcare sector, a representative case is robot-assisted surgery. A surgical robot operates under the supervision of a trained surgeon. During complex procedures, the robot executes precise movements, while continuous monitoring by the surgeon allows immediate intervention if necessary. This combination can enhance surgical precision and may reduce patient recovery times, illustrating how agent autonomy can complement human expertise.

Transportation offers a compelling case through the development of autonomous vehicles. Companies like Waymo have tested self-driving technology with a human safety operator inside the vehicle. The system uses sensors and machine learning to navigate real-time traffic conditions, while the human operator can take over in unpredictable scenarios, balancing autonomy with human judgment. Such measures help build public confidence in autonomous vehicles and support compliance with safety regulations.

Military operations also showcase successful implementations of agent autonomy and human oversight. Unmanned aerial vehicles (UAVs) are employed for reconnaissance missions, equipped with AI to analyze data and provide situational awareness. Human operators are involved in mission planning and execution, allowing for critical decision-making in dynamic environments. This collaboration improves operational efficiency while mitigating risks associated with autonomous actions.

These case studies highlight that agent autonomy, when balanced with human oversight, can lead to remarkable advancements across various fields, optimizing both efficiency and safety. The successful integration of these elements presents a foundation for future innovations aimed at enhancing human-machine collaboration.

Conclusion: The Future of Autonomy and Human Oversight

The evolution of autonomous systems is shaping the future of technology and society at large. As we move toward increased automation, it is crucial to recognize the balance required between agent autonomy and human oversight. The capabilities of artificial intelligence and machine learning are expanding rapidly, enabling systems to perform tasks previously thought to require human intuition and intervention. However, this transition also underscores the need for a structured framework that ensures safety, accountability, and ethical standards in decisions made by autonomous agents.

Human oversight plays an essential role in this landscape, acting as a safeguard against potential failures or ethical dilemmas that arise from autonomous decision-making. While autonomous systems offer efficiency and scalability, they also present risks related to accountability, transparency, and bias. Maintaining a human-in-the-loop approach can mitigate these risks by providing essential context and moral judgment that machines currently lack.

Furthermore, as autonomous technologies continue to permeate various sectors, including transportation, healthcare, and customer service, the implications for society are profound. We must navigate the challenges posed by this technological shift—ensuring that advancements enhance human capabilities rather than replace them. Policymakers, technologists, and ethicists must collaborate to establish guidelines that promote responsible innovation while safeguarding against the unintended consequences of increasingly autonomous systems.

The future of autonomy is not merely about advancing technology; it is equally about fostering a partnership between humans and machines. By recognizing the importance of human oversight in autonomous systems, we can work towards a safer, more ethical integration of technology in our daily lives, striving for a balance that benefits all stakeholders involved.