Introduction to Self-Taught Optimizer Loops

Self-taught optimizer loops extend traditional optimization techniques with learning and adaptation mechanisms that improve performance over time. They combine automated processes with feedback-driven adjustments, so the optimizer keeps being refined throughout the run. The core principle is that the optimizer iteratively improves a model’s performance without extensive external guidance, in effect learning from its own experience.

Self-taught optimizer loops are becoming increasingly relevant in fields such as machine learning, artificial intelligence, and algorithmic trading. In contrast to conventional optimization methods, which usually rely on pre-defined parameters and static heuristics, self-taught optimizers use continuous feedback to adjust their strategies as they run. This can speed up convergence and may lead to more robust solutions that adapt to changing environments or datasets.

A prominent feature of self-taught optimizer loops is their self-refinement capability. This mechanism enables the optimizer to evaluate its past performance, identify weaknesses, and adjust its approach accordingly. An important distinction here is the iterative nature of these loops, where each cycle builds upon the last, fostering an accumulation of knowledge that propels the optimizer towards achieving optimal outcomes efficiently.

Ultimately, understanding the principles that underpin self-taught optimizer loops provides valuable insights into how modern optimization techniques function. By embracing a more flexible, feedback-oriented approach, these loops stand to redefine optimization processes, ensuring better outcomes that are not merely reliant on predefined metrics but rather evolve through self-directed learning and adaptation.

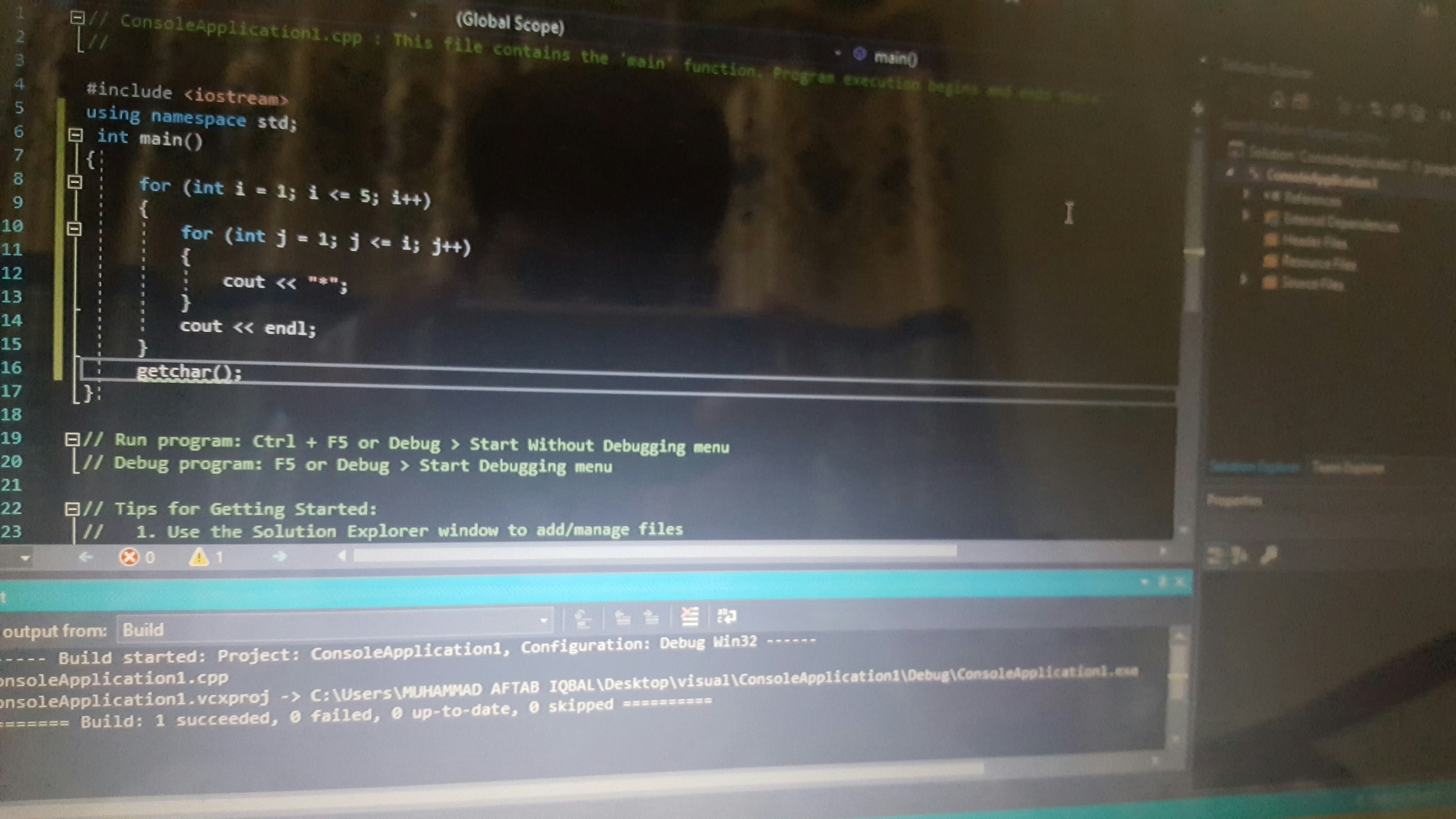

The Concept of the Star Loop

The Star Loop serves as a foundational element within the framework of self-taught optimizer loops, primarily aiding in the optimization process. This loop is designed to streamline problem-solving by establishing a structured pathway for addressing complex challenges. Its framework revolves around three core principles: clarity, iteration, and learning.

Firstly, clarity facilitates a deep understanding of the issue at hand. In this stage, practitioners identify the parameters that define the problem, ensuring that all aspects are well-articulated. This meticulous approach not only helps in clearly outlining the goals but also provides a benchmark for success. The more precise the problem statement, the more effective the subsequent iterations will be.

The second principle, iteration, involves creating continuous cycles of assessment and adjustment. In the Star Loop, after defining the problem clearly, the next step includes generating potential solutions. Each solution is evaluated according to predefined criteria, allowing practitioners to identify the most effective strategies. By iterating through different approaches, one can refine these potential solutions, thereby enhancing the overall optimization process. This method fosters a culture of experimentation, where failure is viewed not as a setback but as a valuable learning opportunity.

Finally, the learning component of the Star Loop emphasizes the necessity of accumulating knowledge from each iteration. Post-evaluation, collected insights can be documented and analyzed to inform future strategies. This not only improves immediate problem-solving capabilities but also builds a repository of knowledge that can be leveraged for subsequent challenges. Ultimately, the Star Loop exemplifies a systematic approach to optimization that encapsulates the principles of structured problem-solving, iteration, and continuous learning, making it a crucial component in the realm of self-taught optimization.
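The three principles above can be sketched in a few lines of Python. This is only an illustration of the loop as this article describes it, not a reference implementation: the names `star_loop`, `objective`, and `propose`, and the toy goal of minimizing (x - 3)^2, are all assumptions made for the example.

```python
import random

def star_loop(objective, propose, start, rounds=50, seed=0):
    """Illustrative loop: clarity (an explicit objective), iteration
    (propose and score candidates), learning (keep a scored history)."""
    rng = random.Random(seed)
    best, best_score = start, objective(start)
    history = [(start, best_score)]          # learning: record every evaluation
    for _ in range(rounds):
        candidate = propose(best, rng)       # iteration: generate a new candidate
        score = objective(candidate)         # evaluate against the stated criterion
        history.append((candidate, score))
        if score < best_score:               # keep only improvements
            best, best_score = candidate, score
    return best, best_score, history

# Hypothetical problem statement: find x that minimizes (x - 3)^2.
def objective(x):
    return (x - 3.0) ** 2

# Hypothetical proposal rule: a small random step from the current best.
def propose(x, rng):
    return x + rng.uniform(-1.0, 1.0)

best, best_score, history = star_loop(objective, propose, start=0.0)
print(f"best x = {best:.3f}, score = {best_score:.4f}, evaluations = {len(history)}")
```

The `history` list plays the role of the knowledge repository: every candidate and its score is kept, including the ones that were rejected, so later runs can inspect what was tried.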

Understanding the Self-Refine Loop

The Self-Refine Loop is a critical component in self-optimizing processes, providing a mechanism for enhancing learning and optimizing tasks effectively. At its core, the Self-Refine Loop allows systems to adaptively improve their performance by continuously analyzing past outcomes and adjusting their strategies accordingly. This iterative process ensures that not only are mistakes rectified, but successful strategies can be reinforced over time, leading to an optimized approach to problem-solving.

This optimization technique can be observed in various applications. For instance, in machine learning, the Self-Refine Loop can guide the tuning of algorithms based on previous performance metrics. Consider a neural network that adjusts its weights based on the errors made during training. Here, the loop enables the network to refine its model incrementally, gradually improving accuracy. Similarly, in optimization tasks within complex systems, the Self-Refine Loop can be employed to evaluate different operational strategies, eliminating less effective approaches while homing in on the best-performing options.
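The weight-adjustment example can be made concrete with plain gradient descent on a one-feature linear model, used here as a stand-in for a full neural network. The function name `refine_weights`, the learning rate, and the toy data are assumptions chosen for illustration.

```python
def refine_weights(xs, ys, lr=0.05, epochs=500):
    """Fit y ~ w*x + b by repeatedly measuring errors and adjusting w and b."""
    w, b = 0.0, 0.0
    n = len(xs)
    losses = []
    for _ in range(epochs):
        # Feedback: how far off is each current prediction?
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        losses.append(sum(e * e for e in errors) / n)   # mean squared error
        # Adjustment: step against the gradient of the mean squared error.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, losses

# Toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b, losses = refine_weights(xs, ys)
print(f"w = {w:.3f}, b = {b:.3f}, first loss = {losses[0]:.2f}, last loss = {losses[-1]:.2e}")
```

Each pass uses the errors from the previous weights as its only feedback, which is the incremental refinement the paragraph describes.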

A significant aspect of the Self-Refine Loop is its ability to foster a feedback-rich environment, which enhances the learning process. The loop utilizes feedback from various iterations, enabling continual refinement of skills and methodologies. For example, in automated trading systems, a Self-Refine Loop may adjust trading strategies based on the success of previous trades. By learning from both wins and losses, the system optimizes future decisions, ultimately improving its profitability.

The significance of the Self-Refine Loop is evident in its capacity to create adaptive systems that thrive on feedback and evolve over time. This adaptability is crucial in dynamic environments where conditions change rapidly, thus requiring a robust mechanism to maintain optimal performance. As a result, the Self-Refine Loop stands as an essential principle in the realm of self-optimizing processes.

Backtracking: A Critical Component

Backtracking is a powerful algorithmic technique commonly employed in the realm of computer science, particularly in relation to optimization problems. This method operates by incrementally building candidates for solutions and abandoning a candidate as soon as it is determined that the candidate cannot lead to a valid solution. Within the context of self-taught optimizer loops, backtracking plays an essential role by allowing for dynamic adjustments and refinements during the optimization process.

The application of backtracking is particularly effective in scenarios that involve a series of choices, enabling algorithms to explore potential solutions in a structured manner. By systematically traversing through decision trees, backtracking can find optimal solutions to problems like constraint satisfaction, pathfinding in mazes, and combinatorial problem-solving, including puzzles such as Sudoku. These examples underscore how backtracking optimizes solution discovery by discarding unsuitable paths early in the evaluation process.

One of the key advantages of backtracking lies in its efficiency. Unlike brute-force methods, which exhaustively search all possible configurations, backtracking prunes the search space: it cuts off a partial candidate as soon as it violates a constraint, so none of that candidate's extensions are ever examined. In practice this often reaches a solution far sooner, although the worst-case running time can still be exponential. Backtracking can also be combined with other optimization techniques such as dynamic programming and greedy algorithms, which widens the range of problems it can handle.
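A classic way to see the pruning at work is the N-Queens puzzle, used here as an assumed stand-in for the constraint-satisfaction problems mentioned above. The sketch places one queen per row and abandons a partial placement as soon as two queens attack each other; the function name `solve_n_queens` is chosen for this example.

```python
def solve_n_queens(n):
    """Return one valid placement as a list of column indices (one per row),
    or None if no placement exists."""
    cols = []  # cols[r] is the column of the queen already placed in row r

    def safe(col):
        row = len(cols)
        for r, c in enumerate(cols):
            # Conflict if same column or same diagonal as an earlier queen.
            if c == col or abs(c - col) == row - r:
                return False
        return True

    def place():
        if len(cols) == n:
            return True                 # every row has a queen
        for col in range(n):
            if safe(col):               # prune: skip columns that already conflict
                cols.append(col)
                if place():
                    return True
                cols.pop()              # backtrack: undo and try the next column
        return False

    return cols if place() else None

print(solve_n_queens(4))  # [1, 3, 0, 2]
print(solve_n_queens(3))  # None, since no valid placement exists
```

Because `safe` rejects a column before any deeper rows are explored, whole subtrees of the decision tree are never visited, which is where the savings over brute force come from.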

Backtracking is particularly well-suited for problems where the solution can be constructed incrementally, and it is capable of addressing numerous challenging scenarios effectively. As the demand for intelligent algorithms evolves, the significance of backtracking in self-taught optimizers becomes increasingly evident, illustrating its critical importance in facilitating efficient and dynamic optimization processes.

Interconnections Among Star, Self-Refine, and Backtracking

The exploration of self-taught optimizer loops—namely Star, Self-Refine, and Backtracking—reveals a complex interplay that enhances overall optimization processes. Each loop contributes unique strengths that, when appropriately utilized together, form a robust optimization framework.

Star, known for its ability to efficiently identify optimal solutions in vast problem spaces, initiates the optimization journey by generating a diverse set of candidate solutions. This loop excels at broad exploration, ensuring that essential solutions are not overlooked. However, while Star’s strength lies in its search capacity, it may occasionally fall short in refining these solutions to their utmost potential.

This is where the Self-Refine loop comes into play. Acting as a corrective phase, Self-Refine focuses on enhancing the solutions identified by Star. By applying specific heuristics or adjustment techniques, it systematically improves the quality of these candidates, addressing shortcomings left by the initial search. Each round of adjustment makes the solutions incrementally more applicable and effective.

While Star and Self-Refine operate as initial search and refinement stages, the Backtracking loop adds another critical layer of depth to the optimization strategy. When a line of refinement stops paying off, this loop lets the model return to an earlier decision point and reconsider candidates that were set aside, rather than abandoning them for good. By leveraging insights gained from successful refinements, Backtracking reduces the risk that a viable solution is lost. This combination of exploration, refinement, and reevaluation creates a resilient feedback system that enhances the overall efficacy of the optimization framework.

As a cohesive unit, Star, Self-Refine, and Backtracking embody a symbiotic relationship where each loop’s strengths mitigate the weaknesses of the others. Together, they provide a comprehensive approach that fosters improved optimization outcomes, ultimately leading to superior solutions in various contexts.
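One possible way to wire the three stages together is sketched below. It is a toy illustration under stated assumptions, not a description of any published method: the names `refine` and `optimize`, the sampling range, and the one-dimensional objective with two local minima are all invented for the example.

```python
import random

def objective(x):
    # Hypothetical objective with two local minima; the lower one is near x = -1.3.
    return x**4 - 3 * x**2 + x

def refine(x, objective, step=0.5, iters=20):
    """Refinement stage: try a step left and right, keep only improvements,
    and halve the step when neither direction helps."""
    score = objective(x)
    for _ in range(iters):
        improved = False
        for candidate in (x - step, x + step):
            s = objective(candidate)
            if s < score:
                x, score, improved = candidate, s, True
        if not improved:
            step /= 2
    return x, score

def optimize(objective, low, high, n_candidates=8, keep=3, seed=0):
    rng = random.Random(seed)
    # Exploration stage: sample a diverse pool and shortlist the best few.
    pool = sorted((rng.uniform(low, high) for _ in range(n_candidates)), key=objective)
    shortlisted, discarded = pool[:keep], pool[keep:]
    # Refinement stage: improve each shortlisted candidate.
    best = min((refine(x, objective) for x in shortlisted), key=lambda r: r[1])
    # Reevaluation stage: go back to the discarded candidates in case one of
    # them refines into something better than the current best.
    for x in discarded:
        result = refine(x, objective)
        if result[1] < best[1]:
            best = result
    return best

best_x, best_score = optimize(objective, -2.0, 2.0)
print(f"best x = {best_x:.3f}, score = {best_score:.4f}")
```

The design choice worth noting is that discarded candidates are kept rather than thrown away, so the final stage can revisit them. In a real system that revisit would likely be triggered only when refinement stalls, since rerunning every candidate costs extra computation.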

Practical Applications of Self-Taught Optimizer Loops

Self-taught optimizer loops have emerged as a significant advancement in various fields such as machine learning, data analysis, and software engineering. These methodologies enable systems to enhance their performance autonomously, adapting to new data and evolving scenarios without extensive human intervention. The principles of self-taught optimization can be illustrated through several practical applications.

In machine learning, self-taught optimizers are pivotal in refining algorithms. For instance, a model employing a self-refine loop can analyze its own predictions against observed data, allowing it to calibrate its parameters and improve accuracy over time. This dynamic adjustment is particularly useful in environments where data patterns shift rapidly, such as in financial markets or online recommendation systems. By utilizing self-taught loops, these algorithms can remain relevant and effective.

Data analysis also benefits from self-taught optimizer loops. In scenarios where large datasets must be processed, these loops can facilitate continuous learning from user interactions and adapt data processing methods accordingly. For example, a data analytics tool that integrates self-taught capabilities can learn user preferences, ultimately crafting personalized reports and insights based on historical usage patterns. This results in greater efficiency and user satisfaction.

In software engineering, self-taught optimizer loops are applied to enhance code performance. Development tools equipped with these loops can analyze coding practices, learning from previous iterations to suggest improvements. This allows potential vulnerabilities and performance bottlenecks to be identified proactively. As the loops refine themselves based on new data and user feedback, they contribute to more robust and maintainable software.

The implementation of self-taught optimizer loops across these fields signifies a transformative shift towards automation, where systems not only execute tasks but also learn and adapt from their experiences, ultimately leading to better outcomes.

Challenges and Limitations

While self-taught optimizer loops, such as Star, Self-Refine, and Backtracking, present innovative approaches to optimization problems, they are not without their challenges and limitations. One significant difficulty lies in data quality and quantity. For these methods to function effectively, they require a robust dataset that exhibits a certain level of diversity and completeness. Inadequate or biased datasets can lead to suboptimal solutions, as the learning process relies heavily on the input data. Therefore, practitioners must be vigilant in curating their training data to prevent inherent biases from affecting the optimization process.

Additionally, the interpretability of the results generated by self-taught optimizers can be a challenge. As these methods often employ complex algorithms and models, understanding their decision-making process can be difficult. This opacity can create issues when users need to justify or explain the outputs to stakeholders, particularly in industries where transparency is paramount. For example, in healthcare or finance, stakeholders may demand clear rationales for optimization decisions that impact outcomes significantly.

Another limitation is the computational intensity associated with implementing self-taught optimizer loops. The continuous self-improvement mechanism may require substantial computational resources, particularly as the size of the dataset increases. This can lead to time lags in processing and may necessitate investments in high-performance computing infrastructure—which could be cost-prohibitive for smaller organizations.

Moreover, despite their adaptive design, self-taught optimizers may struggle in dynamic environments where the data distribution shifts over time. Feedback gathered under one distribution may no longer apply once the data changes, and many implementations implicitly assume a static learning environment, which may not hold in real-world applications. Consequently, if the underlying data changes significantly, the optimizer may lose its effectiveness, resulting in poor performance and potentially misguided conclusions.
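One simple guard against this failure mode is to watch the optimizer's recent error and flag when it rises well above its earlier level. The check below is a minimal sketch; the function name `drift_detected`, the window size, and the tolerance factor are assumptions picked for illustration, and real systems would typically use a more careful statistical test.

```python
from statistics import mean

def drift_detected(errors, window=5, tolerance=2.0):
    """Return True when the mean of the last `window` errors exceeds
    `tolerance` times the mean of all earlier errors."""
    if len(errors) < 2 * window:
        return False                      # not enough history to compare
    baseline = mean(errors[:-window])     # error level before the recent window
    recent = mean(errors[-window:])       # error level in the recent window
    return recent > tolerance * baseline

stable = [0.1] * 10
shifted = [0.1] * 10 + [0.5] * 5
print(drift_detected(stable))   # False
print(drift_detected(shifted))  # True
```

When the check fires, the loop can discard stale feedback or restart its search instead of continuing to refine against data that no longer reflects the environment.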

Future Directions in Optimization Techniques

Optimization techniques continue to evolve, driven by advances in technology and a deeper understanding of complex problem-solving. For self-taught optimizer loops such as Star, Self-Refine, and Backtracking, several anticipated directions could enhance their effectiveness and broaden their application across industries.

One significant trend is the integration of artificial intelligence and machine learning algorithms within optimization processes. These technologies have the potential to automate and enhance the decision-making process inherent in self-taught loops. By analyzing vast datasets, AI can identify patterns and optimize the search space more efficiently than traditional methods. For instance, reinforcement learning could be employed to refine the parameters of the optimization loops dynamically, resulting in more adaptive and robust solutions.

Moreover, as computational resources grow more powerful and accessible, there is an opportunity to tackle more complex problems that were previously infeasible to solve. If quantum computing matures, it could change how some classes of optimization problems are solved, potentially reaching solutions much faster than is possible today. Such an advance could affect sectors including logistics, finance, and healthcare, where optimization plays a crucial role in operational efficiency and strategic planning.

Additionally, the exploration of hybrid optimization methods, which combine elements of different optimization strategies, is poised to gain traction. Such approaches can leverage the strengths of various techniques, such as heuristic and exact optimization methods, to yield more comprehensive solutions. Industries that rely heavily on optimization, including manufacturing and telecommunications, may benefit greatly from such innovative methodologies.

Overall, optimization techniques related to self-taught loops are set for substantial growth and innovation. As technology progresses and new methodologies emerge, practitioners will likely discover novel applications and improved efficiencies, reshaping how industries approach problem-solving and optimization.

Conclusion: The Impact of Self-Taught Optimizer Loops

Self-taught optimizer loops, including methodologies such as Star, Self-Refine, and Backtracking, represent a pivotal advancement in the realm of optimization strategies. These systems leverage their own past performance data to iteratively enhance their efficiency and efficacy, ultimately leading to superior outcomes across various applications. By facilitating a cycle of self-improvement, these loops offer a more dynamic and responsive approach to problem-solving, setting them apart from traditional static optimization methods.

The insights gained from this exploration underscore the significance of adaptive learning processes in optimization. The Star method, with its broad exploration of candidate solutions, gives a wider view of achievable outcomes. Meanwhile, the Self-Refine technique concentrates on specific candidates, enabling focused enhancements. Backtracking adds a layer of resilience by allowing the optimization process to revert to previous iterations when necessary. Together, these methods form a comprehensive strategy that takes full advantage of self-directed learning.

As the field of optimization continues to evolve, the role of self-taught optimizer loops is likely to expand. With advancements in artificial intelligence and machine learning, the potential for these loops to integrate with other technologies is promising. This integration may enhance their functionality, allowing for even more personalized and context-aware optimization strategies. Therefore, the future of self-taught optimizer loops holds considerable potential, suggesting that further research and application of these techniques will yield valuable contributions to both theory and practice in optimization.