Introduction to Global Models and Data Biases

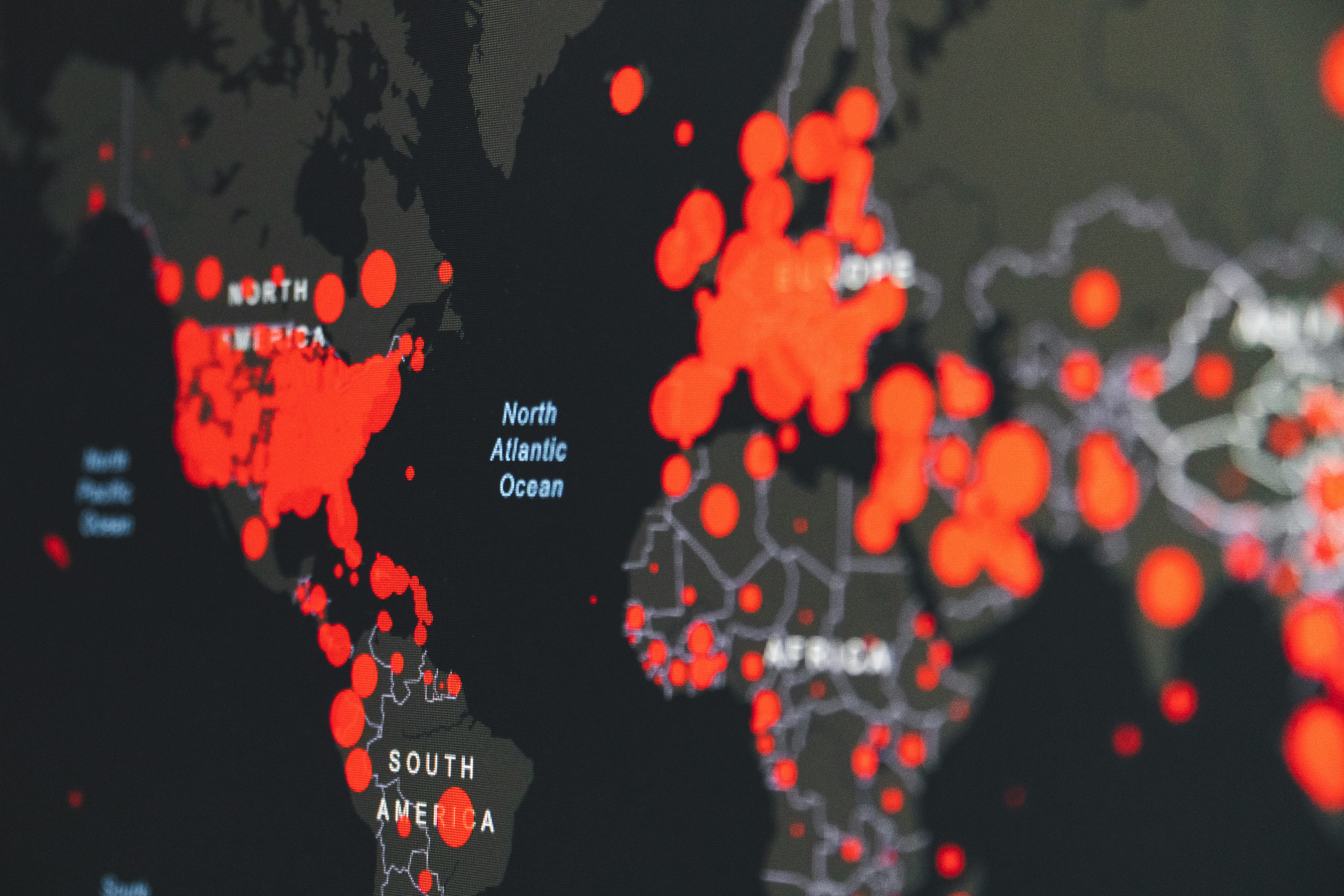

In the realm of machine learning, global models play a critical role in processing vast amounts of data to make predictions and inform decisions across various sectors. These models are designed to learn from diverse datasets, integrating information for generalized applications. However, the geographical and cultural origins of the training data profoundly influence their performance and efficacy. It is therefore essential to understand the implications of training such models on predominantly Western datasets as opposed to Indic datasets.

Data biases refer to the systematic, erroneous preferences embedded in data that can skew an artificial intelligence model’s output. These biases often emerge when models are developed without sufficient representation from diverse populations, leading to skewed predictions. For instance, when a global model primarily utilizes data from Western countries, it may not accurately reflect the needs, preferences, or behaviors of users from other regions, such as India.

Training data collected from Western datasets frequently embodies cultural narratives, societal norms, and behavioral patterns that may not align with those found in Indic datasets. As a result, the model may inadvertently perpetuate stereotypes or misconceptions about non-Western populations, which is detrimental to achieving fairness and equity in AI applications. The disparity between datasets raises important questions about the interpretability, accountability, and ethical use of AI technologies.

With the rapid advancement of artificial intelligence, it becomes increasingly vital for developers and researchers to address these biases proactively. By broadening the scope of training datasets to include a variety of cultural backgrounds, particularly those of underrepresented regions, global models can be made more inclusive. This broader coverage not only enhances the model’s performance but also fosters trust among users, allowing AI to serve as a more effective tool in the global context.

In the landscape of artificial intelligence and machine learning, the significance of utilizing diverse datasets cannot be overstated. A diverse dataset encompasses a variety of cultural, social, and linguistic contexts, which is essential for developing global models that are equitable and effective across different demographics. When training models, relying solely on datasets from a single region—such as Western datasets—can lead to biases that undermine the model’s ability to perform well in other cultural settings.

The incorporation of diverse datasets allows machine learning algorithms to learn from a broader spectrum of human experience, thereby enhancing their ability to generalize across different scenarios. For instance, integrating data from Indic contexts can provide insights into unique social nuances, idiomatic expressions, and cultural references that Western datasets might overlook. Such an approach not only enriches the training process but also mitigates the risk of developing models that perpetuate existing biases or fail to understand the needs of varied user groups.

Moreover, diverse datasets can significantly improve the model’s applicability. By training on varied information, the model gains exposure to multiple ways of thinking and problem-solving, which ultimately drives innovation and creativity in AI solutions. This practice leads to the development of models that are more robust and capable of performing effectively across different geographic and demographic contexts. As models become more inclusive, they are better equipped to address complex challenges that arise in global settings, fostering a more comprehensive understanding of issues and providing fairer outcomes.

In summary, leveraging diverse datasets is critical for enhancing the performance and applicability of global models. By acknowledging and integrating the wealth of perspectives provided by different cultural and social contexts, developers can create models that are not only effective but also representative of the diverse world we inhabit.

Understanding Western Datasets and Their Implications

Western datasets have become a predominant source for training global models, resulting in a significant influence over digital narratives. These datasets are typically curated from a variety of sources, including social media, consumer behavior studies, and public records, which often reflect the socio-economic narratives and cultural norms prevalent in Western societies. One key characteristic of these datasets is their representation of issues, values, and preferences that resonate with Western audiences, potentially skewing the perception of global perspectives.

As models trained on Western datasets are deployed worldwide, the biases embedded in this data can manifest in diverse ways. For instance, algorithms may prioritize information that aligns with Western cultural norms, unintentionally marginalizing or misrepresenting the values and behaviors of non-Western communities. This can lead to a disparity in how individuals from different backgrounds interact with technology, ultimately contributing to a lack of inclusivity in digital environments.

Moreover, the reliance on Western-centric data can influence the design and functionality of systems, fostering an ecosystem that may alienate users who do not conform to Western ideals. Such biases can be particularly problematic in areas like natural language processing, where models might struggle to effectively interpret or generate content that accurately reflects non-Western contexts, leading to misunderstandings and miscommunications.

Addressing these implications requires an awareness of the limitations inherent in Western datasets. By acknowledging the cultural specificities and socio-economic contexts that inform these sources, it becomes possible to better appreciate the ways in which they can perpetuate bias. This understanding can aid in developing more balanced, inclusive datasets that represent diverse worldviews, laying the groundwork for more equitable global models.
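
For text corpora, one rough way to build this awareness is to measure how the letters in a dataset are distributed across writing systems. The sketch below is only an illustration under stated assumptions: the function names and the three-line corpus are hypothetical, and script is a coarse proxy for language (Hindi written in Latin characters, for example, would be counted as Latin).

```python
import unicodedata
from collections import Counter

def script_counts(texts):
    """Count letters per writing system, using the first word of each
    character's Unicode name (e.g. "LATIN", "DEVANAGARI", "TAMIL")."""
    counts = Counter()
    for text in texts:
        for ch in text:
            # Count letters only; digits, punctuation, spaces and
            # combining marks are skipped.
            if unicodedata.category(ch).startswith("L"):
                name = unicodedata.name(ch, "")
                if name:
                    counts[name.split()[0]] += 1
    return counts

def script_shares(texts):
    """Return each script's share of all counted letters."""
    counts = script_counts(texts)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {script: n / total for script, n in counts.items()}

# Hypothetical three-line corpus, for illustration only.
corpus = ["hello world", "नमस्ते", "வணக்கம்"]
counts = script_counts(corpus)
shares = script_shares(corpus)
for script, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{script}: {share:.1%}")
```

A heavily skewed result does not prove that a model will be biased, but it is an inexpensive signal that some communities are barely present in the data.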

Insights from Indic Datasets

Indic datasets are characterized by their remarkable linguistic diversity, cultural richness, and social complexities. Covering a vast array of languages—over 700 spoken across the Indian subcontinent—these datasets offer an extensive spectrum of linguistic variations. Each language embodies unique syntax, semantics, and idiomatic expressions, which contribute to the overall complexity of communication within these communities. Consequently, models trained on Indic datasets are likely to capture nuances that models relying solely on Western datasets might overlook.

Culture plays a pivotal role in shaping the meanings and contexts of language. Indic datasets encompass multiple cultural dimensions, including mythology, folklore, traditions, and contemporary social issues. For example, regional traditions significantly influence people’s interactions, beliefs, and values. This cultural wealth can provide models with valuable context, allowing for a more comprehensive understanding of various social phenomena. By incorporating insights from Indic datasets, global models can achieve a more nuanced perspective, addressing potential biases arising from homogeneous datasets.

Furthermore, social complexities within Indic communities span a range of factors, including caste dynamics, gender roles, and rural-urban divides. These intricacies add layers of context that are essential for the development of models meant for global applications. Ignoring them could inadvertently lead to skewed interpretations and outputs. Models that leverage Indic datasets can better represent diverse viewpoints and support more equitable frameworks, thus addressing, at least in part, the biases that may arise from a Western-centric approach.

Training on Indic datasets not only enables models to provide more diverse outputs but also helps cultivate an inclusive understanding of global narratives. By embracing the linguistic, cultural, and social richness inherent in these datasets, we can foster advancements in artificial intelligence that resonate with a wider audience and reflect a more accurate representation of our interconnected world.

Comparing Biases Arising from Western vs Indic Training Data

In the ever-evolving landscape of artificial intelligence (AI) and machine learning, biases in training datasets hold significant implications for model accuracy and fairness. This is particularly relevant when analyzing models built predominantly on Western versus Indic datasets. The disparities in cultural contexts, social norms, and linguistic nuances can lead to divergent biases that influence the outcomes of these models.

Models trained on Western datasets often reflect the socio-economic, cultural, and political frameworks prevalent in those regions. For instance, when machine learning algorithms are developed using a primarily Western lens, they may inherit biases that align with Western ideologies, potentially marginalizing perspectives from other cultures. Consequently, models used in sectors such as finance may produce risk assessments or credit evaluations that do not account for the diverse social behaviors typical of non-Western societies. This can result in inequitable decisions that amplify existing inequalities.

On the other hand, Indic datasets, rich in cultural diversity, present their own set of challenges. Training models on Indic data requires careful consideration of the wide-ranging languages, traditions, and social dynamics present in the region. If these are not adequately addressed, biases may emerge in healthcare technologies, skewing diagnoses and treatment recommendations to favor demographics represented in the data over others. For example, a healthcare model might underrepresent diseases prevalent in certain populations, leading to an ineffective standard of care.

Furthermore, social media applications are strongly shaped by the biases present in their training data. A model that relies heavily on Western narrative structures may misinterpret culturally specific content found in Indic contributions, resulting in miscommunication and misunderstanding among users. Thus, the nature and origin of training data play a critical role in shaping the biases that manifest in AI applications across various sectors, warranting a thorough comparative analysis to promote fairness and inclusivity.

Case Studies: Consequences of Data Bias

One of the most vivid illustrations of data bias can be found in the realm of facial recognition technology. Several studies have revealed that models trained predominantly on Western datasets exhibit substantial discrepancies in accuracy across different demographic groups. For instance, a well-known audit of commercial gender classification systems found error rates as high as roughly 34% for darker-skinned women, while the error rate for lighter-skinned men stayed below 1%. This case starkly highlights how biases entrenched in training datasets can give rise to systematic discrimination and misrepresentation of certain demographics, leading to potentially harmful societal repercussions.
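
Disparities of this kind only become visible when errors are broken down by group rather than averaged over the whole test set. The following is a minimal sketch of that breakdown; the function name, the group labels "A" and "B", and the toy labels are hypothetical and are not data from any real benchmark.

```python
from collections import defaultdict

def error_rate_by_group(y_true, y_pred, groups):
    """Return {group: fraction of misclassified examples in that group}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        if truth != pred:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}

# Hypothetical toy labels, for illustration only.
y_true = [1, 1, 0, 0, 1, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = error_rate_by_group(y_true, y_pred, groups)
overall = sum(t != p for t, p in zip(y_true, y_pred)) / len(y_true)
print(rates)    # per-group error rates
print(overall)  # the single aggregate figure hides the gap between groups
```

In this toy example the aggregate error rate is 25%, yet every mistake falls on group B, which is exactly the pattern a single headline accuracy number conceals.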

Similarly, in the context of language processing models, research indicates that Western-centric datasets contribute to significant performance disparities when addressing non-Western languages. One particular instance involved a translation model that was predominantly trained on English-French datasets. Users attempting to translate languages that were poorly represented in the training data, such as Tamil or Telugu, encountered inaccuracies that not only distorted meaning but also obscured cultural nuances. This resulted in a lack of trust in the technology and reinforced linguistic hierarchies that prioritize certain languages over others, a situation that could change if the models were also trained on Indic datasets.

Another relevant example concerns the hiring algorithms used in recruitment processes. In an experiment conducted by a large technology firm, the model was found to favor candidates whose resumes came primarily from Western universities. This bias substantially marginalized qualified candidates from diverse backgrounds, thereby perpetuating a homogeneous workforce. The firm eventually had to recalibrate its model to account for a wider range of candidates, a lesson in the necessity of comprehensive and diverse training data.

Mitigating Bias in Global Models

As the reliance on global models increases, the importance of reducing biases in these systems has become more critical. These biases often arise when models are trained on datasets that predominantly reflect specific cultural or regional perspectives, such as Western or Indic datasets. To mitigate these biases, data scientists and organizations can adopt several strategies aimed at creating more equitable and representative machine learning models.

One effective approach is to incorporate a diverse array of data sources during the model training process. This entails actively seeking datasets that include voices from underrepresented groups and regions. For instance, integrating Indic datasets alongside Western datasets can provide a more balanced perspective, helping to develop models that are better equipped to handle a variety of cultural contexts.
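
When the combined data remains lopsided, a common complement to collecting more data is to reweight examples so that each source contributes equally during training. The sketch below assumes every example carries a hypothetical source tag such as "western" or "indic"; it uses the inverse-frequency heuristic `n_samples / (n_sources * count)`, the same formula scikit-learn documents for its "balanced" class weights, and is not a prescribed method.

```python
from collections import Counter

def balanced_sample_weights(sources):
    """Weight each example so every source has the same total weight.

    weight = n_samples / (n_sources * count_of_that_source)
    """
    counts = Counter(sources)
    n_samples = len(sources)
    n_sources = len(counts)
    return [n_samples / (n_sources * counts[s]) for s in sources]

# Hypothetical corpus: six Western examples for every two Indic ones.
sources = ["western"] * 6 + ["indic"] * 2
weights = balanced_sample_weights(sources)

# Total weight per source after reweighting.
totals = {}
for source, weight in zip(sources, weights):
    totals[source] = totals.get(source, 0.0) + weight
print(totals)
```

Such weights can typically be passed to a training routine that accepts per-sample weights. Reweighting cannot create information that the smaller source lacks, so it complements rather than replaces the collection of additional data.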

Moreover, engaging with local experts and communities during the data selection process can enhance the relevance and applicability of the data being used. These experts can offer invaluable insights into the nuances within their contexts, ensuring that the datasets are not only diverse but also reflective of real-world complexities. Additionally, periodic reviews of trained models can help identify and rectify biases that may not have been evident during the initial training phase.

Another strategy involves utilizing techniques such as adversarial training, where the model is exposed to examples deliberately crafted to challenge its biases. This can make the model more robust against biased predictions. Applying fairness metrics and constraints during model evaluation can also help pinpoint bias and verify that performance is consistent across different demographic groups.
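
As one illustration of a fairness check at evaluation time, the sketch below computes the demographic parity gap, meaning the difference between the highest and lowest rates of positive predictions across groups, and compares it with a threshold. The function names, the toy predictions, and the 0.1 threshold are assumptions made for this example; demographic parity is only one of several fairness criteria, and the appropriate metric and tolerance depend on the application.

```python
def selection_rates(y_pred, groups):
    """Return {group: share of examples predicted positive (label 1)}."""
    totals, positives = {}, {}
    for pred, group in zip(y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_gap(y_pred, groups):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())

def passes_fairness_check(y_pred, groups, max_gap=0.1):
    """True if the parity gap is within the (assumed) tolerance."""
    return demographic_parity_gap(y_pred, groups) <= max_gap

# Hypothetical predictions for two groups, for illustration only.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

gap = demographic_parity_gap(y_pred, groups)
ok = passes_fairness_check(y_pred, groups)
print(gap, ok)
```

A check like this can run alongside ordinary accuracy tests, so that a model whose predictions diverge too far between groups is flagged before deployment.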

In summary, the journey toward mitigating bias in global models is multifaceted and requires a proactive stance in dataset selection and model training. By prioritizing inclusivity and representation, organizations can improve both the effectiveness and the ethical standing of their artificial intelligence systems.

The Role of Stakeholders in Addressing Bias

Addressing biases in global models requires a concerted effort from various stakeholders, each contributing unique insights and expertise. Policymakers, technologists, and data scientists all play critical roles in fostering an equitable data landscape. Their collaboration is vital to ensure that the models developed are not only technically sound but also socially responsible.

Policymakers are tasked with establishing regulations and standards that promote fairness in technology development and usage. They must focus on implementing guidelines that encourage transparency and accountability in AI systems, ensuring that the datasets used for training are representative of diverse populations. Furthermore, they can facilitate collaborations between stakeholders to share best practices and contribute to a collective understanding of biases present in global models developed using Western or Indic datasets.

Technologists, including software engineers and machine learning experts, are responsible for the architecture of models and the deployment of algorithms. Their expertise is vital in implementing mechanisms that can detect and mitigate biases in training datasets. By prioritizing inclusivity in model design, they can create algorithms that recognize and adapt to various cultural contexts, thereby reducing the skew towards specific demographics often seen in models trained on Western datasets.

Data scientists, on the other hand, are essential in curating and preparing the datasets used for model training. They must ensure that the data is impartial, comprehensive, and free from historical prejudices. This involves actively engaging with communities represented in the data, thereby fostering a participatory approach that acknowledges the intricacies of various cultures.

In conclusion, a multifaceted approach that involves all stakeholders is necessary to combat bias effectively. Each group must understand its responsibilities and collaborate towards the common goal of achieving equity in AI systems.

Conclusion: Towards a More Inclusive AI Future

As we reflect on the discussions surrounding biases in global models trained on Western versus Indic datasets, several crucial points emerge. The prevalence of biases in artificial intelligence cannot be overlooked, as it fundamentally influences the effectiveness and fairness of AI technologies. Recognizing these biases is the first step towards understanding their implications for global representation and functionality. The reliance on Western datasets often results in models that fail to adequately serve non-Western populations, leading to systemic inequities in various sectors, including healthcare, finance, and education.

Proactive measures must be taken to address these disparities. This includes the incorporation of diverse datasets that reflect global demographics, ensuring that AI systems are not developed in isolation but are inclusive of perspectives from all around the world. Moreover, collaboration between researchers from varied cultural backgrounds can enhance the development of more nuanced and fair AI algorithms. Stakeholders must advocate for transparency in AI processes to foster trust and enable users to understand how and why decisions are made by these intelligent systems.

In shaping a more inclusive AI future, we must emphasize the importance of designing technologies that serve all communities equitably. By prioritizing ethical AI practices and diversifying the data from which models learn, we can mitigate biases and improve the reliability of AI. Ultimately, the goal is to create intelligent systems that not only reflect the complexities of human language and behavior but also bring about improved outcomes for individuals across the globe. By championing this inclusivity, we pave the way for AI that truly benefits a diverse world.