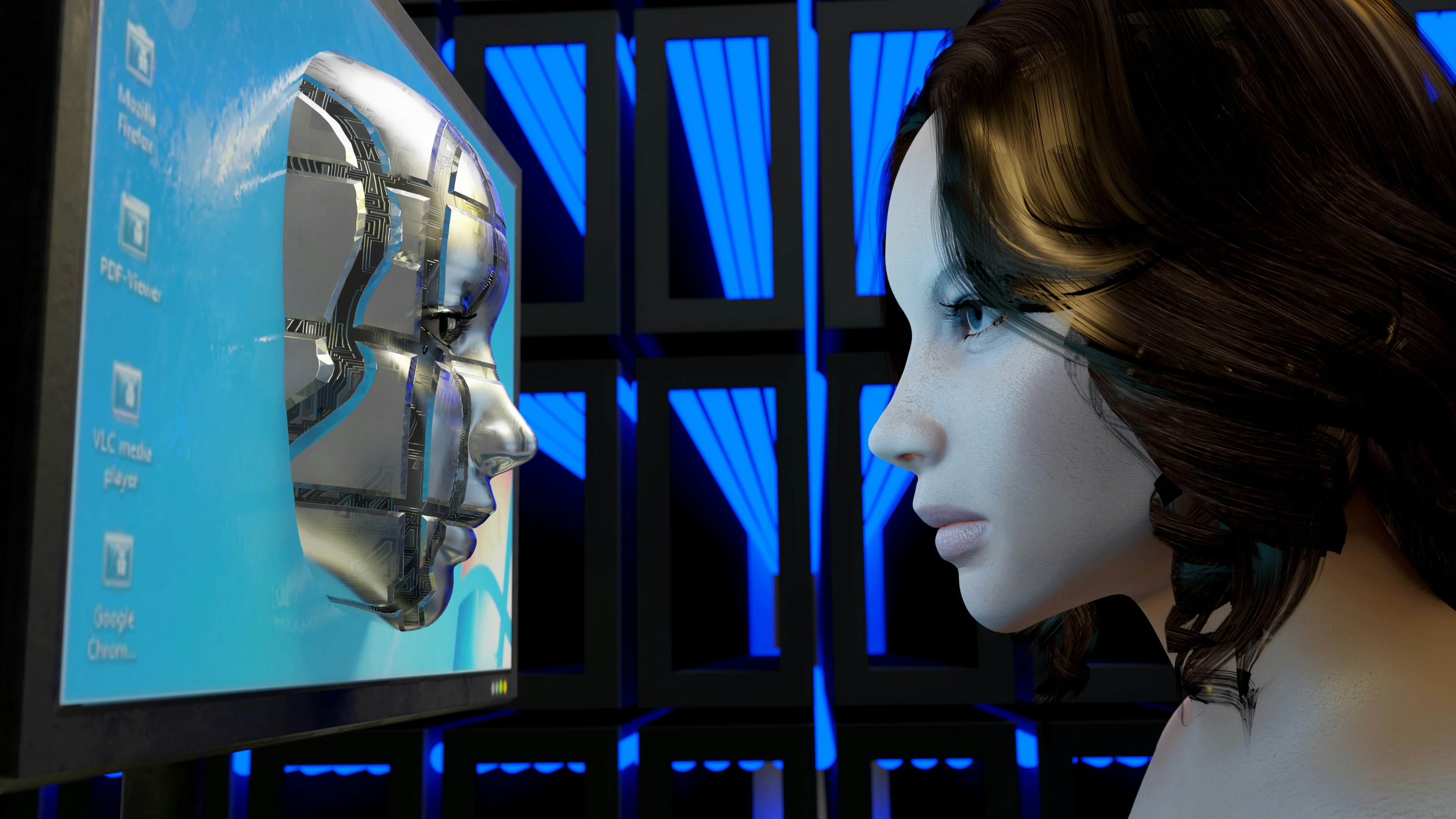

Introduction to Deepfakes and Dystopian Scenarios

Deepfake technology leverages artificial intelligence and machine learning to create realistic yet fabricated content. It allows for the seamless manipulation of audio and visual media, producing video and audio files that make individuals appear to say or do things they never said or did. Creating a deepfake typically involves training algorithms on large datasets of the target’s existing media; the trained models then generate new content that closely mimics the person’s characteristics.

As the capabilities of deepfake technology become more advanced, the potential for its misuse increases significantly. Dystopian scenarios are emerging where deepfakes could be employed as tools for disinformation campaigns. Governments, corporations, or malicious actors might exploit this technology to create fake news or misleading content, undermining democratic processes and public discourse. For example, a deepfake could manipulate a world leader’s likeness, prompting international tensions based on fabricated statements or actions.

Beyond misinformation, deepfakes pose a considerable risk of identity theft. Cybercriminals may utilize deepfake technology to impersonate individuals, leading to potential fraud, reputational damage, and erosion of privacy. The increasing accessibility of deepfake creation tools further exacerbates these threats, as individuals with malicious intent can produce convincing content with minimal technical expertise.

This manipulation of media also significantly impacts societal trust in authentic sources of information. As deepfakes proliferate, distinguishing between legitimate and fabricated content becomes increasingly challenging for the public. This could lead to a broader societal skepticism regarding media, ultimately damaging the fundamental trust needed for effective communication and informed citizenship. Understanding deepfakes and their implications is essential for developing strategies to mitigate their negative consequences.

The Rise of Deepfake Technology

Deepfake technology has emerged as a significant advancement in the field of artificial intelligence (AI) and machine learning (ML). At its core, a deepfake is synthesized multimedia content that combines, replaces, or superimposes existing audio or video from a source, creating an illusion of authenticity. The underlying processes rely on deep learning methods and neural networks, which have developed considerably in recent years. These advancements have made deepfake content increasingly realistic and convincing.

Initially, deepfake technology was applied primarily for entertainment, ranging from humorous memes to film and video production. However, the rapid growth of the technology and its accessibility have contributed to its widespread adoption across various platforms. The proliferation of user-friendly software and tools has enabled individuals, not just skilled developers, to produce deepfakes with relative ease. This democratization of the technology raises concerns about its ethical implications and potential misuse.

Moreover, the popularity of deepfakes can be partly attributed to social media platforms, which provide an audience eager for unique and captivating content. As these platforms continue to evolve, they inadvertently promote the sharing and visibility of deepfake videos, amplifying their reach and influence. The consequences of this phenomenon extend far beyond entertainment; the ability to manipulate images and sounds can distort reality, create misinformation, and reframe perceptions.

The combination of these factors has led to an environment where deepfake technology thrives. As it becomes more accessible and sophisticated, the challenge lies in finding effective solutions to prevent the negative repercussions associated with its misuse. Addressing these challenges is essential to maintaining the integrity of information dissemination and public discourse in our increasingly digital world.

Understanding the Dangers of Deepfakes

Deepfakes represent a significant technological advancement, yet they also pose various threats that can destabilize societal structures and individual reputations. At their core, deepfakes utilize artificial intelligence to create hyper-realistic alterations in video and audio media, often resulting in manipulated content that can misinform or mislead audiences. The implications of such technology are profound, particularly in an era where media consumption is already plagued by misinformation.

One of the most critical dangers of deepfakes lies in their ability to manipulate public opinion. By producing fabricated video clips of political figures delivering inflammatory or misleading statements, deepfakes can sway elections or tarnish the reputation of public officials. For example, a deepfake that portrays a politician endorsing radical views can lead to public outcry and irreversible damage to their career, even if the content is entirely false. This potential for deception extends beyond political landscapes, infiltrating the realm of social media and personal relationships, where individuals’ images can be distorted to create damaging narratives.

Additionally, deepfakes have been used for blackmail and harassment, particularly targeting women by generating non-consensual explicit content. These instances inflict real psychological harm and may lead to severe consequences, including loss of employment and social ostracization. The anonymity and accessibility of creating deepfakes exacerbate their use as tools of exploitation, highlighting the urgent need for robust countermeasures.

Moreover, the credibility of media sources is increasingly at risk. As audiences become aware of the potency of deepfakes, they may grow distrustful of authentic content, leading to a general skepticism around news and information dissemination. This erosion of trust could undermine democratic processes and exacerbate societal divisions. Addressing these threats is imperative to safeguard both individual dignity and societal integrity in our increasingly digital age.

Legal and Ethical Considerations Surrounding Deepfakes

The proliferation of deepfake technology has raised significant legal and ethical issues that warrant close examination. As deepfakes become more sophisticated and prevalent, the existing legal framework struggles to keep pace with the challenges they present. Currently, many jurisdictions lack specific laws addressing deepfakes, although existing statutes related to defamation, intellectual property, and privacy can be applied. For instance, individuals whose likenesses are manipulated without consent may have grounds for legal action under privacy laws. However, the lack of clear regulations specific to deepfakes often leads to uncertainty regarding their legal status.

One of the primary ethical concerns surrounding deepfakes is consent. The unauthorized use of a person’s image or voice raises questions about personal autonomy and the right to control one’s own likeness. This issue is particularly pressing in contexts where deepfakes are used to create misleading or harmful content, such as fake news or non-consensual pornography. The ethical implications extend to creators and distributors of deepfake content as well; they bear a responsibility to consider the potential consequences of their work on individuals and society at large.

Moreover, privacy is a crucial component of the debate over deepfakes. With the ability to create hyper-realistic videos, the risk of invasion of privacy increases significantly. Individuals may find their images used in contexts that misrepresent their beliefs or actions, leading to reputational harm. The growing recognition of these ethical dilemmas has prompted calls for comprehensive legislation that addresses consent, accountability, and the risks associated with deepfake technology.

As lawmakers consider new regulations, it is imperative that they strike a balance between protecting freedom of expression and addressing the potential for harm posed by deepfakes. This balance is challenging but necessary to safeguard individuals from misuse while fostering innovation in digital content creation.

Technological Solutions for Preventing Deepfake Abuse

The rise of deepfake technology has introduced significant challenges in maintaining the integrity of information and media. As this technology evolves, so too must the methods for combating its misuse. Various technological solutions are being developed to prevent deepfake abuse, focusing on detection, authentication, and content monitoring.

One of the most promising advancements is the implementation of deepfake detection algorithms. These algorithms utilize artificial intelligence and machine learning techniques to analyze media content for signs of manipulation. By examining inconsistencies in pixelation, anomalies in visual behavior, and unnatural movements, they can identify many digital fakes, although no detector is fully reliable. As machine learning models are retrained on larger and more varied datasets, their accuracy is expected to improve, leading to more reliable detection methods that can be integrated widely across platforms.
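To make the idea of checking for "unnatural movements" concrete, here is a minimal sketch in Python. It is a toy heuristic, not a real deepfake detector: production systems rely on trained neural networks. The sketch assumes frames are already decoded into small grayscale grids, and the function names and the 3.5 threshold are illustrative choices rather than part of any particular tool.

```python
from statistics import median

Frame = list[list[int]]  # grayscale frame: rows of pixel values in 0..255


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def flag_abrupt_transitions(frames: list[Frame], threshold: float = 3.5) -> list[int]:
    """Return indices i where the change from frame i-1 to frame i is an outlier.

    Outliers are found with a robust z-score based on the median absolute
    deviation (MAD), so a single large jump does not distort the baseline.
    """
    diffs = [mean_abs_diff(frames[i - 1], frames[i]) for i in range(1, len(frames))]
    if len(diffs) < 3:
        return []  # too few transitions to estimate a baseline
    med = median(diffs)
    mad = median(abs(d - med) for d in diffs)
    if mad == 0:
        # No spread at all: only transitions that differ from the median stand out.
        return [i + 1 for i, d in enumerate(diffs) if d != med]
    return [
        i + 1
        for i, d in enumerate(diffs)
        if 0.6745 * abs(d - med) / mad > threshold
    ]
```

A flagged index only means that a transition looks statistically unusual. An ordinary scene cut would trigger it too, which is why heuristics like this are at most one weak signal among many.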

Another effective approach is the use of watermarking techniques for authentic media. Watermarking involves embedding identifiable information into multimedia content that can be traced back to its source. This not only facilitates the verification of authentic material but also deters the propagation of deepfakes by making it easier to identify manipulated media. As watermarking technology matures, it provides a robust framework to enhance the trustworthiness of shared information across various digital platforms.
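As a rough illustration of embedding identifiable information in media, the following Python sketch hides a short source tag in the least significant bit of each pixel value. This is the simplest possible scheme and is an assumption made for illustration only. Real watermarking and provenance systems are far more robust, and the function names here are hypothetical.

```python
def embed_watermark(pixels: list[int], payload: bytes) -> list[int]:
    """Hide payload in the least significant bit of each pixel value (0..255)."""
    # Expand the payload into individual bits, most significant bit first.
    bits = [(byte >> shift) & 1 for byte in payload for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("payload too large for this image")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the low bit, then set it
    return marked


def extract_watermark(pixels: list[int], length: int) -> bytes:
    """Read back `length` bytes from the least significant bits."""
    out = bytearray()
    for byte_index in range(length):
        value = 0
        for pixel in pixels[byte_index * 8:(byte_index + 1) * 8]:
            value = (value << 1) | (pixel & 1)
        out.append(value)
    return bytes(out)
```

Each pixel changes by at most one intensity level, so the mark is invisible to viewers. It is also fragile: re-encoding or editing the image usually destroys it, so a missing or damaged mark can itself hint that the media was altered after publication.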

Furthermore, the role of AI in monitoring and flagging potentially harmful content is becoming increasingly vital. Through continuous monitoring, AI-driven systems can identify and flag suspicious media, notifying authorities and users of potential deepfakes. The integration of real-time monitoring tools empowers platforms to take proactive measures against misinformation while helping users distinguish credible content from misleading content.
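The flagging step can be pictured as a simple triage rule applied to a detector's output. The Python sketch below assumes a hypothetical upstream detector that assigns each item a manipulation score between 0 and 1. The thresholds and action names are illustrative assumptions, not values used by any real platform.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    media_id: str
    score: float
    action: str  # "allow", "review", or "flag"


def triage(
    scores: dict[str, float],
    review_at: float = 0.5,
    flag_at: float = 0.9,
) -> list[Decision]:
    """Map detector scores in [0, 1] to moderation actions."""
    decisions = []
    for media_id, score in scores.items():
        if score >= flag_at:
            action = "flag"      # high confidence: label the item and notify users
        elif score >= review_at:
            action = "review"    # uncertain: queue for human review
        else:
            action = "allow"
        decisions.append(Decision(media_id, score, action))
    return decisions
```

Keeping a middle "review" band reflects the fact that automated scores are uncertain, so borderline items go to people rather than being removed automatically.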

Overall, the advancement of technology aimed at combating deepfake abuse is a multifaceted initiative. By harnessing the power of detection algorithms, watermarking techniques, and AI, society can forge a path toward mitigating the risks associated with increasingly sophisticated disinformation tactics.

Education and Awareness: A Key Defense Against Deepfakes

The rise of deepfakes poses a significant threat to the integrity of information available online. As a response, enhancing public education and awareness about this technology is paramount in safeguarding individuals from its adverse effects. Implementing media literacy programs in educational institutions can equip students with the necessary skills to discern between genuine and manipulated content. These initiatives should focus on teaching students how to critically analyze sources, scrutinize information, and recognize the subtle nuances that characterize deepfakes.

Moreover, critical thinking skills should be fostered at all educational levels. By encouraging individuals to question the authenticity of visual and audio content, educational programs lay the groundwork for a more discerning public. Engaging lessons about the ethics of technology and the implications of misinformation can help instill a sense of responsibility among students. Deepfake technology is not inherently malicious, but its misuse can erode trust in digital media.

To further combat the challenges posed by deepfakes, it is essential to promote content verification strategies among the general population. Individuals should be encouraged to fact-check information before sharing it. Resources such as reverse image searches, video analysis tools, and reputable fact-checking websites help confirm whether the information being consumed is credible. Social media platforms must also contribute to this effort by developing and implementing algorithms that can detect and flag suspected deepfakes, thereby alerting users to potential misinformation.
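One building block often used for matching near-duplicate images, which is part of what makes reverse image search useful for verification, is a perceptual hash. The Python sketch below implements a simplified "average hash". It assumes the image has already been reduced to a small grayscale grid (for example 8×8), and it is meant as an illustration rather than a description of how any specific search engine works.

```python
def average_hash(image: list[list[int]]) -> int:
    """Pack one bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in image for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")
```

A uniformly brightened copy of an image yields the same hash, while replacing a region of the picture changes many bits. A small Hamming distance to a known original therefore suggests the same underlying image, and a large one suggests different or heavily edited content.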

In conclusion, fostering education and raising awareness about deepfakes is crucial in the ongoing battle against disinformation. By empowering individuals with the tools and knowledge to navigate today’s digital landscape, society can work collectively towards a more informed and vigilant populace capable of discerning the truth from deception.

Case Studies: Successful Prevention and Mitigation Strategies

The rise of deepfake technology has triggered significant concern over its potential for misuse. However, various organizations and platforms have taken proactive measures to combat the disinformation stemming from deepfake content. These case studies highlight the effective strategies employed to address this pressing issue.

One notable example is Facebook’s initiative, which aims to curb the spread of deepfakes and false information. The social media giant has implemented a multi-faceted approach that includes enhanced detection algorithms leveraging artificial intelligence. In collaboration with academic and industry partners, Facebook has developed tools that can identify manipulated media, which are then flagged for verification. The initiative is intended to limit the circulation of harmful deepfake videos and to help users reach reliable and authentic content.

Another instance can be observed in the efforts of the technology company Deeptrace. This organization specializes in detecting and analyzing deepfake videos. In a significant project, Deeptrace collaborated with news agencies to create a deepfake database that helps journalists and content creators discern the authenticity of visual media. By providing tools and resources for real-time deepfake detection, Deeptrace has raised awareness about the dangers of manipulated content and promoted best practices for media literacy within the community.

Furthermore, the collaboration between governmental bodies and technology firms has also yielded positive results. For example, the United States government has launched initiatives aimed at educating the public about deepfake technology. This involves partnering with non-profit organizations to disseminate information on recognizing and reporting potential deepfakes. By fostering an informed citizenry, these strategies aim to build resilience against disinformation campaigns powered by deepfakes.

These case studies demonstrate that a combination of technological innovation, community engagement, and educational outreach can prove effective in mitigating the risks associated with deepfake abuse. As organizations continue to refine their strategies, it is vital to learn from these experiences to establish robust defenses against the next wave of disinformation.

The Role of Social Media Platforms in Combatting Deepfakes

In recent years, the emergence of deepfake technology has posed significant challenges for social media platforms, as it can be used to create highly realistic but misleading content. As a consequence, these companies are increasingly recognizing their responsibility to mitigate the impact of deepfakes and disinformation on their platforms. Various measures have been introduced to combat the spread of such deceptive media.

Firstly, many social media platforms have implemented policies that prohibit the creation and dissemination of misleading content, including deepfakes. For example, Facebook and Twitter have established guidelines that define unacceptable behavior concerning manipulated media, which are crucial for maintaining the integrity of the information shared on their platforms. These policies serve not only to inform users but also to establish consequences for those who violate them, thereby discouraging the creation of harmful deepfakes.

In addition to policy development, social media companies have deployed advanced technological solutions to detect deepfakes. Machine learning algorithms and artificial intelligence tools are being used to analyze video and audio content in real time. By identifying telltale signs of manipulation, these technologies can flag potentially misleading content for further review. For instance, TikTok has initiated programs that use these techniques to identify deepfake videos and reduce their visibility, thus proactively addressing the issue.

Furthermore, collaboration with external fact-checking organizations has become a key strategy. Platforms are partnering with independent fact-checkers to assess the authenticity of content deemed suspicious. This partnership not only helps in verifying information but also educates users on the potential risks associated with deepfakes, promoting critical thinking in the consumption of media.

In conclusion, social media platforms play a vital role in combatting deepfakes through robust policies, innovative technologies, and strategic collaborations. By taking proactive measures, they aim to diminish the spread of disinformation and safeguard their communities from the adverse effects of manipulated media.

Conclusion: Building a Resilient Future in the Age of Deepfakes

The proliferation of deepfake technology poses a pressing challenge for contemporary society, particularly concerning the spread of disinformation. As this digital manipulation continues to evolve in sophistication, it is imperative to recognize that tackling this issue requires a comprehensive, multi-faceted approach. Firstly, technological advancements in deepfake detection tools must be prioritized, enabling individuals and organizations to distinguish between authentic content and manipulated media. These technological solutions can offer an essential line of defense against misinformation.

Moreover, legislative frameworks need to evolve in tandem with technological developments. Governments must create robust laws that address the creation and distribution of harmful deepfakes, ensuring accountability for malicious actors. This legal infrastructure should be complemented by international cooperation, as disinformation knows no borders. A collaborative global effort will enhance enforcement capabilities and build a cohesive strategy against the misuse of deepfake technology.

Education also plays a vital role in cultivating media literacy among the public. By equipping individuals with the skills necessary to critically evaluate digital content, society can fortify itself against the persuasive techniques employed by deepfakes. Initiatives that promote awareness and understanding of how deepfakes function will empower individuals to question the veracity of the content they consume.

Finally, the media must uphold high ethical standards in reporting, ensuring accurate representations of events and minimizing the impact of sensationalized misinformation. By fostering a responsible media environment, society can enhance the overall trustworthiness of information sources.

In conclusion, addressing the implications of deepfake technology calls for an integrated approach involving technological innovation, legislative action, educational initiatives, and responsible media practices. Only through concerted efforts can we build a resilient future that safeguards the integrity of information in an increasingly complex digital landscape.