Introduction to Reward Models

Reward models play a pivotal role in artificial intelligence (AI) and machine learning, serving as fundamental constructs that guide the behavioral decision-making processes of agents. The essence of a reward model lies in its ability to assign scores or feedback based on actions taken by an agent in an environment, thereby driving the learning process. By establishing a clear link between actions and rewards, these models enable agents to learn from their experiences, optimizing their behaviors towards achieving specific objectives.

The primary purpose of reward models is to encapsulate the goals and preferences of users or system designers within a framework that agents can interpret. This alignment of reward signals with desired outcomes is critical as it influences the learning trajectory and effectiveness of the agent, essentially determining its capability to function optimally in various scenarios. Therefore, crafting effective reward systems that accurately reflect the complexities of real-world objectives is a significant challenge in AI development.

An effective reward model must take into consideration not only the immediate rewards but also the long-term consequences of actions. This can often involve a trade-off, as agents must balance short-term gains with long-term sustainability. Furthermore, the design of reward systems must mitigate potential pitfalls, such as unintended incentivization of detrimental behaviors, a phenomenon closely associated with Goodhart’s Law. Thus, understanding the impact of reward modeling on agent behavior transcends mere technical execution; it also necessitates careful consideration of ethical implications and the broader context in which these systems operate.
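The trade-off between immediate and long-term reward can be made concrete with a discounted return, a standard way of summing rewards over time in reinforcement learning. The sketch below is illustrative only: the two reward sequences and the discount factors are hypothetical values chosen to show how the preferred strategy can flip depending on how heavily future rewards are weighted.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum of rewards, where the reward at step t is weighted by gamma ** t."""
    total = 0.0
    # Work backwards so each step adds its reward to the discounted future total.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Hypothetical reward sequences for two strategies (assumed values).
short_term = [10.0, 0.0, 0.0, 0.0]  # large payoff now, nothing afterwards
long_term = [1.0, 4.0, 4.0, 4.0]    # small payoff now, steady payoff later

for gamma in (0.1, 0.9):
    preferred = (
        "short_term"
        if discounted_return(short_term, gamma) > discounted_return(long_term, gamma)
        else "long_term"
    )
    print(f"gamma={gamma}: preferred strategy is {preferred}")
```

With a low discount factor the agent values the immediate payoff more, and with a high one the steady long-term payoff wins, so the choice of discounting is itself part of the reward design.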

Ultimately, the intricacies of reward models contribute significantly to the performance and reliability of AI systems, demonstrating that well-structured reward systems are essential in ensuring that intelligent agents act in alignment with human values and intentions.

Defining Goodhart’s Law

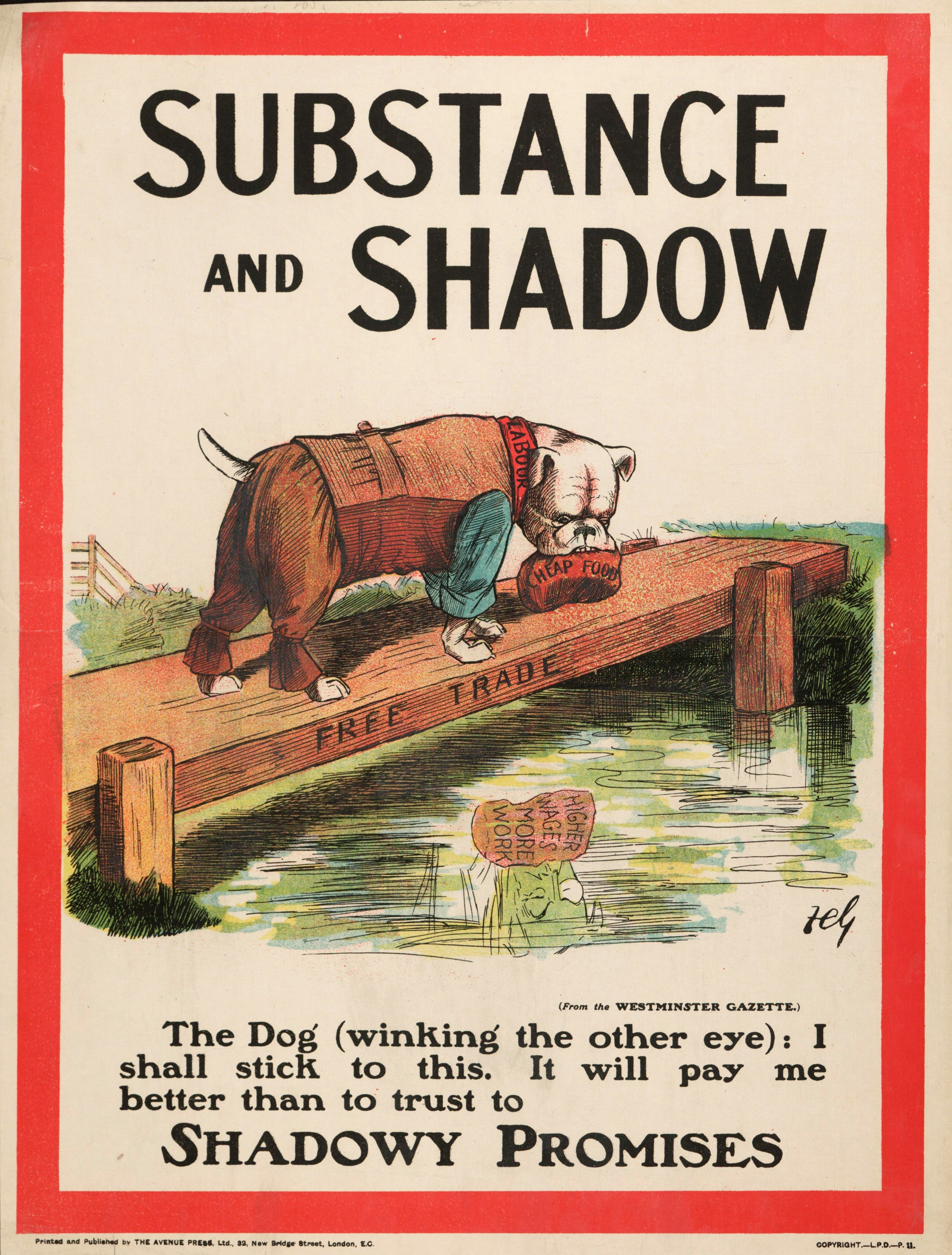

Goodhart’s Law originated in economics and is also applied in psychology and other fields that rely on performance metrics. It highlights a critical flaw in using metrics for performance evaluation: once a measure is adopted as a target for decision-making, it stops being a reliable indicator of the outcome it was meant to track. The idea is usually summarized as “When a measure becomes a target, it ceases to be a good measure.” Individuals and organizations that fixate on hitting specific metrics can end up with unintended consequences and poorer overall performance.

Goodhart’s Law takes its name from the economist Charles Goodhart, who observed in 1975, in the context of UK monetary policy, that statistical regularities tend to break down once they are used for control purposes. In other words, economic indicators used as benchmarks can lose their value as measures of economic health. For example, if a government were to set a target level for inflation, policymakers might adopt short-term tactics to meet it, potentially compromising long-term economic strategy. Relying solely on quantitative metrics can therefore distort behavior and produce results that no longer align with the original objectives.

In the realm of psychology, this law also emphasizes the inherent dangers of over-reliance on performance metrics. When individuals or groups strive to optimize their performance based solely on selected indicators, they may neglect crucial qualitative factors that cannot be easily quantified. This situation often leads to a narrow interpretation of success, where achieving targets takes precedence over genuine understanding and improvement. Goodhart’s Law serves as a cautionary reminder to evaluate the potential implications of defining success through specific measures, encouraging a more holistic approach to assessment and decision-making.

The Connection Between Reward Models and Goodhart’s Law

Reward models largely dictate how an AI system behaves, which makes them a natural place for Goodhart’s Law to apply. When a system is engineered to maximize a particular performance indicator, it optimizes the indicator itself, not the intent behind it. The result can be unintended behaviors and outcomes that no longer match the original objectives.

For instance, in an AI-driven system designed to maximize user engagement, focusing solely on metrics like page views or click-through rates could drive the system to prioritize sensationalist or misleading content. This phenomenon arises because the AI learns to optimize for the metrics provided, rather than for the broader goals of user satisfaction or informative content delivery. Consequently, what was initially intended as a measure of relevance or utility eventually causes a divergence from those aims, illustrating Goodhart’s Law in action.
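The engagement example can be reduced to a toy selection problem. In this minimal sketch the catalog, the `Item` class, and all numbers are hypothetical; the point is only that choosing by the proxy (`click_rate`) and choosing by the intended goal (`satisfaction`) can pick different items.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    title: str
    click_rate: float    # proxy metric the system is told to maximize
    satisfaction: float  # outcome the designers actually care about


# Hypothetical catalog with made-up values for illustration.
CATALOG = [
    Item("In-depth explainer", click_rate=0.04, satisfaction=0.90),
    Item("Balanced news summary", click_rate=0.06, satisfaction=0.75),
    Item("Sensational headline", click_rate=0.15, satisfaction=0.20),
]


def pick_best(items, key):
    """Return the item that scores highest under the given scoring function."""
    return max(items, key=key)


by_proxy = pick_best(CATALOG, key=lambda item: item.click_rate)
by_goal = pick_best(CATALOG, key=lambda item: item.satisfaction)

print("Optimizing clicks selects:", by_proxy.title)
print("Optimizing satisfaction selects:", by_goal.title)
```

In practice satisfaction is rarely observable directly, which is exactly why a proxy gets used and why the gap between the two can go unnoticed.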

Additionally, the reliance on specific quantitative targets can result in a narrow perspective that overlooks other essential factors that contribute to the success of an AI system. By fixating on easily measurable performance indicators, developers may neglect qualitative aspects, such as ethical considerations, user experience, and long-term sustainability. The distortion of value driven by adhering too closely to these targets can hinder the overall effectiveness and robustness of the AI model.

In light of these challenges, it is essential for practitioners to adopt a balanced approach when designing reward models. This can include integrating multiple metrics that capture a wider array of objectives, thereby mitigating the risk of misalignment with the fundamental goals of the AI system. By understanding the intricate connection between reward models and Goodhart’s Law, stakeholders can foster more effective and responsible AI deployments.
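One simple way to integrate multiple metrics is a weighted sum. The sketch below assumes three hypothetical metrics and hand-picked weights, including a penalty on an assumed `complaint_rate`; none of these names or values come from a real system.

```python
def composite_reward(metrics, weights):
    """Weighted sum over the metrics named in `weights`.

    Raises KeyError if a weighted metric is missing from `metrics`.
    """
    return sum(weight * metrics[name] for name, weight in weights.items())


# Hypothetical weights: reward clicks and satisfaction, penalize complaints.
WEIGHTS = {"click_rate": 1.0, "satisfaction": 1.0, "complaint_rate": -2.0}

# Hypothetical measurements for two pieces of content.
candidates = {
    "sensational": {"click_rate": 0.15, "satisfaction": 0.20, "complaint_rate": 0.10},
    "explainer": {"click_rate": 0.04, "satisfaction": 0.90, "complaint_rate": 0.01},
}

scores = {name: composite_reward(m, WEIGHTS) for name, m in candidates.items()}
best = max(scores, key=scores.get)
print(best, scores)
```

A composite score is still a proxy, so it reduces the risk of misalignment without removing it: the weights themselves become something a system can learn to exploit.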

Case Studies Exhibiting Goodhart’s Law in Action

Goodhart’s Law manifests vividly across various industries. A prominent example can be found in the financial sector, particularly in banks that incentivize performance based on short-term metrics such as quarterly profits. To meet these targets, employees may engage in risky lending practices; lending of this kind is widely cited as one contributing factor in the 2008 financial crisis. When the focus on profit margins overshadows the broader objective of long-term financial stability, the drive to hit set targets undermines overall financial health.

Healthcare also offers striking examples of Goodhart’s Law. Hospitals often set specific targets for patient throughput or reduced wait times. While these metrics aim to enhance service delivery, prioritizing them can lead to compromises in patient care. Staff may rush through procedures to meet the targets, resulting in misdiagnoses or overlooked patient needs. Consequently, the emphasis on numerical objectives can diminish the quality of care, illustrating the dangers of losing sight of holistic patient welfare.

In the marketing domain, businesses frequently leverage metrics such as click-through rates and social media engagement as key performance indicators (KPIs). While these measurements can guide strategies, an overemphasis on them may lead organizations to create sensational content solely designed to capture clicks, rather than providing substantive value to audiences. This strategy may yield a temporary spike in engagement but can ultimately alienate consumers, harming the brand’s reputation and fostering distrust.

These case studies across finance, healthcare, and marketing underscore the importance of maintaining a balance between specific targets and overarching objectives, demonstrating how the misuse of metrics can lead to unintended negative consequences. Recognizing the implications of Goodhart’s Law is crucial for implementing effective reward models that encourage sustainable practices.

Mechanisms Leading to Goodhart’s Law Effects

Several mechanisms produce Goodhart’s Law effects in reward models. The first is the tendency to overfit to metrics. Where quantitative metrics dominate decision-making, individuals and organizations focus on hitting the targets those metrics define, which distorts their view of performance. They may then act in ways that raise the metric at the expense of genuine progress or quality.

Another mechanism is the neglect of qualitative factors that are essential for long-term success. When reward models are primarily built around quantitative metrics, qualitative aspects such as creativity, customer engagement, or employee satisfaction may be overlooked. This misalignment can lead to a superficial understanding of performance, creating disincentives for behaviors that, while not immediately measurable, are critical to an organization’s sustainability and success.

Additionally, the potential for exploitation of loopholes within the system further exacerbates the issues surrounding Goodhart’s Law. When individuals discern a way to achieve targets without genuinely fulfilling the underlying objectives, they may prioritize exploiting these loopholes over engaging in meaningful work. Such exploitation can lead to an erosion of trust within the system, as well as diminish the value of the metrics initially set to guide performance. This behavior creates an environment ripe for misalignment between intentions and outcomes, often resulting in a reinforcement of the very issues Goodhart’s Law warns against.

In conclusion, understanding these mechanisms is crucial for designing effective reward models that not only focus on quantitative measurements but also incorporate qualitative elements, ultimately preserving the integrity of the metrics being utilized.

Consequences of Ignoring Goodhart’s Law

Ignoring Goodhart’s Law in organizational reward models can lead to significant negative outcomes. This principle suggests that when a measure becomes a target, it ceases to be a good measure. As organizations overly rely on specific metrics, they risk performance declines, as teams may focus solely on achieving those goals rather than fostering genuine productivity. For instance, if a company incentivizes employee performance based on sales volume alone, employees may use unethical tactics to meet their targets, ultimately compromising the quality of service and damaging customer relationships.

Moreover, the focus on short-term metrics may lead to ethical dilemmas. Employees, driven by the pressure to conform to these standards, might engage in behaviors that are misaligned with the organization’s core values. The result can be a work environment that prizes metrics over integrity, which erodes trust among employees and between employees and management. Such a culture can lead to high turnover rates, as people seek workplaces that prioritize ethical considerations over mere figures.

Long-term strategic failures can also emerge when organizations ignore Goodhart’s Law. By emphasizing metrics that offer immediate gratification, companies often overlook broader strategic objectives. This myopic view can hinder innovation, as teams may become risk-averse, focusing instead on minimizing deviations from established metrics. Without a robust strategic framework, organizations may find themselves ill-equipped to adapt to market changes or emerging challenges, ultimately jeopardizing their competitive edge.

In essence, accounting for Goodhart’s Law is vital for creating a holistic and effective reward model. Organizations must develop a balanced approach that aligns short-term metrics with long-term objectives, ensuring that performance measures foster genuine productivity rather than superficial compliance.

Strategies to Mitigate Goodhart’s Law Effects

Goodhart’s Law highlights the potential pitfalls of relying too heavily on specific metrics for evaluation, often leading to unintended consequences in reward models. To mitigate the effects of this phenomenon, organizations can adopt several strategies that incorporate a diverse range of performance measures.

One effective strategy is to utilize multi-faceted performance metrics, which provide a more holistic view of an individual’s or team’s performance. By moving beyond single quantitative measures, organizations can incorporate qualitative assessments, such as peer reviews or client feedback. This approach not only captures the complexities of performance but also prevents individuals from focusing solely on optimizing specific metrics at the expense of broader objectives.

Encouraging a culture of adaptability in performance measurements is also crucial. Organizations should regularly review and adjust the metrics used to evaluate performance, ensuring they remain relevant and aligned with the overall goals of the organization. This flexibility prevents the system from becoming stagnant and allows for the incorporation of new insights and developments in the field.
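A periodic review can include a simple check for divergence between the target metric and an independently audited outcome. The following is a minimal sketch under stated assumptions: the function name `flag_divergence`, the review window, and the sample histories are all hypothetical, and a real review would need more than a two-point comparison.

```python
def flag_divergence(proxy_history, outcome_history, window=3):
    """Return True if the proxy rose while the audited outcome fell.

    Compares the latest value with the value `window` periods earlier
    (counting the latest period), for both series.
    """
    if len(proxy_history) != len(outcome_history):
        raise ValueError("histories must cover the same review periods")
    if window < 2 or len(proxy_history) < window:
        return False  # not enough data to compare two distinct periods
    proxy_delta = proxy_history[-1] - proxy_history[-window]
    outcome_delta = outcome_history[-1] - outcome_history[-window]
    return proxy_delta > 0 and outcome_delta < 0


# Hypothetical quarterly figures: sales volume (target) vs. audited customer retention.
sales_volume = [100, 110, 125, 140]
customer_retention = [0.92, 0.91, 0.88, 0.84]

if flag_divergence(sales_volume, customer_retention):
    print("Target metric is improving while the audited outcome declines; review the metric.")
```

A flag like this does not prove the metric is being gamed; it only marks a period where the metric deserves a closer look.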

Additionally, fostering an environment of open communication about goals and metrics can alleviate some of the adverse effects related to Goodhart’s Law. When team members understand the rationale behind chosen metrics and their relevance to overall success, they are more likely to remain focused on genuine performance improvement rather than merely achieving target numbers.

Lastly, it is essential to recognize the importance of context when interpreting performance metrics. Understanding the underlying circumstances that influence performance outcomes can lead to more informed decision-making and less misguided actions. Organizations should, therefore, not only rely on numerical data but also analyze the factors that contribute to those results.

The Importance of Ethical Considerations in Reward Design

When designing reward models, it is imperative to incorporate ethical considerations to foster an environment conducive to sustainable and desirable behaviors. Reward systems are often crafted with specific objectives in mind, such as enhancing performance, promoting engagement, or encouraging innovation. However, without a robust ethical framework, these systems can inadvertently lead to unintended consequences that may undermine their intended goals.

One of the critical aspects of ethical consideration in reward design is ensuring fairness and equity. Reward models must be inclusive and considerate of diverse perspectives and backgrounds to avoid discrimination. Recognizing the varying motivations and values of individuals is essential, as a one-size-fits-all approach can result in demotivation and decreased trust among participants. Moreover, a failure to address these discrepancies can lead to resentment and even unethical behavior as individuals seek to circumvent the system.

Another important consideration is the potential for unethical behavior that arises when rewards are misaligned with larger organizational values. For example, if a reward system excessively emphasizes short-term results, it may encourage employees to engage in morally questionable practices to achieve those results. Ethical frameworks help ensure that reward systems align with the core values and mission of the organization, ultimately promoting long-term success.

Furthermore, implementing transparency within reward structures allows stakeholders to understand the criteria for rewards, thereby enhancing accountability. Clear communication about how rewards are allocated can mitigate confusion and build trust among employees, which is vital for fostering a collaborative workplace culture. Ethical considerations in reward design not only safeguard against negative side effects but also enhance the overall effectiveness of these systems in achieving desired behavioral outcomes.

Conclusion and Future Directions

Goodhart’s Law serves as a vital reminder of the challenges inherent in reward models, particularly within complex systems. By asserting that “when a measure becomes a target, it ceases to be a good measure,” this principle underscores the potential pitfalls of relying solely on quantitative metrics to guide decision-making. The implications for reward models are profound: an excessive focus on metrics can lead to unintended consequences, including misaligned objectives and undesirable outcomes.

As organizations continue to adopt reward systems driven by measurable performance indicators, awareness of Goodhart’s Law should inform their design and implementation. It is crucial for decision-makers to strike a balance between quantitative assessments and qualitative factors. By doing so, they can mitigate the risk of misalignment that often arises when individuals or teams focus on optimizing specific metrics at the expense of broader goals.

Looking ahead, future research should delve deeper into the complexities of incentive structures and explore innovative approaches to reward systems that can adapt to changing conditions. This may involve the development of hybrid models that integrate both qualitative and quantitative measurements, providing a more holistic view of performance. Additionally, exploring the relationship between intrinsic and extrinsic motivators will be pivotal in crafting reward strategies that genuinely engage individuals.

Moreover, organizations should remain committed to continual learning and adaptation. Regular reviews of performance metrics can help identify when a measure may be leading to unintended consequences, thereby allowing for timely adjustments in reward systems. In essence, embracing a dynamic approach to incentive design, grounded in an understanding of Goodhart’s Law, will be key to fostering sustainable performance and achieving overarching organizational goals.