Introduction to Batch Normalization

Batch normalization is a crucial technique in the field of deep learning, primarily aimed at accelerating the training of neural networks and enhancing their stability. Introduced by Sergey Ioffe and Christian Szegedy in a 2015 paper, this method addresses the challenges posed by internal covariate shift, which can impede proper training and convergence of deep learning models.

Batch normalization normalizes the inputs of each layer by centering and rescaling the activations. During training, it uses the mean and variance computed from the current mini-batch. Before the learnable scale and shift are applied, each normalized activation has roughly zero mean and unit variance within the batch, which keeps the distribution of layer inputs more consistent from one mini-batch to the next. In practice this often permits higher learning rates and reduces the number of training steps a deep network needs to converge.

Batch normalization works in two steps. First, for each mini-batch, the mean and variance of each feature are computed and used to standardize the input activations to zero mean and unit variance. This fixes the first two moments of the activations; it does not make them normally distributed. Second, two learnable parameters, a scale and a shift, are applied to the standardized values. These parameters are trained along with the rest of the network rather than set by the designer, so the layer can recover whatever output distribution is most useful, including the original unnormalized activations.
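The two steps can be sketched in a few lines of plain Python. This is a minimal illustration, not a framework implementation: it assumes a single scalar feature per example, and the function name `batch_norm_train`, the parameter names `gamma` and `beta`, and the value of `eps` are choices made for this example.

```python
import math

def batch_norm_train(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature over a mini-batch, then scale and shift it."""
    n = len(batch)
    mean = sum(batch) / n
    # Biased (population) variance of the mini-batch.
    var = sum((x - mean) ** 2 for x in batch) / n
    # Step 1: standardize with the batch statistics.
    x_hat = [(x - mean) / math.sqrt(var + eps) for x in batch]
    # Step 2: apply the scale (gamma) and shift (beta); in a real network
    # these two values are learned, here they are passed in by hand.
    return [gamma * v + beta for v in x_hat]

activations = [2.0, 4.0, 6.0, 8.0]
standardized = batch_norm_train(activations)
rescaled = batch_norm_train(activations, gamma=2.0, beta=0.5)
```

With the default `gamma=1.0` and `beta=0.0` the output has zero mean and close to unit variance; with other values the mean moves to `beta` and the standard deviation to roughly `gamma`.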

Overall, as batch normalization becomes a standard approach in training deep neural networks, understanding its mechanics and implications is essential. It plays a significant role in increasing the efficiency of training processes while ensuring that the learning dynamics are well-regulated. Consequently, batch normalization contributes to better performance and generalization of deep learning models.

The Mechanics of Batch Normalization

Batch normalization (BN) has become an essential technique within deep learning, used predominantly to speed up training and improve model stability. At its core, batch normalization normalizes the output of a previous layer by subtracting the batch mean and dividing by the batch standard deviation, with a small constant added to the variance for numerical stability. Before the learnable scale and shift are applied, the resulting values have a mean of zero and a variance of approximately one within the batch, which helps stabilize the learning process.

During the training phase, BN computes the mean and variance of the inputs for each mini-batch and uses them to standardize those inputs. It also maintains running averages of the mean and variance across mini-batches. This is where the critical difference between the training and testing phases lies: at test time, inputs may arrive one at a time or in batches of arbitrary size and composition, so batch statistics are unreliable and the stored running averages are used instead to produce consistent results.
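One common way to maintain these running averages is an exponential moving average, sketched below. The helper names, the initial values, and the momentum of 0.1 are assumptions made for this example; frameworks differ in how they define the momentum and in whether the running variance uses the biased or unbiased estimate.

```python
def batch_stats(batch):
    """Return the mean and biased variance of one feature over a mini-batch."""
    n = len(batch)
    mean = sum(batch) / n
    var = sum((x - mean) ** 2 for x in batch) / n
    return mean, var

def update_running(running, batch_stat, momentum=0.1):
    """Move the running estimate a fraction `momentum` toward the batch value."""
    return (1.0 - momentum) * running + momentum * batch_stat

# Assumed starting values for the running statistics.
running_mean, running_var = 0.0, 1.0

mini_batches = [[4.0, 6.0], [5.0, 7.0], [3.0, 5.0]]
for batch in mini_batches:
    mean, var = batch_stats(batch)
    running_mean = update_running(running_mean, mean)
    running_var = update_running(running_var, var)
```

After only three updates the running mean is still far from the batch means of 4 to 6, which illustrates why the averages need many training steps to settle.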

At test time, the computations shift from batch statistics to these accumulated estimates. The running averages of the mean and variance, computed during the training phase, are used to normalize the inputs. This removes the variability caused by the stochastic composition of mini-batches, resulting in more robust performance when the model is exposed to unseen data. If the model is left in training mode at test time, so that it normalizes with batch-specific statistics instead of the running averages, performance can deteriorate significantly.
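The test-time computation can be sketched as follows. The running statistics here are hypothetical values standing in for what training would have accumulated, and the function name `batch_norm_infer` is chosen for this example.

```python
import math

def batch_norm_infer(batch, running_mean, running_var,
                     gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize with fixed running statistics.

    Each output depends only on its own input, not on the rest of the batch.
    """
    scale = gamma / math.sqrt(running_var + eps)
    return [scale * (x - running_mean) + beta for x in batch]

# Hypothetical statistics accumulated during training.
running_mean, running_var = 5.0, 4.0

alone = batch_norm_infer([7.0], running_mean, running_var)
in_batch = batch_norm_infer([7.0, 100.0, -50.0], running_mean, running_var)
```

The input 7.0 is mapped to the same value whether it is processed alone or next to extreme values, which is the consistency the running averages are meant to provide.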

Therefore, clearly understanding how batch normalization operates during both training and testing phases is crucial for developing reliable deep learning models. Maintaining these running averages allows the model to function smoothly outside a training context, reinforcing the significance of batch normalization in the deployment phase.

The Transition from Training to Testing

In deep learning, the transition from training to testing significantly influences model performance. During the training phase, a model learns patterns from the training data through iterative updates to its weights based on a loss function, and each batch normalization layer computes its normalization statistics (mean and variance) within the current mini-batch. At testing or inference time, the source of these statistics changes: the layer stops computing them from the incoming data and relies on values stored during training.

During testing, the behavior of the model should remain consistent with its training state. The batch normalization layer, which contributed greatly to the learning process, requires careful consideration at this stage. Instead of recalculating statistics from the test data, which can lead to erratic behavior, test-time operation typically uses the running averages of the mean and variance accumulated during training. This consistency is crucial because discrepancies between the training and inference statistics change the activations that later layers receive, which can reduce accuracy even though the learned weights are unchanged.

Thus, maintaining the integrity of batch normalization statistics is essential for achieving reliable inference results. A model’s accuracy during testing is contingent upon replicating the training conditions as closely as possible. This involves not only preserving batch normalization behavior but also ensuring that the overall structure of the model remains intact. Any deviations during this critical transition can compromise the predictive power of the model. As such, it is imperative for practitioners to understand these nuances to optimize their deep learning frameworks effectively.

Why Test-Time Statistics Matter

In deep learning, the choice of statistics used during the testing phase can significantly influence the overall performance of a model. When making predictions, the model should normalize with the running averages accumulated during training rather than with mini-batch statistics. This practice is crucial for ensuring that the model’s output is both accurate and reliable. Mini-batch statistics depend on whichever examples happen to be grouped together at prediction time, which introduces variability that distorts the model’s predictions.

The impact of using inappropriate statistics during testing is profound. If a model was trained with batch normalization applied, it expects an environment during validation and testing that closely resembles the conditions seen during training. By employing mini-batch statistics at test time, there is a risk of altering the representation that the model has learned. This incongruence can lead to discrepancies between expected outcomes and actual predictions, which, in turn, can result in reduced accuracy and reliability. Essentially, if the model encounters variations in input distributions in test scenarios, its learned parameters may not perform optimally, leading to potential misinterpretations of data.

Moreover, these inconsistencies can compound in scenarios where high-stakes decisions are made based on model predictions, such as in healthcare or autonomous systems. Testing protocols that normalize with the running averages from training help mitigate these risks. As such, understanding the role of test-time statistics is crucial in optimizing the performance and trustworthiness of machine learning models. Attention to these details ensures that the benefits of batch normalization are fully realized, fostering models that are resilient and reliable across a wide array of applications.

Common Issues with Batch Normalization at Test Time

Batch normalization has become a widely adopted technique in training deep neural networks. It assists in reducing internal covariate shift, thereby speeding up the training process. However, the performance of batch normalization can be considerably hindered when the statistics calculated during training are improperly applied during testing. This section discusses common pitfalls associated with utilizing batch normalization at test time.

One of the primary issues arises when batch statistics are computed at test time and the batch sizes differ between the training and testing phases. During training, models typically benefit from reasonably large batch sizes that provide reliable estimates of batch statistics. At test time, smaller batches yield noisy estimates that degrade the model’s performance. For instance, if a model trained with batch sizes of 256 is evaluated with batch statistics and a batch size of 1, then for a fully connected layer the per-feature batch mean equals the single input and the batch variance is zero, so the normalized value is zero for every input and the layer’s output carries no information about it.
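The batch-size-1 case is easy to reproduce. The sketch below assumes a single scalar feature and deliberately uses batch statistics at test time; the function name and the chosen `gamma` and `beta` are illustrative.

```python
import math

def batch_norm_with_batch_stats(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature with the statistics of the batch it arrives in."""
    n = len(batch)
    mean = sum(batch) / n
    var = sum((x - mean) ** 2 for x in batch) / n
    return [gamma * (x - mean) / math.sqrt(var + eps) + beta for x in batch]

# Three very different inputs, each processed as its own batch of size 1.
outputs = [
    batch_norm_with_batch_stats([x], gamma=2.0, beta=0.5)[0]
    for x in (-3.0, 0.0, 42.0)
]
```

Every entry of `outputs` equals `beta`, because a batch of one has a mean equal to its only element and zero variance.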

Another significant concern is dependence on the current test samples during inference. If a batch normalization layer is left in training mode, it computes the mean and variance from the current batch rather than using the statistics accumulated during training. When the test batch is not representative of the training data, the model’s output fluctuates with the composition of the batch, potentially impacting decision-making in applications such as medical diagnoses or financial predictions.

Moreover, recomputing the statistics for every batch makes each prediction sensitive to the other examples in that batch. If the model encounters outliers during testing, they can disproportionately influence the batch mean and variance, causing unforeseen discrepancies in the predictions for every example in the batch. To mitigate these issues, practitioners should use fixed statistics rather than relying on dynamic computation at test time. In summary, understanding these common issues and their implications is crucial for ensuring the robustness of models that use batch normalization at test time.
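The effect of a single outlier can be illustrated with a small comparison. The sketch assumes one scalar feature; the fixed statistics are hypothetical values standing in for training-time running averages, and all names are chosen for this example.

```python
import math

def mean_and_var(batch):
    n = len(batch)
    mean = sum(batch) / n
    return mean, sum((x - mean) ** 2 for x in batch) / n

def normalize_batch(batch, mean, var, eps=1e-5):
    return [(x - mean) / math.sqrt(var + eps) for x in batch]

clean = [1.0, 2.0, 3.0, 4.0]
outlier = [1.0, 2.0, 3.0, 400.0]

# Dynamic statistics: recomputed from whichever batch is being processed.
dyn_clean = normalize_batch(clean, *mean_and_var(clean))
dyn_outlier = normalize_batch(outlier, *mean_and_var(outlier))

# Gap between the normalized values of the inputs 1.0 and 3.0.
gap_clean = dyn_clean[2] - dyn_clean[0]
gap_outlier = dyn_outlier[2] - dyn_outlier[0]

# Fixed statistics (hypothetical running averages from training).
fixed_mean, fixed_var = 2.5, 1.25
fix_clean = normalize_batch(clean, fixed_mean, fixed_var)
fix_outlier = normalize_batch(outlier, fixed_mean, fixed_var)
gap_fixed_clean = fix_clean[2] - fix_clean[0]
gap_fixed_outlier = fix_outlier[2] - fix_outlier[0]
```

With dynamic statistics the outlier inflates the batch variance so much that the inputs 1.0 and 3.0 become almost indistinguishable after normalization. With fixed statistics their separation is the same in both batches.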

Strategies to Mitigate Test-Time Issues

Batch normalization plays a crucial role during the training phase of deep learning models by normalizing layer inputs, which stabilizes learning and accelerates convergence. However, at test time, the reliance on batch statistics can lead to performance inconsistencies, particularly when the batch size is small or the data distribution significantly differs from that of the training set. To address these challenges, researchers and practitioners have developed several strategies to facilitate improved outcomes during inference.

One effective method is to use the moving averages of the statistics collected during training rather than relying on batch statistics. By employing the running mean and variance, the model normalizes every input the same way at test time, regardless of the batch size or of the other examples in the batch. It is also vital that the moving averages are updated throughout training, so that they reflect the activations produced by the final weights.

Another approach is to replace batch normalization with a normalization layer that does not use batch-level statistics, such as Layer Normalization or Instance Normalization. These alternatives can be particularly beneficial for tasks involving sequential data or images, where batch-level statistics are unreliable or unnecessary. For instance, Layer Normalization normalizes across the features of each individual example, so its output does not depend on the batch at all, which is advantageous with small batch sizes and makes training and test behavior identical.
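A minimal sketch of the Layer Normalization computation is shown below. It omits the learnable gain and bias that such layers usually include, and the function name and `eps` value are assumptions made for this example.

```python
import math

def layer_norm(example, eps=1e-5):
    """Normalize one example across its own features; no batch statistics."""
    n = len(example)
    mean = sum(example) / n
    var = sum((v - mean) ** 2 for v in example) / n
    return [(v - mean) / math.sqrt(var + eps) for v in example]

example = [1.0, 2.0, 3.0, 6.0]

# Processed alone.
alone = layer_norm(example)

# Processed as the first row of a batch that also holds a very different example.
batch = [example, [100.0, -100.0, 50.0, 0.0]]
in_batch = [layer_norm(row) for row in batch][0]
```

Because the statistics come from the example itself, `alone` and `in_batch` are identical, and there is no separate test-time mode to manage.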

Moreover, one might consider replacing batch normalization altogether with group normalization, which divides the channels into groups and normalizes within those groups. This method has shown promise in both training and test settings, particularly for small batch sizes, as it reduces dependence on the statistics of the entire batch.
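The grouping idea can be sketched as follows. This is a simplification: each channel is represented by a single value, whereas for images the statistics of a group are computed over all channels in the group and all spatial positions. The function name, the `num_groups` parameter, and the omission of learnable scale and shift are choices made for this example.

```python
import math

def group_norm(channels, num_groups, eps=1e-5):
    """Normalize one example's channel values within consecutive channel groups."""
    if len(channels) % num_groups != 0:
        raise ValueError("number of channels must be divisible by num_groups")
    size = len(channels) // num_groups
    out = []
    for start in range(0, len(channels), size):
        group = channels[start:start + size]
        mean = sum(group) / size
        var = sum((v - mean) ** 2 for v in group) / size
        out.extend((v - mean) / math.sqrt(var + eps) for v in group)
    return out

# Four channels split into two groups with very different scales.
channels = [1.0, 3.0, 10.0, 30.0]
normalized = group_norm(channels, num_groups=2)
```

Each group is normalized with its own mean and variance, so both groups end up close to -1 and 1 despite their different scales, and no statistic depends on other examples in the batch.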

Lastly, regularization techniques such as dropout can help the model generalize, but they do not remove the mismatch between batch statistics and running statistics, so they complement the strategies above rather than replace them. Applied together, these strategies can significantly enhance model performance and lead to more reliable predictions at test time.

Empirical Evidence and Case Studies

Batch normalization (BN) has become a critical technique for enhancing the performance of deep learning models. Numerous empirical studies have explored the effect of batch normalization statistics at test time, in particular the use of moving averages of batch statistics instead of mini-batch statistics. The original paper by Ioffe and Szegedy already specified that inference should normalize with population estimates of the mean and variance, tracked as moving averages during training, so that each prediction depends only on its input and not on the rest of the batch.

In practical applications, case studies demonstrate that batch normalization can markedly improve accuracy when deployed across various domains. For instance, a study analyzing convolutional neural networks (CNNs) for image recognition tasks showcased that models leveraging batch normalization consistently outperformed their non-normalized counterparts. The addition of BN not only boosted validation accuracy but also facilitated faster convergence rates, underscoring its significance in real-world applications.

Furthermore, other empirical evidence indicates that the degree of impact may differ depending on the architecture and the complexity of the dataset. In a comprehensive analysis involving recurrent neural networks (RNNs), researchers found that batch normalization led to improved generalization in more complex datasets, while simpler datasets did not exhibit a marked difference. This variance highlights the necessity of tailoring batch normalization approaches to specific model types and datasets.

Overall, empirical studies indicate that the statistics used at test time can significantly influence a model’s performance. Documentation of these effects across various contexts reinforces the importance of handling batch normalization correctly and serves as a guide for practitioners aiming to maximize their models’ predictive capacities in real-world settings.

Conclusion: Best Practices for Batch Normalization

In the realm of machine learning, particularly in training neural networks, the implementation of batch normalization has emerged as a pivotal technique. It aids in stabilizing the learning process and significantly influences model performance at test time. Proper management of the batch normalization statistics during both the training and testing phases is vital to achieve optimal results.

One of the key takeaways is ensuring that the model accumulates accurate statistics throughout the training phase. The running averages of the mean and variance are updated from each mini-batch, and a momentum (or decay) setting controls how heavily recent batches are weighted. Weighting them heavily makes the estimates noisy when batches are small, while weighting them lightly makes the estimates lag behind the current weights. Practitioners should choose this setting so that fluctuations are smoothed out while the averages still reflect the activations of the final model, which helps the model generalize to unseen data.

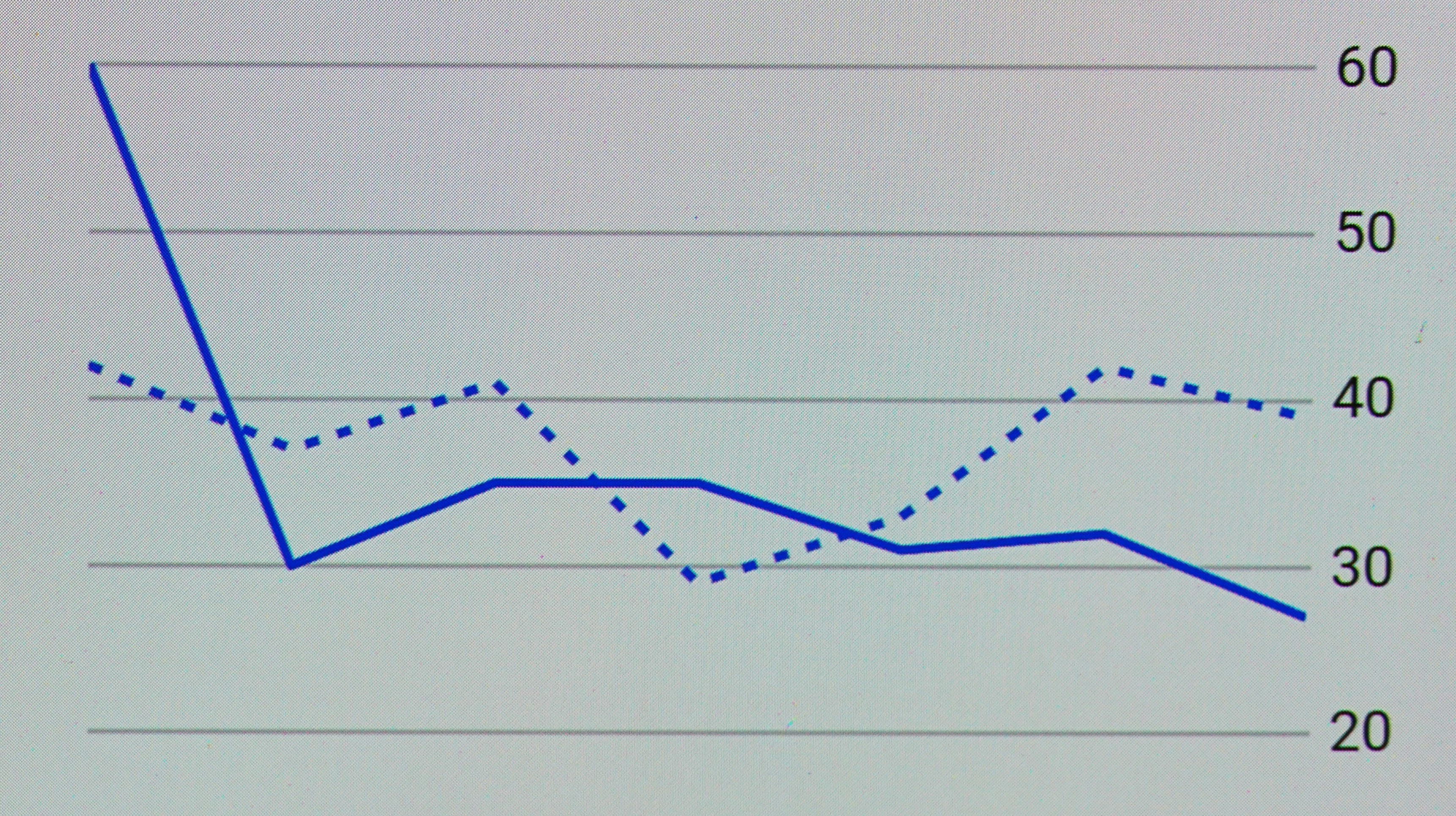

Another best practice is to compare the model’s test performance when normalizing with running statistics against its performance when normalizing with batch statistics. A large gap between the two can indicate that the running averages are poorly estimated. Practitioners should also check the effect of the test batch size. When the layers use running statistics, predictions do not depend on the batch size at all, so any change in the outputs as the batch size varies indicates that a layer is still normalizing with batch statistics.

Finally, maintaining proper documentation and consistency in the implementation of batch normalization parameters across different models is essential. This ensures clarity and allows for reproducibility in experiments. By adhering to these best practices, machine learning practitioners can optimize the advantages of batch normalization, leading to more robust models that perform reliably at test time.

Further Reading and Resources

For those who wish to deepen their understanding of batch normalization and its statistical implications during the testing phase, a variety of resources are available. These materials encompass research papers, tutorials, and online courses that delve into both the theoretical aspects and practical implementations of batch normalization.

A seminal paper by Sergey Ioffe and Christian Szegedy titled “Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift” provides foundational insights into the mechanism of batch normalization. This paper is essential reading for comprehending how batch normalization influences model performance, particularly during training versus testing scenarios.

In addition to primary literature, various online platforms offer courses and tutorials dedicated to deep learning. Websites like Coursera and edX frequently include modules that cover batch normalization, illustrating its role through hands-on coding examples and real-world applications. Specifically, courses focused on deep learning frameworks such as TensorFlow and PyTorch often address batch normalization’s significance, including its implementation nuances and performance effects.

Furthermore, communities like GitHub host numerous repositories where practitioners share their implementations and findings related to batch normalization. By exploring these projects, users can observe how different configurations affect model accuracy and stability during testing.

For a more interactive learning experience, forums such as Stack Overflow and specialized AI discussion boards provide platforms for practitioners to ask questions and share their insights regarding batch normalization. Engaging with these communities can yield practical advice and innovative solutions to specific challenges encountered during model testing.

Collectively, these resources will empower readers to gain a well-rounded comprehension of batch normalization, equipping them with the knowledge to apply these concepts in their own model evaluations effectively.