Understanding Loss Curves in Machine Learning

Loss curves are essential tools in machine learning for evaluating a model’s performance over the course of training. They plot the value of the loss function against training iterations or epochs. The loss function quantifies how far a model’s predictions fall from the expected outcomes, so lower values indicate a better fit, and it provides the signal used to adjust the model during training. By analyzing loss curves, practitioners can identify whether a model is underfitting, overfitting, or achieving a good balance between the two.

Significance of Phase Transitions

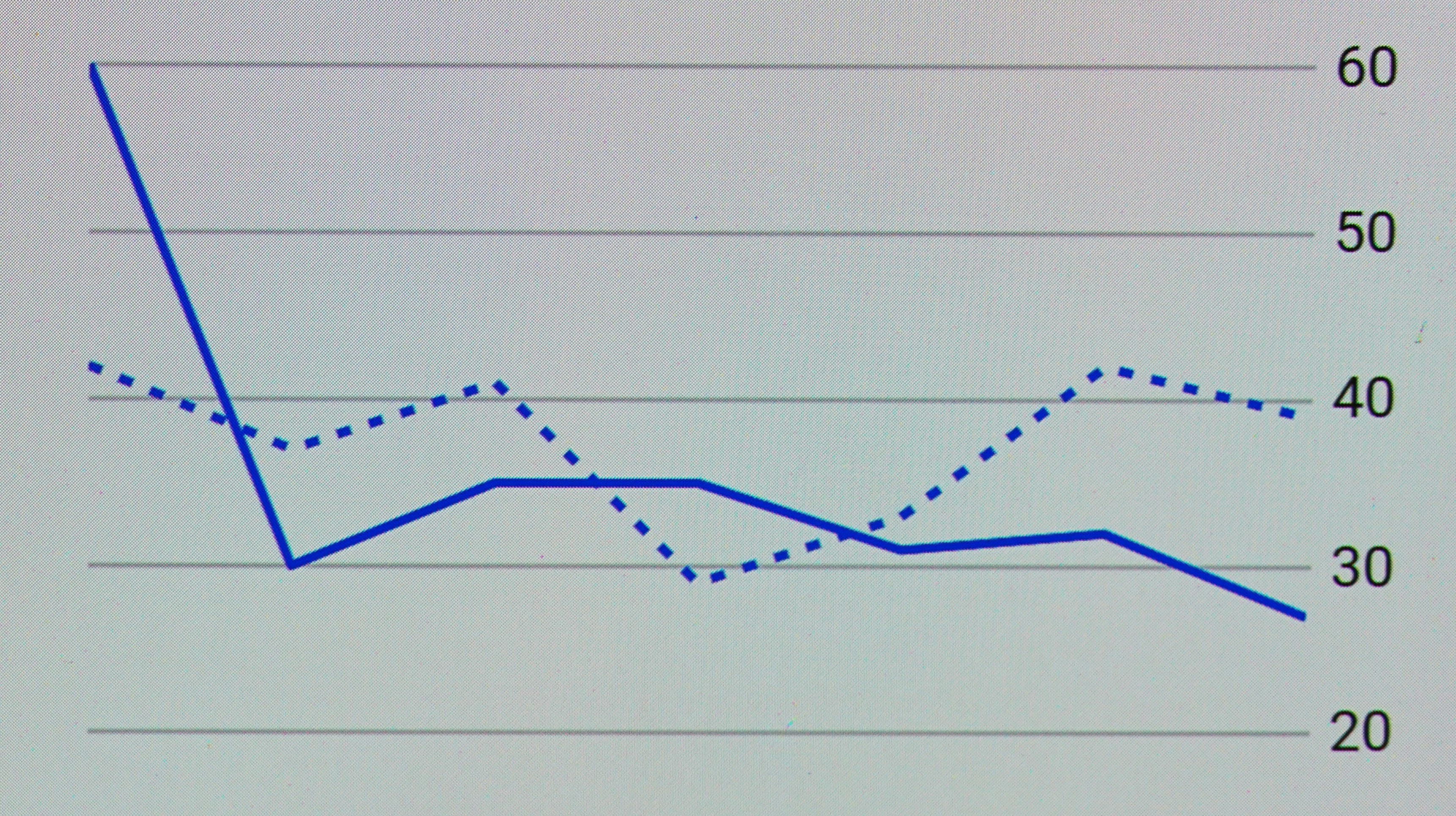

Phase transitions encompass notable changes within the loss curves, indicating shifts in training dynamics. Such transitions may occur when a model moves from learning basic patterns in data to capturing more complex relationships, often resembling a fundamental change in the model’s learning behavior. Recognizing these transitions can illuminate when a model is improving, plateauing, or even degrading in performance.

Evaluating Model Performance

Analyzing loss curves with phase transitions in mind makes a model’s performance metrics easier to act on. For instance, a steadily decreasing training loss suggests that the model is learning from the training dataset. In contrast, a rising loss or a prolonged plateau may indicate that the training process requires adjustment, such as changing the learning rate or adopting a different model architecture.
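One way to make this reading of a curve concrete is to compare the average of the most recent loss values with the average of the values just before them. The sketch below is a minimal illustration, not a standard diagnostic: the function name `loss_trend`, the `window` size, and the `tolerance` threshold are all assumptions introduced here, and the three curves are synthetic.

```python
from statistics import mean


def loss_trend(losses, window=5, tolerance=0.01):
    """Label the recent trend of a loss curve.

    Compares the mean of the last `window` values with the mean of the
    `window` values before them. Both defaults are illustrative only.
    """
    if len(losses) < 2 * window:
        raise ValueError("need at least 2 * window loss values")
    previous = mean(losses[-2 * window:-window])
    recent = mean(losses[-window:])
    # Relative change, guarded against division by zero.
    change = (recent - previous) / max(abs(previous), 1e-12)
    if change < -tolerance:
        return "decreasing"
    if change > tolerance:
        return "increasing"
    return "plateau"


# Synthetic curves, invented for illustration.
learning = [1.0 / (1 + 0.3 * step) for step in range(20)]
stalled = [0.5] * 20
diverging = [0.5 + 0.05 * step for step in range(20)]

for name, curve in [("learning", learning), ("stalled", stalled), ("diverging", diverging)]:
    print(name, "->", loss_trend(curve))
```

Real curves are noisy, so in practice the window and tolerance would need to be chosen per problem, and a label of "plateau" or "increasing" is only a prompt to look more closely.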

Implications for Interpretation

Understanding phase transitions within loss curves is crucial for interpreting a model’s behavior throughout its training journey. This knowledge assists in making informed decisions concerning hyperparameter tuning and model refinements. Furthermore, it aids in diagnosing issues related to data quality, model complexity, and generalization capabilities, thus enhancing overall model performance in practical applications.

The Nature of Phase Transitions

Phase transitions are a fundamental concept in both physics and data analysis, illustrating how systems undergo abrupt changes in behavior under varying conditions. In physics, a phase transition refers to the transformation from one state of matter to another, such as solid to liquid or liquid to gas, often characterized by specific temperature and pressure thresholds. Similarly, in the realm of data analysis, phase transitions imply sudden shifts in the dynamics of loss curves, indicating a critical point in training algorithms, particularly in machine learning.

The essence of a phase transition lies in the idea that a system can exist in different states and that these states can abruptly change when key parameters cross certain values. For example, the internal dynamics of neural networks during training may exhibit a phase transition when the learning rate surpasses a threshold, leading to significant changes in performance metrics. This phenomenon can be attributed to the complex interplay of various factors, including overfitting, underfitting, and the optimization landscape as it evolves through iterations.
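One setting where such a learning-rate threshold can be derived exactly is plain gradient descent on a one-dimensional quadratic loss. This is a toy model chosen for illustration, not a description of real neural network training; the symbols $a$ (curvature), $\eta$ (learning rate), and $\theta_t$ (parameter after $t$ steps) are assumptions introduced here.

```latex
L(\theta) = \tfrac{a}{2}\,\theta^{2}, \quad a > 0
\qquad\Longrightarrow\qquad
\theta_{t+1} = \theta_t - \eta\,L'(\theta_t) = (1 - \eta a)\,\theta_t ,
\qquad
\theta_t = (1 - \eta a)^{t}\,\theta_0 .
```

The iterates shrink toward the minimum exactly when $|1 - \eta a| < 1$, that is, when $0 < \eta < 2/a$. At $\eta = 2/a$ they oscillate without decaying, and above it they grow without bound, so the qualitative behavior changes abruptly as $\eta$ crosses that value.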

By analyzing loss curves, researchers can identify specific points at which a phase transition occurs, allowing for more efficient model tuning and improving predictive performance. In this context, understanding the theory of phase transitions not only enhances our comprehension of system behavior but also offers insights into optimizing machine learning models. The analogies drawn from physics to data analysis provide a valuable framework for examining how abrupt changes can impact the effectiveness of computational algorithms.
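As a rough illustration of locating such a point, the sketch below scans every possible split of a loss curve into two segments, approximates each segment by its mean, and picks the split with the smallest total squared error. This is a simple single-change-point heuristic swapped in for illustration, not a method taken from the text; it assumes the curve is roughly flat on either side of one abrupt change, which real curves often are not. The function name `find_change_point` and the synthetic curve are hypothetical.

```python
def find_change_point(losses):
    """Return the index k that best splits the curve into two flat segments.

    Each segment is approximated by its own mean; the split with the
    smallest total squared error wins. Prefix sums keep the scan linear.
    """
    n = len(losses)
    if n < 4:
        raise ValueError("need at least 4 loss values")
    prefix = [0.0]
    prefix_sq = [0.0]
    for value in losses:
        prefix.append(prefix[-1] + value)
        prefix_sq.append(prefix_sq[-1] + value * value)

    def segment_error(start, end):
        # Sum of squared deviations from the mean of losses[start:end].
        count = end - start
        total = prefix[end] - prefix[start]
        total_sq = prefix_sq[end] - prefix_sq[start]
        return total_sq - total * total / count

    best_k, best_error = None, float("inf")
    for k in range(2, n - 1):  # keep at least 2 points on each side
        error = segment_error(0, k) + segment_error(k, n)
        if error < best_error:
            best_k, best_error = k, error
    return best_k


# Synthetic curve: a high plateau followed by a sudden drop at step 30.
curve = [2.0 + 0.01 * (i % 3) for i in range(30)] + [0.5 + 0.01 * (i % 3) for i in range(30)]
print("estimated change point:", find_change_point(curve))
```

For curves with a steady downward trend or several transitions, this heuristic would need to be replaced by something more careful, such as fitting sloped segments or allowing multiple splits.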

In summary, the study of phase transitions bridges the disciplines of physics and data science, facilitating a deeper understanding of abrupt changes in behavior within various systems. This cross-disciplinary approach could pave the way for new methodologies in improving model performance and efficiency in complex data-driven tasks.

Mathematical Underpinnings of Loss Curves

In the realm of machine learning, loss functions serve as critical components that quantify the discrepancy between predicted outputs and actual targets. The mathematical formulation of loss functions can take various forms, including mean squared error, cross-entropy, and hinge loss, each underpinned by distinct mathematical principles. These functions determine how well a model is performing and guide the optimization process during training.
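The three losses named above can be written out in a few lines each. The sketch below uses plain Python rather than any particular library; the function names and the label conventions (labels in {0, 1} for cross-entropy, labels in {-1, +1} for hinge loss) are assumptions made for this example.

```python
import math


def mean_squared_error(targets, predictions):
    """Average squared difference; typical for regression."""
    return sum((t - p) ** 2 for t, p in zip(targets, predictions)) / len(targets)


def binary_cross_entropy(targets, probabilities, eps=1e-12):
    """Average negative log-likelihood for labels in {0, 1}."""
    total = 0.0
    for t, p in zip(targets, probabilities):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(targets)


def hinge_loss(targets, scores):
    """Average margin loss for labels in {-1, +1}."""
    return sum(max(0.0, 1.0 - t * s) for t, s in zip(targets, scores)) / len(targets)


print(mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
print(binary_cross_entropy([1, 0], [0.5, 0.5]))
print(hinge_loss([1, -1], [2.0, 0.5]))
```

Each function returns a single number per batch of examples, and it is that number, recorded over iterations or epochs, that forms the loss curve.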

The behavior of loss curves can be further understood through the lens of calculus and optimization. The loss can be viewed as a function of the model parameters, which are adjusted during training via techniques such as gradient descent. This iterative process relies on the gradient, the vector of first partial derivatives of the loss with respect to the parameters. The gradient points in the direction of steepest increase, so parameters are moved in the opposite direction to reduce the loss. Understanding the properties of loss functions, particularly their convexity, is essential in identifying phase transitions in the loss landscape.
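To make the iterative process concrete, here is a minimal sketch of gradient descent fitting a single weight `w` in the model `y = w * x` under mean squared error. The data, the function name `fit_slope`, and the default `learning_rate` and `steps` are assumptions chosen so the example converges; this loss is convex, so it shows the simplest case rather than the non-convex landscapes of deep networks.

```python
def fit_slope(xs, ys, learning_rate=0.05, steps=50):
    """Fit y = w * x by gradient descent on mean squared error.

    Returns the final weight and the loss recorded before every update.
    """
    w = 0.0
    n = len(xs)
    history = []
    for _ in range(steps):
        loss = sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / n
        history.append(loss)
        # dL/dw = (2 / n) * sum((w * x - y) * x)
        gradient = 2.0 * sum((w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= learning_rate * gradient  # step against the gradient
    return w, history


# Synthetic data generated with a true slope of 2.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [0.0, 2.0, 4.0, 6.0]
w, history = fit_slope(xs, ys)
print(f"fitted slope: {w:.6f}")
print(f"loss at first step: {history[0]:.4f}, at last step: {history[-1]:.3e}")
```

The list `history` is the loss curve for this run: plotting it against the step index gives the kind of curve discussed throughout this post.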

Phase transitions in loss curves refer to significant and abrupt changes in behavior as model parameters are varied. These transitions often arise due to changes in optimization dynamics or the complexity of the model. For instance, as a model becomes more complex—adding layers in a neural network or increasing the number of parameters—it may encounter regions in the parameter space where the loss landscape transforms from smooth and convex to highly non-convex. Such shifts can influence the convergence properties of optimization algorithms and the generalization capabilities of the model.

To mathematically characterize these transitions, researchers employ techniques from statistical mechanics and topology, which allow for a deeper understanding of critical points and bifurcations in loss functions. By examining the relationships between model parameters and corresponding loss values, one can extract critical insights that elucidate the dynamics of phase transitions in training loss curves. The interplay between these mathematical properties sheds light on the broader implications for model optimization and performance.

Factors Influencing Phase Transitions in Loss Curves

Phase transitions in loss curves are intricate phenomena influenced by a plethora of factors. Among these, data complexity stands as a predominant consideration. Complex datasets often contain a vast array of features, some of which may be significantly more informative than others. This variability in data structure can result in differing loss behaviors as the model attempts to learn the underlying patterns. When training a model, especially deep learning architectures, the interplay between the complexity of the dataset and the ability of the model to capture these intricacies can lead to noticeable shifts in loss trajectories.

Model architecture is another critical factor. Different architectures possess varying capacities to generalize from training data. For instance, deeper networks may experience phase transitions differently compared to shallower ones, particularly when handling the same dataset. Architectural choices such as the number of layers, types of activation functions, and network connectivity could alter the model’s learning dynamics, directly impacting the observed loss curve behavior.

Learning rates play a crucial role in the dynamics of training. A learning rate that is too high can produce erratic or diverging loss values and abrupt transitions, while a learning rate that is too low may result in prolonged training without significant improvement in the loss. It is essential to calibrate the learning rate appropriately, as it can either facilitate or hinder the model’s progress through the various phases of learning.
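The effect is easy to reproduce on a toy problem. The sketch below runs gradient descent on the quadratic loss `0.5 * curvature * theta**2` with three learning rates. The specific values are assumptions chosen for illustration; for this particular loss, gradient descent is known to diverge once the learning rate exceeds `2 / curvature`, which is 1.0 here.

```python
def run_gradient_descent(learning_rate, steps=30, start=1.0, curvature=2.0):
    """Minimise L(theta) = 0.5 * curvature * theta**2 and record the loss."""
    theta = start
    losses = []
    for _ in range(steps):
        losses.append(0.5 * curvature * theta ** 2)
        theta -= learning_rate * curvature * theta  # gradient is curvature * theta
    return losses


too_low = run_gradient_descent(0.001)   # barely moves in 30 steps
moderate = run_gradient_descent(0.3)    # converges quickly
too_high = run_gradient_descent(1.1)    # above the 2 / curvature threshold

for name, curve in [("too low", too_low), ("moderate", moderate), ("too high", too_high)]:
    print(f"{name:>8}: first={curve[0]:.3f} last={curve[-1]:.3e}")
```

On real models the threshold is not known in advance and varies across the loss landscape, which is one reason learning rates are usually tuned empirically or scheduled over the course of training.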

Lastly, the choice of optimization algorithm is instrumental in shaping the loss curve. Different optimization strategies, such as stochastic gradient descent, Adam, or RMSprop, adopt varying approaches to traverse the loss landscape. Each algorithm can influence the model’s convergence patterns and the occurrence of phase transitions, thus warranting careful selection based on the specific characteristics of the training problem.
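To see how two update rules can trace different loss curves on the same problem, the sketch below runs plain gradient descent and Adam on a small two-parameter quadratic. Note the swap: the gradients here are exact rather than stochastic, so the first function is the deterministic core of stochastic gradient descent, not SGD itself. The loss, starting point, and learning rate are assumptions for illustration; the Adam defaults (`beta1=0.9`, `beta2=0.999`, `eps=1e-8`) follow the commonly used values.

```python
import math


def loss(theta):
    # Elongated bowl: the second coordinate is 25 times steeper than the first.
    return 0.5 * (theta[0] ** 2 + 25.0 * theta[1] ** 2)


def grad(theta):
    return [theta[0], 25.0 * theta[1]]


def run_gd(theta, learning_rate=0.03, steps=100):
    """Plain gradient descent; records the loss before every update."""
    theta = list(theta)
    history = []
    for _ in range(steps):
        history.append(loss(theta))
        g = grad(theta)
        theta = [t - learning_rate * gi for t, gi in zip(theta, g)]
    return history


def run_adam(theta, learning_rate=0.03, steps=100, beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam update with bias-corrected first and second moment estimates."""
    theta = list(theta)
    m = [0.0] * len(theta)
    v = [0.0] * len(theta)
    history = []
    for step in range(1, steps + 1):
        history.append(loss(theta))
        g = grad(theta)
        m = [beta1 * mi + (1 - beta1) * gi for mi, gi in zip(m, g)]
        v = [beta2 * vi + (1 - beta2) * gi * gi for vi, gi in zip(v, g)]
        m_hat = [mi / (1 - beta1 ** step) for mi in m]
        v_hat = [vi / (1 - beta2 ** step) for vi in v]
        theta = [t - learning_rate * mh / (math.sqrt(vh) + eps)
                 for t, mh, vh in zip(theta, m_hat, v_hat)]
    return history


start = [2.0, 1.0]
gd_history = run_gd(start)
adam_history = run_adam(start)
print(f"gradient descent: {gd_history[0]:.3f} -> {gd_history[-1]:.3e}")
print(f"adam:             {adam_history[0]:.3f} -> {adam_history[-1]:.3e}")
```

Which curve looks better depends on the problem and the hyperparameters, so this comparison should not be read as a ranking of the two methods.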

Empirical Observations of Phase Transitions

Understanding phase transitions in loss curves is vital for gaining insights into the behavior of systems under varying conditions. Numerous empirical studies have demonstrated clear phase transitions in loss curves, with implications for fields such as machine learning, physics, and material science. These studies often provide a basis for theoretical models and highlight the importance of observing these transitions in real-world applications.

A notable example can be found in the domain of machine learning, where researchers have documented phase transitions related to overfitting and generalization performance. In these studies, a phase transition is observed when the complexity of the model is increased beyond a certain threshold, leading to a sudden degradation in generalization performance. Such observations underscore the balance required between model complexity and data sufficiency in training processes, especially in high-dimensional settings.

In material science, phase transitions have been explored through loss curves representing the energy states of materials under stress. For instance, by applying pressure to certain materials, a transition in their microstructure can be observed that correlates with abrupt shifts in energy loss. This illustrates how empirical observations help validate theoretical predictions and provide practical insights for developing new materials with tailored properties.

Moreover, theoretical studies simulate various conditions to elucidate the nature of these phase transitions. Such analyses show how parameters interact nonlinearly, producing critical points where slight changes can have significant consequences for system behavior. Validating these theories through experiments reinforces the understanding of phase transitions and informs best practices across disciplines.

Collectively, these empirical observations deepen the understanding of phase transitions in loss curves and support both theoretical advances and practical applications in various scientific fields.

The Role of Overfitting and Underfitting

Phase transitions in loss curves are closely linked to the concepts of overfitting and underfitting. These phenomena play a significant role in how well a model can generalize to unseen data. Overfitting occurs when a model learns the noise within the training dataset rather than the underlying distribution. This results in excellent performance on training data but poor generalization to new data. In the loss curves, training loss typically keeps decreasing, while validation loss falls at first and then begins to rise as the model captures irrelevant patterns, so the gap between the two widens.

Conversely, underfitting arises when a model is too simplistic to capture the underlying structure of the data. This typically results in high bias, and the model fails to learn significant patterns, manifesting in consistently high loss values. The loss curve in this scenario tends to stabilize at a relatively high level, indicating the model’s inability to improve its predictive capabilities. While both overfitting and underfitting lead to substantial deterioration in model performance, they differ fundamentally in their manifestations within loss curves.

The interaction between these two extremes can result in interesting phase transitions within loss curves. For instance, as model complexity increases, one may initially observe a decrease in loss, indicating improved learning of essential data characteristics. However, after reaching a critical point, further increases in complexity may lead to overfitting, causing a notable increase in validation loss even as training loss continues to fall, thus demonstrating a phase transition. These transitions highlight the complexity involved in finding the right balance between a model’s capability to learn and its ability to generalize effectively. Ultimately, understanding the role of overfitting and underfitting is crucial for optimizing models and achieving desired predictive performance.
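These two signatures can be checked mechanically when both a training and a validation loss curve are available. The sketch below is a rough heuristic under stated assumptions, not an established test: `high_loss` (what counts as a poor training loss) and `patience` (how many epochs validation loss must remain above its best value) are hypothetical, problem-specific settings, and the example curves are invented.

```python
def diagnose_fit(train_losses, val_losses, high_loss=0.5, patience=3):
    """Return a rough label for a run and the epoch of best validation loss."""
    if len(train_losses) != len(val_losses) or not train_losses:
        raise ValueError("curves must be non-empty and equally long")
    best_epoch = min(range(len(val_losses)), key=val_losses.__getitem__)
    epochs_since_best = len(val_losses) - 1 - best_epoch
    # Training loss never got low: the model is not fitting the data.
    if train_losses[-1] > high_loss:
        return "underfitting", best_epoch
    # Validation loss stopped improving while training loss kept falling.
    if epochs_since_best >= patience and train_losses[-1] < train_losses[best_epoch]:
        return "overfitting", best_epoch
    return "no clear problem", best_epoch


# Synthetic curves, invented for illustration.
overfit_train = [1.0, 0.7, 0.5, 0.35, 0.25, 0.18, 0.12, 0.08]
overfit_val = [1.1, 0.8, 0.62, 0.55, 0.57, 0.63, 0.70, 0.78]
underfit_train = [1.0, 0.9, 0.85, 0.84, 0.84]
underfit_val = [1.05, 0.95, 0.9, 0.89, 0.89]

print(diagnose_fit(overfit_train, overfit_val))
print(diagnose_fit(underfit_train, underfit_val))
```

The epoch returned alongside the label is where validation loss was lowest, which is the point an early-stopping rule would typically restore.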

Practical Implications of Phase Transitions

Understanding phase transitions in loss curves offers valuable insights for machine learning practitioners, especially in guiding essential processes such as model selection, hyperparameter tuning, and the deployment of models in real-world applications. A phase transition typically indicates a significant change in the behavior of a model with respect to variations in parameters, which can have direct consequences on the model’s performance.

In model selection, recognizing the presence of a phase transition allows practitioners to differentiate between models that may appear similar at first glance but behave differently under specific conditions. For example, a smooth transition in a model’s loss curve can indicate stability and predictability in performance, essential features for applications that require consistent outcomes, such as medical diagnosis or financial forecasting.

Hyperparameter tuning is another area where insights from phase transitions are beneficial. By analyzing loss curves, practitioners can identify critical thresholds where the model’s behavior changes drastically. This can inform the selection of hyperparameters that position the model advantageously within a stable phase, avoiding configurations that may lead to erratic performance or overfitting. Understanding these transitions helps in systematically narrowing down the hyperparameter search space, leading to more efficient tuning processes.
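One simple way to look for such a threshold is to sweep a single hyperparameter, record the final loss for each setting, and find the adjacent pair of settings between which the loss changes most. The sketch below assumes the sweep has already been run; the function name `sharpest_change` is hypothetical and the sweep results are invented for illustration, not measured.

```python
def sharpest_change(settings, final_losses):
    """Return the adjacent pair of settings with the largest loss jump.

    `settings` must be sorted, and `final_losses[i]` is the loss reached
    with `settings[i]`.
    """
    if len(settings) != len(final_losses) or len(settings) < 2:
        raise ValueError("need two or more matching settings and losses")
    jumps = [abs(final_losses[i + 1] - final_losses[i])
             for i in range(len(settings) - 1)]
    i = max(range(len(jumps)), key=jumps.__getitem__)
    return settings[i], settings[i + 1], jumps[i]


# Hypothetical sweep results, invented for illustration.
learning_rates = [0.001, 0.003, 0.01, 0.03, 0.1, 0.3]
final_losses = [0.42, 0.31, 0.24, 0.22, 0.25, 3.80]

low, high, jump = sharpest_change(learning_rates, final_losses)
print(f"largest change between {low} and {high} (jump of {jump:.2f})")
```

A follow-up sweep restricted to the interval between the two returned settings would narrow down where the behavior changes, while settings well below it are candidates for the stable phase.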

Moreover, the implications of phase transitions extend into the deployment phase. When practitioners are aware of how certain modifications affect model performance, they can make informed decisions, ensuring that the deployed model remains robust under varying circumstances. This knowledge helps in preparing the model for possible shifts in data distributions, which can occur post-deployment.

Overall, a thorough understanding of phase transitions in loss curves equips machine learning practitioners with essential tools to enhance model reliability and performance, supporting better decision-making throughout the machine learning lifecycle.

Future Research Directions

As the field of machine learning continues to evolve, understanding phase transitions in loss curves presents a fertile area for future research. Several key questions remain unanswered, providing opportunities for further investigation. One primary avenue of inquiry is the precise mechanisms that govern phase transitions during training. Researchers could explore how different hyperparameters, such as learning rates or batch sizes, influence these transitions in loss curves. Such investigations could lead to more effective training protocols and better overall model performance.

Another important aspect worth exploring is the role of various data distributions on phase transitions. Different datasets may exhibit unique characteristics, and understanding how these attributes affect the dynamics of training could yield significant insights. By conducting case studies on a range of datasets, researchers can compare the observed phase transitions and determine common patterns or anomalies across different scenarios.

Additionally, the integration of theoretical frameworks with empirical data may enhance our understanding of phase transitions. Employing advanced mathematical models can help clarify the relationships between loss functions and performance metrics. Researchers should consider formulating new theoretical models or refining existing ones to reflect empirical findings more accurately.

Furthermore, the utilization of visualization techniques could prove beneficial in elucidating phase transitions in loss curves. By employing advanced visualization methods, researchers can more clearly see the intricacies of these transitions and potentially uncover nuances previously overlooked. Techniques such as dimensionality reduction or advanced data representation may facilitate deeper insights into the nature of loss landscapes and their implications for model optimization.

Pursuing these research directions will likely require collaboration across disciplines. Engaging with experts in fields such as statistics, mathematics, and computer science allows for a multidisciplinary approach to the complexities of phase transitions in loss curves, ultimately leading to a richer understanding of this phenomenon.

Conclusion and Key Takeaways

Understanding phase transitions in loss curves is crucial for the effective training of machine learning models. Throughout this blog post, we have explored various facets of how these transitions manifest, particularly in the context of optimization and model performance. One significant finding is that recognizing the signs of phase transitions can enable practitioners to make informed decisions about when to adjust their training strategies. For instance, detecting a shift in the loss curve trajectory may indicate that a model has reached an optimal performance plateau or that it is entering a regime where further training is no longer beneficial.

Moreover, we discussed the implications of these phase transitions, highlighting their role in guiding hyperparameter tuning and improving the robustness of models. By critically assessing loss curves, data scientists can better identify overfitting or underfitting situations, thereby implementing timely interventions. This awareness not only enhances predictive accuracy but also contributes to the overall efficiency of the machine learning pipeline.

Furthermore, phase transitions serve as indicators of deeper underlying mechanisms at play during the training process. Understanding these transitions encourages practitioners to delve into the structural complexities of their models, fostering a more comprehensive approach to machine learning development. Future research and exploration into this area promise to yield valuable insights, which can further enhance our understanding and application of loss curves in diverse contexts.

In summary, recognizing and understanding phase transitions in loss curves is paramount for optimizing machine learning models. As the field evolves, continued investigation into these transitions will not only enrich practitioners’ skills but also support the advancement of innovative methodologies in machine learning.