Introduction to the Interpolation Regime

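In classical numerical analysis and statistics, interpolation means estimating unknown values that fall within the range of a discrete set of known data points. In modern machine learning the word has a related but narrower meaning: a model is said to interpolate its training data when it fits every training example exactly, so that its training error is zero. The interpolation regime is the range of model capacities (for example, numbers of parameters) in which such exact fits are possible, and the smallest capacity at which this happens is often called the interpolation threshold. Many over-parameterized models, such as large neural networks, reach this regime during fitting because training minimizes the error between predicted values and the actual outcomes in the training set.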

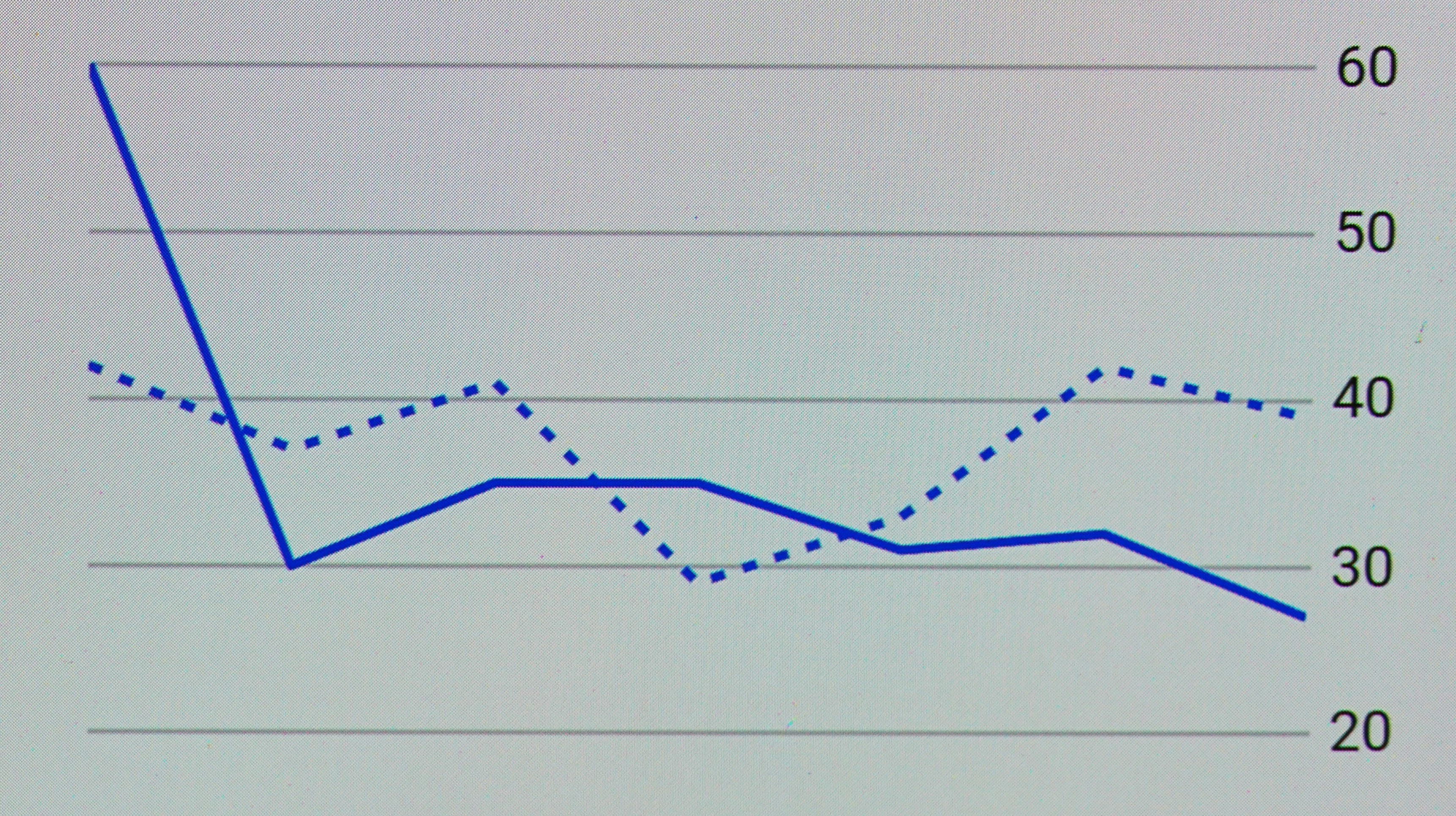

Two metrics are central when evaluating machine learning models: training error and test error. Training error measures how well the model fits the data it was trained on, while test error measures how well it generalizes to unseen data. Practitioners need to understand how these two metrics interact, especially around interpolation. The classical expectation is that once training error reaches zero, the model has overfit and test error can only get worse. A key empirical observation, often called double descent, contradicts this: under certain conditions, test error peaks near the interpolation threshold and then decreases again as capacity grows further into the interpolation regime.

This decrease highlights a surprising aspect of model behavior. Past the interpolation threshold, many different parameter settings fit the training data exactly, and the training algorithm implicitly chooses among them. One common explanation is that gradient-based training favors solutions that are small or smooth in an appropriate sense (for linear least squares started from zero, gradient descent converges to the minimum-norm interpolating solution). Extra capacity gives the algorithm more room to find such solutions, and these can generalize better than the barely interpolating fit at the threshold. Understanding this decrease matters for model selection, because it helps determine which models are likely to stay robust on data they have not seen.
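When a model has more parameters than training examples, infinitely many parameter vectors fit the training data exactly. The training procedure then decides which one is returned. The sketch below illustrates this for linear least squares on synthetic, made-up data (the sizes `n = 5` and `p = 20` are arbitrary assumptions). `np.linalg.lstsq` returns the minimum-norm interpolating solution. Adding any null-space direction gives another exact fit with a larger norm. Plain gradient descent started from zero converges to the minimum-norm solution. This is a toy linear illustration of implicit bias, not a model of deep networks.

```python
import numpy as np

# Synthetic, hypothetical data: fewer training points (n) than parameters (p),
# so the linear system X @ w = y has infinitely many exact solutions.
rng = np.random.default_rng(0)
n, p = 5, 20
X = rng.standard_normal((n, p))
y = rng.standard_normal(n)

# For an underdetermined system, lstsq returns the minimum-norm solution.
w_min, *_ = np.linalg.lstsq(X, y, rcond=None)

# Another interpolant: add a direction from the null space of X.
# With the default full_matrices=True, the last p - n rows of Vt span that null space.
_, _, Vt = np.linalg.svd(X)
w_other = w_min + 3.0 * Vt[-1]

# Plain gradient descent on 0.5 * ||X w - y||^2, started from zero.
w_gd = np.zeros(p)
lr = 1.0 / np.linalg.norm(X, 2) ** 2  # 1 / sigma_max^2 keeps the iteration stable
for _ in range(5000):
    w_gd -= lr * X.T @ (X @ w_gd - y)

print("both fit exactly:", np.allclose(X @ w_min, y), np.allclose(X @ w_other, y))
print("norms:", np.linalg.norm(w_min), np.linalg.norm(w_other))
print("GD matches min-norm:", np.allclose(w_gd, w_min))
```

Gradient descent from zero never leaves the row space of `X`, and the minimum-norm solution is the only exact fit in that space. That is why the two solutions coincide.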

Understanding the interpolation regime also clarifies the trade-off between model complexity and performance. As models approach this regime, practitioners must distinguish genuine improvements in test error from overfitting. Training error is zero throughout the regime, so this distinction can only be made by monitoring test (or validation) error. Understanding these nuances helps in choosing a model that performs well in practice.

Theoretical Foundations of Test Error

Test error is a critical metric for evaluating machine learning models. It measures the error a model makes on data it did not see during training, and so indicates how well the model generalizes. It is computed by comparing the model's predictions with the actual outcomes on a dataset reserved exclusively for testing. For regression this is typically the mean squared error, and for classification the misclassification rate. This evaluation estimates how well the model is likely to perform in real-world scenarios, which often differ from the training environment.
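The two most common ways of scoring predictions on a held-out test set take only a few lines. This is a minimal sketch. The helper names `mean_squared_error` and `error_rate` are our own, and the labels and predictions are made-up numbers.

```python
import numpy as np

def mean_squared_error(y_true, y_pred):
    """Regression test error: average squared difference between targets and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

def error_rate(y_true, y_pred):
    """Classification test error: fraction of misclassified examples (0-1 loss)."""
    return float(np.mean(np.asarray(y_true) != np.asarray(y_pred)))

# Made-up held-out labels and predictions, for illustration only.
mse = mean_squared_error([1.0, 2.0, 3.0], [1.5, 2.0, 2.0])  # (0.25 + 0 + 1) / 3
err = error_rate([0, 1, 1, 0], [0, 1, 0, 0])                 # 1 of 4 misclassified
print(mse, err)
```

The same functions give the training error when applied to predictions on the training data. Only the dataset they are applied to differs.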

There is an important distinction between training error and test error. Training error reflects performance on the data the model was trained on and is usually the lower of the two. Test error reflects performance on a separate dataset and serves as a benchmark for the model's ability to generalize. A major concern in model evaluation is overfitting. This occurs when a model fits the training data too closely, including its noise and random fluctuations, and ends up with low training error but substantially higher test error.
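A small synthetic experiment makes the gap between training and test error visible. The sketch below assumes a sine-shaped ground truth with Gaussian noise (an arbitrary choice) and fits polynomials of increasing degree to 12 evenly spaced training points. Each higher degree contains the lower ones, so training error can only go down, and the degree-11 polynomial passes through every training point. Its test error, however, is typically much larger than that of the cubic fit.

```python
import numpy as np

rng = np.random.default_rng(1)

def target(x):
    return np.sin(2 * np.pi * x)  # hypothetical ground-truth function

x_train = np.linspace(0.0, 1.0, 12)
y_train = target(x_train) + 0.2 * rng.standard_normal(12)
x_test = rng.uniform(0.0, 1.0, 1000)
y_test = target(x_test) + 0.2 * rng.standard_normal(1000)

results = {}
for degree in (1, 3, 11):
    coefs = np.polyfit(x_train, y_train, degree)
    train_mse = np.mean((np.polyval(coefs, x_train) - y_train) ** 2)
    test_mse = np.mean((np.polyval(coefs, x_test) - y_test) ** 2)
    results[degree] = (train_mse, test_mse)
    print(f"degree {degree:2d}: train MSE {train_mse:.4f}, test MSE {test_mse:.4f}")
```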

Interpolation shapes the relationship between training and test error in a distinctive way. In the sense used here, a model interpolates when it fits its training data exactly, which drives training error to zero. Classical reasoning treats this as the extreme of overfitting. Yet a well-designed model that interpolates can still achieve low and even decreasing test error, which indicates that it generalizes well. This illustrates the balance practitioners must strike: the goal is a model whose training error is low and whose test error is also as low as possible. These theoretical foundations inform how models should be trained and evaluated in practice.

Understanding Interpolation and Extrapolation

Interpolation and extrapolation are two fundamental concepts in numerical analysis and statistics, including regression modeling, and both are central to making predictions from existing data points. Here the terms carry their classical meaning, which concerns where a prediction falls relative to the data rather than whether the training data are fit exactly. Interpolation refers to estimating values within the range of a discrete set of known data points. For example, given temperature measurements taken every hour, interpolation would estimate the readings at half-hour intervals. This relies on the assumption that the underlying quantity varies continuously, so that the pattern between neighboring measurements is preserved.
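The half-hour example can be sketched with NumPy's `np.interp`, which performs piecewise-linear interpolation. The hourly readings below are invented for illustration. Linear interpolation is only the simplest choice, and smoother schemes such as splines follow the same idea.

```python
import numpy as np

# Hypothetical hourly readings: hour of day -> temperature in degrees C.
hours = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
temps = np.array([10.0, 12.0, 15.0, 14.0, 13.0])

# Estimate the half-hour values, all of which lie inside the observed range [0, 4].
half_hours = np.array([0.5, 1.5, 2.5, 3.5])
estimates = np.interp(half_hours, hours, temps)
print(estimates)  # each value is the midpoint of the two neighboring readings
```

`np.interp` does not extrapolate. For query points outside `[hours[0], hours[-1]]` it returns the first or last reading (or the `left`/`right` values if given), so predicting beyond the data needs an explicit model of the trend.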

Extrapolation, on the other hand, predicts values outside the range of the available data. For instance, predicting temperatures for hours after the last measurement is considerably harder. Extrapolation assumes that the trends and relationships seen in the data continue beyond the observed range. Nothing in the data can confirm this, so the accuracy of such predictions often diminishes. Errors can grow quickly as predictions move further from the known data points, because the model relies entirely on the validity of its assumptions.
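A noise-free toy example shows how extrapolation error grows. The sketch assumes the signal is `sin(2πx)` and that it is observed only on `[0, 1]`. It fits a cubic polynomial to the observations and compares the worst-case error inside that range with the worst-case error on `[1, 1.5]`, where no data were seen.

```python
import numpy as np

# Hypothetical smooth signal, observed only on [0, 1].
x_obs = np.linspace(0.0, 1.0, 20)
y_obs = np.sin(2 * np.pi * x_obs)
coefs = np.polyfit(x_obs, y_obs, 3)  # cubic least-squares fit to the observed range

x_inside = np.linspace(0.0, 1.0, 200)   # interpolation: within the data range
x_outside = np.linspace(1.0, 1.5, 200)  # extrapolation: beyond the last observation

inside_err = np.max(np.abs(np.polyval(coefs, x_inside) - np.sin(2 * np.pi * x_inside)))
outside_err = np.max(np.abs(np.polyval(coefs, x_outside) - np.sin(2 * np.pi * x_outside)))
print(f"max error inside: {inside_err:.3f}, outside: {outside_err:.3f}")
```

The cubic matches the signal reasonably well where it was fitted. Beyond `x = 1` its cubic term dominates and the error grows quickly.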

From a model-performance perspective, interpolation typically yields lower error than extrapolation. This is because interpolation operates within the observed data domain, where the established trends and relationships are more likely to hold, so the model's predictions there are generally more accurate. Understanding this distinction helps data scientists and analysts choose strategies for minimizing error in predictive models.

The Role of Model Complexity

Model complexity plays a crucial role in the performance of predictive algorithms, especially around the interpolation regime. As complexity increases, a model can fit the training data more and more closely until, at the interpolation threshold, it fits that data exactly. The level of complexity chosen therefore strongly affects both the model's ability to generalize and its overall accuracy.

Overfitting occurs when a model is too complex for the available data and captures noise or random fluctuations in the training set instead of the underlying data distribution. Such a model performs very well on training data but poorly on unseen data, so its test error increases. Conversely, a model that is too simple may fail to capture important patterns in the data, resulting in underfitting. This usually shows up as high bias: the model's predictions are systematically off, which hurts performance on both the training and test sets.

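The challenge lies in striking the right balance, often described as the bias-variance tradeoff. In the classical picture, increasing complexity lowers bias but raises variance, producing a U-shaped test error curve with its minimum at an intermediate complexity. The interpolation regime complicates this picture. Beyond the interpolation threshold, variance can fall again as capacity grows, so test error may decrease even though training error is already zero. When selecting model complexity, practitioners must consider the amount of training data available, the dimensionality of the input features, and the inherent variability (noise) in the data.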
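Bias and variance can be estimated by simulation when the ground truth is known. The sketch below assumes a sine-shaped true function, a noise level of 0.3, and 15 training points per draw (all arbitrary choices). It repeatedly draws a fresh training set and fits a polynomial of a given degree. It then measures how far the average prediction is from the truth (squared bias) and how much predictions scatter across training sets (variance). This only illustrates the classical tradeoff for polynomial fits. It does not reproduce the behavior beyond the interpolation threshold.

```python
import numpy as np

rng = np.random.default_rng(2)
x_grid = np.linspace(0.0, 1.0, 50)     # fixed evaluation points
f_true = np.sin(2 * np.pi * x_grid)    # hypothetical ground truth on the grid

def fit_and_predict(degree, n=15, noise=0.3):
    """Draw a new noisy training set, fit a polynomial, and predict on x_grid."""
    x = rng.uniform(0.0, 1.0, n)
    y = np.sin(2 * np.pi * x) + noise * rng.standard_normal(n)
    return np.polyval(np.polyfit(x, y, degree), x_grid)

def bias_variance(degree, trials=300):
    preds = np.array([fit_and_predict(degree) for _ in range(trials)])
    bias_sq = np.mean((preds.mean(axis=0) - f_true) ** 2)  # squared bias, averaged over the grid
    variance = np.mean(preds.var(axis=0))                  # spread across training sets
    return bias_sq, variance

results = {degree: bias_variance(degree) for degree in (1, 3, 9)}
for degree, (b2, var) in results.items():
    print(f"degree {degree}: bias^2 {b2:.3f}, variance {var:.3f}")
```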

Moreover, techniques such as regularization can be employed to manage model complexity effectively. By penalizing overly complex models, regularization allows the model to maintain a level of complexity that is sufficient for learning the underlying relationships within the data while avoiding the pitfalls of overfitting. In this way, controlling model complexity becomes essential for improving test error and enhancing the model’s generalization capabilities.

Empirical Observations in Machine Learning

Recent empirical studies have reported a notable decrease in test error within the interpolation regime across a range of machine learning tasks. The regime is typically identified by the point at which a model reaches zero training error, meaning it fits every training point exactly. Several research efforts have examined this phenomenon to understand its implications for model performance.
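The rise and fall of test error around the interpolation threshold can be reproduced in a small synthetic experiment. The sketch below is a toy built on assumptions, not the setup of any study mentioned here. It uses Gaussian inputs, a made-up nonlinear target `tanh(x · beta)`, and noisy training labels. The model is a random-features model: a fixed random ReLU layer with `p` units followed by a linear layer fitted with `np.linalg.lstsq`. For `p` below the number of training points `n_train`, this is ordinary least squares. For `p >= n_train`, it returns the minimum-norm solution that fits the training data exactly. In typical runs, test error spikes near `p = n_train` and falls again as `p` grows, while training error stays at (numerically) zero.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_train, n_test, noise = 10, 100, 1000, 0.3

# Hypothetical data: Gaussian inputs, a nonlinear target, noisy training labels.
beta = rng.standard_normal(d) / np.sqrt(d)
X_train = rng.standard_normal((n_train, d))
X_test = rng.standard_normal((n_test, d))
y_train = np.tanh(X_train @ beta) + noise * rng.standard_normal(n_train)
y_test = np.tanh(X_test @ beta)  # noise-free test targets

def relu_features(X, W, b):
    """Fixed random ReLU layer; only the linear read-out on top is fitted."""
    return np.maximum(X @ W + b, 0.0)

results = {}
for p in (20, 50, 90, 100, 110, 200, 500, 2000):  # number of random features
    W = rng.standard_normal((d, p)) / np.sqrt(d)
    b = rng.uniform(-1.0, 1.0, p)
    Phi_train, Phi_test = relu_features(X_train, W, b), relu_features(X_test, W, b)
    # Least squares for p < n_train; minimum-norm exact fit for p >= n_train.
    coef, *_ = np.linalg.lstsq(Phi_train, y_train, rcond=None)
    train_mse = np.mean((Phi_train @ coef - y_train) ** 2)
    test_mse = np.mean((Phi_test @ coef - y_test) ** 2)
    results[p] = (train_mse, test_mse)
    print(f"p = {p:4d}: train MSE {train_mse:.2e}, test MSE {test_mse:.3f}")
```

The spike at `p = n_train` comes from the square feature matrix being badly conditioned, so the exact fit amplifies the label noise. With many more features, the minimum-norm solution spreads its weight over many directions and fits the noise more gently.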

For instance, in image classification, models trained on standard benchmarks such as CIFAR-10 show significant reductions in test error as they move through the interpolation regime. One particular study reported that convolutional neural networks (CNNs), once trained to fit their training data, reached a test error close to the error observed on the validation set. This alignment suggests that the models were not merely memorizing the training data but were also generalizing to unseen data.

Similarly, in natural language processing, transformer models have shown sharp declines in test error as they come to interpolate their training data. Studies report that these models, when operating in the interpolation regime, capture complex linguistic patterns, which improves accuracy in tasks such as sentiment analysis and machine translation.

Another example comes from regression, where empirical studies have found that kernel-based methods, such as Support Vector Regression (SVR), keep test error low in the interpolation regime. By leveraging the kernel trick, these models can fit highly complex relationships in the data while still achieving low error at test time.
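Support Vector Regression uses an ε-insensitive loss, so in general it does not fit every training point exactly. The sketch below therefore swaps in a simpler kernel method that does interpolate: kernel "ridgeless" regression, which solves `K alpha = y` with a Gaussian (RBF) kernel. The kernel width `gamma = 50`, the 15 evenly spaced training inputs, and the noise level are hypothetical choices, picked so that the kernel matrix stays reasonably well conditioned.

```python
import numpy as np

def rbf_kernel(A, B, gamma=50.0):
    """Gaussian (RBF) kernel matrix with entries exp(-gamma * ||a - b||^2)."""
    sq_dists = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))

rng = np.random.default_rng(3)
X_train = np.linspace(0.0, 1.0, 15)[:, None]
y_train = np.sin(2 * np.pi * X_train[:, 0]) + 0.1 * rng.standard_normal(15)

# Kernel interpolation: coefficients that reproduce every training label exactly.
K = rbf_kernel(X_train, X_train)
alpha = np.linalg.solve(K, y_train)

# Predictions elsewhere are weighted sums of kernel functions centered on the training points.
X_test = np.linspace(0.0, 1.0, 200)[:, None]
y_pred = rbf_kernel(X_test, X_train) @ alpha
test_mse = np.mean((y_pred - np.sin(2 * np.pi * X_test[:, 0])) ** 2)
print("max training residual:", np.max(np.abs(K @ alpha - y_train)))
print("test MSE against the noise-free function:", test_mse)
```

Adding a small multiple of the identity to `K` before solving turns this into kernel ridge regression, which no longer interpolates the training data.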

These empirical observations across diverse machine learning domains underline the crucial relationship between the interpolation regime and test error reduction. By enhancing our understanding of this relationship, practitioners can better design models that not only minimize training error but also optimize test performance, leading to more reliable and robust machine learning systems.

How Capacity Affects Interpolation

The capacity of a model is a crucial factor in its performance on interpolation tasks. Model capacity refers to the range of functions a model can represent, and hence to its ability to approximate complex functions from the data it is given. A higher-capacity model can capture more intricate relationships in the training data, but this increased capacity comes with trade-offs, particularly for test error.

A model with substantial capacity can fit the training data closely, leading to minimal training error. In the classical view, this invites overfitting: the model learns the noise and fluctuations in the training set rather than the underlying distribution. Training performance improves, but generalization to new data deteriorates and test error rises. This is the behavior typically seen as capacity approaches the interpolation threshold. Well beyond the threshold, test error can decrease again.

Conversely, a model with too little capacity may fail to capture the true patterns in the data. This leads to underfitting, where predictions are inaccurate on both the training and test sets. The gap between training and test error may be small in this case, but both errors are high because the model cannot exploit the patterns the data contain.

Finding the right balance in model capacity is therefore vital. Techniques such as regularization and dropout help manage a model's effective capacity, limiting complexity while still capturing the necessary patterns. The interplay between capacity and interpolation performance is delicate. Increasing capacity improves training accuracy, but it demands careful tuning and monitoring of test error to avoid the rise in test error that can occur near the interpolation threshold.

The Importance of Regularization Techniques

Regularization techniques play a pivotal role in keeping test error low, particularly when models operate in or near the interpolation regime. Regularization addresses the trade-off between bias and variance and leads to more robust predictions. A model that fits its training data exactly also fits the noise in that data, which risks overfitting, and this is where regularization becomes crucial.

One of the primary regularization methods is L2 regularization, which in linear regression is known as ridge regression. It adds to the loss a penalty proportional to the sum of the squared coefficients. This discourages large weights and overly complex fits, reducing variance and helping maintain predictive performance on unseen data. Another widely used method is L1 regularization, known in linear regression as the Lasso, which penalizes the sum of the absolute values of the coefficients. Besides reducing variance, it drives some coefficients exactly to zero and so performs feature selection.
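For linear models, the L2 penalty has a closed-form solution. The sketch below uses synthetic data with more features than samples (30 samples and 50 features, an arbitrary assumption), and the helper name `ridge_fit` is our own. As the penalty strength `lam` grows, the norm of the fitted weights shrinks and the training error rises. As `lam` approaches zero, the ridge solution approaches the minimum-norm interpolating solution.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge regression: w = (X^T X + lam * I)^(-1) X^T y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

rng = np.random.default_rng(4)
X = rng.standard_normal((30, 50))              # 30 samples, 50 features
y = X[:, 0] + 0.5 * rng.standard_normal(30)    # hypothetical target: one relevant feature plus noise

norms, train_mses = [], []
for lam in (1e-6, 0.1, 10.0):
    w = ridge_fit(X, y, lam)
    norms.append(np.linalg.norm(w))
    train_mses.append(np.mean((X @ w - y) ** 2))
    print(f"lam = {lam:g}: ||w|| = {norms[-1]:.3f}, train MSE = {train_mses[-1]:.2e}")
```

Solving the linear system with `np.linalg.solve` is preferred to forming an explicit inverse, which is slower and less accurate.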

Dropout is an effective regularization technique used predominantly in neural networks. During training, it randomly sets a fraction of the units (activations) in a layer to zero at each step. This prevents the network from relying too heavily on any single unit or feature and encourages more distributed representations that perform better on new data.
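The sketch below shows the common "inverted dropout" variant in NumPy, applied to a made-up matrix of hidden activations. The function name and its arguments are our own, since deep learning frameworks provide their own dropout layers. During training, surviving activations are scaled by `1 / (1 - rate)` so that their expected value is unchanged. This lets the layer be switched off at inference time without any rescaling.

```python
import numpy as np

def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero each unit with probability `rate` during training
    and rescale the survivors by 1 / (1 - rate); do nothing at inference time."""
    if not training or rate == 0.0:
        return activations
    keep = rng.random(activations.shape) >= rate  # True with probability 1 - rate
    return activations * keep / (1.0 - rate)

rng = np.random.default_rng(5)
h = np.ones((1000, 100))                  # hypothetical hidden-layer activations
h_drop = dropout(h, rate=0.5, rng=rng)
print((h_drop == 0).mean(), h_drop.mean())  # about half zeroed; mean stays close to 1
```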

Early stopping is another useful technique, particularly for iteratively trained models. By monitoring performance on a validation set, training can be halted once validation performance stops improving, which reduces the risk of overfitting. Incorporating such regularization techniques into training is therefore important, because they improve generalization and help lower test error.
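Early stopping with a "patience" counter can be sketched as follows for a linear model trained by full-batch gradient descent. The data, learning rate, and patience value are hypothetical. The point is the control flow. The loop keeps the weights with the best validation loss seen so far and stops once that loss has failed to improve for `patience` consecutive epochs.

```python
import numpy as np

def train_with_early_stopping(X_tr, y_tr, X_val, y_val, lr=0.05, max_epochs=5000, patience=50):
    """Gradient descent on 0.5 * MSE with early stopping on validation loss.
    Returns the best weights, their validation MSE, and the number of epochs run."""
    w = np.zeros(X_tr.shape[1])
    best_w, best_val, epochs_since_best = w.copy(), np.inf, 0
    for epoch in range(1, max_epochs + 1):
        w -= lr * X_tr.T @ (X_tr @ w - y_tr) / len(y_tr)  # one full-batch gradient step
        val_loss = np.mean((X_val @ w - y_val) ** 2)
        if val_loss < best_val:
            best_w, best_val, epochs_since_best = w.copy(), val_loss, 0
        else:
            epochs_since_best += 1
            if epochs_since_best >= patience:
                break  # validation loss has stopped improving
    return best_w, best_val, epoch

rng = np.random.default_rng(6)
w_true = np.zeros(200)
w_true[:5] = 1.0  # hypothetical sparse ground truth
X_tr, X_val = rng.standard_normal((50, 200)), rng.standard_normal((200, 200))
y_tr = X_tr @ w_true + rng.standard_normal(50)
y_val = X_val @ w_true + rng.standard_normal(200)

w_best, val_best, epochs_run = train_with_early_stopping(X_tr, y_tr, X_val, y_val)
print(f"stopped after {epochs_run} epochs, best validation MSE {val_best:.3f}")
```

Returning the best weights rather than the final ones matters. By the time patience runs out, the model has already trained for `patience` epochs past its best validation score.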

Real-World Implications of Lower Test Error

The decrease in test error in the interpolation regime has significant real-world implications across sectors such as finance, healthcare, and technology, where good generalization is essential. Models with lower test error make more accurate predictions on unseen data, which leads to more reliable outcomes.

In the finance sector, for instance, the ability to forecast market trends and consumer behavior is critical. Financial institutions utilize predictive models to assess risks and optimize investment strategies. When these models exhibit lower test error, the reliability of their forecasts improves, reducing the probability of unexpected losses. This shift not only contributes to enhanced decision-making but also instills greater confidence among investors and stakeholders in financial products.

In healthcare, the implications of reduced test error are profound. Machine learning models used for diagnostics and treatment recommendations are becoming increasingly integral to patient care. A model that generalizes well and demonstrates lower test error can lead to more accurate diagnoses, personalized treatment plans, and ultimately better patient outcomes. Improved reliability in medical predictions can also enhance trust in technological advancements and encourage the integration of AI-driven solutions in clinical practice.

The technology industry also benefits significantly from lower test error. In areas such as natural language processing and image recognition, enhanced model reliability translates into more robust applications like virtual assistants, automated translation services, and high-quality image analysis. This translation of technological advancements into everyday applications showcases the crucial role of model performance, influencing user adoption and satisfaction.

Overall, the implications of reduced test error extend beyond theoretical discussions, demonstrating tangible benefits across critical sectors. Enhanced model generalization not only leads to better performance but also establishes a foundation for future advancements in various fields.

Conclusion and Future Directions

Understanding how test error decreases in the interpolation regime is important for improving machine learning models. As discussed, even when learning algorithms move far enough into this regime to fit their training data exactly, test error can decrease. This phenomenon can be attributed to several factors, including increased model capacity, better parameter tuning, and improved generalization from the training data.

Moreover, the mechanics of interpolation illuminate crucial aspects of how machine learning models perform with respect to unseen data. The transition from underfitting to an optimal fit allows for notable improvements in predictive accuracy, minimizing the disparity between training and test errors. Understanding these dynamics not only provides insights into the functioning of contemporary algorithms but also stresses the importance of data quality and model architecture in achieving superior outcomes.

Several avenues for future research stand out. First, further exploration of the threshold at which interpolation begins to dominate could inform better training strategies. Second, research into the properties of datasets that enable efficient interpolation would clarify how data characteristics influence model performance. Finally, collaboration between statistics, computer science, and data engineering may yield new methods that exploit interpolation effects more effectively.

In conclusion, the decrease in test error after a model reaches the interpolation regime has significant implications for building robust predictive models. By investigating both the theoretical underpinnings and the practical implications of these findings, researchers can deepen our understanding of machine learning and its applications across domains.