Introduction to Multi-Head Attention

Multi-head attention is a core component of the transformer architecture, introduced in the paper “Attention Is All You Need” by Vaswani et al. in 2017. It improved performance on natural language processing (NLP) tasks by allowing a model to attend to different parts of the input simultaneously. The fundamental idea is to run several attention mechanisms, or ‘heads’, side by side so that the model can capture diverse contextual meanings and relationships within an input sequence.

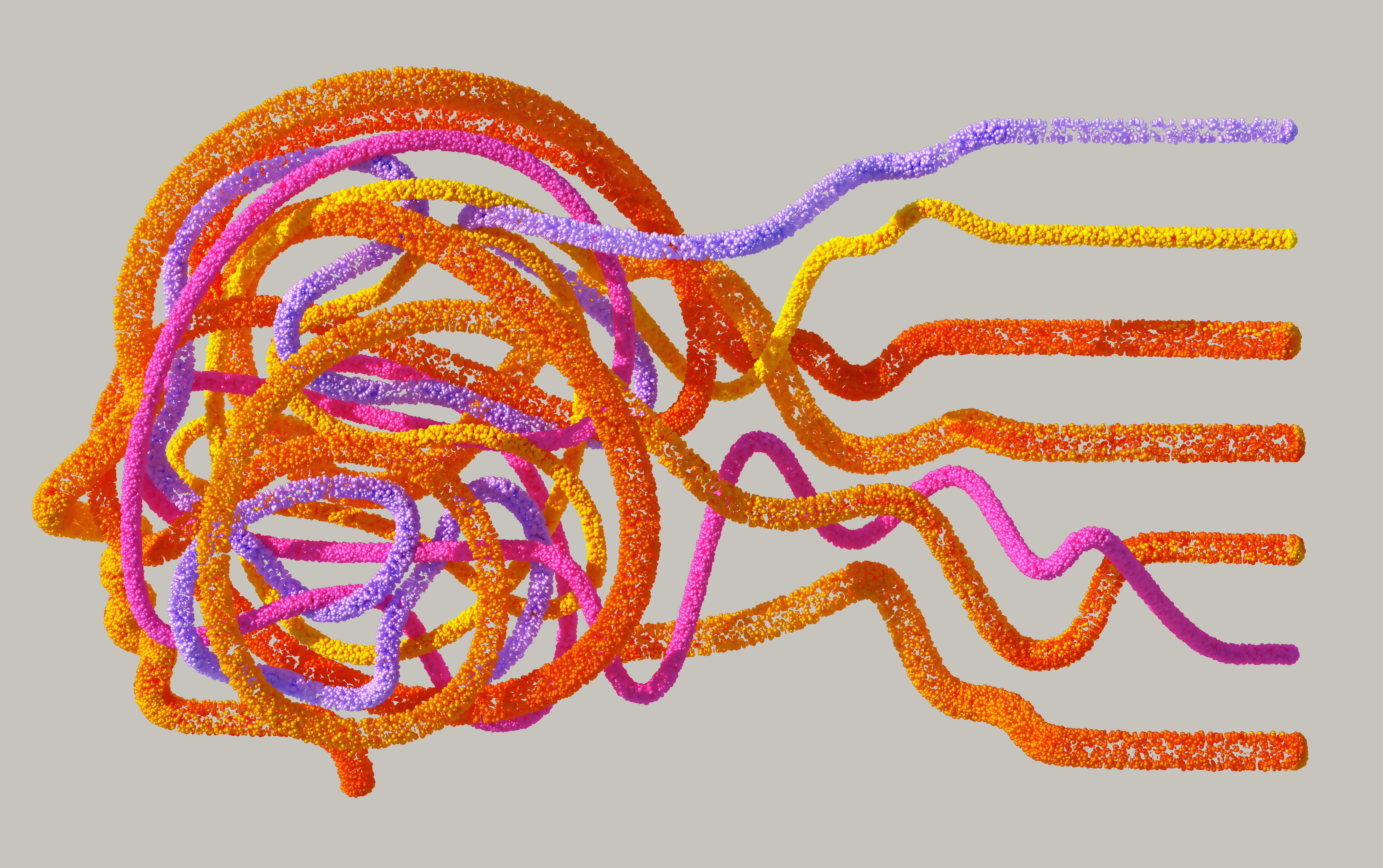

In traditional attention mechanisms, a single attention head is responsible for computing attention weights that determine how much focus should be given to each part of the input. However, this method can restrict the model’s ability to understand complex interactions within the data. Multi-head attention overcomes this limitation by running several attention heads in parallel, each capturing different aspects or features of the input. The outputs from these heads are then concatenated and linearly transformed to produce a rich representation.

The significance of multi-head attention in the transformer architecture lies in its capacity to provide a more nuanced understanding of input data, enabling models to make more informed predictions and decisions. This approach not only enhances the representation power but also facilitates the handling of long-range dependencies in data, which is particularly important in NLP tasks. By utilizing diverse attention focuses across multiple heads, the model can interpret complex patterns and relationships that single-head attention might overlook, leading to improved overall performance.

Understanding Attention Mechanisms

Attention mechanisms are a cornerstone of modern machine learning, particularly in natural language processing (NLP) and computer vision. They help a model focus on the relevant elements of its input, which improves representation learning. Many variants exist; one common way to group them is by how much of the input each position can see, which gives two broad categories: global and local attention.

Global attention considers all parts of the input, giving a comprehensive view of the entire sequence. This is especially beneficial when relationships between distant elements matter. In contrast, local attention restricts focus to a window of nearby positions, which can be advantageous for tasks where nearby context holds greater relevance and which reduces the number of position pairs that must be scored. The choice between the two lets a model trade coverage against cost depending on the input it is processing.
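The difference between the two can be made concrete with attention masks. The following is a minimal sketch, assuming a fixed symmetric window for local attention; the function name `local_attention_mask` and the `window` parameter are illustrative choices, not part of any particular library.

```python
import numpy as np

def local_attention_mask(seq_len: int, window: int) -> np.ndarray:
    """Boolean mask: entry (i, j) is True where position i may attend to j."""
    idx = np.arange(seq_len)
    # Allow position j only when it lies within `window` steps of position i.
    return np.abs(idx[:, None] - idx[None, :]) <= window

# Global attention: every position may attend to every other position.
global_mask = np.ones((5, 5), dtype=bool)

# Local attention (assumed window of 1): each position sees itself and
# its immediate neighbours only.
local_mask = local_attention_mask(5, window=1)
print(local_mask.astype(int))
```

In practice a mask like this is typically applied by setting the disallowed scores to a large negative value before the softmax, so those positions receive near-zero weight.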

Within this framework, self-attention emerges as a critical innovation. Unlike traditional attention models that require a distinct source and target, self-attention allows a model to evaluate the importance of each element in relation to others within the same input sequence. This mechanism operates by generating a representation that encapsulates the interactions between elements, effectively allowing the model to weigh input elements based on their relative significance. Through self-attention, a model can dynamically adjust its focus, enhancing its capability to forge nuanced representations.
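As a rough illustration of this weighting, the sketch below computes scaled dot-product self-attention over a small random sequence. It is a simplification: the learned query, key, and value projections are assumed to be the identity, so each element is compared directly with every other element. The names `softmax` and `self_attention` are illustrative helpers.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the maximum along the axis for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x):
    """Scaled dot-product self-attention with identity projections (assumed)."""
    d = x.shape[-1]
    scores = x @ x.T / np.sqrt(d)        # (seq_len, seq_len) pairwise similarity
    weights = softmax(scores, axis=-1)   # each row is a distribution over positions
    return weights @ x, weights          # weighted mix of the input rows

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))              # 4 elements with 8 features each
out, weights = self_attention(x)
print(out.shape, weights.sum(axis=-1))
```

Each row of `weights` sums to one, so every output row is a weighted average of the input rows, with the weights reflecting how relevant each element is to the one being updated.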

Consequently, integrating attention mechanisms, and self-attention in particular, into machine learning models contributes significantly to their representation power. By enabling models to prioritize relevant information while down-weighting less informative segments, attention mechanisms improve performance and accuracy on complex tasks. This adaptability fosters a more sophisticated understanding of data structures and relationships, paving the way for advanced AI applications.

The Structure of Multi-Head Attention

The architecture of multi-head attention is a significant advancement in the realm of neural networks, particularly in natural language processing. This innovative design facilitates the model’s ability to attend to different parts of the input sequence simultaneously, thereby enhancing its representation power. At its core, multi-head attention incorporates several attention heads, each of which processes the input data in parallel. This design is not merely a matter of replication; instead, each head is configured to capture distinct aspects of the input.

Each attention head projects every element of the input into three vectors: a Query (Q), a Key (K), and a Value (V). These vectors are derived from the input through learned linear transformations, and each head has its own set of projection weights. The Query represents what a given position is looking for, and the Keys are what it is compared against: the score between a Query and a Key (a scaled dot product in the original transformer) determines how much attention one position pays to another. The Value carries the actual information that is aggregated, weighted by those scores. Because each head learns its own projections, the model can learn diverse patterns and relationships within the data.
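For reference, the per-head computation can be written compactly in the notation of Vaswani et al. Here $X$ is the matrix of input vectors (one row per position), $W_i^Q$, $W_i^K$, and $W_i^V$ are the learned projection matrices of head $i$, $d_k$ is the key dimension, and the softmax is applied row-wise.

$$
Q_i = X W_i^Q, \qquad K_i = X W_i^K, \qquad V_i = X W_i^V
$$

$$
\mathrm{head}_i = \mathrm{softmax}\!\left(\frac{Q_i K_i^{\top}}{\sqrt{d_k}}\right) V_i
$$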

The outputs from each attention head are then concatenated and linearly transformed into a final output vector. This concatenation allows the model to aggregate the information captured by each head, presenting a rich representation of the input data. For instance, while one head might specialize in capturing syntactic structures, another may focus on semantic relationships, thus enabling the model to form a more comprehensive understanding.
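The full forward pass (project, split into heads, attend, concatenate, project again) can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: it assumes a single unbatched sequence, no masking, no biases, and random rather than trained weights. The names `multi_head_attention`, `split_heads`, and the `w_*` matrices are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_attention(x, w_q, w_k, w_v, w_o, num_heads):
    """x: (seq_len, d_model); w_q, w_k, w_v, w_o: (d_model, d_model)."""
    seq_len, d_model = x.shape
    d_head = d_model // num_heads              # assumes d_model divisible by num_heads

    def split_heads(t):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return t.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split_heads(x @ w_q), split_heads(x @ w_k), split_heads(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)   # (heads, seq, seq)
    weights = softmax(scores, axis=-1)
    heads = weights @ v                                    # (heads, seq, d_head)
    # Concatenate the heads back to (seq_len, d_model), then apply the
    # final linear transformation.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ w_o, weights

rng = np.random.default_rng(0)
d_model, num_heads, seq_len = 16, 4, 5
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v, w_o = (rng.normal(size=(d_model, d_model)) * 0.1 for _ in range(4))
out, weights = multi_head_attention(x, w_q, w_k, w_v, w_o, num_heads)
print(out.shape, weights.shape)
```

Stacking the per-head projections into one `(d_model, d_model)` matrix and reshaping afterwards is a common implementation choice; it is equivalent to giving each head its own smaller projection matrix.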

Ultimately, the structure of multi-head attention not only enables the capturing of varied representations from the input data but also enhances the overall robustness of the model. By leveraging multiple perspectives through the attention heads, this architecture stands as a key contributor to the superior performance of transformers and similar neural architectures.

Benefits of Multi-Head Attention

Multi-head attention presents several advantages over single-head attention models, significantly enhancing the representation capabilities of neural networks. One key benefit of multi-head attention is its ability to simultaneously attend to different representation subspaces, allowing for the processing of information in a more nuanced manner. This capability stems from the parallelism in the multiple attention heads, which capture various aspects of the input data. Consequently, the system becomes more adept at distinguishing patterns and relationships that may otherwise go unnoticed in a single-head setup.

Another essential advantage of this approach is its impact on model performance across diverse tasks. In natural language processing applications, such as translation and summarization, multi-head attention enables models to maintain context and retrieve relevant features across sentences and phrases. This leads to more coherent and contextually appropriate outputs, improving the overall quality of generated text. Similarly, in visual tasks, such as image processing, the multi-head structure aids in identifying varied features, including color, texture, and spatial relationships, resulting in enhanced image analysis capabilities.

Furthermore, the increased representation power offered by multi-head attention may translate to greater robustness and adaptability. Because several heads attend to the input independently, a model is less dependent on any single attention pattern, which can help when data is noisy or incomplete, as is common in real-world applications where data integrity cannot be guaranteed. This adaptability broadens the applicability of multi-head attention in domains such as healthcare and finance, and underlines its importance as a fundamental component of modern machine learning architectures.

Enhanced Representation Learning

Multi-head attention is a pivotal mechanism in modern machine learning architectures, particularly in the context of representation learning. It significantly enhances the ability to discern complex relationships within the data by enabling the model to focus on different parts of the input simultaneously. This approach stands in contrast to traditional single-head attention mechanisms, which may overlook the subtleties that arise from various perspectives of the input.

The core advantage of multi-head attention lies in its capacity to generate diverse representations of the input data. Each attention head is tasked with learning to capture unique features, semantics, and contextual cues from the processed information. As a result, the model acquires a richer, more nuanced understanding of the dependencies present in the dataset. For instance, in natural language processing tasks, this becomes critically important as words can have multiple meanings depending on their context.

Furthermore, by integrating the outputs from multiple heads, the model benefits from the complementary strengths of each representation. This aggregation leads to more robust embeddings, as nuances captured by one head can enhance the output derived from others. Hence, the utilization of multi-head attention fosters a learning environment where intricate interrelationships within the data can be effectively modeled, promoting a deeper understanding of the context and semantics involved.

Ultimately, enhanced representation learning via multi-head attention allows for improved performance across various machine learning tasks, ensuring that models are increasingly capable of accurately interpreting complex datasets. This advancement is paramount in securing the fidelity of natural language understanding, computer vision, and other domains where representation plays a key role in task success.

Applications of Multi-Head Attention

The advent of multi-head attention has transformed domains such as natural language processing (NLP), computer vision, and reinforcement learning. As a core component of transformer architectures, it enables models to attend to multiple aspects of the input simultaneously, which improves their representation power.

In the realm of NLP, multi-head attention has been pivotal in enhancing models like BERT and GPT. By allowing these models to focus on different parts of a sentence during processing, multi-head attention contributes to a more nuanced understanding of context and meaning. For instance, in sentiment analysis, these models can capture varying sentiments expressed in a single statement, leading to more accurate predictions.

Multi-head attention is also making strides in computer vision. It can be combined with convolutional neural networks (CNNs) so that the network prioritizes relevant features within an image. In image segmentation, for example, models that use multi-head attention have been reported to outperform purely convolutional architectures, an improvement attributed to the model’s ability to identify prominent objects while down-weighting irrelevant background information.

In reinforcement learning, the application of multi-head attention has shown promising results in improving decision-making processes. For instance, in complex environments where an agent must predict future states based on a multitude of observations, multi-head attention allows the agent to weigh different observations accordingly. This leads to more informed policy updates and ultimately better performance in tasks like game playing.

These examples illustrate the transformative impact of multi-head attention across various fields. By enhancing representation power and enabling models to focus on crucial aspects of data, this mechanism has solidified its role as a vital element in advancing the capabilities of artificial intelligence applications.

Challenges and Limitations

While multi-head attention mechanisms have shown remarkable capabilities in enhancing representation power, they come with challenges and limitations. One primary concern is computational cost. Every head computes an attention score for every pair of positions, so time and memory grow quadratically with sequence length. The number of heads is a smaller factor than it might appear: in the standard formulation each head works in a subspace of size d_model / h, so the total projection cost is similar to that of a single head with full dimensionality, although each head still stores its own matrix of attention weights. In large-scale models and on long inputs, these requirements increase both training time and memory usage, which can be prohibitive in resource-constrained environments.
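One way to see where the cost comes from is a back-of-the-envelope count for a single attention layer. The sketch below is a rough estimate only, assuming the standard setup in which each head has dimension `d_model // num_heads` and ignoring biases; the function `attention_cost` and its output keys are hypothetical names chosen for illustration.

```python
def attention_cost(seq_len: int, d_model: int, num_heads: int) -> dict:
    """Rough counts for one multi-head self-attention layer (biases ignored)."""
    d_head = d_model // num_heads
    # Q, K, V projections for all heads, plus the output projection.
    params = 3 * num_heads * d_model * d_head + num_heads * d_head * d_model
    # One seq_len x seq_len matrix of attention weights per head.
    score_entries = num_heads * seq_len * seq_len
    # Multiply-adds for Q @ K^T and for weights @ V, summed over all heads.
    score_macs = 2 * num_heads * seq_len * seq_len * d_head
    return {"params": params, "score_entries": score_entries, "score_macs": score_macs}

one_head = attention_cost(seq_len=512, d_model=512, num_heads=1)
eight_heads = attention_cost(seq_len=512, d_model=512, num_heads=8)
longer_input = attention_cost(seq_len=1024, d_model=512, num_heads=8)
print(one_head)
print(eight_heads)
print(longer_input)
```

Under these assumptions the parameter count and the multiply-add count are the same for one head and for eight, the number of stored attention weights grows in proportion to the number of heads, and doubling the sequence length quadruples the attention arithmetic.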

Another critical limitation is the potential for overfitting. With more parameters (for example, when heads are added without shrinking the per-head dimension) comes a heightened risk of the model fitting the noise in the training data rather than generalizing to unseen examples. This problem can be particularly pronounced with smaller datasets, where the model may not see enough variability to learn robust representations. Consequently, practitioners must take care when designing models that use multi-head attention, ensuring that model capacity is matched to the amount of data available.

To mitigate these challenges, several strategies can be employed. Regularization techniques such as dropout reduce the risk of overfitting; in attention layers, dropout is commonly applied to the attention weights, and some variants drop entire heads during training. Layer normalization and learning-rate schedules such as warmup can help stabilize training, although they do not reduce the computational burden. Careful architectural choices, such as limiting the number of heads or using lighter transformer variants, can reduce computational cost while still benefiting from the multi-head attention paradigm.
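As an illustration of dropout in this setting, the sketch below applies inverted dropout to a tensor of attention weights, which is one common placement in practice. The function name `attention_dropout`, the dropout rate of 0.1, and the uniform example weights are assumptions made for the example.

```python
import numpy as np

def attention_dropout(weights, rate, rng, training=True):
    """Inverted dropout applied to attention weights."""
    if not training or rate == 0.0:
        return weights                             # no-op at evaluation time
    keep = rng.random(weights.shape) >= rate       # keep each entry with prob. 1 - rate
    return weights * keep / (1.0 - rate)           # rescale to preserve the expected value

rng = np.random.default_rng(0)
weights = np.full((2, 4, 4), 0.25)                 # (heads, seq, seq), uniform attention
dropped = attention_dropout(weights, rate=0.1, rng=rng)
unchanged = attention_dropout(weights, rate=0.1, rng=rng, training=False)
print(dropped[0])
```

Dropping whole heads instead would amount to drawing one keep/drop decision per head and broadcasting it across that head's weights.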

Comparing Multi-Head Attention with Other Approaches

Multi-head attention has emerged as a powerful technique in the field of representation learning, primarily utilized within the Transformer architecture. To understand its efficacy, it is crucial to compare it with other prevalent methods, particularly convolutional networks (CNNs) and recurrent networks (RNNs). Each of these approaches has unique characteristics that cater to different types of data and tasks.

Convolutional networks excel in processing spatial data, such as images, by identifying local patterns through convolutional layers. This makes CNNs particularly effective for tasks like image classification and object detection. However, they often struggle with capturing long-range dependencies, as they rely on local receptive fields. Conversely, recurrent networks are designed to handle sequential data, making them suitable for applications such as natural language processing. RNNs can maintain context over sequences, but they face challenges, such as vanishing gradients, that may impede training on long sequences.

Multi-head attention, on the other hand, addresses some of these limitations by allowing the model to focus on different parts of the input simultaneously. By employing multiple attention heads, it can capture a wide array of relationships within the data, making it particularly effective for tasks that require understanding complex interactions, such as machine translation and text summarization. This capability allows the model to weigh various aspects of the input data dynamically, resulting in improved representation power.

When choosing between these methodologies, factors such as the nature of the input data and the specific requirements of the task at hand play a critical role. For structured, spatial data, convolutional networks may be optimal, while recurrent networks can be advantageous for time-series or sequential data challenges. Nonetheless, for tasks that demand a high degree of relational understanding among inputs, multi-head attention emerges as a leading choice. Assessing these approaches in context is essential for achieving the best results in representation learning.

Conclusion and Future Directions

In conclusion, the introduction of multi-head attention has significantly enhanced the representation power of machine learning models, particularly within the domain of natural language processing. By allowing models to focus on different aspects of the input data simultaneously, multi-head attention facilitates a richer understanding of the underlying patterns and relationships. This capability has proven effective in various applications, leading to more nuanced interpretations and better overall performance in tasks such as translation, sentiment analysis, and more.

Looking ahead, the future directions of multi-head attention are promising. Emerging trends suggest that ongoing research will continue to optimize this mechanism, potentially incorporating advancements in computational efficiency and scalability. For instance, recent studies are exploring the integration of multi-head attention with graph-based approaches, which could further enhance the representation of spatial and temporal relationships in complex datasets.

Moreover, the exploration of adaptive and dynamic attention mechanisms represents another area where significant improvements may emerge. By allowing models to adjust attention weights in response to the data context in real time, there is potential for even greater accuracy and relevance in tasks that require a deep understanding of context and nuance.

Ultimately, as machine learning continues to evolve, the role of multi-head attention is expected to expand further. Researchers are likely to discover new applications across diverse fields, reinforcing its position as a critical component in building sophisticated, high-performing models. Embracing these advances will be essential for harnessing the full potential of multi-head attention in future machine learning initiatives.