Introduction to Large Models in AI

Large models in artificial intelligence (AI) are defined primarily by their parameter counts, which often run into the billions and, in some cases, the trillions. These parameters enable the models to capture intricate patterns and relationships in vast datasets, enhancing their ability to perform tasks such as language understanding and image recognition. Unlike traditional machine learning models, which may rely on simpler structures and fewer parameters, large models are built on deep neural network architectures, most prominently the transformer, to achieve higher accuracy and broader capability.
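
To make these parameter counts concrete, a common back-of-the-envelope estimate for a transformer is about 12 * n_layers * d_model^2 weights, plus the token embedding. The sketch below assumes that approximation (it ignores biases, layer norms, and positional embeddings) and plugs in the published GPT-3 configuration; the helper name is hypothetical.

```python
def approx_transformer_params(n_layers, d_model, vocab_size=0):
    """Rough parameter count of a decoder-only transformer (an approximation).

    Per layer: 4 * d_model^2 for the attention projections (query, key,
    value, output) plus 8 * d_model^2 for a feed-forward block whose hidden
    size is 4 * d_model. Biases, layer norms and positional embeddings are
    ignored; the token embedding adds vocab_size * d_model.
    """
    return 12 * n_layers * d_model ** 2 + vocab_size * d_model


# Published GPT-3 (175B) configuration: 96 layers, d_model = 12288,
# and a BPE vocabulary of 50257 tokens.
n = approx_transformer_params(96, 12288, vocab_size=50257)
print(f"{n / 1e9:.1f}B parameters")  # 174.6B parameters
```

The estimate lands close to GPT-3's reported 175 billion parameters, which is why this approximation is often used for quick sizing.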

The significance of large AI models lies in their performance across a wide range of applications. They have transformed natural language processing, where GPT-3 has shown remarkable prowess in generating human-like text and BERT in modeling the context of words within a sentence. Similarly, in vision tasks, large convolutional neural networks (CNNs) recognize and classify images with accuracy that earlier methods could not match. This capacity has led to their adoption in industries ranging from healthcare to finance, where complex decision-making is paramount.

Furthermore, the rise of large models reflects an ongoing trend in AI toward scaling up models, data, and compute. Advances in hardware, particularly Graphics Processing Units (GPUs) and specialized AI accelerators, have allowed researchers to build models that were previously impractical to train. The resulting models are not only more effective but also able to address complex problems that smaller models may struggle to solve.

As we continue to explore these advanced systems, understanding their structure, functionality, and underlying mechanisms becomes increasingly vital, particularly in the context of developing interpretable AI systems. This understanding will facilitate better insights into how large models operate, paving the way for more trustworthy applications of AI technology in the future.

Understanding Model Interpretability

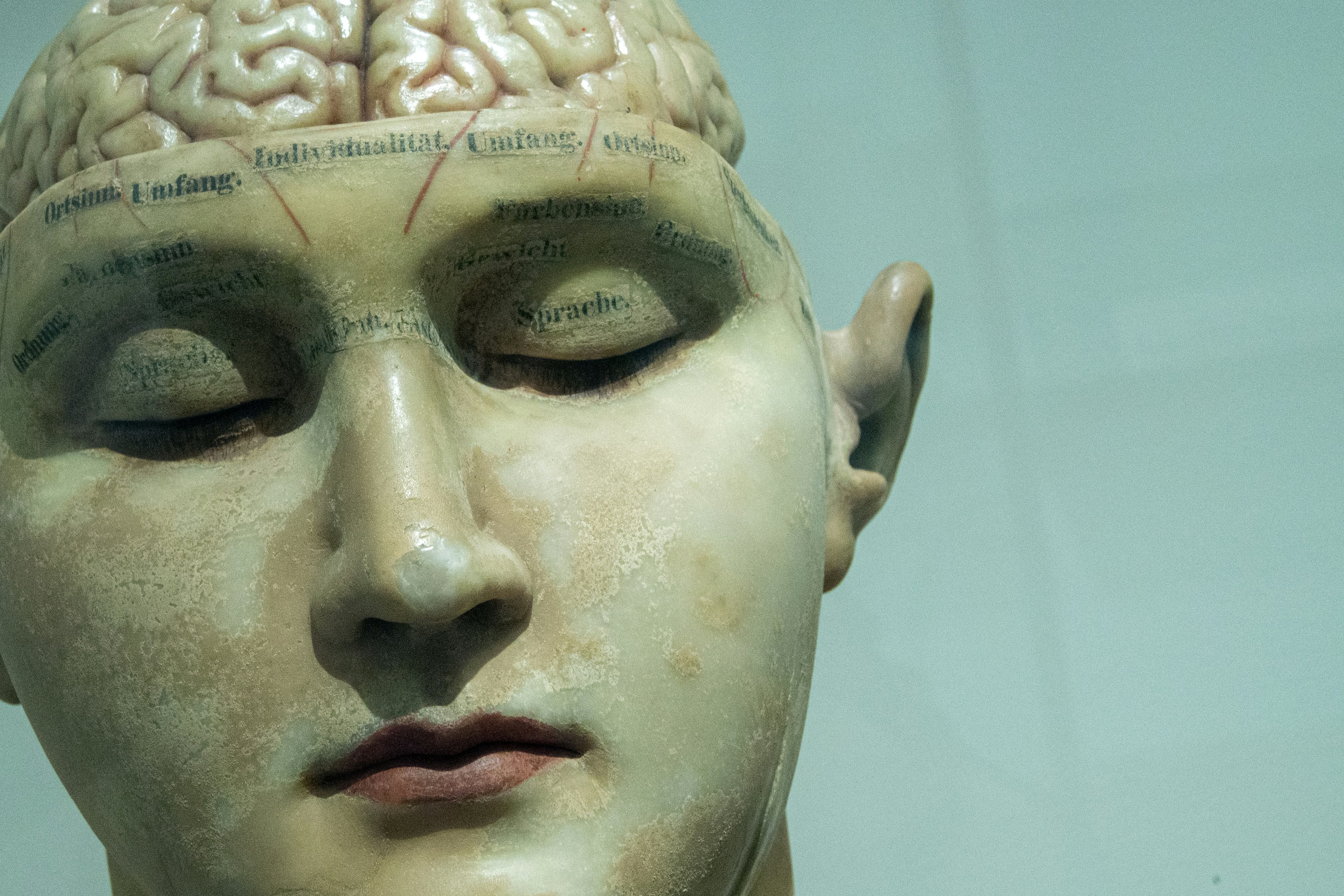

Interpretability in artificial intelligence (AI) is defined as the extent to which a human can understand the cause of a decision made by a model. In the context of AI models, especially large ones, interpretability is crucial for both developers and users. It allows stakeholders to grasp how models come to particular conclusions or recommendations, thereby fostering a greater degree of trust. The success of AI systems hinges not only on their performance but also on the transparency with which they operate.

For developers, interpretable models make it possible to debug, refine, and improve algorithms with a clearer understanding of how they operate. For users, particularly in critical domains such as healthcare, finance, and law, understanding the decision-making process supports accountability. A lack of interpretability can also slow adoption: users may be reluctant to trust a system that operates like a ‘black box’ without any insight into its decision criteria.

Several methods and metrics are used to evaluate the interpretability of models. One common approach is feature importance analysis, where developers assess which features significantly influence model predictions. Techniques such as LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) are increasingly popular for providing local explanations of single predictions, facilitating a deeper understanding of model behavior.
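
SHAP is grounded in Shapley values from cooperative game theory. For a model with only a handful of features, Shapley values can be computed exactly by enumerating every subset of features, which shows what SHAP's faster approximations estimate. The sketch below is a minimal brute-force version, not the SHAP library's API; the toy model is hypothetical, and it assumes that a feature "absent" from a subset is replaced by a baseline value.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values by enumerating every subset of features.

    Assumption: a feature absent from a coalition takes its baseline value
    (SHAP offers several ways to model absence; this is the simplest).
    """
    n = len(x)

    def value(coalition):
        # Evaluate the model with only the coalition's features taken from x.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for subset in combinations(others, size):
                s = set(subset)
                # Shapley weight: |S|! (n - |S| - 1)! / n!
                weight = factorial(len(s)) * factorial(n - len(s) - 1) / factorial(n)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical toy model with an interaction between features 0 and 1.
def toy_model(z):
    return 2.0 * z[0] + 1.0 * z[1] + 3.0 * z[0] * z[1] - 0.5 * z[2]


x = [1.0, 1.0, 2.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print([round(p, 3) for p in phi])  # [3.5, 2.5, -1.0]
# Efficiency property: contributions sum to f(x) - f(baseline).
print(round(sum(phi), 3), toy_model(x) - toy_model(baseline))
```

The interaction term 3 * z0 * z1 is split evenly between features 0 and 1, and the contributions add up to f(x) - f(baseline). Because the enumeration grows as 2^n, practical tools approximate it.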

Another aspect of model interpretability involves model-agnostic methods, which treat a model as a black box and explain it only through its inputs and outputs. Because they apply to any model type, they make it possible to compare different models and identify the trade-offs between interpretability and predictive performance. Metrics such as fidelity, which measures how accurately an interpretable surrogate model reproduces the predictions of a more complex model, are essential in this analysis. Through these strategies, interpretability can be tied to accountability and trust, contributing to the responsible deployment of AI technologies.
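
For a classifier, fidelity can be measured as the share of inputs on which the interpretable surrogate gives the same label as the complex model. Note that it compares against the complex model's predictions, not against ground-truth labels. Both models and the input distribution below are hypothetical stand-ins.

```python
import random


def fidelity(black_box, surrogate, inputs):
    """Share of inputs on which the surrogate reproduces the black-box label."""
    agree = sum(1 for x in inputs if surrogate(x) == black_box(x))
    return agree / len(inputs)


# Hypothetical "complex" model: a nonlinear decision rule on two features.
def black_box(x):
    return int(x[0] ** 2 + 0.5 * x[1] > 1.0)


# Hypothetical interpretable surrogate: a one-feature threshold rule.
def surrogate(x):
    return int(abs(x[0]) > 0.8)


random.seed(0)
inputs = [(random.uniform(-2, 2), random.uniform(-1, 1)) for _ in range(1000)]
score = fidelity(black_box, surrogate, inputs)
print(f"fidelity = {score:.2f}")
```

A surrogate with high fidelity can be read as a faithful summary of the complex model on this input distribution; low fidelity means its explanation describes a different model.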

Structure of Large Models

The architecture of large models is a pivotal factor in their performance, particularly the multi-head attention used in transformer models. In multi-head attention, each head applies its own learned query, key, and value projections, typically into a lower-dimensional subspace, and computes attention independently and in parallel with the other heads; the head outputs are then concatenated and projected back to the model dimension. This arrangement lets different heads focus on different aspects of the input, so the model can represent several kinds of relationships at once, with individual heads free to specialize in distinct patterns and features.
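
The mechanics of multi-head attention can be sketched in a few lines of NumPy. This is a minimal single-sequence version that assumes random placeholder weights (a trained model learns w_q, w_k, w_v, and w_o), no masking or biases, and a model dimension divisible by the number of heads. The per-head attention weights it returns are what interpretability studies typically inspect.

```python
import numpy as np


def multi_head_attention(x, w_q, w_k, w_v, w_o, num_heads):
    """Multi-head self-attention over x of shape (seq_len, d_model).

    Each head works in its own d_model // num_heads slice of the projected
    queries, keys and values; head outputs are concatenated and mixed by
    the output projection w_o.
    """
    seq_len, d_model = x.shape
    d_head = d_model // num_heads

    def split(m):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return m.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # (heads, seq, seq)
    scores -= scores.max(axis=-1, keepdims=True)        # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)      # softmax per query row
    heads = weights @ v                                 # (heads, seq, d_head)
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ w_o, weights


rng = np.random.default_rng(0)
seq_len, d_model, num_heads = 5, 8, 2
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v, w_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))
out, attn = multi_head_attention(x, w_q, w_k, w_v, w_o, num_heads)
print(out.shape, attn.shape)  # (5, 8) (2, 5, 5)
```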

The complexity of large models arises not only from their size but also from the interactions between their components. Because each head computes its own attention pattern in parallel with the others, individual heads can be examined in isolation, which can make parts of the model's behavior easier to interpret than an undifferentiated network would be. Depth compounds this complexity: deeper networks stack many layers of transformation, capturing information that ranges from basic to sophisticated representations.

Moreover, training can produce redundancy, where multiple heads learn similar features; pruning studies have found that many heads can be removed with little loss in accuracy. Such redundancy reinforces certain patterns while balancing the system, and this balance matters for interpretability. By distributing the learning across multiple heads, large models can form more clearly delineated pathways that offer insight into how decisions are made. In the first layer, a head's attention weights can be read directly as weights over input tokens; in deeper layers each position already mixes information from many tokens, so tracing a head's behavior back to input features is only approximate.
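
One simple way to quantify redundancy between heads is to compare their attention patterns directly. The sketch below uses the mean Jensen-Shannon divergence between two heads' attention rows; that choice, the helper names, and the hand-built attention maps are assumptions for illustration. Pruning studies instead measure redundancy by ablating heads and checking the change in accuracy.

```python
import numpy as np


def js_divergence(p, q, eps=1e-12):
    """Row-wise Jensen-Shannon divergence (natural log) between p and q."""
    p, q = p + eps, q + eps  # avoid log(0)
    m = 0.5 * (p + q)

    def kl(a, b):
        return np.sum(a * np.log(a / b), axis=-1)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def head_divergence(attn):
    """Pairwise matrix for attn of shape (heads, seq, seq).

    Entry [i, j] is the mean JS divergence between heads i and j over all
    query positions; values near 0 flag heads that attend almost identically.
    """
    h = attn.shape[0]
    div = np.zeros((h, h))
    for i in range(h):
        for j in range(h):
            div[i, j] = js_divergence(attn[i], attn[j]).mean()
    return div


# Hypothetical attention maps for three heads over a 4-token sequence.
seq = 4
prev = np.zeros((seq, seq))
prev[0, 0] = 1.0
for t in range(1, seq):
    prev[t, t - 1] = 1.0                  # head 0: attend to the previous token
noisy_prev = 0.9 * prev + 0.1 / seq       # head 1: nearly the same pattern
uniform = np.full((seq, seq), 1.0 / seq)  # head 2: attention spread evenly
div = head_divergence(np.stack([prev, noisy_prev, uniform]))
print(np.round(div, 3))
```

Heads 0 and 1 come out with a much smaller divergence than heads 0 and 2, marking them as a candidate redundant pair.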

Additionally, because layers and heads are designed for collaborative processing, their combined behavior can be analyzed within a single framework. When one head focuses on a specific relationship, other heads can complement it by tracking related or contextual information. This interplay sets the stage for a better understanding of the model's decision-making, making large models not only more effective but also potentially more interpretable.

The Emergence of Interpretable Heads

As artificial intelligence continues to evolve, large neural network models have introduced a new layer of complexity, particularly regarding their interpretability. One intriguing phenomenon observed in these models is the emergence of interpretable heads: attention heads whose behavior corresponds to a recognizable pattern in the data. These components contribute to the model's functionality and also offer a window into what it has learned about the underlying data. Their emergence can be attributed to several factors, chief among them the attention mechanisms pervasive in such architectures.

Attention mechanisms are the foundation for how these models prioritize and process information, giving certain inputs more weight during the decision-making process. In self-attention, each token computes weights over every token in the sequence from the similarity of learned query and key vectors, so the model's focus shifts with context: the same word can attend to different neighbors in different sentences. This context sensitivity lets large models build nuanced representations and gives rise to interpretable heads. Individual heads often appear to specialize in particular characteristics, such as sentiment-bearing words or specific syntactic structures in text. These specialized patterns help translate complex relationships into terms a human can inspect.
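
A common first check for specialization is attention entropy: a head that concentrates on one or two tokens per query has low entropy, while one that spreads attention evenly approaches the maximum of log(seq_len). The helper below is a hypothetical sketch that expects attention arrays of shape (heads, seq, seq) whose rows sum to 1; the two example heads are constructed by hand.

```python
import numpy as np


def attention_entropy(attn, eps=1e-12):
    """Mean entropy (in nats) of each head's attention distributions.

    attn has shape (heads, seq, seq) and each row sums to 1. A value near 0
    means the head puts almost all weight on one token; the maximum,
    log(seq), means it spreads attention uniformly.
    """
    # Clipping avoids log(0); zero-weight entries still contribute 0.
    row_entropy = -np.sum(attn * np.log(np.clip(attn, eps, 1.0)), axis=-1)
    return row_entropy.mean(axis=-1)


seq = 6
focused = np.eye(seq)                     # each token attends only to itself
uniform = np.full((seq, seq), 1.0 / seq)  # attention spread evenly
ent = attention_entropy(np.stack([focused, uniform]))
print(np.round(ent, 3))  # focused head ~0, uniform head ~log(6) ~ 1.792
```

Low entropy alone does not make a head interpretable; it only flags heads worth inspecting more closely, for instance against linguistic annotations.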

Furthermore, the architecture of large models is designed to scale in width and depth, providing enough parameters and layers to capture intricate patterns. As these models are trained on extensive datasets, certain heads come to track prominent features of the data. This process does not appear to be merely a byproduct of sheer size; it emerges from the specific interactions among layers of attention. As a result, the interplay between attention heads allows large models to distill critical insights while retaining a degree of interpretability that was previously difficult to achieve.

Case Studies of Interpretable Heads in Action

Large models have shown a capacity to develop interpretable heads that illuminate the decision-making behind their predictions. One notable instance comes from natural language processing, specifically BERT (Bidirectional Encoder Representations from Transformers). Researchers found that certain attention heads within BERT track specific syntactic relations, such as determiners attending to their nouns or direct objects attending to their verbs. These heads clarify how the model represents sentence structure, which makes its outputs easier to interpret.
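
One way to test whether a head tracks a syntactic relation is to treat the token it attends to most as a prediction of each word's syntactic head, then score that against annotated dependencies; attention-analysis studies of BERT have used this kind of evaluation. The function below is a simplified sketch, and the sentence, relation labels, and attention matrix are hypothetical.

```python
import numpy as np


def head_relation_accuracy(attn, gold_heads):
    """Fraction of tokens whose most-attended token is their gold syntactic head.

    attn: (seq, seq) attention matrix of a single head (rows = queries).
    gold_heads: dict mapping a dependent's index to its head word's index,
    restricted to the relation under test (e.g. determiner -> noun).
    """
    hits = sum(int(np.argmax(attn[dep]) == head) for dep, head in gold_heads.items())
    return hits / len(gold_heads)


tokens = ["the", "cat", "chased", "a", "mouse"]
# Hypothetical gold annotation for the determiner relation: det -> noun.
det_relation = {0: 1, 3: 4}

# Hypothetical attention head in which determiners look at the following noun.
attn = np.full((5, 5), 0.05)
attn[0, 1] = 0.8
attn[3, 4] = 0.8
attn /= attn.sum(axis=1, keepdims=True)  # make each row a distribution
print(head_relation_accuracy(attn, det_relation))  # 1.0
```

In practice the score is computed over a large annotated corpus and compared with simple baselines, such as always attending to the next token, before a head is credited with the relation.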

Another proposed application is sentiment analysis. Researchers have examined the attention heads of large language models for configurations tuned to emotional nuance in text; because this requires access to a model's weights, it is carried out on openly released models rather than on API-only models such as GPT-3. Heads of this kind can help separate subtle sentiments, suggesting real-world uses in customer service and market analysis, where organizations can use the resulting insights to gauge customer responses and tailor their strategies.
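
A rough probe for sentiment-focused heads compares the attention a head places on words from a sentiment lexicon against what uniform attention would give. The function name, lexicon, tokens, and weights below are hypothetical, and reading a head's weights requires a model whose internals are accessible. A high ratio is only suggestive; confirming that a head matters for sentiment predictions calls for interventions such as ablating it.

```python
def lexicon_attention_ratio(attn_row, tokens, lexicon):
    """Attention one query places on lexicon words, relative to chance.

    attn_row: one query's attention weights over tokens (sums to 1).
    Returns mass_on_lexicon / fraction_of_tokens_in_lexicon, so 1.0 means
    the head is no more drawn to lexicon words than uniform attention.
    """
    in_lex = [t.lower() in lexicon for t in tokens]
    share = sum(in_lex) / len(tokens)
    if share == 0:
        raise ValueError("no lexicon words in this sequence")
    mass = sum(w for w, hit in zip(attn_row, in_lex) if hit)
    return mass / share


# Hypothetical sentiment lexicon and attention weights from a single head.
lexicon = {"great", "terrible", "love", "awful"}
tokens = ["the", "service", "was", "terrible", "but", "food", "great"]
attn_row = [0.05, 0.05, 0.05, 0.40, 0.05, 0.05, 0.35]
ratio = lexicon_attention_ratio(attn_row, tokens, lexicon)
print(round(ratio, 2))
```

Here the head puts 75% of its attention on the two sentiment words, which make up 2 of 7 tokens, giving a ratio of about 2.6.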

Moreover, in healthcare, interpretable heads can improve the reliability of diagnostic models. For instance, an attention-based model trained on medical imaging data can use its heads to focus on specific regions of an image, such as anomalies or variations indicating disease. Such attention maps can help clinicians make informed decisions and, when they match clinically meaningful regions, justify a measure of trust in the automated systems increasingly used in patient care.
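
For image models built on attention, such as vision transformers, a common way to see where a head looks is to take the attention from the [CLS] token to each image patch and lay it back out on the patch grid. The sketch assumes a square grid in row-major patch order and a single head's weights with the [CLS]-to-[CLS] entry already dropped; the function name and all values are hypothetical. Raw attention from one head is a rough localization cue, not a diagnosis.

```python
import numpy as np


def patch_attention_heatmap(cls_attn, grid, patch_size):
    """Turn a [CLS] token's attention over image patches into a pixel heatmap.

    cls_attn: 1-D array of length grid * grid, one weight per patch in
    row-major order. Returns a (grid * patch_size, grid * patch_size)
    array rescaled to [0, 1].
    """
    patch_map = cls_attn.reshape(grid, grid)
    # Repeat each patch's weight over its patch_size x patch_size pixels.
    pixels = np.kron(patch_map, np.ones((patch_size, patch_size)))
    lo, hi = pixels.min(), pixels.max()
    return (pixels - lo) / (hi - lo) if hi > lo else np.zeros_like(pixels)


# Hypothetical 4x4 grid of 8x8-pixel patches in which one region draws
# most of the attention (for example, a suspected anomaly).
cls_attn = np.full(16, 0.02)
cls_attn[5] = 0.70
heat = patch_attention_heatmap(cls_attn, grid=4, patch_size=8)
row, col = np.unravel_index(np.argmax(heat), heat.shape)
print(heat.shape, row // 8, col // 8)  # (32, 32) 1 1
```

The heatmap can be overlaid on the original image so a reviewer can check whether the highlighted region is clinically plausible.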

These case studies illustrate the practical benefits of interpretable heads in large models. By revealing the mechanisms behind model decisions, these components improve transparency and pave the way for more robust and trustworthy applications across many fields.

The Benefits of Having Interpretable Heads

Interpretable heads in large models offer several significant advantages that can greatly enhance their functionality and impact, especially in critical decision-making contexts. One of the primary benefits is the improved debugging processes that arise from these interpretable components. When a model’s decision-making process is transparent, it becomes easier for developers and data scientists to identify errors or biases within the model. This level of clarity allows for targeted adjustments, leading to improved performance and the overall reliability of the model.

Enhanced user trust is another crucial advantage of interpretable heads. In environments where decisions can have serious implications, such as healthcare, finance, and criminal justice, stakeholders need to understand how and why a model reaches a specific conclusion. Interpretable heads demystify the decision-making process, making the model's outputs more accessible and comprehensible to users. This transparency fosters confidence in the AI system and makes users more likely to rely on its recommendations and insights.

Moreover, interpretable heads support alignment with ethical AI principles, addressing concerns about fairness, accountability, and transparency. As models increasingly influence significant societal decisions, the need for interpretability aligns with the demand for ethical AI practices. Organizations can demonstrate their commitment to responsible AI by deploying models with interpretable heads, which helps them check that their decisions are not only effective but also equitable and just.

In summary, incorporating interpretable heads strengthens large models in several ways. From facilitating debugging to bolstering user trust and supporting ethical standards, their benefits are integral to responsible and effective AI deployment.

Challenges and Limitations of Interpretability

Interpretability in large models presents several intrinsic challenges that stem from the complexity of these systems. Large models, especially those utilized in deep learning, often consist of numerous parameters and layers, making it increasingly difficult to derive clear insights about their decision-making processes. This complexity can lead to a trade-off between model accuracy and interpretability. While enhancements in model architecture can boost performance, they may simultaneously obscure the understanding of how certain predictions are made.

One major challenge is overfitting, where a model learns to capture noise rather than the underlying distribution. The result is high accuracy on training data but poor generalization to new, unseen data. Consequently, a model that performs exceptionally well on its training data may yield misleading interpretations, since the features it treats as significant may reflect noise rather than patterns that humans would recognize as meaningful. The highly non-linear, abstract functions these models learn further reduce their interpretability in practical applications.

Another limitation arises from post-hoc interpretability methods that seek to explain model behavior after training has occurred. While these techniques can offer insights, they are often approximations and may not accurately represent the model’s true functioning. For instance, methods like LIME or SHAP can highlight features that contribute to a prediction but do not necessarily reflect the intricacies of the underlying model. The reliance on such approximations can inadvertently lead to misinterpretations, causing stakeholders to draw incorrect conclusions regarding model fairness or bias.
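
The approximate nature of post-hoc explanations is visible in a LIME-style sketch: perturb the input, weight each sample by its closeness to the original, and fit a linear model to the black box's outputs. This is a simplified illustration, not the lime package's API; the function name, Gaussian perturbations, kernel width, and toy model are assumptions. The weighted R^2 it reports measures how well the linear explanation matches the model locally.

```python
import numpy as np


def local_linear_explanation(model, x, n_samples=500, scale=0.1,
                             kernel_width=0.25, seed=0):
    """LIME-style sketch: fit a distance-weighted linear model around x.

    Returns (coefficients, weighted R^2). A low R^2 warns that the linear
    explanation misrepresents the model near x.
    """
    rng = np.random.default_rng(seed)
    samples = x + rng.normal(scale=scale, size=(n_samples, x.size))
    preds = np.array([model(s) for s in samples])
    dist = np.linalg.norm(samples - x, axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)  # closer samples count more
    # Weighted least squares with an intercept column.
    design = np.hstack([np.ones((n_samples, 1)), samples - x])
    sw = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * sw[:, None], preds * sw, rcond=None)
    fitted = design @ coef
    mean = np.average(preds, weights=weights)
    ss_res = np.sum(weights * (preds - fitted) ** 2)
    ss_tot = np.sum(weights * (preds - mean) ** 2)
    return coef[1:], 1.0 - ss_res / ss_tot


def model(z):
    # Hypothetical nonlinear black box.
    return np.sin(3 * z[0]) + z[1] ** 2


x = np.array([0.2, 0.5])
coef, r2 = local_linear_explanation(model, x)
print(np.round(coef, 2), round(r2, 3))
```

The coefficients land near the toy model's local slopes (about 3cos(0.6) and 1.0), but the explanation and its R^2 can change noticeably with scale and kernel_width, which is one reason such explanations should be read as approximations.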

In summary, while the desire for interpretability in large models is crucial for fostering trust and understanding, the inherent complexities and potential misalignments pose significant challenges that researchers and practitioners must navigate carefully.

Future Directions in Model Interpretability

As the field of artificial intelligence (AI) continues to advance, particularly with the rise of large language models, the quest for enhanced interpretability remains a focal point of research and development. Future directions in model interpretability aim to bridge the gap between complex model behavior and human understanding. Researchers are pursuing innovative techniques that not only clarify how these models arrive at specific decisions but also facilitate the identification of underlying patterns in training data.

One emerging trend is the development of visualization tools designed to unpack high-dimensional data representations. By translating the abstract operations of large models into more digestible visual formats, practitioners can glean insights into model behavior. For example, interpretable visualizations may highlight which features contribute most to a model’s predictions, guiding users in reflective analysis and model refinement. Such tools are becoming increasingly essential as organizations seek to explain the rationale behind model outputs to non-technical stakeholders.

With the growing emphasis on ethical AI, researchers are also prioritizing the integration of interpretability into model design processes. This proactive approach focuses on incorporating transparency features directly into the model architecture, rather than retrofitting interpretability as an afterthought. Moreover, practitioners are advocating for the establishment of best practices and standards in interpretability, enabling consistent evaluation and reporting across various applications of AI. By establishing a shared understanding of interpretability metrics, organizations can more effectively assess and mitigate risks associated with model deployment.

In summary, the future of model interpretability lies at the intersection of innovation and ethical practice. As AI continues to evolve, the demand for more interpretable models will shape ongoing research agendas, driving the development of methodologies that cater to both technical accuracy and user comprehension.

Conclusion: The Path Forward for Interpretable AI

Throughout this discussion, we have explored the relationship between large models and the development of interpretable heads within AI systems. The ability to interpret the decisions made by AI is crucial, especially in applications where human lives and well-being are at stake. As we examine the intricacies of AI more closely, it becomes increasingly evident that progress is not merely a matter of expansive data or complex algorithms; it also requires a concerted effort to make the decisions these systems reach clearly understandable.

The essence of fostering interpretable heads in large models lies in balancing complexity with clarity. While it is compelling to create advanced models capable of performing with precision across various tasks, the interpretability of these models cannot be overlooked. The presence of interpretable heads allows stakeholders to gain insights into how a model processes information and makes decisions. This transparency not only enhances user trust but also aids in the identification of biases, errors, and areas for further improvement.

Moreover, as we push the boundaries of AI development, stakeholders must recognize the pivotal role that interpretability plays in ensuring ethical AI practices. Researchers, developers, and policymakers should collaborate to craft frameworks that prioritize both model performance and interpretability, ensuring that AI systems provide meaningful insights into their operations. Such collaborative efforts could lead to innovative methodologies that promote a deeper understanding of AI behavior while maintaining the inherent advantages offered by large models.

In summary, large models offer a promising frontier for artificial intelligence, particularly when it comes to developing interpretable heads. By advocating for interpretability, we can build AI systems that are not only efficient but also responsible, fostering greater accountability and ethical considerations within the evolving landscape of technology.