Introduction to Qlora and 4-Bit Adaptation

In recent years, the field of machine learning has grown rapidly, driven in part by the need for more efficient ways to optimize and adapt models. One such advance is Qlora (usually written QLoRA, short for Quantized Low-Rank Adaptation), an approach to fine-tuning models whose weights are stored in 4 bits. Qlora lets a model retain most of its performance while sharply reducing its memory footprint, which is essential in resource-constrained environments.

4-bit adaptation refers to adapting a model whose weights are represented with only 4 bits each, as opposed to the 16-bit or 32-bit floating-point formats in which models are usually trained. This reduction in bit-width substantially cuts storage and memory requirements: a 4-bit weight takes one quarter of the space of a 16-bit weight. However, it raises the challenge of keeping model outputs accurate, because each weight can take only 16 distinct values. The ability to adapt a 4-bit model with little or no loss in quality is what distinguishes Qlora from other techniques.
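The effect of bit-width on storage is simple arithmetic, sketched below. The 7-billion-parameter model size and the function name are assumptions chosen for illustration, and the figures cover the weights alone, not activations or optimizer state.

```python
def weight_memory_gib(num_params: int, bits_per_weight: float) -> float:
    """Approximate storage for the weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


# Hypothetical 7-billion-parameter model, chosen only for illustration.
NUM_PARAMS = 7_000_000_000

for label, bits in [("32-bit float", 32), ("16-bit float", 16),
                    ("8-bit integer", 8), ("4-bit", 4)]:
    print(f"{label:>13}: {weight_memory_gib(NUM_PARAMS, bits):6.2f} GiB")
```

At 4 bits the weights occupy one eighth of the space they need at 32 bits. Practical 4-bit formats also store a few scaling constants alongside the weights, so real figures are slightly higher than this estimate.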

The significance of Qlora lies in its potential applications across various domains, including natural language processing and computer vision. In these fields, large models necessitate considerable computational power, making the development of efficient adaptation strategies like Qlora crucial. By minimizing the model size while preserving accuracy, developers can deploy machine learning applications on devices with limited resources, improving accessibility and usability.

In the evolving landscape of machine learning, methods that allow low-bit adaptation while preserving output quality are increasingly important. Qlora has attracted attention for the way it handles the trade-offs inherent in model optimization. Understanding its mechanisms and its role in 4-bit adaptation provides a foundation for the methods and benefits discussed in the sections that follow.

The Importance of Model Efficiency in AI

Model efficiency plays a crucial role in the advancement of artificial intelligence systems. As AI continues to evolve, the need for highly efficient models has become paramount. Reducing model size and complexity not only enhances performance but also facilitates faster processing. An efficient model can significantly reduce the time required for data processing and inference, which is essential in applications requiring real-time responses.

In addition to speed, operational costs are greatly impacted by the efficiency of AI models. Smaller and less complex models generally require less computational power and memory, which translates into lower infrastructure and maintenance costs. Organizations can benefit from reduced expenses associated with cloud services or data center management when adopting efficient model architectures. This financial aspect is particularly appealing for startups and smaller enterprises looking to leverage AI capabilities without incurring exorbitant costs.

Furthermore, model efficiency has profound implications on energy consumption. As the world increasingly shifts towards sustainability, the need for energy-efficient AI solutions cannot be overstated. Large models tend to consume substantial amounts of energy during training and inference phases, contributing to a higher carbon footprint. Conversely, by adopting approaches such as 4-bit adaptation, it is possible to achieve comparable performance levels while significantly mitigating energy use. The benefits of implementing efficient AI models extend beyond cost savings and performance improvements; they play a vital role in aligning technological advancements with environmental sustainability goals.

In essence, fostering model efficiency in artificial intelligence is not merely about enhancing performance. It is a multifaceted approach that encompasses expedited processing, reduced operational costs, and minimized energy consumption, all of which contribute to the overarching goal of creating impactful and sustainable AI solutions.

Technical Foundation: Understanding the Mechanism of Qlora

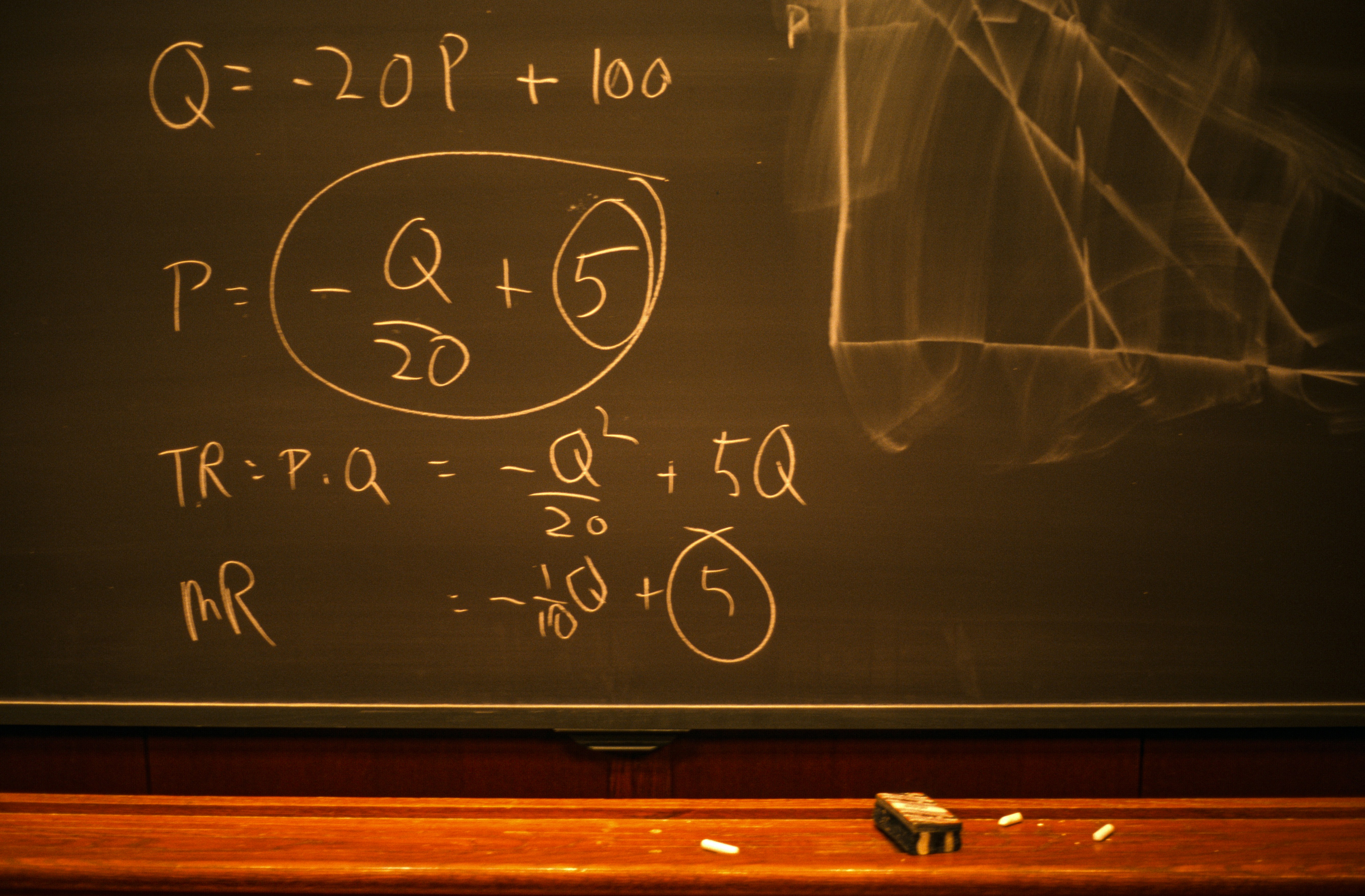

Qlora represents a significant advancement in model adaptation techniques: it compresses a model's weights to 4 bits and still allows the model to be adapted with little loss in quality. At its core are a quantization scheme designed to preserve the information in the weights and a small set of trainable parameters, which together reduce the memory needed for fine-tuning.

The mechanics of Qlora involve two steps: quantization and fine-tuning. First, the pretrained weights are quantized from their original 16-bit or 32-bit format to a 4-bit representation. This is done by rounding rather than truncating: the weights are split into small blocks, each block is scaled by its largest absolute value, and every weight is mapped to the nearest of 16 levels. Qlora uses a data type called 4-bit NormalFloat (NF4), whose levels are spaced to suit the roughly normal distribution of neural network weights, and it also quantizes the per-block scaling constants (double quantization) to save further memory. Second, the quantized weights are frozen, and small low-rank adapter matrices are trained on top of them in higher precision.
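The quantization step can be sketched in a few lines. This is a simplified stand-in, not Qlora's actual data type: it uses 16 evenly spaced levels and one scale per block, and names such as `BLOCK_SIZE` and `quantize_block` are hypothetical.

```python
import random

LEVELS = 16       # 4 bits give 2**4 distinct codes
BLOCK_SIZE = 64   # weights per block; an illustrative choice


def quantize_block(block):
    """Map the floats in one block to integer codes 0..15 plus one scale."""
    scale = max(abs(w) for w in block) or 1.0  # absmax; avoids dividing by zero
    codes = []
    for w in block:
        normalized = w / scale                 # now within [-1, 1]
        codes.append(round((normalized + 1) / 2 * (LEVELS - 1)))
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the codes and the block's scale."""
    return [((c / (LEVELS - 1)) * 2 - 1) * scale for c in codes]


def quantize(weights):
    blocks = [weights[i:i + BLOCK_SIZE] for i in range(0, len(weights), BLOCK_SIZE)]
    return [quantize_block(b) for b in blocks]


def dequantize(quantized):
    restored = []
    for codes, scale in quantized:
        restored.extend(dequantize_block(codes, scale))
    return restored


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(256)]  # synthetic weights
restored = dequantize(quantize(weights))
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"largest reconstruction error: {max_error:.5f}")
```

Because each block carries its own scale, the reconstruction error of any weight is bounded by half the spacing between levels in that block. Evenly spaced levels are the simplest choice. Level spacings matched to the distribution of the weights aim to lower the error further.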

Qlora does not revise the quantization while the model trains. The 4-bit weights stay fixed, and gradients are propagated through them into the adapters, so the adapters learn to compensate for the error that quantization introduced. Monitoring performance metrics on held-out data during fine-tuning remains important, since it shows whether the adapted model has recovered the quality of the original. In this way, Qlora balances model size against output quality.

Unlike neural architecture search or model pruning, Qlora leaves the model's architecture unchanged and removes no weights. Instead, it adds a small number of trainable parameters: each adapted layer receives a pair of low-rank matrices whose product is added to the output of the frozen layer. Because only these adapters are updated, the memory needed for gradients and optimizer state is small. The outcome is a model that can be fine-tuned efficiently with minimal loss of quality, setting a new standard in 4-bit adaptation methodologies.
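In the fine-tuning step, the technique the name refers to (LoRA, low-rank adaptation) places a pair of small trainable matrices beside each frozen weight matrix. The sketch below shows only the forward pass, in plain Python with tiny illustrative sizes. `W_frozen` stands in for the dequantized base weights, and all names and dimensions are assumptions made for the example.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def scale(a, s):
    return [[x * s for x in row] for row in a]


random.seed(0)
D_IN, D_OUT, RANK, ALPHA = 8, 6, 2, 4  # illustrative sizes only

# Stand-in for the dequantized base weights; frozen, never updated.
W_frozen = [[random.gauss(0, 0.1) for _ in range(D_OUT)] for _ in range(D_IN)]

# Trainable low-rank adapter: A starts random and B starts at zero,
# so the adapter contributes nothing before training begins.
A = [[random.gauss(0, 0.1) for _ in range(RANK)] for _ in range(D_IN)]
B = [[0.0] * D_OUT for _ in range(RANK)]


def forward(x):
    """Frozen base output plus the scaled low-rank update."""
    base = matmul(x, W_frozen)
    update = scale(matmul(matmul(x, A), B), ALPHA / RANK)
    return add(base, update)


x = [[1.0] * D_IN]
print("adapter is a no-op before training:", forward(x) == matmul(x, W_frozen))
print("trainable values:", RANK * (D_IN + D_OUT), "of", D_IN * D_OUT, "in the layer")
```

Starting `B` at zero means fine-tuning begins from exactly the behavior of the quantized base model. Training then changes only `A` and `B`.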

Advantages of 4-Bit Adaptation with Qlora

One of the primary advantages of Qlora in 4-bit adaptation is reduced memory use during fine-tuning. Qlora stores the model weights in 4 bits. Activations and the arithmetic itself remain in a 16-bit format, with weights converted back to 16 bits as each layer is computed. The gain is therefore chiefly in memory rather than raw speed: a model that would otherwise need several accelerators may fit on one. This is especially beneficial when working with large neural networks, allowing researchers and developers to make better use of the hardware they have.

Another potential advantage lies at inference time. In real-time scenarios such as autonomous vehicles or interactive AI systems, response times are paramount. A 4-bit model needs less memory bandwidth to read its weights, which can shorten inference on memory-bound workloads. Converting weights back to a higher precision adds some overhead, however, so any speedup depends on the hardware and software used. Where a speedup is realized, systems become more responsive, ultimately enhancing user experience.

Furthermore, 4-bit adaptation with Qlora contributes to significant storage savings. Traditional models that utilize higher bit depths consume considerable disk space, which can be a constraint, particularly in cloud environments or on edge devices with limited capacity. In contrast, Qlora’s lightweight models can be stored and deployed more easily, making them more suitable for a variety of applications, including mobile and IoT devices.
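The storage saving comes from the fact that two 4-bit codes fit in one byte. The sketch below shows one way to pack and unpack them. The function names and the high-nibble-first layout are assumptions for illustration, not a description of any particular library's file format.

```python
def pack_nibbles(codes):
    """Pack 4-bit codes (ints 0..15) two per byte; pads odd lengths with 0."""
    if any(not 0 <= c <= 15 for c in codes):
        raise ValueError("every code must fit in 4 bits")
    padded = list(codes) + [0] * (len(codes) % 2)
    return bytes((hi << 4) | lo for hi, lo in zip(padded[0::2], padded[1::2]))


def unpack_nibbles(packed, count):
    """Recover the first `count` 4-bit codes from packed bytes."""
    codes = []
    for byte in packed:
        codes.append(byte >> 4)      # high nibble
        codes.append(byte & 0x0F)    # low nibble
    return codes[:count]


codes = [3, 15, 0, 7, 9]
packed = pack_nibbles(codes)
print(len(codes), "codes stored in", len(packed), "bytes")
assert unpack_nibbles(packed, len(codes)) == codes
```

The original count has to be stored separately, since a padded final byte is otherwise indistinguishable from a real trailing code of zero.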

Lastly, the ability to operate effectively within resource-constrained environments is perhaps one of the most impactful benefits of 4-bit adaptation using Qlora. As the demand for efficient machine learning applications continues to grow, especially in handheld devices and smart gadgets, Qlora’s capabilities provide a reliable solution for developers aiming to implement AI in such scenarios without compromising performance.

Challenges of 4-Bit Adaptation and How Qlora Overcomes Them

Adapting machine learning models to 4-bit precision presents several noteworthy challenges. One of the primary concerns is the potential loss of information. When reducing the precision of numerical representations, the inherent detail required for high-quality predictions may be compromised. This information loss can result in diminished model performance, particularly in complex tasks that demand nuanced understanding and precise calculations.

Another challenge associated with 4-bit adaptation is the risk of increased quantization error. Quantization involves mapping a range of values to a limited set of discrete levels, which can inadvertently introduce inaccuracies in the model’s outputs. These discrepancies can lead to suboptimal decision-making capabilities, thus raising questions about the feasibility of deploying low-precision models in critical applications.
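How quickly quantization error grows as bits are removed can be measured directly. The sketch below applies plain uniform quantization to synthetic, normally distributed values and reports the mean squared error at several bit-widths. The data, the seed, and the function names are assumptions for illustration, and the scheme is deliberately naive.

```python
import random


def uniform_quantize(values, bits):
    """Round each value to the nearest of 2**bits evenly spaced levels."""
    intervals = 2 ** bits - 1
    lo, hi = min(values), max(values)
    step = (hi - lo) / intervals
    return [lo + round((v - lo) / step) * step for v in values]


def mean_squared_error(original, approx):
    return sum((a - b) ** 2 for a, b in zip(original, approx)) / len(original)


random.seed(0)
values = [random.gauss(0.0, 1.0) for _ in range(10_000)]  # synthetic data

for bits in (8, 4, 2):
    error = mean_squared_error(values, uniform_quantize(values, bits))
    print(f"{bits}-bit mean squared error: {error:.6f}")
```

Each bit removed roughly doubles the spacing between levels, so the error rises steeply as the bit-width falls. Low-bit schemes therefore depend heavily on where the levels are placed and how the values are scaled.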

Qlora addresses these challenges in two ways that keep degradation small at the desired level of compression. The first is its quantization scheme. Rather than spacing the 16 available levels evenly, Qlora places more of them where weights are most densely concentrated, near zero, and it scales each small block of weights separately so that a single outlier distorts only its own block. This reduces the information lost relative to a naive 4-bit scheme.

The second is fine-tuning itself. After quantization, low-rank adapters are trained with the 4-bit weights held fixed, and in doing so they can learn to counter much of the error that quantization introduced. Together, these methods allow Qlora to remain competitive with higher-precision fine-tuning even though the base model is stored in 4 bits.

Ultimately, the innovations introduced by Qlora not only overcome the inherent challenges of 4-bit adaptation but also pave the way for more efficient model deployment across a range of applications.

Real-World Applications of Qlora’s 4-Bit Adaptation

Qlora’s innovative approach to 4-bit adaptation has significant implications across various fields, particularly for mobile AI applications. On smartphones, where memory and power consumption are critical constraints, 4-bit models make it possible to deploy AI models that would traditionally require more resources. By storing weights in 4 bits, Qlora reduces the memory a model needs without substantial loss of accuracy, which can improve user experience in applications such as image recognition, real-time language translation, and augmented reality.

Edge computing is another area where Qlora’s 4-bit adaptation shines. As industries increasingly move towards decentralized processing to minimize latency, the need for efficient AI models grows. Qlora’s methodology allows for high-performance models to run on edge devices, such as IoT sensors and gateways, enabling real-time data processing and analysis. This capability is particularly beneficial in fields such as smart manufacturing and healthcare, where immediate decision-making could lead to improved asset management and patient outcomes.

Moreover, in scenarios demanding real-time data processing, such as financial trading or autonomous vehicle navigation, Qlora’s 4-bit adaptation proves invaluable. The ability to rapidly process vast amounts of data using lightweight models allows businesses and systems to respond to changes swiftly. The technology not only enhances existing frameworks but also opens the door for new applications where swift data processing is paramount. Overall, the versatility of Qlora’s 4-bit adaptation showcases its potential to transform various industries by increasing the performance and scalability of AI-driven solutions.

Comparative Analysis: Qlora vs. Other Compression Techniques

Model compression techniques are increasingly crucial for optimizing neural networks, particularly in environments with limited computational resources. Among these techniques, Qlora stands out as a method that achieves 4-bit adaptation while maintaining high fidelity. This section will explore how Qlora compares with other prevalent compression strategies, including pruning, quantization, and knowledge distillation.

Pruning is a widely used technique that reduces the number of parameters by removing less significant weights from the model. While effective in decreasing model size, it can lead to a significant drop in performance if not implemented judiciously. In contrast, Qlora’s 4-bit adaptation process focuses on minimizing quality loss, allowing for considerable model efficiency without the adverse effects often associated with aggressive pruning.

Quantization is another prevalent method, one that converts weights to lower-precision formats, typically 8 bits or fewer. While this can improve inference speed and reduce memory footprint, quantization on its own may compromise accuracy, more so as the bit-width falls. Qlora builds on quantization rather than replacing it: it pairs 4-bit weights with trainable adapters kept in higher precision, which helps it reach performance close to that of full-precision fine-tuning at a high compression ratio. This makes Qlora particularly appealing where memory is the limiting resource.

In contrast to both pruning and quantization, knowledge distillation facilitates the transfer of knowledge from a large, well-trained model (teacher) to a smaller, more efficient model (student). Although this technique can yield lower complexity models without significant performance degradation, it requires substantial training time and data to effectively train the student model. Qlora’s adaptive approach minimizes the need for extensive retraining cycles, thereby streamlining the model adaptation process.
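The lighter retraining burden can be made concrete by counting trainable values. When adaptation is done with low-rank adapters, a layer of shape d_in by d_out trains only rank × (d_in + d_out) values instead of d_in × d_out. The layer size and rank below are hypothetical, chosen only to show the scale of the difference.

```python
def full_finetune_params(d_in: int, d_out: int) -> int:
    """Trainable values when the whole weight matrix is updated."""
    return d_in * d_out


def adapter_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable values in a low-rank adapter (two thin matrices)."""
    return rank * (d_in + d_out)


# Hypothetical layer shape and adapter rank, for illustration only.
D_IN, D_OUT, RANK = 4096, 4096, 16

full = full_finetune_params(D_IN, D_OUT)
adapter = adapter_params(D_IN, D_OUT, RANK)
print(f"full fine-tuning: {full:,} trainable weights")
print(f"low-rank adapter: {adapter:,} trainable weights")
print(f"adapter share:    {adapter / full:.2%}")
```

For this layer the adapter trains well under one percent of the values that full fine-tuning would update. This is why the gradients and optimizer state take so little memory.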

In essence, while each of these compression techniques has unique advantages and trade-offs, Qlora offers a refined solution that maintains quality and efficiency, making it a formidable alternative in the landscape of model compression. This analysis serves to highlight the superior adaptability and performance of Qlora relative to its contemporaries.

Future Prospects of 4-Bit Adaptation Technologies

The landscape of artificial intelligence (AI) and machine learning is rapidly evolving, particularly with the advent of innovative technologies like 4-bit adaptation. As the demand for efficient computational methods and resource management grows, the relevance of 4-bit adaptation technologies, including Qlora, continues to expand across various sectors.

One of the primary future enhancements in 4-bit adaptation is expected to revolve around increased training efficiency and improved model performance. As researchers delve deeper into optimization techniques, it is likely that novel approaches will emerge, allowing for even smaller model sizes without compromising quality. The integration of 4-bit adaptation could lead to more compact AI applications that maintain high accuracy, relevant for domains such as natural language processing and image recognition.

Furthermore, the applications of 4-bit adaptation technologies are poised to broaden significantly. Industries like healthcare, finance, and autonomous systems may leverage these advancements to enhance their AI capabilities. For instance, in healthcare, rapid data processing is essential, and 4-bit adaptation can facilitate real-time analytics for patient management systems. Similarly, in finance, optimized algorithms can aid in fraud detection and algorithmic trading, providing more efficient data processing solutions.

Continued research and collaboration among academia and industry stakeholders will play a crucial role in accelerating the adoption of 4-bit technologies. With more resources dedicated to exploring the possibilities of Qlora and similar frameworks, we can anticipate breakthroughs that will provide transformative impacts across various fields. As the understanding of 4-bit adaptation deepens, it may pave the way for new methodologies that not only enhance performance but also broaden the scope of AI applicability.

Conclusion: The Impact of Qlora on AI Development

The advent of Qlora represents a notable advancement in the field of artificial intelligence, particularly in the realm of model efficiency and performance. By employing its innovative 4-bit adaptation technology, Qlora facilitates substantial reductions in memory and computational resource requirements while preserving quality. This transformative capability not only optimizes model deployment but also enables the utilization of sophisticated AI models on less powerful hardware, thereby enhancing accessibility.

Throughout this discussion, the advantages of adopting Qlora’s approach have been highlighted. Key points include its emphasis on maintaining performance despite quantization and the gains it brings in training efficiency. The ability to adapt 4-bit models means that high-quality outputs can be achieved with far fewer of the trade-offs usually associated with lower precision, thus democratizing the use of advanced AI technologies.

Moreover, the adoption of Qlora encourages a shift towards sustainable AI development practices. As industries increasingly seek to minimize their carbon footprint and maximize efficiency, technologies that allow for reduced computational demands will play a pivotal role. Consequently, the integration of features offered by Qlora can significantly impact various sectors by promoting environment-friendly initiatives.

In conclusion, the implications of Qlora’s 4-bit adaptation extend beyond mere technical specifications. They position Qlora as a pivotal player in the future of AI, with the potential to reshape how models are developed, deployed, and utilized across diverse applications. As researchers and developers continue to explore the reach of these innovations, the broader AI landscape stands to benefit immensely from Qlora’s contributions to effective and optimized model utilization.