Introduction to Catastrophic Forgetting

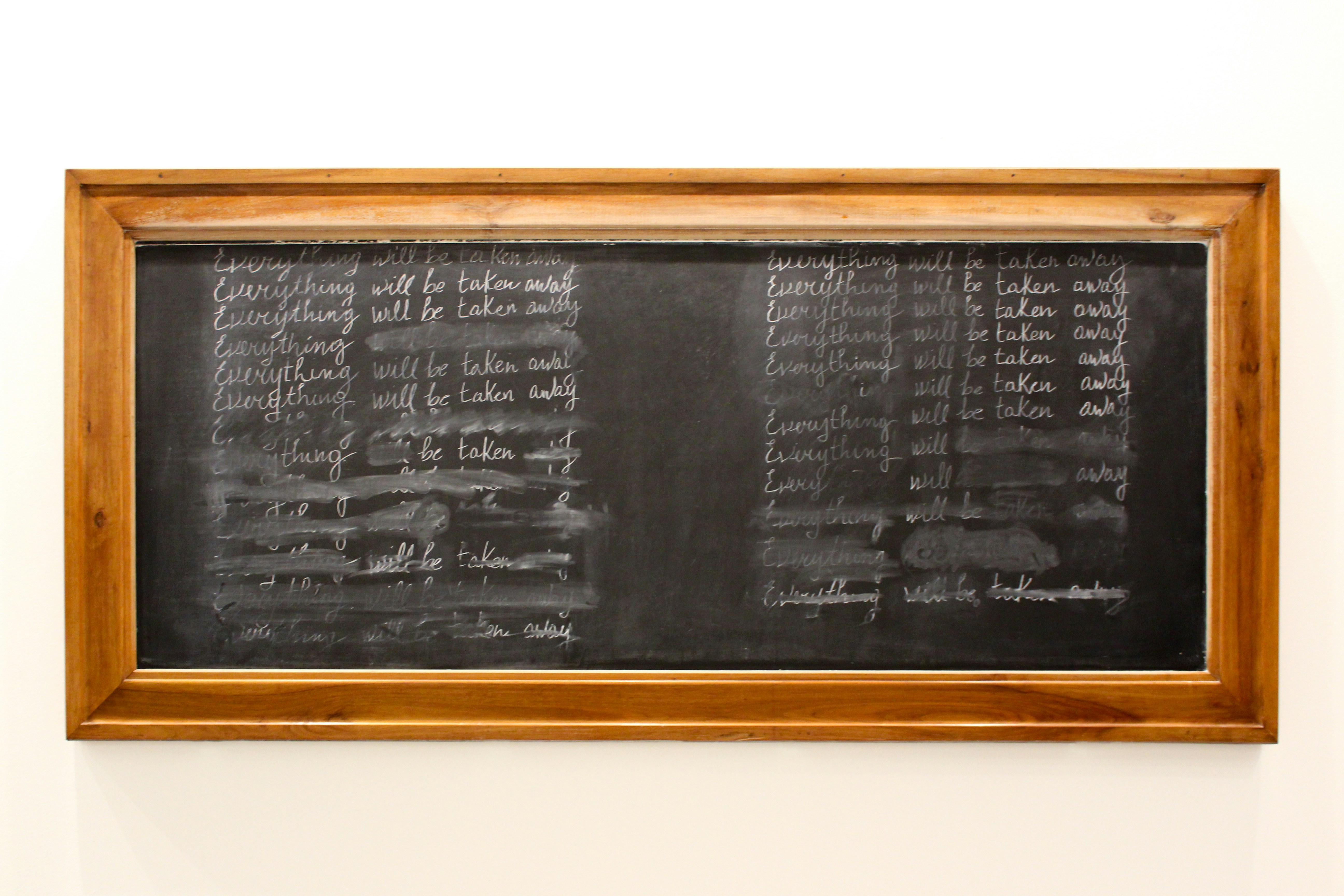

Catastrophic forgetting, also called catastrophic interference, is a significant challenge in continual deep learning. It occurs when a neural network, upon acquiring new knowledge, loses much of its ability to perform tasks it had previously learned. As machine learning models are increasingly employed for dynamic tasks that require ongoing learning, understanding the implications of catastrophic forgetting becomes vital.

The essence of catastrophic forgetting lies in how a neural network stores what it has learned: not in a separate memory, but in the same weights and biases that are shared across all tasks. When the network is trained on a new dataset, the adjustments made to those parameters may inadvertently overwrite the values that encoded earlier learning. The result is diminished performance on previously learned tasks, which poses a significant barrier for models intended for continual learning.

The consequences of catastrophic forgetting extend beyond theoretical implications and into practical applications. For instance, in applications such as robotic control, natural language processing, and image recognition, it is critical for systems to adapt to evolving environments without sacrificing the knowledge gained from prior experiences. If a robotic system trained to perform specific tasks fails to retain that knowledge while learning new behaviors, it may encounter operational inefficiencies that can lead to increased costs or even failures in critical scenarios.

Given these challenges, research into strategies that mitigate catastrophic forgetting, such as experience replay, regularization techniques, and architectural modifications, is progressing rapidly. The goal is to enhance memory retention within artificial neural networks, thereby improving their practicality and effectiveness in real-world applications. Addressing catastrophic forgetting is essential for ensuring that deep learning models remain robust and versatile as they continue to be integrated into various domains.

The Mechanism of Catastrophic Forgetting

Catastrophic forgetting occurs in neural networks when learning new information leads to the degradation of previously acquired knowledge. This phenomenon is particularly prevalent in settings where models are trained sequentially on multiple tasks without the ability to retain earlier task-specific parameters. Understanding the mechanism of catastrophic forgetting involves examining how weight updates during training on new tasks can interfere with weights associated with previously learned tasks.

During the training process, a neural network adjusts its weights in response to the loss calculated from its predictions. When a network is exposed to new data associated with a different task, the algorithm may update weights significantly to minimize error. However, herein lies the critical issue: these changes can inadvertently disrupt the representations needed for the original tasks, leading to a decline in performance on prior knowledge. This adjustment is often captured mathematically using gradient descent, where the updates can be represented as:

W' = W - η ∇L

In this formula, W' denotes the updated weights, W denotes the original weights, η is the learning rate, and ∇L is the gradient of the loss function with respect to the weights, computed on the new task's data. Because that gradient reflects only the new task's loss, nothing in the update protects the weights that earlier tasks depend on, so large adjustments can overwrite them and produce a conflict.
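The update rule above can be seen at work in a deliberately tiny sketch. Everything here is an illustrative assumption rather than a realistic setup: the model has a single weight, the two tasks are hypothetical linear targets that disagree, and the names `task_a`, `task_b`, and `train` are made up for the example.

```python
# Toy demonstration: one shared weight, two tasks trained in sequence.
# The model, data, and hyperparameters are illustrative assumptions.

def mse(w, data):
    """Mean squared error of the model y_hat = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def grad(w, data):
    """Gradient of the mean squared error with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in data) / len(data)


def train(w, data, lr=0.1, steps=200):
    """Plain gradient descent: W' = W - lr * grad(L)."""
    for _ in range(steps):
        w = w - lr * grad(w, data)
    return w


xs = [-1.0, -0.5, 0.5, 1.0]
task_a = [(x, 2.0 * x) for x in xs]    # task A is solved by w = 2
task_b = [(x, -1.0 * x) for x in xs]   # task B is solved by w = -1

w_after_a = train(0.0, task_a)
loss_a_before = mse(w_after_a, task_a)   # close to zero

w_after_b = train(w_after_a, task_b)     # training sees only task B data
loss_a_after = mse(w_after_b, task_a)    # much larger: task A is forgotten

print(f"loss on A after training on A: {loss_a_before:.6f}")
print(f"loss on A after training on B: {loss_a_after:.6f}")
```

With only one weight the two tasks cannot coexist, so the effect is extreme. In a larger network the same overwriting happens only in the weights the tasks share.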

Moreover, the phenomenon can be exacerbated when tasks are highly dissimilar. If the distribution of the new tasks diverges significantly from past experiences, it can further magnify the potential for weight interference, leading to an even sharper degradation of previous knowledge. Consequently, the model may exhibit a significant drop in accuracy on older tasks, highlighting the importance of effective mechanisms to mitigate catastrophic forgetting.
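One way to make "weight interference" concrete is to compare the gradients of the old and new tasks at the same weights. To first order, a gradient step on the new task changes the old task's loss in proportion to the negative dot product of the two gradients, so gradients that point in opposite directions signal that the step will hurt the old task. The sketch below assumes a two-weight linear model and three hypothetical tasks; `grad_mse` and `cosine` are helper names introduced only for this illustration.

```python
import math


def grad_mse(w, data):
    """Gradient of mean squared error for the linear model y_hat = w . x."""
    g = [0.0] * len(w)
    for x, y in data:
        err = sum(wi * xi for wi, xi in zip(w, x)) - y
        for i, xi in enumerate(x):
            g[i] += 2.0 * err * xi / len(data)
    return g


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical tasks defined on the same inputs.
inputs = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
old_task = [(x, x[0] + x[1]) for x in inputs]
similar_task = [(x, 1.2 * x[0] + 0.8 * x[1]) for x in inputs]
conflicting_task = [(x, -(x[0] + x[1])) for x in inputs]

w = [0.0, 0.0]  # current weights, chosen arbitrarily for the illustration
g_old = grad_mse(w, old_task)
sim_similar = cosine(g_old, grad_mse(w, similar_task))
sim_conflict = cosine(g_old, grad_mse(w, conflicting_task))

print(f"old vs similar task:     {sim_similar:+.3f}")   # near +1: updates agree
print(f"old vs conflicting task: {sim_conflict:+.3f}")  # near -1: updates oppose
```

This is a diagnostic under simplifying assumptions, not a full account of forgetting: it looks at a single point in weight space and ignores higher-order effects.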

Factors Influencing Catastrophic Forgetting

Catastrophic forgetting is a significant challenge in continual deep learning, where neural networks may lose previously acquired knowledge upon learning new tasks. Several factors influence the severity of this phenomenon. One of the most prominent is the frequency at which task changes occur. Frequent shifts between tasks can overwhelm the learning capacity of a model, leading to a more pronounced forgetting effect. When a neural network is tasked with learning new information rapidly, it may inadvertently overwrite previous knowledge, resulting in deteriorated performance on prior tasks.

Another critical factor is how the tasks relate to one another. Interference tends to be strong when a new task reuses the features the network built for earlier tasks but requires different outputs, which is particularly evident when tasks share overlapping features or label spaces. In such cases, the model's decision boundaries might shift significantly as it fits the new task, further exacerbating the risk of forgetting what it learned earlier.

The architecture of neural networks also plays a crucial role in influencing catastrophic forgetting. Certain architectures, like fully connected networks in which every task shares every weight, can be particularly susceptible to forgetting. Mechanisms such as dropout or regularization can mitigate this issue, but they may also impede the model's ability to learn new tasks effectively. Architectures that incorporate explicit memory, such as models with dedicated memory components, can positively impact knowledge retention. Recurrent neural networks, whose hidden state carries information within a sequence rather than across tasks, do not by themselves prevent forgetting.

The interplay of these factors greatly affects learning dynamics. Understanding their relationships is vital for developing strategies that can enhance knowledge retention while minimizing the negative impact of catastrophic forgetting in continual deep learning.

Continual Learning and Its Challenges

Continual learning is an area of machine learning that focuses on enabling models to learn from a continuous stream of data while avoiding the detrimental effects associated with conventional training methods. Unlike traditional machine learning approaches that necessitate a complete retraining of models when new data becomes available, continual learning seeks to improve and update models incrementally. The goal is to facilitate a dynamic learning environment where machines can adapt to new information, tasks, and even environments over time.

The challenges faced in continual learning primarily arise from the need to retain previously acquired knowledge while integrating new data. One of the most significant issues is catastrophic forgetting, where a model forgets previously learned information when trained on new tasks. This phenomenon occurs because neural networks typically adjust their weights based on the latest inputs, which can overwrite the information learned from prior datasets. As a result, models often perform poorly on earlier tasks, leading to a decline in overall performance.
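The decline on earlier tasks can be quantified with simple bookkeeping. A common approach, sketched here under stated assumptions, is to record an accuracy matrix in which entry `acc[i][j]` is the accuracy on task `j` after training through task `i`, and then measure how far each earlier task has fallen from its best value. The function name `average_forgetting` and all numbers in the example are hypothetical and serve only to show the arithmetic.

```python
def average_forgetting(acc):
    """Average drop from best earlier accuracy to final accuracy.

    acc[i][j] is the accuracy on task j after training through task i.
    Only entries with i >= j are read; the rest are placeholders.
    """
    num_tasks = len(acc)
    if num_tasks < 2:
        return 0.0
    drops = []
    for j in range(num_tasks - 1):          # the last task cannot be forgotten yet
        best = max(acc[i][j] for i in range(j, num_tasks - 1))
        drops.append(best - acc[num_tasks - 1][j])
    return sum(drops) / len(drops)


# Made-up accuracies for three tasks learned in sequence (not real results).
acc = [
    [0.95, 0.00, 0.00],   # after task 0
    [0.70, 0.93, 0.00],   # after task 1: task 0 has already slipped
    [0.55, 0.80, 0.94],   # after task 2
]

print(f"average forgetting: {average_forgetting(acc):.3f}")
```

A value near zero would indicate that earlier tasks were retained. Larger values indicate more forgetting.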

In the realm of deep learning, these challenges become even more pronounced. Deep neural networks, while powerful, are inherently prone to catastrophic forgetting due to their complex architectures and the vast number of parameters they utilize. Traditional approaches to mitigate this issue—such as storing entire datasets or employing specific regularization techniques—are often not scalable or feasible in real-world applications. Consequently, researchers seek innovative strategies that allow for the retention of past knowledge while successfully assimilating new information without substantial degradation of prior learning.

Strategies to Mitigate Catastrophic Forgetting

Catastrophic forgetting remains a significant challenge in the field of continual deep learning, where models face the dilemma of retaining previous knowledge while acquiring new information. Various approaches have been proposed to alleviate this issue, including regularization methods, rehearsal strategies, and the implementation of memory-augmented networks.

Regularization techniques, such as Elastic Weight Consolidation (EWC), work by identifying the parameters of a neural network that matter most for previous tasks and discouraging changes to them. EWC estimates each parameter's importance from the Fisher information and penalizes movement of important weights away from their earlier values during training on new tasks, which helps preserve acquired knowledge. Other regularization methods employ knowledge distillation, which encourages the updated model to reproduce the outputs of the earlier model while it learns the new task, providing a balance between old and new learning.
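The penalty idea behind EWC can be sketched in a few lines. The quadratic form below, half of a strength `lam` times the importance-weighted squared distance from the old parameters, follows the usual statement of EWC, but the rest is an assumption made for illustration: the new-task loss is a toy quadratic, the importance values in `fisher` are invented rather than estimated from data, and `train_new_task` is a hypothetical helper.

```python
def ewc_penalty(params, anchor, fisher, lam):
    """0.5 * lam * sum_i fisher[i] * (params[i] - anchor[i])**2."""
    return 0.5 * lam * sum(
        f * (p - a) ** 2 for p, a, f in zip(params, anchor, fisher)
    )


def train_new_task(params, target, anchor, fisher, lam, lr=0.01, steps=2000):
    """Gradient descent on a toy new-task loss plus the EWC penalty.

    Toy new-task loss (an assumption): sum_i (params[i] - target[i])**2.
    """
    params = list(params)
    for _ in range(steps):
        for i in range(len(params)):
            g_new = 2.0 * (params[i] - target[i])              # new-task gradient
            g_pen = lam * fisher[i] * (params[i] - anchor[i])  # penalty gradient
            params[i] -= lr * (g_new + g_pen)
    return params


anchor = [1.0, 1.0]     # weights after the old task
fisher = [10.0, 0.01]   # hypothetical importances: weight 0 matters, weight 1 barely
target = [-1.0, -1.0]   # where the new task alone would pull both weights

with_ewc = train_new_task(anchor, target, anchor, fisher, lam=5.0)
without_ewc = train_new_task(anchor, target, anchor, fisher, lam=0.0)

print("with EWC:   ", [round(p, 3) for p in with_ewc])
print("without EWC:", [round(p, 3) for p in without_ewc])
```

In this toy setting the important weight stays close to its old value while the unimportant one is free to move, which is the trade-off the penalty is meant to create. A real implementation would estimate the importances from gradients on the old task's data.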

Rehearsal methods employ a different strategy by periodically revisiting past experiences. These techniques retain samples from previously learned tasks and mix them into training for new tasks, so that the model keeps seeing examples of what it already knows. Experience Replay reintroduces stored examples from earlier tasks, while Generative Replay trains a generative model to produce synthetic examples that stand in for the old data. Both approaches introduce additional computational overhead and complexity in managing the rehearsal data.
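A rehearsal buffer can be sketched as a fixed-size store that is sampled alongside each new batch. The version below uses reservoir sampling so that every example seen so far has an equal chance of being kept. That choice, along with the class name `ReplayBuffer` and the helper `mixed_batch`, is an assumption for illustration, since the text does not prescribe a particular buffer policy.

```python
import random


class ReplayBuffer:
    """Fixed-capacity store of past examples, filled by reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        """Keep each example seen so far with equal probability."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            slot = self.rng.randrange(self.seen)
            if slot < self.capacity:
                self.items[slot] = example

    def sample(self, k):
        """Draw up to k stored examples without replacement."""
        return self.rng.sample(self.items, min(k, len(self.items)))


def mixed_batch(new_examples, buffer, replay_k):
    """Combine new-task examples with replayed old examples."""
    return list(new_examples) + buffer.sample(replay_k)


buffer = ReplayBuffer(capacity=5)
for i in range(100):                       # stream of old-task examples
    buffer.add(("task_a", i))

new_examples = [("task_b", 0), ("task_b", 1), ("task_b", 2)]
batch = mixed_batch(new_examples, buffer, replay_k=2)
print(batch)
```

The storage limit mentioned in the text shows up here as `capacity`: a smaller buffer costs less memory but represents earlier tasks more coarsely.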

Memory-augmented networks represent another innovative approach to address catastrophic forgetting. By utilizing external memory resources, these networks can store and retrieve information from past tasks more effectively. Techniques such as Neural Turing Machines or Differentiable Neural Computers enable models to access stored knowledge dynamically, allowing for the efficient retention of learned information across multiple tasks.
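The retrieval step in such networks is often content-based: a query is compared with every memory slot, and the result is a similarity-weighted blend of the stored contents. The sketch below is a simplified stand-in for that idea, not the actual read mechanism of a Neural Turing Machine or Differentiable Neural Computer. The separate `keys` and `values` lists, the sharpness parameter `beta`, and the function name `read_memory` are all assumptions made for the example.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def read_memory(keys, values, query, beta=5.0):
    """Soft read: softmax over scaled cosine similarities, then a weighted sum."""
    scores = [beta * cosine(k, query) for k in keys]
    top = max(scores)                                # subtract max for stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(values[0])
    read = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, read


# Two hypothetical memory slots, one per earlier task.
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0], [20.0]]

weights, read = read_memory(keys, values, query=[0.9, 0.1])
print("read weights:", [round(w, 3) for w in weights])
print("read vector: ", [round(r, 3) for r in read])
```

Because the query is close to the first key, most of the read weight falls on the first slot. A larger `beta` would make the read more selective.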

While each of these methods has shown promise in mitigating catastrophic forgetting, it is essential to recognize their limitations. Regularization methods may struggle with task similarity, rehearsal can be limited by storage capacity, and memory-augmented networks may introduce complexity. Therefore, a combination of these strategies is often recommended to enhance the effectiveness of continual learning systems.

Memory Consolidation in Humans vs. Computers

Memory consolidation is a crucial aspect of how both humans and computers process and retain information over time. In humans, this process is largely influenced by various biological mechanisms, including the roles of the hippocampus and neocortex. Sleep plays a part as well: slow-wave sleep (SWS), a stage of deep sleep, is thought to be important for transferring information from short-term to long-term memory. This biological phenomenon can provide valuable insights for developing algorithms that address catastrophic forgetting in artificial neural networks.

Humans utilize a combination of explicit and implicit memory systems, aiding in the retention of new information by integrating it with pre-existing knowledge. This integration process allows for a more resilient memory structure, which is less susceptible to degradation. Conversely, many machine learning models, particularly neural networks, often struggle to retain previously learned information when presented with new data. This challenge, known as catastrophic forgetting, impedes their ability to build on past experiences.

Inspired by human memory consolidation, several techniques from neuroscience can be adapted for artificial systems. For instance, concepts such as synaptic consolidation—where frequent use of certain neural connections strengthens them—can be emulated in algorithms. Techniques like rehearsal strategies or memory replay, where previously learned tasks are revisited periodically, can help mitigate the loss of information. Additionally, incorporating mechanisms that mimic biological memory, such as forgetting curves, could allow deep learning models to prioritize essential information while gradually fading less critical data.

By establishing a deeper understanding of human memory processes and their biological foundations, researchers can devise more effective strategies to enhance memory retention in computers, ultimately paving the way for advancements in continual deep learning. The integration of neuroscience principles into algorithm design represents a promising frontier in addressing the challenges posed by catastrophic forgetting.

Real-World Applications and Implications

Catastrophic forgetting poses significant challenges across various domains in continual deep learning, particularly in real-world applications such as robotics, autonomous systems, and personalized recommendation systems. In robotics, for instance, continual learning is essential for adaptive behavior in dynamic environments. When robots are trained on sequential tasks, they often exhibit detrimental effects due to forgetting previously learned knowledge. This phenomenon compromises their ability to execute tasks effectively, especially in unpredictable scenarios where adaptability is crucial.

Similarly, in autonomous systems like self-driving cars, the reliability of models is paramount. These vehicles must learn from diverse and evolving datasets as they navigate through different driving conditions and environments. However, if an autonomous system experiences catastrophic forgetting after updating its model with new data, it may result in poor decision-making capabilities. Such failures not only affect operational efficiency but also pose safety risks, making it imperative to develop robust strategies that mitigate forgetting.

In the realm of personalized recommendation systems, catastrophic forgetting can lead to suboptimal recommendations. As these systems learn user preferences over time, forgetting critical past interactions can adversely affect user satisfaction and engagement. For example, if a streaming service forgets a user’s previously watched genres after an update, it may fail to suggest relevant content, frustrating the user and diminishing their overall experience. Addressing catastrophic forgetting is therefore vital for enhancing the personalization and effectiveness of these systems.

Research further highlights the impact of catastrophic forgetting on performance and innovation. Work on continual learning frameworks suggests that enhancing memory retention can improve the stability and performance of deep learning models in demanding applications. Strategies such as experience replay and regularization have shown promise in counteracting the effects of forgetting, leading to more resilient and intelligent systems.

The Future of Continual Learning Research

The future of continual learning research is poised for exciting developments as scholars and practitioners seek to mitigate the challenges associated with catastrophic forgetting. One emerging trend is the integration of self-supervised learning techniques, which can enhance the ability of neural networks to learn more flexibly and robustly from diverse data sources. Self-supervised learning provides a framework where models can leverage unlabeled data for training purposes, significantly enriching the learning experience and potentially diminishing the adverse effects of forgetting.

Moreover, researchers are exploring hybrid models that combine traditional supervised learning with unsupervised and self-supervised methodologies. These hybrid architectures allow for more effective parameter sharing and finer control over model training, thus fostering retention of previously learned information. Such an approach could substantially improve the performance of deep learning models in environments where data is continuously evolving.

Additionally, research may delve into enhanced memory mechanisms, which would enable models to store and recall critical information more effectively. By using techniques such as episodic memory and external memory networks, continual learning systems can reinforce their learning without succumbing to the limitations of overfitting or catastrophic forgetting. This focus on memory may lead to more robust architectures capable of enduring the challenges posed by real-world applications.

Collaboration between various disciplines, such as neuroscience and cognitive science, will likely yield insights that could further inform the development of continual learning frameworks. Understanding the underlying mechanisms of human learning may provide valuable lessons for creating more resilient artificial intelligence systems.

In essence, the future of continual learning research hinges on innovative methodologies that address the dual challenge of learning from new data and preserving previously acquired knowledge. By embracing novel strategies, researchers can pave the way for more adaptive and intelligent systems capable of working seamlessly in dynamic environments.

Conclusion

In this article on catastrophic forgetting in continual deep learning, we have examined the core elements that contribute to the phenomenon. Catastrophic forgetting occurs when a neural network trained on a series of tasks fails to retain knowledge from earlier tasks after being exposed to new data. This challenge represents a significant obstacle in the development of adaptive and intelligent AI systems, hindering their ability to accumulate knowledge over time.

We highlighted various strategies that researchers are using to mitigate the effects of catastrophic forgetting. Regularization methods such as elastic weight consolidation, rehearsal methods such as experience replay, and memory-augmented networks have shown promise in enhancing model retention capabilities. It is crucial for researchers and practitioners in machine learning to address these issues head-on to foster the evolution of continually learning models that can maintain high performance across multiple tasks without sacrificing previously acquired knowledge.

The ongoing pursuit to resolve catastrophic forgetting also opens avenues for further exploration. Future research could delve into the synergy between cognitive processes in humans and artificial systems, aiming to draw parallels that bolster understanding and evolution in AI frameworks. Moreover, studying how different architectures might inherently resist forgetting could pave the way for innovative solutions.

Encouraging a dialogue among AI researchers is integral to creating a comprehensive approach to this issue. Continued collaboration, knowledge sharing, and experimentation are likely to contribute to breakthroughs in this field. By addressing catastrophic forgetting, we can enhance the capabilities of artificial intelligence, leading to systems that are not only intelligent but also resilient and adaptive in an ever-evolving environment.