Introduction to Global Hallucinations

Global hallucinations, particularly in the fields of artificial intelligence (AI) and machine learning (ML), refer to instances where AI systems generate outputs that are factually incorrect, misleading, or entirely fabricated. This phenomenon occurs when the algorithms make unfounded assumptions or draw inferences that deviate significantly from reality. As AI technology continues to advance and permeate various sectors, including healthcare, finance, and media, understanding the implications of global hallucinations becomes essential.

The reliability of AI-generated content is deeply affected by these hallucinations, as erroneous data can lead to flawed decision-making processes. For instance, in healthcare, inaccurate diagnostic suggestions from AI models can result in misdiagnoses that may jeopardize patient safety. In the finance sector, wrong interpretations of market indicators can lead to poor investment decisions, affecting not only organizations but also individual investors. In media, hallucinated information can spread misinformation, potentially damaging reputations and trust in journalistic integrity.

Addressing the issue of global hallucinations is crucial for the successful integration of AI into these critical sectors. Without effective mitigation strategies, the drift toward accepting AI outputs without verification can undermine progress and create significant risks. Both developers of AI tools and users must be aware of the potential for hallucinations and take proactive steps to ensure the accuracy and dependability of AI outputs. By fostering an environment that emphasizes validation and cross-referencing of AI-generated content, we can work towards minimizing the occurrences of global hallucinations and enhancing the overall credibility of AI applications.

Understanding Chain-of-Verification

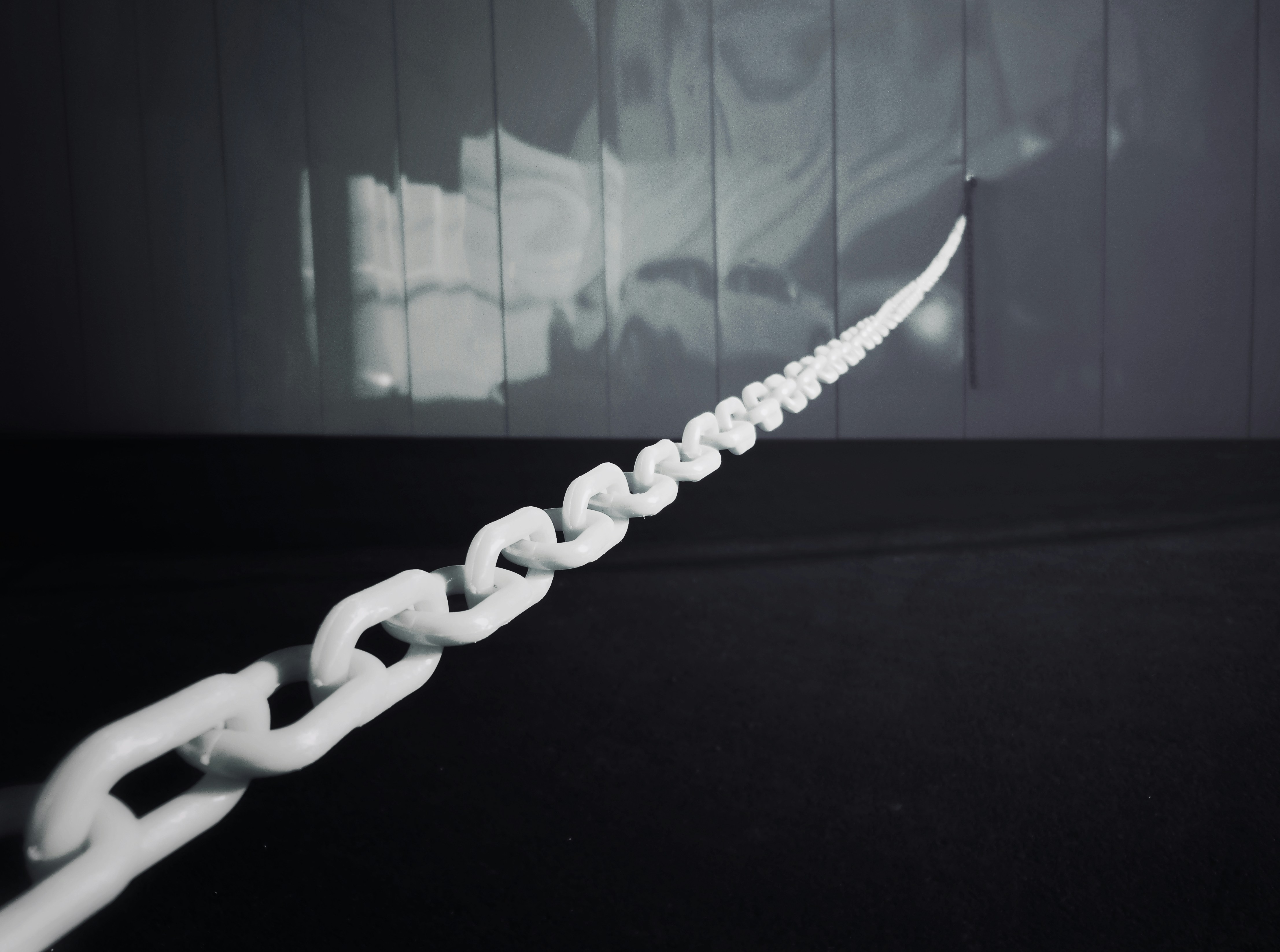

The concept of chain-of-verification serves as a critical framework within information systems, aiming to ensure the reliability and accuracy of data. This approach is particularly relevant in the context of artificial intelligence, where trust in data quality is fundamental. At its core, chain-of-verification operates by establishing a systematic process for validating information through various levels of scrutiny. Each link in this chain plays a vital role in the overall verification process.

One primary principle of chain-of-verification is evidence verification, which requires the assessment and endorsement of data by different sources or authorities. This involves collecting information through multiple means, such as documentation, eyewitness accounts, or other credible sources. By cross-referencing these inputs, information systems can cultivate a more holistic view of the data at hand. The integrity of the data is enhanced through this meticulous process, where each piece is compared and contrasted to confirm its authenticity.
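The cross-referencing step described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function name `cross_reference`, the `min_agreement` threshold, and the sample source names are all assumptions made for the example.

```python
from collections import Counter


def cross_reference(reports, min_agreement=2):
    """Return the value enough sources agree on, or None if there is no clear winner.

    `reports` maps a (hypothetical) source name to the value that source
    gives for a single claim.
    """
    if not reports:
        return None
    ranked = Counter(reports.values()).most_common(2)
    top_value, top_votes = ranked[0]
    if top_votes < min_agreement:
        return None  # not enough independent confirmation
    if len(ranked) > 1 and ranked[1][1] == top_votes:
        return None  # two values are tied, so nothing is confirmed
    return top_value


# Illustrative inputs only: three sources reporting the year of an event.
reports = {
    "archive_document": "1998",
    "eyewitness_account": "1998",
    "secondary_summary": "1999",
}
confirmed = cross_reference(reports)
```

A claim is accepted only when several sources agree and no competing value has equal support; anything else is left unconfirmed rather than guessed.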

Another key aspect of the chain-of-verification is the commitment to rigorous validation protocols. Establishing a set of criteria for evaluating the sources of information ensures that only those meeting certain standards are utilized in the decision-making process. Maintaining a consistent methodology for validation is imperative, as it prevents the propagation of falsehoods, which can lead to misinformation and, consequently, global hallucinations in AI outputs.
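As a rough sketch of such source criteria, the snippet below filters candidate sources before they reach any decision step. The `Source` fields (`peer_reviewed`, `age_days`) and the one-year cutoff are hypothetical criteria chosen for illustration; a real protocol would define its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    peer_reviewed: bool  # assumed criterion: has the source been reviewed?
    age_days: int        # assumed criterion: how old is the information?


def meets_criteria(source, max_age_days=365):
    """Apply the same fixed standard to every source."""
    return source.peer_reviewed and source.age_days <= max_age_days


def admissible_sources(sources, max_age_days=365):
    """Keep only the sources that meet the validation criteria."""
    return [s for s in sources if meets_criteria(s, max_age_days)]


candidates = [
    Source("journal_article", peer_reviewed=True, age_days=120),
    Source("anonymous_post", peer_reviewed=False, age_days=3),
    Source("old_report", peer_reviewed=True, age_days=2000),
]
accepted = [s.name for s in admissible_sources(candidates)]
```

Keeping the criteria in one function means every source is judged by the same rule, which is the consistency the paragraph above calls for.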

In this way, the chain-of-verification not only reinforces the accuracy of data but also contributes to a broader sense of trust within information systems. With ongoing scrutiny and cross-referencing, AI systems can derive insights from data that are not only reliable but also reflect a greater understanding of the complexities inherent within vast information landscapes.

The Relationship Between Verification and Hallucinations

Hallucinations, meaning AI outputs that are inaccurate or entirely fabricated, present a significant challenge in AI development. Verification processes play a crucial role in reducing them by checking that the information an AI system provides is accurate, reliable, and grounded in valid data. The connection between the two becomes clear when examining how robust verification mechanisms function and how they affect AI outputs.

Verification entails a systematic approach to evaluating the responses generated by AI models against established datasets and factual information. By implementing a rigorous verification framework, discrepancies and inaccuracies in outputs can be identified and rectified. This process not only enhances the quality of information that users receive but also builds trust in AI systems, diminishing the likelihood of users making decisions based on unreliable data. In the absence of robust verification, AI systems may produce outputs that contribute to misinformation, potentially leading users to erroneous conclusions or actions.
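One simple way to picture this check is to compare each model answer with a trusted reference set and label the result. The sketch below assumes exact matching after light normalization, which is far cruder than a production system would use; the function names and sample data are illustrative assumptions.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(text.lower().split())


def verify_answers(answers, reference):
    """Label each answer as 'verified', 'contradicted', or 'unverifiable'.

    `answers` and `reference` both map a question to an answer string.
    """
    results = {}
    for question, answer in answers.items():
        if question not in reference:
            results[question] = "unverifiable"  # no ground truth to check against
        elif normalize(answer) == normalize(reference[question]):
            results[question] = "verified"
        else:
            results[question] = "contradicted"  # flag for correction or review
    return results


# Illustrative data only.
reference = {"capital of France": "Paris", "boiling point of water at sea level": "100 C"}
answers = {
    "capital of France": "  paris ",
    "boiling point of water at sea level": "90 C",
    "tallest building in 2090": "Unknown Tower",
}
results = verify_answers(answers, reference)
```

Separating "contradicted" from "unverifiable" matters: the first can be corrected from the reference, while the second should be surfaced to the user as unchecked rather than silently trusted.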

Psychological factors also play an essential role in this relationship. Individuals often place trust in information presented by AI, sometimes viewing it as an authoritative source. When AI generates hallucinated responses, users may consequently act upon this flawed information, leading to detrimental results. By employing a thorough verification process, developers can reduce reliance on hallucinated outputs, ensuring users make decisions grounded in verified and accurate data. This not only contributes to safer decision-making but also fosters a greater understanding of how information reliability affects user judgment.

Case Studies: Successful Implementation of Chain-of-Verification

Various organizations across different sectors have successfully implemented a chain-of-verification system, significantly reducing the incidence of global hallucinations and improving operational effectiveness. One prominent example is a leading media organization that adopted a rigorous chain-of-verification process for news reporting. By instituting a multi-tiered verification system, the organization ensured that every piece of information went through fact-checkers, editors, and legal teams before being published. This initiative not only enhanced the credibility of their news reports but also fostered greater trust among their audience. The implementation resulted in a measurable decrease in misinformation incidents, thereby showcasing the efficacy of a robust verification framework.

Another notable case is a renowned academic institution that faced challenges regarding the reliability of research data used in public health communications. To address this, they established a comprehensive chain-of-verification process for all research outputs. This included unifying data collection methods, employing peer review at multiple stages, and utilizing automated systems to flag discrepancies in findings. The result was a marked enhancement in data integrity, leading to improved public trust in the research outcomes disseminated by the university and its affiliates.

In the corporate sector, a multinational technology company introduced a chain-of-verification for its product quality assurance protocols. By employing a thorough tracking system for product materials, designs, and testing phases, the company could quickly identify and rectify potential sources of errors. This proactive approach not only mitigated the risk of global hallucinations in product information but also improved customer satisfaction ratings, as stakeholders felt more confident in the reliability of the company’s products. The successful implementation of these chain-of-verification systems illustrates the tangible benefits they bring in enhancing operational effectiveness and fostering public trust across various industries.

Challenges in Implementing Chain-of-Verification

Implementing a chain-of-verification system brings several challenges that organizations must address to ensure its success. One significant hurdle is the resistance to change found within various stakeholders. Employees and management alike may be accustomed to existing procedures and hesitant to adopt new verification processes. This resistance can stem from a lack of understanding of the benefits of chain-of-verification, misconceptions about its complexity, or apprehension about job security due to technology integration.

Moreover, technological limitations can pose obstacles in the successful deployment of these systems. Many organizations may lack the infrastructure needed to support sophisticated verification mechanisms. This includes both hardware and software components, which are essential for processing and storing data securely. As technology rapidly evolves, keeping pace with new advancements becomes crucial, yet challenging, particularly for small and medium-sized enterprises lacking resources.

The complexity inherent in the verification processes themselves cannot be overstated. Designing a reliable chain-of-verification mechanism requires balancing multiple factors, including accuracy, speed, and cost-effectiveness. This complexity can deter organizations from initiating such a project, and those that do proceed may underestimate the time and effort required for implementation. Furthermore, integrating chain-of-verification with existing systems demands thorough planning and consideration of potential disruptions to ongoing operations.

Lastly, a significant barrier is the need for skilled personnel to manage and execute chain-of-verification. These systems require not only technical expertise but also training in best practices and methodologies related to verification processes. Organizations often face challenges in recruiting or upskilling employees who possess the necessary qualifications. In light of these hurdles, understanding the multifaceted challenges in implementing chain-of-verification is essential for devising effective strategies to address them and enhance overall reliability in verifying data.

Technological Innovations Supporting Verification Processes

The landscape of verification processes has been significantly transformed by the advent of various technological advancements. Among these, artificial intelligence (AI) has emerged as a key player. AI tools enhance the efficiency of verification systems by automating the data comparison and validation processes. Machine learning algorithms are particularly beneficial, allowing systems to learn from historical data, thereby increasing accuracy in recognizing patterns that indicate verified or unverified information. The potential of AI in terms of scalability and adaptability holds promising prospects for chain-of-verification systems.

Moreover, blockchain technology has gained traction as a way to protect data integrity throughout the verification journey. By providing a decentralized, append-only ledger, blockchain allows each verification step to be recorded transparently, fostering trust among the parties involved. This is especially important in combating global hallucinations, where misinformation can spread rapidly. Because the ledger is replicated across many participants, it is very difficult for any single entity to alter stored records without detection, which strengthens the entire verification process. A ledger only preserves what was recorded, however; it does not by itself establish that the recorded information was accurate.
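The tamper-evidence idea behind such a ledger can be illustrated with a simple hash chain. Note the assumptions: this is a single-process sketch using Python's standard `hashlib` and `json` modules, not a blockchain, since it has no decentralization or consensus; the function names and record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record, prev_hash):
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_step(ledger, record):
    """Append a verification step, linking it to the previous entry."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"record": record, "prev": prev_hash, "hash": _digest(record, prev_hash)})


def is_intact(ledger):
    """Recompute every hash; any edited record or broken link returns False."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append_step(ledger, {"step": "fact_check", "result": "pass"})
append_step(ledger, {"step": "editor_review", "result": "pass"})
intact_before = is_intact(ledger)

ledger[0]["record"]["result"] = "fail"  # simulate tampering with an earlier step
intact_after = is_intact(ledger)
```

Because each entry's hash covers the previous entry's hash, changing any earlier record invalidates the chain from that point on. A real blockchain adds replication and consensus so that no single party can quietly recompute the hashes.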

In recent years, automated verification methods have come to the forefront, streamlining processes that were traditionally manual. These systems employ advanced algorithms to cross-check data against multiple sources in real-time, significantly reducing the time required for verification. Automation not only enhances accuracy but also frees up human resources for more complex decision-making tasks. Furthermore, the integration of AI with automated verification systems creates a synergistic effect, leading to higher accuracy rates and faster results.

The future of verification processes is poised for further innovations as technology continues to evolve. With enhancements in AI, blockchain, and automation, we can anticipate a more sophisticated approach to verifying information, ultimately leading to a reduction in global hallucinations and increased reliability across various fields.

Ethical Considerations in Verification Practices

The advent of digital media has underscored the critical importance of verification practices, particularly in mitigating global hallucinations, which in this setting take the form of inaccurate or misleading information spreading rapidly across platforms. To navigate the intricate landscape of verification, organizations must consider the ethical implications that arise from their verification methods.

One primary concern is the potential bias inherent in data selection. Verification processes may unintentionally favor certain narratives or perspectives over others, leading to a skewed representation of reality. Organizations must be vigilant in their approach, ensuring that the data they choose to verify is comprehensive and inclusive. This responsibility extends not only to the selection of data sources but also to the diversity of viewpoints represented within those sources.

Furthermore, the responsibility of organizations extends beyond mere verification to encompass the obligation to ensure accuracy in data dissemination. In a world increasingly reliant on information, organizations must adopt a proactive stance in their verification practices, employing rigorous methodologies that prioritize factual accuracy. The implications of misinformation are profound, as erroneous data can influence public opinion, policy decisions, and individual behaviors.

To navigate these challenges, a strong ethical framework is essential. This framework should guide verification practices by establishing clear guidelines for data selection, evaluation, and dissemination. Organizations should foster transparency in their methodologies, allowing scrutiny and accountability. By addressing ethical considerations seriously, they can diminish bias, enhance verification accuracy, and foster trust among audiences.

In conclusion, ethical considerations play a pivotal role in effective verification practices. By critically examining data selection, ensuring organizational responsibility, and combatting misinformation, stakeholders can create an environment grounded in accuracy and equity, ultimately reducing the prevalence of global hallucinations.

Future Perspectives: The Evolution of Verification in AI

As artificial intelligence (AI) continues to advance, the methods of verifying its outputs and processes are likely to evolve significantly over the next decade. The increasing integration of AI into societal functions—from healthcare to law enforcement—places heightened importance on developing robust verification systems. This emphasis stems from the need for accuracy and accountability, especially as organizations grapple with the potential repercussions of AI-generated content.

A key trend shaping the future of verification is the demand for greater transparency and explainability in AI systems. Stakeholders, including consumers and regulatory bodies, are increasingly calling for clarity regarding how AI systems reach conclusions. This shift will likely prompt the adoption of verification practices that not only confirm correctness but also elucidate the underlying decision-making processes. Consequently, AI developers will be required to implement verification protocols that focus on generating understandable explanations alongside verified outputs.

Moreover, as AI technologies develop, so too must the regulatory frameworks governing their use. Anticipated changes in legislation surrounding data privacy and ethical standards may mandate stricter verification processes. Regulatory agencies could introduce guidelines ensuring that AI systems undergo rigorous verification protocols designed to minimize biases and prevent the spread of misinformation. This potential alignment of regulatory requirements and verification practices will encourage organizations to prioritize ethical considerations as integral components of the AI development lifecycle.

The growing intersection of AI with other technologies, such as blockchain, may further enhance verification capabilities. By utilizing decentralized verification methods, stakeholders could create more resilient systems that are less susceptible to tampering or misinterpretation. The evolution of verification processes in AI will undoubtedly require a collaborative approach, uniting developers, ethicists, and regulators to address the complex challenges that lie ahead.

Conclusion: The Path Forward

Chain-of-verification plays a pivotal role in combating global hallucinations in AI systems, and the key insights discussed here bear repeating. Throughout this blog post, we have established that structured verification processes are indispensable for enhancing the reliability and trustworthiness of artificial intelligence outputs. Such validation not only minimizes instances of unsubstantiated information but also strengthens the overall integrity of AI systems.

Moreover, as technology continues to evolve rapidly, the mechanisms we employ for verification and validation must also adapt. Continuous innovation in verification methodologies can provide robust frameworks that preemptively address potential inaccuracies before they manifest. In this light, fostering collaboration among AI researchers, developers, and ethical oversight organizations remains crucial. This collective diligence will ensure the development of AI systems that are not only advanced but also responsible in their information dissemination.

It is also vital that we prioritize education and awareness regarding the significance of verification in AI. Encouraging stakeholders, from developers to end-users, to engage with and understand the concept of chain-of-verification can lead to broader acceptance and application of these practices. Only through mutual understanding and proactive measures can we cultivate an environment where AI technologies align more closely with human expectations and ethical standards.

Ultimately, by remaining vigilant and dedicated to refining practices surrounding chain-of-verification, we can significantly reduce global hallucinations and build AI systems that users can trust. The need for trustworthy AI is paramount, and through shared efforts, we can pave the way for a future where artificial intelligence serves as a reliable partner in our daily lives.