Introduction

In machine learning, interpolation plays a pivotal role in the relationship between training and test error. Classically, interpolation refers to estimating unknown values from known values within a given range; in the study of generalization, a model is said to interpolate when it fits its training data exactly, driving training error to (near) zero. This concept becomes particularly relevant when analyzing how well a model generalizes from its training data to unseen instances, because test error can behave in unexpected ways as a model approaches and then passes the point of exact fit.

A notable observation is that test error can behave non-monotonically as model capacity (or, in some settings, training time) grows. Specifically, test error often peaks near the interpolation threshold, the point at which the model has just enough capacity to fit the training set exactly, and then decreases again as capacity grows past that point. The threshold therefore marks a critical juncture: beyond it, the model fits the training data perfectly yet can still improve on unseen data.

The significance of this threshold extends beyond academic curiosity; understanding how test error behaves around it is crucial for practitioners who aim to develop robust machine learning models. Many algorithms excel at learning the training data, but it is the ability to generalize, that is, to maintain low test error, that determines the effectiveness of a machine learning solution in real-world applications. In the sections that follow, we delve deeper into the mechanics behind the interpolation threshold and explore its implications for practitioners and researchers alike.

Defining Interpolation in Machine Learning

In its classical, numerical sense, interpolation is the estimation of unknown values within the range of a discrete set of known data points. In machine learning the term has taken on a related meaning: a model interpolates when its predictions pass exactly through every training point, so that training error is zero. Both senses matter in predictive modeling, where the goal is to make accurate predictions for new inputs, most of which fall between or near the observed examples.

At the core of interpolation is the principle of fitting data. Different algorithms approach this in different ways, and the choice can significantly affect the performance of the model. Common interpolation techniques include linear interpolation, polynomial interpolation, and spline interpolation, each of which constructs a continuous function that passes through the known data points. Linear interpolation connects adjacent data points with straight lines, polynomial interpolation fits a single polynomial through all of the points, and spline interpolation joins low-degree polynomial pieces smoothly at the data points.
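As a small illustration of the first two techniques, the sketch below interpolates four made-up points with NumPy. The data values and the helper names `linear_interp` and `poly_interp` are assumptions chosen for the example, not part of any particular workflow.

```python
import numpy as np

# Hypothetical known data points (illustrative values only).
x_known = np.array([0.0, 1.0, 2.0, 3.0])
y_known = np.array([1.0, 3.0, 2.0, 5.0])


def linear_interp(x_new):
    """Piecewise-linear interpolation: straight lines between neighbors."""
    return np.interp(x_new, x_known, y_known)


# A polynomial of degree n-1 passes exactly through n distinct points.
poly_coeffs = np.polyfit(x_known, y_known, deg=len(x_known) - 1)


def poly_interp(x_new):
    """Polynomial interpolation: one smooth curve through every point."""
    return np.polyval(poly_coeffs, x_new)


x_query = np.array([0.5, 1.5, 2.5])
print("linear    :", linear_interp(x_query))
print("polynomial:", poly_interp(x_query))
```

Both functions reproduce the known points exactly and differ only in what they predict between them, which is the sense in which two interpolating models can generalize differently.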

The significance of interpolation arises particularly when data are sparse or noisy. Fitting every training point exactly does not by itself guarantee good generalization: an interpolating model may capture the underlying pattern, or it may bend itself around noise in individual points. Which of the two happens depends on how the model behaves between the training points, and this is precisely what matters when it must operate on unseen data. Understanding these nuances is therefore vital for anyone engaging with machine learning principles.

The Interpolation Threshold Explained

The interpolation threshold is the point at which a model has just enough capacity to fit its training data exactly. Below the threshold (the underparameterized regime), the model cannot match every training point and training error remains positive. At or above it (the overparameterized regime), training error reaches zero. For linear regression, the threshold occurs when the number of parameters equals the number of training examples; for other model families its location also depends on the data and the training procedure. This usage should not be confused with the classical distinction between interpolation, predicting within the range of the observed data, and extrapolation, predicting outside it. Understanding the threshold is essential for evaluating how a model behaves with varying complexity.

As models become increasingly complex, they fit the training data ever more precisely, and a low training error can therefore be a misleading signal. Right at the interpolation threshold, the model typically has only one way to fit the training data exactly, so it is forced to reproduce the noise in the labels, and test error tends to peak. With capacity well beyond the threshold, many exact fits exist, and the training procedure can settle on a smoother one that is more robust to noise in the training set.
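The following sketch makes the threshold concrete for the simplest case, reading it as the smallest capacity at which training error reaches zero. It fits ordinary least squares to purely random labels using the first `p` columns of a random feature matrix; the sizes and the helper name `train_mse` are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_train = 20

# Random features and pure-noise labels: there is no pattern to learn,
# so any reduction in training error is memorization.
X = rng.normal(size=(n_train, 60))
y = rng.normal(size=n_train)


def train_mse(p):
    """Training MSE of least squares restricted to the first p features."""
    coef, *_ = np.linalg.lstsq(X[:, :p], y, rcond=None)
    return float(np.mean((X[:, :p] @ coef - y) ** 2))


for p in (5, 10, 19, 20, 40):
    print(f"p={p:2d}  train MSE={train_mse(p):.2e}")
```

Under these assumptions, training error stays positive while `p` is below `n_train` and collapses to numerical zero once `p` reaches it, even though the labels are noise. A zero training error therefore says nothing on its own about test error.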

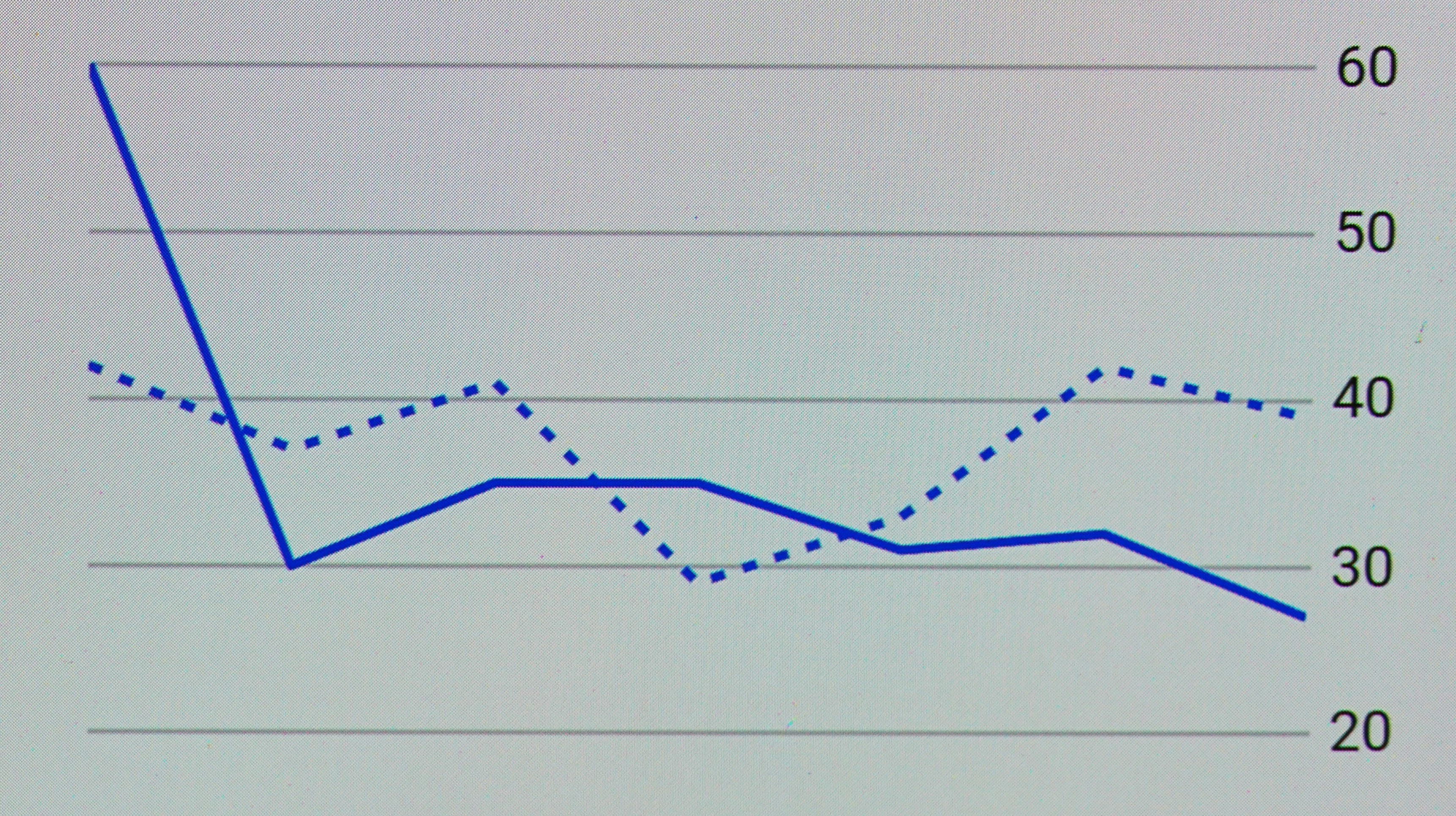

The behavior of a model around the threshold can inform practitioners about its predictive accuracy. As model complexity increases from a low level, test error initially declines because the model captures more of the patterns in the data. As complexity approaches the threshold, overfitting sets in: the model begins to reflect noise rather than underlying trends, and test error rises. Beyond the threshold, test error can fall again. Navigating this behavior requires monitoring both the degree of complexity applied and the resulting error on held-out data.

Ultimately, recognizing the interpolation threshold equips data scientists and statisticians with better insights into model selection and validation. It lays the groundwork for ensuring predictions remain relevant and grounded, contributing to higher overall confidence in applied analytics.

Understanding Test Error

Test error is a critical metric in machine learning that quantifies the performance of a model on unseen data. It is calculated by evaluating the model’s predictions against the actual outcomes in a designated test dataset that was not used during training. This measure is essential for understanding how well the model generalizes to new data, since overfitting or underfitting may not be visible from training performance alone, especially when training on limited datasets.

To compute test error, one chooses a performance metric suited to the task: accuracy, precision, recall, or F1-score for classification, and mean squared error or mean absolute error for regression. By contrasting test error with training error, we can gain insights into the model’s capabilities. Training error reflects the model’s performance on the data it was trained on and is usually lower than the test error, because the model has been fitted to those very samples.

The relationship between training error and test error is central to model evaluation. A significant discrepancy where training error is substantially lower than test error may indicate overfitting. In contrast, both errors being similarly high may suggest that the model is underfitting, implying that it lacks the capacity to learn from the training data effectively. The goal is to minimize both types of error and achieve a balance that ensures the model performs well on unseen data.
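The comparison described above can be sketched in a few lines. The example below computes training and test mean squared error for a least-squares regression on synthetic data and includes a small accuracy helper for the classification case. The data, the 150/50 split, and the function names are assumptions for illustration.

```python
import numpy as np


def mean_squared_error(y_true, y_pred):
    """Regression metric: average squared difference."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))


def accuracy(y_true, y_pred):
    """Classification metric: fraction of labels predicted correctly."""
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))


# Synthetic regression data (hypothetical): linear signal plus small noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + 0.1 * rng.normal(size=200)

# Hold out the last 50 rows; they play no part in fitting.
X_train, y_train = X[:150], y[:150]
X_test, y_test = X[150:], y[150:]

coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)
train_error = mean_squared_error(y_train, X_train @ coef)
test_error = mean_squared_error(y_test, X_test @ coef)

print(f"train MSE = {train_error:.4f}")
print(f"test MSE  = {test_error:.4f}")
print(f"gap       = {test_error - train_error:+.4f}")
```

A gap near zero with both errors low suggests a reasonable fit, a large positive gap points toward overfitting, and two similarly high errors point toward underfitting.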

Evaluating test error is not just about quantifying accuracy; it is a fundamental step toward refining models to improve their predictive capabilities. Understanding the nuances of test error allows practitioners to make informed adjustments and optimizations to their algorithms, ultimately leading to enhanced machine learning systems.

The Importance of Overfitting

Overfitting is a critical concern in the field of machine learning, as it directly influences the performance and generalization abilities of predictive models. When a model becomes overfit, it learns not only the underlying patterns in the training data but also the noise and outliers, which ultimately hinders its effectiveness on new, unseen data. This phenomenon occurs when a model is too complex relative to the amount of training data available; in essence, it begins to memorize rather than generalize.

As model capacity grows toward the interpolation threshold, the model increasingly incorporates irregularities specific to the training set. In the classical picture, test error first drops as the model captures genuine structure and then rises as it begins fitting noise, typically peaking near the threshold itself, where the detrimental impact of overfitting is greatest. Once the model moves well past the threshold, test error can decline again, which is the behavior examined in the sections that follow. In either regime, the ideal is a model that strikes the right balance between bias and variance, minimizing overfitting while maintaining strong performance.

It is essential to monitor overfitting in the model training process to ensure that the focus remains on generalization instead of mere replication of the training set. Techniques such as cross-validation, regularization, and the use of simpler models can be employed to mitigate overfitting. Ensuring that your model only captures the true distribution of the data will not only enhance its performance on the test set but also extend its applicability to real-world scenarios. Therefore, understanding overfitting and its implications is vital for building robust machine learning models capable of accurately predicting outcomes based on new data.
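Two of the techniques mentioned above, regularization and cross-validation, can be combined in a short sketch. The code below selects a ridge penalty by 5-fold cross-validation using only NumPy. The synthetic data, the candidate penalties, and the helper names `ridge_fit` and `kfold_mse` are assumptions for illustration.

```python
import numpy as np


def ridge_fit(X, y, lam):
    """Ridge regression: minimizes ||Xw - y||^2 + lam * ||w||^2."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)


def kfold_mse(X, y, lam, k=5, seed=0):
    """Average validation MSE of ridge regression over k shuffled folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    errors = []
    for i in range(k):
        val = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        coef = ridge_fit(X[train], y[train], lam)
        errors.append(np.mean((X[val] @ coef - y[val]) ** 2))
    return float(np.mean(errors))


# Hypothetical data: 30 features, only the first three carry signal.
rng = np.random.default_rng(1)
X = rng.normal(size=(60, 30))
w_true = np.zeros(30)
w_true[:3] = [2.0, -1.0, 0.5]
y = X @ w_true + rng.normal(size=60)

lambdas = [1e-6, 0.1, 1.0, 10.0, 100.0]
scores = {lam: kfold_mse(X, y, lam) for lam in lambdas}
best_lam = min(scores, key=scores.get)

for lam in lambdas:
    print(f"lambda={lam:<8g} CV MSE={scores[lam]:.3f}")
print("selected lambda:", best_lam)
```

The penalty is chosen on held-out folds, never on the data used for fitting, which keeps the selection focused on generalization instead of replication of the training set.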

Why Does Test Error Drop Post-Interpolation?

The drop in test error beyond the interpolation threshold is an active area of study in machine learning. Past the threshold, many different parameter settings fit the training data exactly, and the reduction in test error is attributed to the training procedure selecting, among these, solutions that generalize comparatively well.

As capacity grows beyond the threshold, the model still fits every training instance, but it gains the freedom to do so with a smoother function that tracks the underlying patterns instead of contorting itself around individual noisy points. The classical bias-variance tradeoff describes a balance between overfitting (low bias, high variance) and underfitting (high bias, low variance), and it accounts for the rise in test error as the threshold is approached. Beyond the threshold, however, analyses of simple models such as linear regression indicate that variance, which peaks near the threshold, can decrease again as capacity grows further, leading to a decrease in test error.

Furthermore, this improvement in generalization can be explained through the lens of regularization, which penalizes excessive complexity. Besides explicit penalties such as weight decay, training procedures can act as implicit regularizers: for linear least squares, for example, gradient descent started from zero converges to the minimum-norm solution among all those that fit the data. Such effects allow the model to retain the flexibility needed to fit the training set while still generalizing beyond it.

The notion of ‘double descent’ captures this phenomenon: as model complexity grows, test error first decreases, then increases as the interpolation threshold is approached, and finally, upon surpassing the threshold, declines a second time. Hence, understanding why test error drops after interpolation is essential not just for practical applications, but also for advancing our theoretical knowledge in statistical learning.
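For reference, the bias-variance tradeoff mentioned above rests on the standard decomposition of expected squared error. The notation here is an assumption introduced for this note: the data follow $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\operatorname{Var}(\varepsilon) = \sigma^2$, $\hat{f}$ is the fitted model, and the expectation is taken over training sets and noise.

```latex
\mathbb{E}\!\left[\bigl(y - \hat{f}(x)\bigr)^2\right]
  = \underbrace{\bigl(\mathbb{E}[\hat{f}(x)] - f(x)\bigr)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\!\left[\bigl(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\bigr)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Test error falls whenever the sum of the first two terms falls, so the relevant question at any capacity is how bias and variance move together, not how either behaves alone.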
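A double descent curve can be reproduced in a toy setting. The sketch below is one simple construction and not the only one: the target depends on 200 Gaussian features, the model sees only the first `p` of them, and it is fitted by minimum-norm least squares via the pseudoinverse. The sizes, noise level, and function name `median_test_error` are assumptions chosen for illustration, and the median over trials is used because errors near the threshold are heavy-tailed.

```python
import numpy as np


def median_test_error(widths, n_train=40, n_test=500, d_total=200,
                      noise=0.5, trials=20, seed=0):
    """Median test MSE of minimum-norm least squares using p features."""
    rng = np.random.default_rng(seed)
    # True coefficients spread over all features, squared norm about 1.
    beta = rng.normal(size=d_total) / np.sqrt(d_total)
    medians = []
    for p in widths:
        errors = []
        for _ in range(trials):
            X_tr = rng.normal(size=(n_train, d_total))
            X_te = rng.normal(size=(n_test, d_total))
            y_tr = X_tr @ beta + noise * rng.normal(size=n_train)
            y_te = X_te @ beta + noise * rng.normal(size=n_test)
            # The pseudoinverse gives ordinary least squares when p < n_train
            # and the minimum-norm interpolating solution when p >= n_train.
            coef = np.linalg.pinv(X_tr[:, :p]) @ y_tr
            errors.append(np.mean((X_te[:, :p] @ coef - y_te) ** 2))
        medians.append(float(np.median(errors)))
    return medians


widths = [5, 10, 20, 40, 80, 200]  # 40 equals n_train: the threshold
curve = median_test_error(widths)

for p, err in zip(widths, curve):
    print(f"p={p:3d}  median test MSE={err:.2f}")
```

In this construction the error at `p = 40`, where the number of features equals the number of training examples, is far larger than at either end of the sweep. How low the second descent goes depends on the noise level and on how the signal is spread across features, so the shape should not be read as universal.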

Case Studies and Examples

The drop in test error beyond the interpolation threshold has been observed in various machine learning applications. One prominent example is image classification with convolutional neural networks (CNNs). Such models often achieve near-zero training error once their capacity (for example, their width) is large enough to fit the training set. Around this point test error tends to peak, particularly when the labels are noisy, and as capacity grows past the interpolation threshold the test error declines again, in some cases to below its level in the underparameterized regime.

Another illustrative case involves regression tasks, such as those arising in financial modeling. Models that predict prices from historical data may exhibit this behavior as additional features or more complex algorithms are employed. Near the interpolation threshold, where the model is just able to fit the training data exactly, test error tends to be at its worst; adding capacity beyond that point can reduce it. This matters in risk assessment and portfolio management, where prediction errors can have significant financial implications.

In natural language processing (NLP), the transition through an interpolation threshold can also be observed. For instance, language models trained on large, diverse datasets can show a marked decrease in test error once their capacity grows beyond a certain point. This is relevant to tasks such as sentence completion or text summarization, where a sufficiently large model generalizes the language patterns in its training data more effectively and so predicts novel examples more accurately.

Hence, monitoring the drop in test error in relation to the interpolation threshold is crucial across multiple domains. Recognizing when this threshold is reached can guide practitioners in optimizing their models, ensuring that they harness the full potential of their learning algorithms, ultimately leading to more reliable predictions in real-world scenarios.

Implications for Model Selection and Evaluation

The drop in test error beyond the interpolation threshold has significant implications for model selection and evaluation strategies. It shows that a model’s capacity relative to the threshold matters: the same model family can generalize poorly near the threshold and well on either side of it. Consequently, practitioners need to understand how models behave around the threshold during both the selection and evaluation phases.

When comparing models, it is therefore important to measure test or validation error across a range of capacities that extends past the interpolation threshold, instead of stopping at the first point where error begins to rise. What ultimately matters is the level of held-out error a model reaches, not the size of the drop. This information can be critical when deciding on the best model for a particular dataset.

Furthermore, it is advisable to use cross-validation when making these comparisons. Stratified sampling helps ensure that each fold reflects the overall distribution of the data, providing a more reliable view of model performance around the threshold. Such practices offer insight into model robustness, particularly in scenarios where refined predictions are critical.

Additionally, practitioners should favor models that not only minimize training error but also perform consistently across a range of capacities. This may include exploring regularization techniques or ensemble methods, which can stabilize predictions near the interpolation threshold. By examining how a model’s error behaves around the threshold, one can achieve a more nuanced understanding of its practical application.

Conclusion

In this article, we have explored the interpolation threshold and its relationship to test error in machine learning models. Understanding how the threshold influences model performance allows practitioners to optimize their approaches, making their predictions more accurate and reliable.

The interpolation threshold marks the point at which a model first fits its training data exactly. Test error tends to peak there and to fall on either side of it. By recognizing this threshold, data scientists and machine learning engineers can make informed decisions about the complexity of the models they employ, choosing a capacity well below or well beyond the threshold as appropriate. This knowledge can lead to a notable decrease in test error, enhancing the overall efficacy of machine learning solutions.

Moreover, the relationship between the interpolation threshold and test error embodies a fundamental principle that can be applied across diverse machine learning applications. Whether dealing with regression tasks or classification problems, a clear grasp of this threshold is crucial for achieving optimal results. Future research should continue to investigate this vital area to refine our understanding and improve methodologies for overcoming challenges associated with test error.

In conclusion, the interplay between the interpolation threshold and test error is a foundational aspect of machine learning that warrants thorough examination. As the field evolves, maintaining awareness of these key parameters will be essential for advancing model accuracy and reliability.