Introduction to Synthetic Data

Synthetic data is a valuable innovation in the field of data science and analytics. It refers to artificially generated data that mimics the statistical characteristics of real-world data without disclosing any personal or sensitive information. This type of data is usually created through algorithmic processes that leverage existing datasets to generate new examples that are useful for training algorithms or conducting analyses.

The generation of synthetic data typically involves a variety of technologies, including machine learning models and statistical methods. Techniques such as Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and other simulation-based approaches are commonly used to create datasets that reflect the underlying patterns in the original data. Once trained to recognize the features and relationships in that data, these models can produce new instances that retain those characteristics while supporting compliance with data protection regulations.
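To make the idea of learning from an existing dataset and then sampling new records concrete, here is a minimal sketch. It deliberately swaps in a much simpler statistical model (a two-column Gaussian fit) in place of a GAN or VAE, and the "real" age and income data, the function names, and all numbers are hypothetical and exist only for illustration.

```python
import math
import random

def fit_gaussian_2d(rows):
    """Estimate means, standard deviations, and correlation of two columns."""
    n = len(rows)
    mx = sum(x for x, _ in rows) / n
    my = sum(y for _, y in rows) / n
    sx = math.sqrt(sum((x - mx) ** 2 for x, _ in rows) / (n - 1))
    sy = math.sqrt(sum((y - my) ** 2 for _, y in rows) / (n - 1))
    cov = sum((x - mx) * (y - my) for x, y in rows) / (n - 1)
    return mx, my, sx, sy, cov / (sx * sy)

def sample_synthetic(params, n, rng):
    """Draw n new rows from the fitted Gaussian; no original row is copied."""
    mx, my, sx, sy, rho = params
    rows = []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        x = mx + sx * z1
        # Mix z1 into the second column so the correlation is preserved.
        y = my + sy * (rho * z1 + math.sqrt(1 - rho ** 2) * z2)
        rows.append((x, y))
    return rows

rng = random.Random(0)

# Hypothetical "real" dataset: age and a correlated income (made-up numbers).
real = []
for _ in range(2000):
    age = rng.gauss(40, 10)
    income = 1000 * age + rng.gauss(0, 8000)
    real.append((age, income))

params = fit_gaussian_2d(real)
synthetic = sample_synthetic(params, 2000, rng)
synth_params = fit_gaussian_2d(synthetic)

print("real correlation:     ", round(params[4], 3))
print("synthetic correlation:", round(synth_params[4], 3))
```

The synthetic rows reproduce the means, spreads, and correlation of the source columns, but nothing more. A model this simple cannot capture nonlinear or multi-modal structure, which is the gap that GANs and VAEs aim to close.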

One of the primary advantages of synthetic data is its ability to address privacy concerns often associated with using traditional data sources. By generating data that does not contain identifiable information, organizations can mitigate risks related to data breaches and comply with stringent regulations, such as the General Data Protection Regulation (GDPR). This makes synthetic data especially appealing for industries handling sensitive information, like healthcare and finance.

Furthermore, synthetic data can be more cost-effective than gathering real-world data. Traditional data collection methods often require significant financial resources, time, and infrastructure. In contrast, once the initial generative models are developed, creating synthetic data can be performed quickly and at a fraction of the cost. As a result, organizations can focus on developing their models and algorithms without the burden of extensive data acquisition efforts.

Understanding Scaling Curves in Data Science

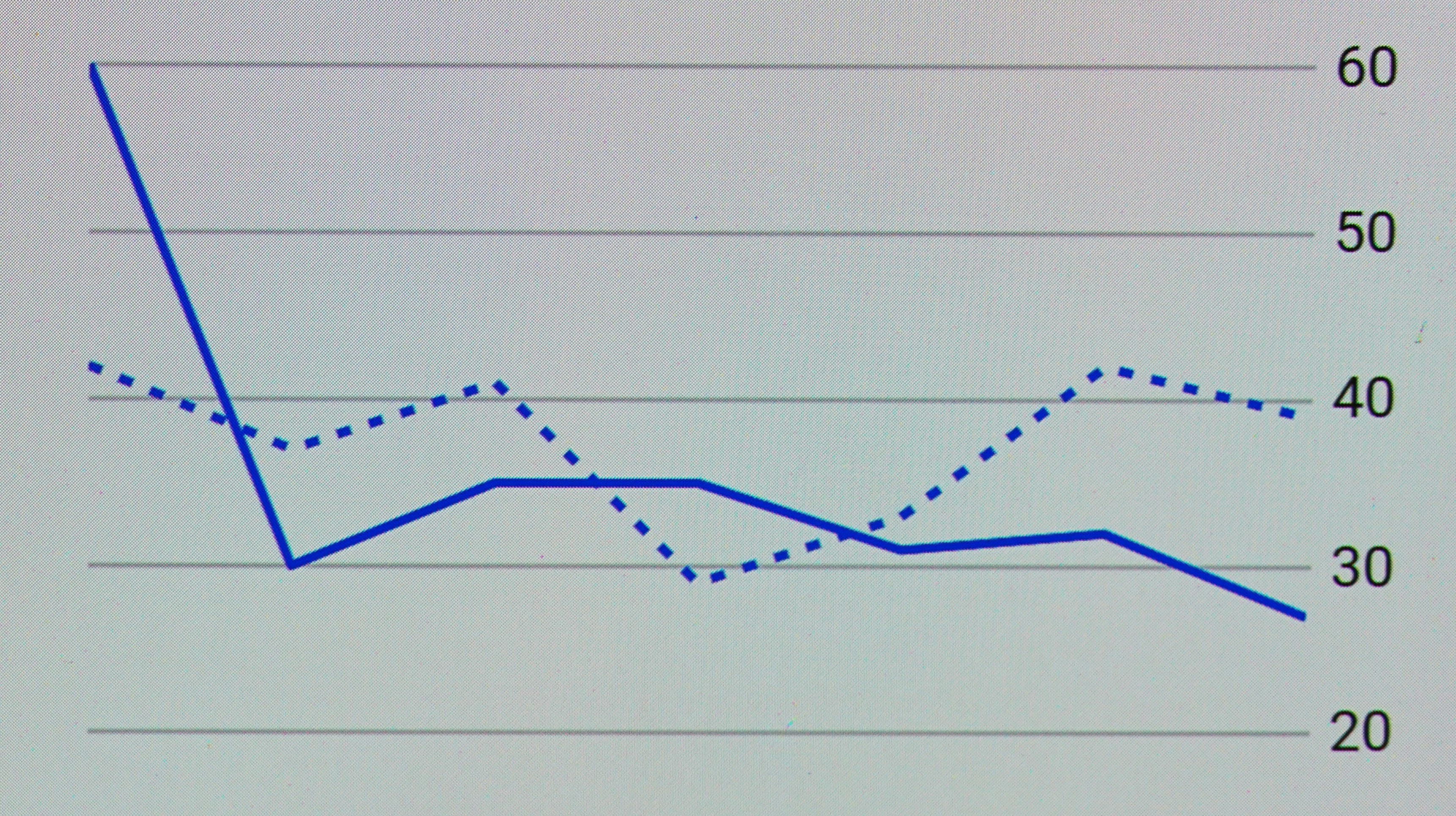

In the field of data science, scaling curves are a critical concept that illustrates the relationship between the volume or quality of data and the performance of algorithms. These curves essentially depict how an increase in data impacts the effectiveness of a given machine learning model. One of the primary observations from scaling curves is the phenomenon of diminishing returns, which suggests that while adding data can improve model performance, the extent of this improvement diminishes after a certain point.

The scaling curves typically demonstrate that, as more data is made available for training machine learning models, the accuracy and predictive performance of these models tend to improve. However, this improvement is not linear; initially, small increases in data volume may lead to significant enhancements in model performance. As the data volume continues to rise, each additional unit of data contributes less to the performance gains of the model, highlighting the principle of diminishing returns.
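The diminishing-returns pattern can be sketched numerically. The example below assumes the curve follows a simple power law, error ≈ a · n^(−b), which is a common modeling choice but not something this post establishes, and the dataset sizes and error values are hypothetical measurements invented for the illustration.

```python
import math

def fit_power_law(sizes, errors):
    """Least-squares fit of log(error) = log(a) - b * log(n); returns (a, b)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(e) for e in errors]
    k = len(xs)
    mean_x = sum(xs) / k
    mean_y = sum(ys) / k
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    a = math.exp(mean_y - slope * mean_x)
    return a, -slope

# Hypothetical validation errors measured at increasing training-set sizes.
sizes = [1_000, 2_000, 4_000, 8_000, 16_000, 32_000]
errors = [0.300, 0.247, 0.203, 0.167, 0.138, 0.113]

a, b = fit_power_law(sizes, errors)

def predicted_error(n):
    return a * n ** (-b)

# Error reduction obtained by doubling the dataset at each size.
gains = [predicted_error(n) - predicted_error(2 * n) for n in sizes]

for n, g in zip(sizes, gains):
    print(f"doubling from {n:>6} rows reduces error by about {g:.4f}")
```

Each doubling costs twice as many rows as the one before it yet buys a smaller absolute improvement. Comparing that shrinking gain with the cost of acquiring the extra data is one way to judge where a project sits on its curve.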

Understanding how scaling curves function is essential for data scientists as they strategize their data collection and model training efforts. By recognizing where their current performance lies on the scaling curve, practitioners can make informed decisions on whether to focus on acquiring more data or enhancing existing data quality. This understanding is paramount when evaluating the cost-effectiveness of data acquisition against performance improvements. Furthermore, it underscores the importance of not only the volume of data but also its quality in influencing algorithm performance.

Ultimately, scaling curves provide vital insights into the complexity and efficiencies of machine learning processes, guiding data scientists in optimizing model performance while strategically leveraging their data resources.

The Role of Synthetic Data in Machine Learning

Synthetic data has emerged as a transformative tool in the field of machine learning, offering the ability to create vast amounts of data that can complement and enhance real-world datasets. Traditional machine learning models rely heavily on the quality and quantity of the data available for training. However, real datasets are often limited by privacy concerns, high data collection costs, or biases that undermine the model’s performance. This is where synthetic data plays a crucial role.

By providing a reliable means to generate data that mimics real-world scenarios, synthetic data can augment existing datasets, thereby improving the overall robustness of machine learning models. For instance, in domains like healthcare or finance, collecting real data can be challenging due to privacy regulations and ethical considerations. Synthetic data enables researchers to train algorithms without compromising sensitive information, allowing for safer experimental environments.

Moreover, the incorporation of synthetic data can help in mitigating various biases that often plague traditional datasets. Many real-world datasets can be skewed or unrepresentative of the broader population, leading to models that perform poorly when applied to diverse scenarios. Using synthetic data, developers can ensure that their training datasets are more balanced and encompass a wider range of conditions, thus enhancing the model’s applicability and fairness.
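One simple way to rebalance a skewed dataset is to synthesize extra minority-class points by interpolating between existing ones. The sketch below is a simplified variant inspired by SMOTE: it interpolates between random pairs rather than nearest neighbors, and the two-feature dataset and the helper name `interpolate_minority` are hypothetical.

```python
import random

def interpolate_minority(samples, n_new, rng):
    """Create new points on line segments between random pairs of samples."""
    synthetic = []
    for _ in range(n_new):
        a, b = rng.sample(samples, 2)  # two distinct existing points
        t = rng.random()               # position along the segment, in [0, 1)
        synthetic.append(tuple(ai + t * (bi - ai) for ai, bi in zip(a, b)))
    return synthetic

rng = random.Random(42)

# Hypothetical imbalanced dataset: 200 majority rows, 20 minority rows.
majority = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(200)]
minority = [(rng.gauss(4, 1), rng.gauss(4, 1)) for _ in range(20)]

new_points = interpolate_minority(minority, len(majority) - len(minority), rng)
balanced_minority = minority + new_points

print(len(majority), len(balanced_minority))
```

Interpolation only fills in the region the minority samples already cover. It cannot supply conditions that are missing from the data altogether, so it reduces imbalance without guaranteeing representativeness.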

Furthermore, synthetic data generation approaches such as Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) have shown great promise. These techniques can create highly realistic and varied data samples, enabling the training of more adaptable and effective machine learning models. Overall, synthetic data can increase not only the quantity of available data but also its quality, which is instrumental in developing robust, fair, and efficient machine learning systems.

Synthetic data has proven to be a transformative tool across various industries, enabling organizations to innovate and improve their services effectively. One prominent example is the healthcare sector, where synthetic data has been utilized for medical research and training algorithms. For instance, a hospital in New York partnered with a data science firm to generate synthetic patient records. By leveraging these artificial datasets, researchers could develop and test predictive models for disease progression without compromising the confidentiality of patient information. This application not only expedited the process of research but also allowed for the inclusion of diverse demographic variables that may not have been sufficiently represented in traditional datasets.

In the finance industry, synthetic data has played a crucial role in enhancing fraud detection algorithms. A leading bank implemented synthetic data to simulate various financial transactions under different scenarios. By utilizing these datasets, the bank enhanced its machine learning models, resulting in improved identification of fraudulent activities. The synthetic data allowed the institution to test and refine its detection systems without risking customer confidentiality, effectively bending its scaling curve upward through gains in accuracy and speed.

Furthermore, the field of autonomous vehicles showcases another compelling application of synthetic data. Companies like Waymo have invested in generating vast amounts of synthetic driving scenarios to train their self-driving algorithms. By creating diverse and complex environments with synthetic data, these companies are able to anticipate challenges that could arise in real-world driving situations. This strategic use of synthetic datasets has not only accelerated the development of autonomous technologies but also significantly increased the safety and reliability of such systems.

Challenges and Limitations of Synthetic Data

Synthetic data has emerged as a promising alternative for training machine learning models and conducting data analysis, yet it is not without its difficulties. One of the primary challenges is the potential for overfitting. Models trained on synthetic datasets may perform well in controlled environments but could fail to generalize effectively to real-world scenarios. This limitation stems from the artificial nature of the data; if the synthetic data lacks variety or fails to accurately reflect the complexities of real-world phenomena, it may lead to biased or overly simplistic models.

Furthermore, the generation of unrealistic data poses a significant concern. Synthetic data is produced based on algorithms and may not capture the nuanced distributions present in real datasets. When synthetic data fails to emulate the peculiarities of actual data, it can mislead analyses and produce invalid results. This issue is compounded by the subjective nature of defining what constitutes realistic data, which can vary across different domains and use cases.

Ensuring data quality is another challenge in the realm of synthetic data. The underlying algorithms that generate this data must be meticulously designed and tested to guarantee that the output meets the required standards of validity and reliability. Any oversight in this process can result in subpar data that could compromise the integrity of research and decision-making processes.
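A basic quality check is to compare the distribution of a synthetic column against its real counterpart. The sketch below computes the two-sample Kolmogorov-Smirnov statistic (the largest gap between the two empirical CDFs) using only the standard library. The three samples are hypothetical, and the "poor" generator is deliberately given too little variety to show what a failing check looks like.

```python
import bisect
import random

def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: max gap between empirical CDFs."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    d = 0.0
    # The largest gap between two step functions occurs at an observed value.
    for v in a_sorted + b_sorted:
        cdf_a = bisect.bisect_right(a_sorted, v) / len(a_sorted)
        cdf_b = bisect.bisect_right(b_sorted, v) / len(b_sorted)
        d = max(d, abs(cdf_a - cdf_b))
    return d

rng = random.Random(7)

# Hypothetical samples of a single numeric column.
real = [rng.gauss(0, 1) for _ in range(1000)]
good_synthetic = [rng.gauss(0, 1) for _ in range(1000)]    # matches the real spread
poor_synthetic = [rng.gauss(0, 0.3) for _ in range(1000)]  # too little variety

ks_good = ks_statistic(real, good_synthetic)
ks_bad = ks_statistic(real, poor_synthetic)

print("well-matched generator:", round(ks_good, 3))
print("low-variety generator: ", round(ks_bad, 3))
```

A statistic near 0 means the two columns are distributed similarly, while a large value flags a mismatch. A per-column check like this says nothing about relationships between columns, so it is a first filter rather than a full validation.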

In addition to technical limitations, there are ethical implications and regulatory concerns associated with synthetic data. As organizations increasingly turn to synthetic data for training AI systems, they must navigate complex legal frameworks that govern data privacy and usage. The potential for misuse or harmful applications of synthetic data raises questions about accountability and ethical responsibility. Organizations must remain vigilant in addressing these challenges to harness synthetic data’s full potential while safeguarding ethical considerations.

Future Trends in Synthetic Data Generation

The continuous evolution of synthetic data generation technologies represents an important frontier in the fields of artificial intelligence and machine learning. As advanced AI models proliferate, their capability to produce high-quality synthetic data is becoming increasingly sophisticated. One of the most promising advancements in this domain is the application of generative adversarial networks (GANs). These neural networks pit two models against one another: a generator that produces candidate data and a discriminator that tries to tell generated samples from real ones. Both improve iteratively, ideally until the discriminator can no longer reliably distinguish generated data from real-world data.
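For reference, the adversarial game behind GANs is commonly written as the minimax objective below, following the original GAN formulation. The symbols are standard notation rather than anything defined in this post: G is the generator, D is the discriminator, p_data is the distribution of real data, and p_z is the noise distribution from which the generator draws its inputs.

```latex
\min_{G} \max_{D} \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)} \big[ \log D(x) \big]
  + \mathbb{E}_{z \sim p_{z}(z)} \big[ \log \big( 1 - D(G(z)) \big) \big]
```

The discriminator D tries to maximize this value by scoring real samples near 1 and generated samples near 0, while the generator G tries to minimize it by producing samples that D scores as real.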

As GANs develop, they allow for the creation of complex, high-dimensional datasets that can mimic the nuances of real data more closely than ever before. This capability can significantly enhance the performance of data-driven projects that rely on robust datasets, thereby pushing the boundaries of what is achievable in AI applications. Furthermore, the utilization of GANs is expected to streamline the data generation process, reducing the time and resources required to collect and annotate real-world data. Consequently, organizations will be able to scale their data-driven initiatives more effectively, addressing the current limitations seen in scaling curves.

Additionally, other emerging technologies, such as reinforcement learning and transfer learning, are anticipated to play a role in the future of synthetic data generation. These techniques not only enhance the learning efficiency of AI models but also improve their capacity to generate synthetic data that is contextually relevant to specific applications. The interplay between these advanced methodologies presents exciting possibilities for the future, particularly regarding the seamless integration of synthetic and real datasets.

In summary, the future of synthetic data generation is likely to be characterized by the synergy of advanced AI models and sophisticated techniques, such as GANs. These developments may redefine scalability thresholds for data-centric projects, ultimately transforming how organizations approach data-driven decisions.

Synthetic Data vs. Real Data: A Comparative Analysis

Synthetic data and real data serve as essential components in the development and training of machine learning models. Each type of data offers distinct advantages and poses specific challenges that can affect the performance of predictive algorithms. Understanding these differences is crucial for data scientists and engineers in selecting the appropriate data type based on their project requirements.

Real data, often derived from actual events or transactions, is invaluable for its authenticity. It reflects real-world variability and complexity, making it particularly beneficial in scenarios where capturing an accurate representation of natural occurrences is paramount. However, real datasets may also contain noise and biases, and their availability can be limited. Furthermore, privacy concerns can restrict access to sensitive information, which is where synthetic data becomes advantageous.

Synthetic data, on the other hand, is generated through algorithms rather than collected from direct observations. This method facilitates the creation of datasets that can maximize the diversity of samples, addressing the limitations often found in real data. Such controlled datasets can improve model robustness by including scenarios that may be rare or underrepresented in actual data. They are particularly useful for augmenting datasets to enhance machine learning models without privacy concerns, thereby allowing for comprehensive training.

In practice, using synthetic data in tandem with real data often yields the most effective results. This hybrid approach allows practitioners to leverage the strengths of both types while mitigating their weaknesses. For instance, synthetic datasets can fill the gaps in real data or be employed in initial model training phases, while validation on real data can confirm that a model trained with synthetic data holds up in real-world applications. Consequently, understanding when each type of data is preferable strengthens the model development process and enhances overall performance.
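The hybrid workflow can be sketched as follows: train on a small real sample augmented with synthetic rows, then measure accuracy only on held-out real data. Everything here is a hypothetical stand-in chosen to keep the example short: the two-class dataset, the nearest-centroid classifier, and the slightly mis-specified "generator" (the same distribution with a wider spread).

```python
import random

def centroid(points):
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))

def train_nearest_centroid(data):
    """data is a list of (features, label) pairs; returns {label: centroid}."""
    by_label = {}
    for x, y in data:
        by_label.setdefault(y, []).append(x)
    return {y: centroid(xs) for y, xs in by_label.items()}

def predict(model, x):
    # Pick the label whose centroid is closest in squared Euclidean distance.
    return min(model, key=lambda y: sum((a - b) ** 2 for a, b in zip(x, model[y])))

def accuracy(model, data):
    return sum(predict(model, x) == y for x, y in data) / len(data)

rng = random.Random(1)

def draw(label, n, spread):
    """Hypothetical data source: class 0 near (0, 0), class 1 near (3, 3)."""
    cx, cy = (0.0, 0.0) if label == 0 else (3.0, 3.0)
    return [((rng.gauss(cx, spread), rng.gauss(cy, spread)), label) for _ in range(n)]

real_train = draw(0, 20, 1.0) + draw(1, 20, 1.0)        # scarce real data
real_holdout = draw(0, 200, 1.0) + draw(1, 200, 1.0)    # real data kept for validation
synthetic = draw(0, 500, 1.2) + draw(1, 500, 1.2)       # stand-in synthetic rows

model = train_nearest_centroid(real_train + synthetic)
holdout_accuracy = accuracy(model, real_holdout)

print("accuracy on held-out real data:", round(holdout_accuracy, 3))
```

The key design choice is that synthetic rows never enter the holdout set. Evaluating only on real data is what reveals whether the synthetic augmentation actually transfers to real-world conditions.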

Implications for Scaling in Diverse Industries

Synthetic data, generated through algorithms rather than collected from real-world events, has been gaining traction across various industries owing to its capacity to provide robust datasets while maintaining privacy and security. One of the most notable implications of synthetic data for scaling lies in retail, where firms are increasingly leveraging this technology to refine inventory management and enhance customer experiences. By simulating a multitude of shopping behaviors and preferences, retailers can optimize their supply chains and better predict demand, leading to improved operational efficiencies.

Similarly, in the marketing sector, synthetic data is transforming how companies gather insights and evaluate campaign performance. Marketers can generate realistic customer profiles and behaviors to test different marketing strategies without the constraints of data availability or privacy regulations. This allows organizations to experiment with personalized marketing approaches and evaluate potential outcomes, ultimately driving growth and improving return on investment.

In the technology sector, synthetic data is equally impactful, especially for machine learning and artificial intelligence. By creating vast amounts of high-quality, annotated data, technology firms can accelerate the training of models while minimizing biases and ethical concerns associated with traditional data collection methods. This capability allows providers to innovate more rapidly and effectively, potentially accelerating advancements in software development and application deployment.

Overall, the use of synthetic data presents numerous opportunities for scaling in diverse sectors. Its ability to enhance operational efficiencies and foster innovation underscores its potential to influence growth trajectories significantly. As industries increasingly adopt this technology, its implications will likely extend beyond immediate operational benefits, impacting strategic decision-making and long-term sustainability as well.

Conclusion: The Future of Data Scaling with Synthetic Data

The evolution of data science is closely tied to the effective utilization of data to drive advancements in machine learning and artificial intelligence. As we have explored throughout this blog post, synthetic data has emerged as a transformative tool that holds the potential to significantly impact scaling curves in these fields. By generating large volumes of realistic, diverse, and accurate datasets, synthetic data can help overcome some of the limitations faced with traditional data collection methods, such as privacy concerns and data scarcity.

One of the major advantages of synthetic data lies in its ability to provide high-quality training data for machine learning models without the cumbersome constraints of acquiring real-world data. With the application of synthetic datasets, organizations can ensure that their models are trained on comprehensive examples that are more representative of real-world scenarios. This capability not only accelerates the development cycle but also potentially improves model performance, allowing for scalability far beyond what was previously imaginable.

Looking ahead, the role of synthetic data in shaping the future of data scaling appears promising. As advancements in data generation technologies continue, the boundaries of what can be achieved with synthetic datasets are likely to expand. This shift will not only drive innovation across various sectors but will also facilitate the establishment of ethical standards and governance for data handling and usage.

In conclusion, the integration of synthetic data into the realms of data science and artificial intelligence suggests a paradigm shift in how we approach data scaling. The implications are significant: by leveraging synthetic data, we can better meet the demands of increasingly complex models, support robust research, and develop AI applications that truly reflect the nuances of the real world. The future of data scaling is not just about increasing the volume of data but enhancing its quality and applicability through synthetic means.