Introduction to Scaling Curves in Machine Learning

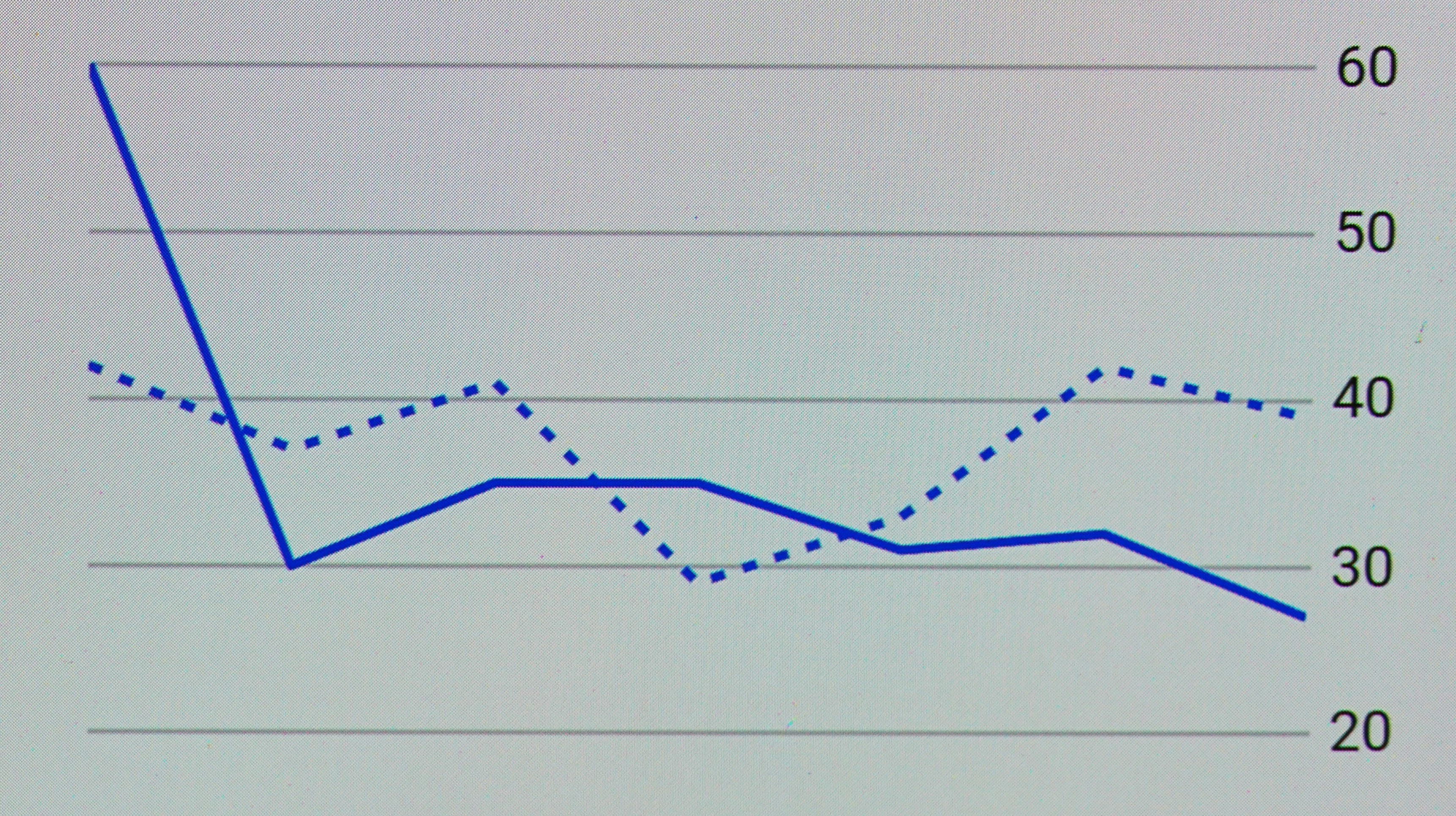

Scaling curves in machine learning depict the relationship between the volume of training data and the performance of a predictive model. This concept is pivotal because it shows how additional data influences model accuracy. Initially, models generally demonstrate improved performance as data quantity increases. However, the benefits tend to plateau after a certain threshold, which exposes a limitation in the traditional assumption that more data always yields better results.

The scaling curve essentially reveals two significant aspects: first, the rate of improvement gained by adding more data, and second, the point at which the returns diminish. In the early stages, even modest additions to the dataset can yield significant enhancements in the model’s predictions. Yet, this improvement becomes increasingly marginal with each successive increment in the dataset’s size.
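To make the two aspects above concrete, here is a minimal sketch that models a scaling curve as a power law with an error floor. The functional form, the function names, and the parameter values (`scale`, `exponent`, `floor`) are illustrative assumptions, not measurements from any real model.

```python
def expected_error(n_samples, scale=1.0, exponent=0.5, floor=0.05):
    """Hypothetical learning curve: error = scale * n^(-exponent) + floor."""
    return scale * n_samples ** (-exponent) + floor


def marginal_gain(n_samples, **curve_params):
    """Error reduction obtained by doubling the dataset from n to 2n samples."""
    return expected_error(n_samples, **curve_params) - expected_error(
        2 * n_samples, **curve_params
    )


sizes = [100, 1_000, 10_000, 100_000]
gains = [marginal_gain(n) for n in sizes]
for n, gain in zip(sizes, gains):
    print(f"n={n:>7}: error={expected_error(n):.4f}, gain from doubling={gain:.5f}")
```

Under these assumed parameters, each doubling of the dataset buys a smaller error reduction than the previous one, and the error never drops below the floor, which is the plateau described above.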

Challenges arise when attempting to harness larger datasets effectively. Issues such as data quality, redundancy, and computational inefficiency become pronounced as one scales. Furthermore, the costs related to data acquisition and storage can escalate, which makes it less feasible to keep gathering new data to achieve incremental improvements. Traditional models, while robust in learning from sizable datasets, often struggle to balance the quantity and quality required for optimal performance.

The quest for better performance without extensively increasing data size has driven researchers and practitioners to consider alternative solutions. This is where synthetic data comes into focus, as it provides the opportunity to augment existing datasets without the associated costs and challenges of acquiring real-world data. It holds promise as a viable strategy for overcoming the limitations posed by conventional scaling curves in machine learning.

What is Synthetic Data?

Synthetic data is a form of artificially generated information that simulates the properties and characteristics of real-world data. This type of data is produced using various methodologies, including algorithms and simulation techniques, which allow for the creation of datasets that can closely resemble actual data without compromising privacy or security. The primary advantage of synthetic data is its scalability; it can be generated in vast quantities and tailored to meet specific research and analytical needs.

There are several forms of synthetic data, including numerical data, text data, and image data, each of which can be generated using different techniques. For instance, generative adversarial networks (GANs) are commonly employed to create realistic images, while statistical sampling methods may be used to produce numerical datasets that adhere to certain distributions. The ability to control for specific variables is another significant benefit, as researchers can craft datasets that include particular attributes or distributions, thus enabling more focused hypothesis testing and model training.
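As a minimal sketch of the statistical sampling approach mentioned above, the snippet below fits a normal distribution to a small numeric column and samples new values from it. The input values, the function names, and the choice of a normal distribution are assumptions made for illustration; real data may call for a different distribution or a multivariate model.

```python
import random
import statistics


def fit_gaussian(values):
    """Estimate the mean and sample standard deviation of a numeric column."""
    return statistics.mean(values), statistics.stdev(values)


def sample_synthetic(mean, stdev, n_rows, seed=0):
    """Draw n_rows synthetic values from a normal distribution."""
    rng = random.Random(seed)  # seeded so the output is reproducible
    return [rng.gauss(mean, stdev) for _ in range(n_rows)]


# Hypothetical "real" measurements, used only for illustration.
real_values = [4.8, 5.1, 5.0, 4.9, 5.3, 5.2, 4.7, 5.0]
mean, stdev = fit_gaussian(real_values)
synthetic_values = sample_synthetic(mean, stdev, n_rows=10_000)
```

The synthetic column can be made as large as needed, but it can only reflect what the fitted distribution captures; structure that the fit misses will be absent from the generated data.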

In contrast to real-world data, synthetic data possesses distinct characteristics that make it both advantageous and unique. Real-world data is often heterogeneous and influenced by a multitude of uncontrolled variables. In comparison, synthetic datasets offer uniformity and consistency, which can aid in experiments where researchers aim to isolate the effects of specific variables. Moreover, synthetic data can be generated without the same ethical concerns that accompany real-world data, particularly when dealing with sensitive information. Thus, while synthetic data may not encapsulate every nuance present in actual datasets, it remains a potent tool for various applications in research, machine learning, and data analysis.

The Role of Synthetic Data in Machine Learning

Synthetic data has emerged as a transformative resource in the field of machine learning, particularly in settings where access to real data is constrained or presents significant challenges. One of the most prominent applications of synthetic data is in the training of autonomous vehicles. In this context, synthetic data is generated to simulate diverse driving scenarios, which helps ensure that machine learning models can be robustly trained on various conditions, including rare and potentially dangerous situations that may not occur frequently in real-world data. This can lead to enhanced model performance, ensuring safer navigation in complex environments.

Moreover, synthetic data plays a crucial role in enhancing privacy within sensitive datasets. By creating data that mimics the statistical properties of original datasets without containing any actual user information, organizations can effectively share and utilize this data while adhering to privacy regulations. This approach is particularly beneficial in healthcare and finance, where sensitive information needs protection. Such synthetic datasets allow for advanced analytics and model development without risking personal data exposure.

In addition to serving ethical and safety needs, synthetic data addresses the critical issue of data availability for underrepresented groups in various fields. For instance, in biomedical research, certain demographics may be underrepresented, leading to biased outcomes. Generating synthetic data can help fill these gaps, enabling researchers to create more equitable and comprehensive models that reflect the diversity of the population. The advantages of synthetic data are clear; it not only enhances the quality of machine learning solutions but also promotes inclusive and responsible data practices.

Limitations of Current Scaling Curves

Machine learning relies heavily on data, and the value of that data is often summarized through scaling curves, which illustrate the relationship between the amount of training data and the model’s performance. However, these scaling curves exhibit notable limitations that hinder their effectiveness. Primarily, the assumption that more real-world data leads to consistently improved performance does not hold true across varying contexts.

One significant limitation is the quality of data. As organizations collect vast amounts of real data, much of it may lack the attributes or relevance needed for specific tasks. Poor-quality data can lead to overfitting, where the model learns noise rather than useful patterns. Consequently, simply increasing the volume of data can paradoxically degrade performance rather than improve it, underscoring the need for a nuanced understanding of data quality.

Moreover, the phenomenon known as diminishing returns impacts scaling curves. After a certain volume of data is achieved, additional data may yield negligible improvements. This reinforces the idea that there is a saturation point beyond which model performance plateaus, challenging the traditional perspective that more data is always beneficial. Additionally, existing scaling methods often overlook the computational cost associated with processing large datasets. The resource demands of expanding datasets can strain existing infrastructures, further complicating the pursuit of scalability.

Relying solely on traditional scaling methods can trap practitioners in a cycle of inefficiency. Without considering the varying impacts of data on different models and tasks, there exists a heightened risk of misallocation of resources. In light of these limitations, it is evident that a shift towards innovative approaches, including the exploration of synthetic data, may be necessary to challenge existing paradigms regarding data scaling.

How Synthetic Data Challenges Traditional Scaling Limitations

Synthetic data is increasingly recognized for its potential to disrupt the conventional scaling curves that limit machine learning models and their efficiency. Traditional scaling methods often encounter significant challenges such as data scarcity, class imbalance, and the inability to replicate real-world intricacies. However, the introduction of synthetic data offers new avenues to overcome these hurdles, ultimately enhancing model training capabilities.

One of the primary advantages of synthetic data is its ability to generate large datasets that mirror the statistical properties of real-world data without the constraints of data acquisition. This capability is particularly beneficial in scenarios where obtaining labeled data is expensive or time-consuming. For instance, industries such as healthcare and finance can leverage synthetic datasets to create comprehensive training sets that reflect diverse patient demographics or financial scenarios. By utilizing synthetic data, organizations can train models more effectively and efficiently, laying the groundwork for superior performance in real-world applications.

Moreover, synthetic data plays a crucial role in addressing data scarcity, a common issue faced by many organizations. With synthetic datasets, companies can simulate various conditions that may not be present in their current datasets, thereby enriching the scope of training data available. This is particularly important for underrepresented classes within datasets, where real-world samples may be limited. By generating synthetic examples across different scenarios, models can learn to generalize better, improving their predictive accuracy.
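One simple way to generate synthetic examples for an underrepresented class is to interpolate between existing minority samples, in the spirit of SMOTE. The sketch below is a simplified variant under stated assumptions: it pairs samples at random rather than using nearest neighbours, and the feature vectors and function name are hypothetical.

```python
import random


def interpolate_minority(samples, n_new, seed=0):
    """Create n_new synthetic points on segments between random pairs of samples.

    Simplification: SMOTE proper interpolates toward one of the k nearest
    neighbours; this sketch picks the second sample at random.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        a, b = rng.sample(samples, 2)  # two distinct minority samples
        t = rng.random()  # position along the segment from a to b
        synthetic.append([x + t * (y - x) for x, y in zip(a, b)])
    return synthetic


# Hypothetical minority-class feature vectors (two features each).
minority = [[1.0, 2.0], [1.5, 1.8], [1.2, 2.4]]
new_points = interpolate_minority(minority, n_new=5)
```

Every generated point lies between two real minority samples, so the method enlarges the class without leaving the region the real samples already cover. That makes it conservative: it cannot invent scenarios that are absent from the original data.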

The generalization capacity of machine learning models can be enhanced by the inclusion of synthetic data, which allows for the exploration of a broader array of possible inputs. This can reduce overfitting and help in developing robust models that function effectively in diverse environments. With the advent of advanced algorithms and techniques for generating synthetic data, the traditional constraints imposed by current scaling curves are increasingly challenged, paving the way for more innovative, scalable, and adaptable machine learning solutions.

Case Studies of Synthetic Data in Action

Synthetic data has emerged as a transformative tool in machine learning, particularly when addressing scaling challenges. Several real-world projects have effectively utilized synthetic data, revealing its capabilities in improving accuracy, speed, and cost-efficiency in various domains.

One notable example is the automotive industry, where companies like Tesla have implemented synthetic data generation to enhance self-driving algorithms. By simulating diverse driving conditions that may be rare in real life, Tesla has been able to train its models efficiently. This approach has significantly increased the model’s ability to recognize and react to an array of scenarios, while reducing the training time compared with traditional data collection methods. Consequently, this has led to rapid advancements in autonomous driving technology.

In the medical sector, synthetic data has played a crucial role in developing algorithms for diagnosing diseases. A case study involving a healthcare provider demonstrated how generating synthetic patient records allowed for the training of predictive models without compromising patient privacy. The findings indicated a marked improvement in the model’s diagnostic accuracy, achieving more than a 20% increase over those trained solely on limited real patient data. This case not only highlights the value of synthetic data in preserving privacy but also illustrates its efficacy in boosting model performance.

Furthermore, synthetic data has proven invaluable in the finance sector, particularly for fraud detection systems. A financial institution utilized synthetic datasets to simulate various fraudulent activities. By training its detection algorithms with this enriched dataset, the organization achieved a substantial decrease in false positives while maintaining a high detection rate. This case exemplifies how synthetic data can improve operational efficiency and reduce the costs associated with false alarms.

Future Implications of Synthetic Data on Machine Learning Progression

The future of synthetic data in machine learning is set to profoundly influence the way models are developed, deployed, and refined. As organizations increasingly adopt synthetic data techniques, we can anticipate a shift in current practices that may lead to novel methodologies and standards in training models. Synthetic data, generated through advanced algorithms and simulations, can fill gaps in existing datasets, enhancing the diversity and representativeness of training examples. This ability to generate realistic data on demand is particularly advantageous in situations where obtaining real-world data is expensive, time-consuming, or fraught with ethical concerns.

Moreover, the integration of synthetic data can potentially accelerate the training process for machine learning models. Traditionally, the scaling curves of model performance are constrained by the volume and quality of available data. By leveraging synthetic data, practitioners can create larger datasets that improve performance metrics. Consequently, this could lead to transformational changes in how we assess model efficacy and scalability in various applications, ranging from autonomous vehicles to healthcare diagnostics.

However, while the benefits of synthetic data are promising, they also bring forth significant ethical implications. The creation and usage of synthetic data must be approached with caution, ensuring that generated datasets do not perpetuate biases or misrepresent real-world scenarios. Ethical guidelines and validation procedures need to be established to ensure that synthetic data maintains fidelity to real data characteristics, thus ensuring model reliability. This validation is crucial, as the effectiveness of machine learning applications often hinges on their ability to generalize findings from training to real-world implementations.

Overall, synthetic data represents a pivotal advancement in machine learning, poised to redefine existing paradigms. As the field continues to evolve, it will be essential to balance innovation with ethical accountability, ensuring that synthetic data not only enhances model performance but also aligns with real-world applications and societal values.

Challenges and Considerations for Implementing Synthetic Data

The adoption of synthetic data in various applications presents several challenges that must be addressed to ensure its effectiveness and reliability. One of the primary concerns revolves around quality control. Synthetic data must mimic the statistical properties and distributions of real-world data accurately. If the generated synthetic data is not representative, it could lead to erroneous conclusions and adversely impact model performance. This necessitates the development and implementation of rigorous validation protocols that can evaluate the fidelity of synthetic datasets.
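One possible building block for such a validation protocol is a distribution comparison on each numeric column. The sketch below computes the two-sample Kolmogorov-Smirnov statistic, which is the largest gap between the empirical cumulative distribution functions of the real and synthetic values. The sample values are hypothetical, and any acceptance threshold would have to be chosen per use case.

```python
import bisect


def ks_statistic(real, synthetic):
    """Largest absolute gap between the empirical CDFs of two samples (0 to 1)."""
    real_sorted = sorted(real)
    synth_sorted = sorted(synthetic)
    max_gap = 0.0
    # Both empirical CDFs only change at observed values, so checking those suffices.
    for value in real_sorted + synth_sorted:
        cdf_real = bisect.bisect_right(real_sorted, value) / len(real_sorted)
        cdf_synth = bisect.bisect_right(synth_sorted, value) / len(synth_sorted)
        max_gap = max(max_gap, abs(cdf_real - cdf_synth))
    return max_gap


# Hypothetical columns, used only for illustration.
real = [1.0, 2.0, 3.0, 4.0]
close_synthetic = [1.1, 2.1, 2.9, 4.2]
shifted_synthetic = [5.0, 6.0, 7.0, 8.0]

print(ks_statistic(real, close_synthetic))    # small gap: similar distributions
print(ks_statistic(real, shifted_synthetic))  # gap of 1.0: no overlap at all
```

A value near 0 suggests the synthetic column follows the real one closely, while a value near 1 signals a mismatch. In practice a tested implementation such as SciPy's `scipy.stats.ks_2samp` is preferable, and per-column checks like this do not capture relationships between columns.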

Another significant consideration is the potential for underlying biases in the synthetic data. If the model used to generate synthetic data is trained on biased or incomplete sets of real data, the resulting synthetic samples may propagate these biases. This issue raises ethical concerns, especially in sensitive areas such as healthcare or facial recognition technologies, where biased data can lead to unfair treatment or discrimination. Ensuring that synthetic datasets are balanced and equitable is critical, and this may require employing advanced techniques to identify and mitigate bias during the data generation process.

Best practices for generating trustworthy synthetic data include rigorous testing against benchmark datasets and regular audits to monitor for emerging biases. Furthermore, integrating stakeholder feedback into the development process can provide diverse perspectives that strengthen the quality of synthetic data. Establishing collaborative platforms that facilitate the sharing of insights and techniques among researchers and practitioners can foster innovation and improvement in synthetic data generation methods. By proactively addressing these challenges, organizations can harness the power of synthetic data, enhancing scalability while minimizing risks associated with data quality and bias.

Conclusion: The Future of Data in Machine Learning

As technologies evolve, the intersection of synthetic data and machine learning presents new opportunities and challenges that warrant careful consideration. Throughout this discussion, we have examined the transformative potential of synthetic data in breaking existing scaling curves that have constrained the capabilities of current machine learning models. The insights gleaned from this analysis highlight not only the advantages that synthetic data can provide but also emphasize the necessity for ongoing exploration in this field.

Synthetic data offers a scalable and efficient alternative to traditional data collection methods, enabling researchers and data scientists to generate vast amounts of high-quality data tailored for their specific needs. This approach can help to alleviate concerns regarding privacy, data scarcity, and bias, thereby improving model training processes and overall performance. By blending synthetic and real-world data, practitioners can create more robust datasets that enhance model accuracy while also ensuring compliance with data regulations.

Moreover, leveraging synthetic data encourages innovation in machine learning methodologies, prompting researchers to rethink model architectures and consider alternative learning paradigms. This symbiotic relationship between synthetic and real data can pave the way for new advancements, ultimately influencing various industries ranging from healthcare to finance.

In conclusion, the future of data in machine learning is promising, and synthetic data accounts for a considerable share of that potential. To fully harness its capabilities, it is imperative for the academic and industry communities to continue their collaboration. By fostering an environment of shared knowledge and experiences, the ongoing integration of synthetic data can not only optimize model performance but also redefine the landscape of machine learning as we know it.