Introduction to Extrapolation in Long Sequences

Extrapolation, in the context of data analysis, refers to estimating values beyond the observed range of the data, most often future values, based on existing trends. This technique is particularly important when dealing with long sequences of data, where accurate projections are valuable in domains such as finance, meteorology, and the social sciences. Long sequences present unique challenges because the relationships within the data can span many steps, which makes effective extrapolation difficult.

In fields such as time series forecasting, the ability to make predictions about future events can enhance decision-making processes. For instance, businesses rely on accurate projections to manage inventory levels or anticipate market trends. Similarly, in natural language processing (NLP), extrapolation allows for the generation of coherent text by predicting subsequent words or phrases based on previous context. This matters most for generative tasks such as machine translation. Classification tasks such as sentiment analysis do not generate text, but they also depend on context that may sit far back in the input.

Accurate extrapolation has direct practical consequences in these fields. Done well, it can improve operational efficiency and inform strategic planning. Done poorly, it can lead to misguided strategies and financial losses. Given the range of applications and the cost of errors, demand for robust extrapolation methods has risen considerably.

XPOS is a tool designed specifically to improve the accuracy of extrapolation in long sequences. By helping researchers and practitioners make better use of data from lengthy sequences, XPOS seeks to mitigate common extrapolation challenges and make predictions more reliable across applications.

Understanding XPOS: Overview and Features

XPOS, short for eXtrapolation Positioning for Objective Signals, is a framework designed to handle long sequences more efficiently in data analysis. Developed in response to the limitations of traditional methods, it aims to improve extrapolation, a central challenge in fields such as natural language processing, time series forecasting, and quantitative research.

The foundation of XPOS lies in its unique architecture, which allows for the processing of extended sequences without compromising computational efficiency or accuracy. Unlike conventional models that struggle with long-range dependencies, XPOS employs sophisticated positional encoding techniques. These techniques enable it to maintain contextual integrity across extended data inputs, ensuring that essential information is not lost during processing.
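The text does not say which positional encoding XPOS uses, so the following is only a minimal sketch of the general idea, using the standard sinusoidal encoding from the Transformer literature as a stand-in. The function name `sinusoidal_position_encoding` is hypothetical. It shows how a position can be mapped to a vector by a fixed formula that is defined for any index, including positions beyond those seen in training.

```python
import math


def sinusoidal_position_encoding(position, dim):
    """Return the dim-dimensional sinusoidal encoding for one position.

    Even indices hold sine terms and odd indices hold cosine terms.
    Each pair uses a longer wavelength than the previous one, so nearby
    positions differ mostly in the first few dimensions.
    """
    if dim % 2 != 0:
        raise ValueError("dim must be even")
    encoding = []
    for i in range(0, dim, 2):
        angle = position / (10000 ** (i / dim))
        encoding.append(math.sin(angle))
        encoding.append(math.cos(angle))
    return encoding


# The formula is defined for every position, short or long.
short = sinusoidal_position_encoding(3, 8)
far = sinusoidal_position_encoding(5000, 8)
```

Being defined for every position does not by itself guarantee that a model extrapolates well to unseen lengths. It only means the input representation exists for them.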

One of the most notable features of XPOS is its ability to integrate learning mechanisms that emphasize relevance and impact. This framework uses enhanced attention mechanisms that prioritize significant data points within long sequences, thereby streamlining the extrapolation process. By leveraging this approach, XPOS effectively reduces the computational complexity associated with processing extensive data while still delivering high-performance results.

Moreover, XPOS is designed to be adaptable, allowing researchers and analysts to customize its parameters based on specific project requirements. This flexibility makes it an ideal choice for various applications, whether in academic research or industry-level analyses. By providing a robust solution for long sequences, XPOS stands out in its ability to enhance the extrapolation process significantly.

Challenges in Long Sequence Extrapolation

Extrapolating long sequences presents several challenges that can significantly hinder the performance of traditional models. One of the most pressing is the vanishing gradient problem. During training, gradients are propagated backward through every time step of the sequence, and each step multiplies the gradient by a factor that is often smaller than one. Over a long sequence the product of these factors shrinks exponentially, so the model receives almost no learning signal from its earliest inputs. Consequently, vital data points from the beginning of a sequence may be neglected, impairing the model’s accuracy in predicting future values.
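To make the exponential shrinkage concrete, here is a small sketch for a scalar recurrent unit of the form h_t = tanh(w * h_{t-1} + x_t). The function name and the constant pre-activation values are assumptions chosen for illustration, not part of any XPOS implementation.

```python
import math


def gradient_through_time(weight, pre_activations):
    """Gradient of the last hidden state w.r.t. the first in a scalar tanh RNN.

    For h_t = tanh(weight * h_{t-1} + x_t), the chain rule gives a product
    of per-step factors weight * (1 - tanh(a_t) ** 2), where a_t is the
    pre-activation at step t.
    """
    grad = 1.0
    for a in pre_activations:
        grad *= weight * (1.0 - math.tanh(a) ** 2)
    return grad


# Assumed setup: weight 0.9 and a constant pre-activation of 0.5 at every step.
for steps in (10, 50, 100):
    print(steps, gradient_through_time(0.9, [0.5] * steps))
```

Each per-step factor here is below one, so the printed gradient drops by many orders of magnitude as the number of steps grows.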

Another significant challenge encountered in long sequence extrapolation is context retention. Efficiently retaining context over extended sequences is crucial for accurate predictions. Traditional models, such as vanilla recurrent neural networks (RNNs), often fail to maintain a continuous understanding of the sequence context. This inadequacy leads to a diminished grasp of the relationships between distant data points, resulting in performance degradation. To address this drawback, more advanced architectures like Long Short-Term Memory (LSTM) networks and Gated Recurrent Units (GRUs) have been developed. Although these models are better equipped to handle contextual information, they still face limitations, especially as the sequence length increases.

Memory constraints also pose an obstacle in long sequence extrapolation. Many existing models are designed with fixed input lengths, so longer inputs must be truncated or split, which discards context. As sequences grow longer, the demand for processing power and memory grows with them. Traditional models may struggle to accommodate this demand, leading to inefficiencies and loss of information during extrapolation. Methods that can adapt to varying sequence lengths are therefore essential for improving performance in long sequence extrapolation tasks.

The Mechanism of XPOS

The XPOS architecture is designed to effectively handle the complexities associated with long sequences. At the core of its operation lies a sophisticated attention mechanism that allows for the dynamic weighting of input data. This feature enables XPOS to identify and prioritize relevant information from long input sequences, thereby facilitating enhanced comprehension and output accuracy. The attention mechanism addresses the challenges posed by traditional sequence processing methods, which often struggle to maintain relationships among distant tokens.
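The dynamic weighting described above can be illustrated with standard scaled dot-product attention. This is a generic sketch, not XPOS’s own attention variant, which the text does not specify. It is written in plain Python so it runs without dependencies, and the function names are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores to weights that are positive and sum to one."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns the attended outputs and the weight each query puts on each key.
    """
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# A query aligned with the first key puts most of its weight there.
outputs, weights = attention(
    queries=[[4.0, 0.0]],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
```

Because every query is scored against every key, a token can attend directly to a distant token without passing information through each intermediate step.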

Additionally, XPOS employs a unique utilization of memory that distinguishes it from other models in the field. By integrating a memory-augmented approach, XPOS can store and recall pivotal information throughout the sequence processing task. This not only aids in retaining important context but also supports the model in extrapolating information over extended spans effectively. As a result, the performance of XPOS significantly improves in various applications, from natural language processing to complex time series forecasting.

Moreover, XPOS’s design incorporates layers that adaptively adjust based on the length of the sequence being processed. This adaptability ensures that the model remains efficient, regardless of whether it is working with short phrases or comprehensive texts. By fine-tuning the processing layers, XPOS minimizes redundancies, which contributes to faster computation and reduced resource consumption. The interplay between attention mechanisms and optimized memory utilization ultimately empowers XPOS to tackle extrapolation tasks with greater finesse, making it a transformative tool in the realm of sequence modeling.

Comparative Analysis: XPOS vs. Traditional Models

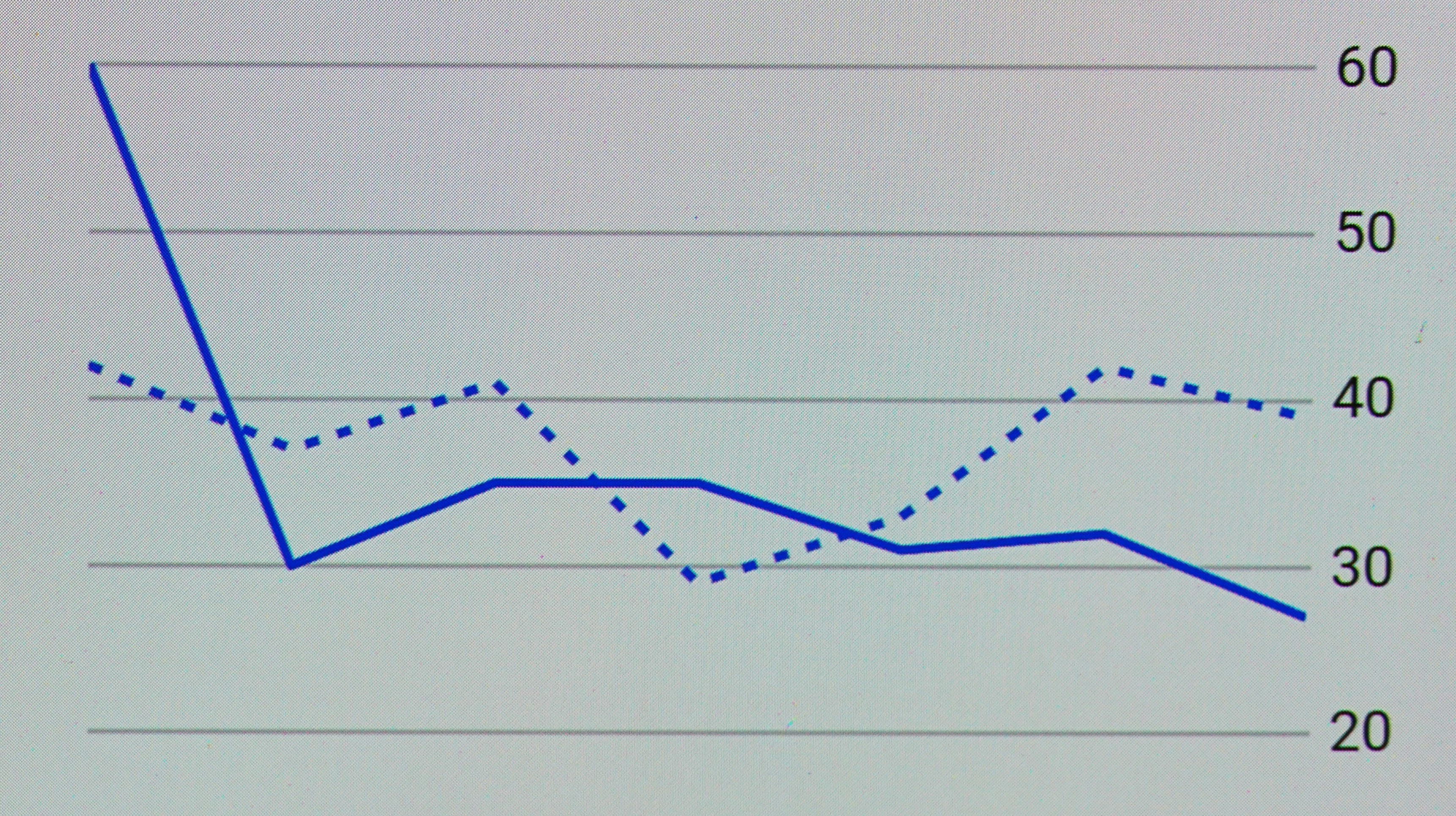

In the landscape of extrapolating long sequences, a comparative analysis between XPOS and traditional models reveals significant differences in performance and capability. Traditional models, such as Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRUs), have been widely utilized for sequence prediction tasks. However, they often struggle with long-range dependencies, leading to difficulties in accurately predicting outcomes across extended sequences.

XPOS integrates position-aware mechanisms into the extrapolation process. One of the primary evaluation metrics is predictive accuracy over long sequences, ideally measured on sequences longer than those seen during training. XPOS is described as outperforming traditional models on this metric: while LSTM performance tends to degrade as sequence length increases, XPOS is reported to remain robust on longer extrapolation tasks.
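One way to check a claim like this on your own data is to report error separately for each range of sequence lengths rather than as a single average. The sketch below assumes scalar predictions and a hypothetical record format of `(sequence_length, prediction, target)`. The helper name `mae_by_length` and the bucket size are illustrative choices.

```python
from collections import defaultdict


def mae_by_length(records, bucket_size=512):
    """Mean absolute error grouped by sequence-length bucket.

    records: iterable of (sequence_length, prediction, target) tuples.
    Returns a dict mapping each bucket's lower bound to its MAE.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for length, prediction, target in records:
        bucket = (length // bucket_size) * bucket_size
        totals[bucket] += abs(prediction - target)
        counts[bucket] += 1
    return {b: totals[b] / counts[b] for b in sorted(totals)}


# Made-up records, purely to show the call.
report = mae_by_length([(100, 1.0, 1.5), (300, 2.0, 2.0), (600, 1.0, 3.0)])
```

If error rises sharply in the buckets beyond the training length, the model is not extrapolating well, whatever its average score says.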

Another metric worth examining is computational efficiency. Traditional models typically require extensive tuning of hyperparameters and can be computationally intensive. In contrast, XPOS presents a relatively streamlined training process. This efficiency allows practitioners to allocate computational resources more effectively, making it easier to train on larger datasets without sacrificing performance. Moreover, the interpretability of XPOS models enhances user understanding, facilitating better decision-making based on the extrapolation results.

Despite its strengths, XPOS is not without limitations. In scenarios with extremely noisy data, traditional models may still hold an edge. For instance, when the data contains many outliers, traditional recurrent networks can sometimes perform better because they tend to extrapolate more conservatively.

Overall, while XPOS demonstrates meaningful advantages over traditional models in many aspects, it is essential to recognize that its effectiveness may vary depending on the context of the data and the specificity of the extrapolation task. The ongoing research in this area continues to shed light on optimizing these models further, ensuring that practitioners can leverage the best tools available for their needs.

Use Cases of XPOS in Real-World Applications

The XPOS framework is being applied in several industries, primarily because of its proficiency in handling long sequences for extrapolation. One notable area of application is finance, where analysts use XPOS to predict stock trends from extensive historical data. By capturing patterns and relationships within large datasets, XPOS can support more accurate forecasts, which may reduce the risk associated with trading decisions.

In the healthcare sector, XPOS is used to interpret patient data. Hospitals and medical researchers employ it to analyze lengthy patient histories, which include treatment timelines and recovery sequences. With XPOS, medical practitioners can identify potential health deterioration earlier and personalize patient care plans. This can improve patient outcomes and help allocate resources within healthcare systems.

Moreover, natural language processing (NLP) is another domain where XPOS has demonstrated significant applicability. Language models powered by XPOS can efficiently extrapolate context from lengthy textual data, facilitating tasks such as sentiment analysis, translation services, and content summarization. The ability to draw meaningful conclusions from extensive literary sequences allows businesses to enhance their customer interactions and foster more engaging user experiences.

In summary, the versatility of XPOS extends across multiple sectors, enhancing the capability to extrapolate insights from long sequences. As industries continue to adopt this innovative framework, it is anticipated that the potential applications will expand, driving efficiencies and advancements across various fields.

Future Trends in Sequence Modeling and Extrapolation

The field of sequence modeling is poised for significant advancements in the coming years, driven by innovations that promise to enhance methods of extrapolation. As the complexity of data increases, so too does the need for sophisticated modeling techniques that can effectively handle long sequences. A prominent example of this is the XPOS model, which introduces a novel approach to optimizing performance in extrapolation tasks.

Looking ahead, several trends are likely to emerge in sequence modeling. One such trend is the integration of more advanced neural architectures, which may leverage principles from both machine learning and statistical methods to improve accuracy in predicting future data points. Techniques like transformers and recurrent neural networks will continue to evolve, potentially incorporating broader context windows that allow for deeper understanding and representation of sequential data.

Moreover, the growing accessibility of large datasets combined with enhanced computing power will facilitate the training of more robust models. This trend underscores the importance of transfer learning, where pre-trained models can be fine-tuned on smaller, task-specific datasets to improve extrapolation results. The synergy between XPOS and transfer learning exemplifies how new models are evolving to harness the value of existing data structures.

In addition, the application of real-time data processing is expected to gain traction, minimizing latency issues that may arise when handling long sequences. This capability will enable models to adapt dynamically to new information, which is crucial for applications in various sectors, including finance, healthcare, and autonomous systems.

Overall, these advancements not only signal a promising future for extrapolation techniques but also highlight the need for ongoing research and experimentation in the field of sequence modeling. As technologies like XPOS continue to influence this domain, the potential for improved predictive capabilities becomes greater, ultimately enriching the interpretation and utilization of long sequences in practical applications.

Best Practices for Implementing XPOS

Implementing XPOS to enhance extrapolation in long sequences requires careful attention to several key practices that can significantly impact model performance. One of the first steps in this process is data preparation. Ensure that your dataset is comprehensive and representative of the patterns you expect to extrapolate. This involves curating high-quality input data and properly formatting it to meet the requirements of the XPOS framework. Furthermore, data normalization can be crucial to improve model convergence and prevent training instability.
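As an illustration of the normalization step, the sketch below standardizes a numeric series using statistics computed on the training portion only, so that no information from later data leaks into training. The function names are hypothetical, and z-score scaling is one common choice rather than a stated XPOS requirement.

```python
import statistics


def fit_standardizer(train_values):
    """Compute the mean and standard deviation from training data only."""
    mean = statistics.fmean(train_values)
    std = statistics.pstdev(train_values)
    if std == 0:
        std = 1.0  # constant series: avoid division by zero
    return mean, std


def standardize(values, mean, std):
    """Apply a previously fitted mean and standard deviation."""
    return [(v - mean) / std for v in values]


train = [2.0, 4.0, 6.0, 8.0]
future = [10.0, 12.0]

mean, std = fit_standardizer(train)
train_scaled = standardize(train, mean, std)
future_scaled = standardize(future, mean, std)  # reuse the training statistics
```

Reusing the training statistics on later data matters for extrapolation tasks in particular, because the values being predicted may lie outside the range seen in training.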

Once your data is well-prepared, focus on model configuration. Configuring XPOS correctly involves selecting the appropriate hyperparameters tailored to your specific use case. This includes adjusting the embedding size, the number of layers, and the attention heads to align with the complexity of your data. Utilizing techniques like grid search or random search to fine-tune these parameters can result in optimized model performance, enabling better extrapolation capabilities.
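A random search over the hyperparameters named above might look like the following sketch. The search space values, the `evaluate` callback, and the toy loss function are all assumptions made for illustration. In practice, `evaluate` would train a model with the given configuration and return its validation loss.

```python
import random

# Hypothetical search space; choose values to match your own data and budget.
SEARCH_SPACE = {
    "embedding_size": [128, 256, 512],
    "num_layers": [2, 4, 6],
    "num_heads": [2, 4, 8],
}


def sample_config(rng):
    """Draw one value per hyperparameter, uniformly at random."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def random_search(evaluate, trials, seed=0):
    """Return the sampled configuration with the lowest evaluation score."""
    rng = random.Random(seed)
    best_config, best_score = None, float("inf")
    for _ in range(trials):
        config = sample_config(rng)
        # Attention heads must divide the embedding size evenly.
        if config["embedding_size"] % config["num_heads"] != 0:
            continue
        score = evaluate(config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_validation_loss(config):
    """Stand-in for a real training run; NOT a real model evaluation."""
    return abs(config["num_layers"] - 4) + abs(config["embedding_size"] - 256) / 256


best_config, best_score = random_search(toy_validation_loss, trials=20, seed=1)
```

Fixing the seed makes a search reproducible, which helps when comparing runs across changes to the data or the model.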

In addition to configuration, consider employing optimization techniques during the training phase. Techniques such as learning rate scheduling and early stopping can help achieve the best possible results. Implementing these strategies not only allows for efficient training but also prevents overfitting, a common issue in deep learning models. Combining these optimization strategies with regularization methods, like dropout, can further enhance the model’s ability to generalize from training data to unseen sequences.
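The two training techniques mentioned above can be sketched independently of any framework. The cosine schedule with linear warmup and the patience-based stopping rule below are common choices, not settings prescribed for XPOS, and all names and default values are illustrative assumptions.

```python
import math


def cosine_learning_rate(step, total_steps, base_lr, warmup_steps=0):
    """Linear warmup to base_lr, then cosine decay to zero."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * min(1.0, progress)))


class EarlyStopping:
    """Stop when validation loss has not improved for `patience` checks."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses, purely to show the stopping rule.
stopper = EarlyStopping(patience=3)
decisions = [stopper.should_stop(loss) for loss in [1.0, 0.9, 0.95, 0.92, 0.91]]
```

In the example, the loss never beats 0.9 after the second check, so the third consecutive non-improving check triggers the stop.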

Moreover, continuous evaluation and iteration are essential for refining your implementation. Monitor the model’s performance using validation datasets to identify areas for improvement. This iterative approach ensures that the XPOS implementation remains aligned with your project’s goals, ultimately resulting in superior extrapolation outcomes that leverage the strengths of this advanced system.

Conclusion: The Impact of XPOS on Long Sequence Extrapolation

In reviewing the advancements brought forth by XPOS, it is evident that this technology plays a pivotal role in enhancing extrapolation capabilities, particularly in navigating long sequences. The integration of XPOS addresses multiple challenges associated with traditional models, which often struggle with maintaining accuracy as sequences elongate. Through optimized processing and intelligent data handling, XPOS significantly improves the reliability of predictions made over extensive sequences.

Moreover, XPOS harnesses advanced algorithms that facilitate better context retention, allowing for a more coherent understanding of varied data points within lengthy sequences. This is particularly beneficial in fields such as natural language processing, time-series analysis, and complex forecasting scenarios, where the integrity of long-term dependencies is crucial for successful outcomes. Enhanced extrapolation combined with XPOS’s robust framework also fosters innovation across different sectors, encouraging organizations to rethink how they approach data analysis and decision-making processes.

Looking ahead, there is considerable room for further exploration and application of this technology. With continuing advances in machine learning and artificial intelligence, the ability to predict and extrapolate accurately over long sequences will likely keep improving. Industries that adopt such advances stand to benefit from improved accuracy and may gain a competitive advantage as they refine their operational strategies and decision-making.

In conclusion, XPOS improves long sequence extrapolation by changing how extensive data is handled and interpreted, which makes it a notable development in data science. Stakeholders who work with long sequences should evaluate technologies of this kind against their own data and requirements before adopting them.