Introduction to the Technological Singularity

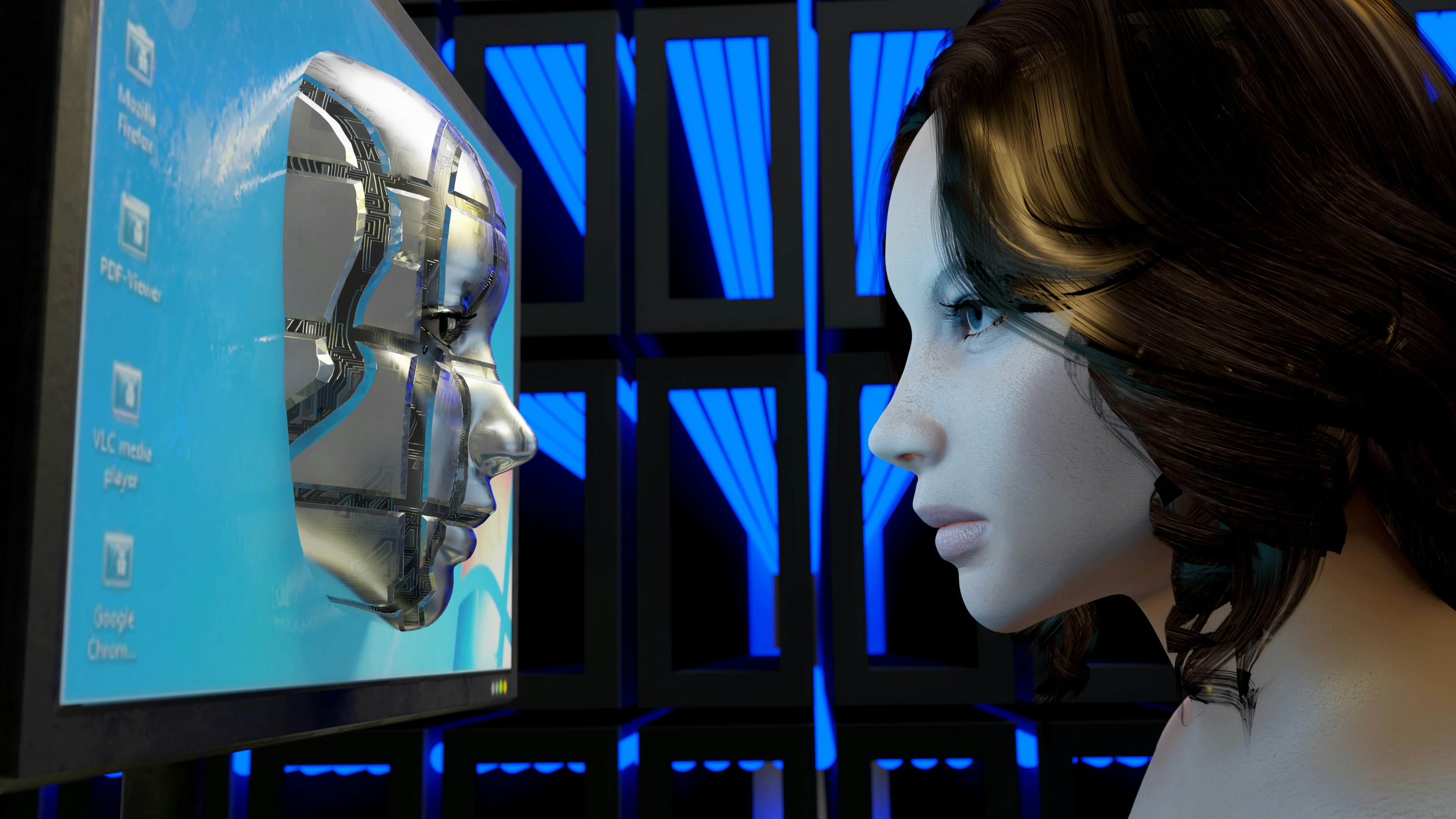

The technological singularity is a hypothetical future point at which technological growth becomes uncontrollable and irreversible, with unforeseeable consequences for human civilization. It is most commonly associated with the arrival of superintelligent artificial intelligence (AI) that surpasses human intelligence. Singularity theorists suggest that once AI reaches this level, it could improve its own capabilities so rapidly that it would alter society in ways that are difficult to predict.

The implications of the singularity for humanity are profound and far-reaching. A superintelligent AI could potentially help solve pressing global challenges such as disease, poverty, and climate change, ushering in an unprecedented era of prosperity. Conversely, such intelligence could pose serious risks, including loss of control over AI systems and ethical questions about how potentially sentient machines should be treated. This duality of potential benefits and dangers is what makes the singularity worth discussing as our technological capabilities advance.

Predicting the timeline for the technological singularity matters for several reasons. First, it encourages dialogue among experts in fields such as AI, ethics, and sociology, fostering a collaborative approach to mitigating risks while maximizing benefits. Second, understanding potential timelines helps policymakers and industry leaders prepare, so that governance frameworks evolve alongside technological advancements. Third, as technology continues to advance rapidly, sustained vigilance is needed to address the societal changes the singularity may bring.

Historical Context of Technology Acceleration

The evolution of technology has been marked by distinct phases of rapid advancement, with several key milestones signaling major transitions. One of the earliest instances of technological acceleration is the Industrial Revolution of the late 18th century, when mechanization increased productivity and efficiency. Steam power in particular drove a dramatic shift in industries such as textiles, transportation, and manufacturing.

By the mid-20th century, the advent of the computer represented a crucial turning point. The transistor, invented in 1947, began replacing vacuum tubes in computers during the 1950s, allowing smaller and more powerful machines and setting the stage for later innovations. This marked the beginning of the information age, in which vast amounts of data could be processed at unprecedented speeds. The introduction of the microprocessor in the 1970s and the explosion in computing power that followed bore out Moore’s Law, the observation that the number of transistors on a microchip doubles approximately every two years.
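
A two-year doubling period compounds quickly. As a minimal sketch, assuming an idealized doubling period of exactly two years (real chip generations have not followed it precisely), the growth factor after t years is 2^(t/2). The function names and the starting count used below are hypothetical, chosen only for illustration:

```python
def moore_growth_factor(years: float, doubling_period: float = 2.0) -> float:
    """How many times larger a transistor count becomes after `years`,
    assuming it doubles once every `doubling_period` years (idealized Moore's Law)."""
    if doubling_period <= 0:
        raise ValueError("doubling_period must be positive")
    return 2 ** (years / doubling_period)


def projected_transistors(initial_count: float, years: float, doubling_period: float = 2.0) -> float:
    """Project a hypothetical starting transistor count forward in time."""
    return initial_count * moore_growth_factor(years, doubling_period)


if __name__ == "__main__":
    for span in (10, 20, 40):
        # 10 years -> x32, 20 years -> x1,024, 40 years -> x1,048,576
        print(f"{span} years: x{moore_growth_factor(span):,.0f}")
```

Under these assumptions, two decades of doubling multiply the count roughly a thousandfold, and four decades roughly a millionfold, which is why steady doubling feels gradual at first and dramatic later.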

As the digital landscape evolved, the internet’s spread to the general public in the 1990s fundamentally changed how information is accessed and shared. This interconnectedness facilitated further advancements in artificial intelligence (AI), enabling systems capable of learning and adapting. The 21st century has since seen a steep rise in AI capabilities, driven by improved algorithms and vast datasets.

Taken together, these advances suggest a trajectory of exponential growth in technology. Milestones in computing power, data processing, and AI development lead some observers to conclude that we may be approaching a technological singularity, when machines could surpass human intelligence. History also shows how profoundly such transitions can reshape society.

Theoretical Foundations of the Singularity

The concept of the technological singularity has drawn significant attention from futurists and scholars alike. Pioneering thinkers such as Ray Kurzweil and Vernor Vinge have provided seminal frameworks for understanding this potential future. In his influential 2005 book The Singularity Is Near, Kurzweil argues that the exponential growth of technology, particularly of computing power, will lead to a point where artificial intelligence (AI) surpasses human intelligence. This event, he contends, could trigger rapid advancements that profoundly change society.

Kurzweil’s predictions rest on what he calls the Law of Accelerating Returns, which holds that technological progress is exponential rather than linear. As computing technology improves, it enables more sophisticated AI systems that can perform tasks previously thought impossible, and those systems in turn speed further progress. This compounding effect supports the notion that the singularity could arrive sooner than linear extrapolation would suggest; Kurzweil places it around 2045.
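
The contrast between linear and compounding progress can be made concrete with a toy comparison. This is a minimal sketch under hypothetical parameters (a fixed gain of 1 unit per period versus 10% compounding per period); it is not Kurzweil’s own model, which claims that the growth rate itself accelerates, but it shows the basic point that compounding growth starts slower and then overtakes any fixed increment:

```python
from typing import Optional


def linear_progress(steps: int, increment: float = 1.0) -> float:
    """Capability after `steps` periods if each period adds a fixed amount."""
    return 1.0 + increment * steps


def compounding_progress(steps: int, rate: float = 0.1) -> float:
    """Capability after `steps` periods if each period multiplies it by (1 + rate)."""
    return (1.0 + rate) ** steps


def crossover_step(increment: float = 1.0, rate: float = 0.1,
                   max_steps: int = 10_000) -> Optional[int]:
    """First period in which compounding growth strictly exceeds linear growth."""
    for step in range(1, max_steps + 1):
        if compounding_progress(step, rate) > linear_progress(step, increment):
            return step
    return None


if __name__ == "__main__":
    print(crossover_step())  # 39: compounding lags for 38 periods, then pulls ahead
```

With these illustrative numbers, 10% compounding trails the linear path until period 39 and then leaves it far behind (about 304 versus 61 by period 60), which is the intuition behind expecting arrival "sooner than expected".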

Vinge, another prominent figure in this discourse, explored the implications of superintelligent AI in his 1993 essay “The Coming Technological Singularity.” He foresaw a future in which AI systems autonomously create more advanced AI systems, producing a rapid cycle of improvement. Such a self-reinforcing feedback loop could lead to unpredictable technological developments, making the societal implications difficult to forecast accurately.
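
Vinge’s essay gives no formula, so the following is only a hypothetical toy model of the feedback loop he describes. It assumes each AI generation improves on its predecessor by a fractional gain: without feedback the gain is fixed (ordinary exponential growth); with feedback the gain scales with current capability, so more capable systems build proportionally better successors. All names and parameter values are illustrative assumptions:

```python
from typing import Optional


def generations_to_reach(
    threshold: float,
    base_gain: float = 0.1,
    feedback: bool = True,
    start: float = 1.0,
    max_generations: int = 10_000,
) -> Optional[int]:
    """Count design generations until a capability score reaches `threshold`.

    Hypothetical toy model: with feedback=False every generation gains a fixed
    fraction `base_gain`; with feedback=True the gain is `base_gain * capability`,
    so improvement accelerates as capability grows.
    """
    capability = start
    for generation in range(1, max_generations + 1):
        gain = base_gain * capability if feedback else base_gain
        capability *= 1.0 + gain  # each generation designs its successor
        if capability >= threshold:
            return generation
    return None  # never reached (e.g. a start of 0 never grows)


if __name__ == "__main__":
    print(generations_to_reach(1000, feedback=False))  # 73 generations
    print(generations_to_reach(1000, feedback=True))   # 15 generations
```

In this toy setting, the feedback version crawls for about ten generations and then jumps from roughly 400 to over 16,000 in a single step, a crude picture of why a self-reinforcing loop makes the later stages so hard to anticipate. The numbers carry no predictive weight.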

In addition to the advancements in AI and computational speed, other factors play a critical role in the feasibility of reaching the singularity. Human enhancement technologies, including bioengineering and brain-computer interfaces, could further blur the distinctions between human and machine intelligence. As these technologies continue to progress, the timeline for achieving the singularity becomes a critical topic for both theorists and practitioners.

Current State of Artificial Intelligence

As of 2023, artificial intelligence (AI) has advanced remarkably. Current AI models span numerous applications, from sophisticated natural language processing to advanced computer vision. AI systems can now generate human-like text, recognize images and patterns, and navigate environments autonomously. These developments reflect the growing sophistication of machine learning algorithms, which allow computers to learn from data and improve their performance over time.

Today’s AI technologies are utilized across various sectors. For instance, in healthcare, AI aids in diagnosing diseases and personalizing treatment plans, while in finance, it streamlines operations through risk assessment and fraud detection. Additionally, businesses are leveraging AI for improved customer service through chatbots and predictive analytics, all of which reflect the significant utility that these technologies provide.

Despite these advancements, current AI models still have significant limitations. Many systems struggle to generalize: they perform exceptionally well on specific tasks but falter on novel scenarios outside their training data. There is also growing concern about the ethical implications of AI, including biased decision-making and threats to data privacy and security. Research therefore continues to explore not only the technical improvements needed to overcome these challenges but also how to deploy AI systems responsibly.

Current AI research trends point toward models with stronger reasoning, better context awareness, and built-in ethical frameworks, developments that could accelerate progress toward the singularity. Breakthroughs in algorithmic design, quantum computing, and interdisciplinary collaboration may speed this trajectory further, raising intriguing possibilities for the coming years.

Predictions from Experts in the Field

The technological singularity has drawn attention from experts across many fields. Technologists and futurists offer diverse perspectives on when this pivotal point might occur, and their predictions vary widely depending on their underlying assumptions and their reading of current technological trends.

Some optimists, such as futurist Ray Kurzweil, foresee the singularity occurring as early as 2045. Kurzweil argues that exponential growth in computing power, coupled with advances in artificial intelligence (AI) and biotechnology, will enable machines to surpass human intelligence by this date. He supports his prediction with historical trends in technological development, suggesting that the pace of innovation is accelerating.

On the other hand, notable figures such as Elon Musk and computer scientist Stuart Russell urge caution. Musk emphasizes the risks of unchecked AI development and has called for a more measured approach. Russell, in his critiques of current approaches to AI, holds that while advancements are promising, the unpredictability of breakthroughs makes it difficult to estimate a timeline for the singularity with any accuracy. He argues that the challenge of aligning superintelligent AI with human values could delay these advancements significantly.

Furthermore, a survey of AI researchers by the Future of Humanity Institute at Oxford University revealed a wide spectrum of expert opinion. Its aggregate forecast gave roughly even odds that machines would outperform humans at every task by around 2060; this is an estimate for human-level machine intelligence rather than for the singularity itself. The result reflects broad agreement that AI could be highly significant, alongside real uncertainty about how its development will unfold. The timeline for the technological singularity therefore remains a topic of active debate among scholars and industry leaders.

Challenges and Roadblocks to the Singularity

The technological singularity, a hypothetical point at which artificial intelligence surpasses human intelligence, is an exciting prospect, but numerous challenges and roadblocks may impede its realization. One prominent concern is the ethics of advanced AI systems. As machines become more autonomous, the potential for unintended consequences grows, demanding careful attention to the moral responsibilities involved in their development and deployment.

Technological limitations also pose significant hurdles. While advances in machine learning and data processing are impressive, they are not without constraints. Current computational capacities may not support the level of sophistication required for a true singularity. The complexity of replicating human cognition in a machine raises fundamental questions regarding practicality and feasibility, which must be addressed before significant progress can be made.

Societal impact is another vital aspect to consider. The potential for job displacement, economic inequality, and societal disruption can lead to resistance against rapid advancements in technology. As AI systems begin to infiltrate everyday life, the fear of obsolescence among the workforce may trigger backlash, slowing governmental support for technological advancements.

Regulatory factors further complicate the landscape. Governments and organizations may impose stringent regulations on AI research and development due to concerns about safety and security. This regulatory scrutiny can create bureaucratic delays, hindering innovation and the progression towards the singularity. Developing a balanced framework for responsible AI governance that encourages innovation while safeguarding public interest is critical.

In conclusion, while the singularity remains a captivating prospect, various challenges, including ethical dilemmas, technological limitations, societal impacts, and regulatory barriers, underscore the complexity of this future scenario. Understanding these factors is essential for navigating the path toward potential breakthroughs in artificial intelligence.

Philosophical and Ethical Implications

The advent of the technological singularity raises profound philosophical and ethical questions about human existence, agency, and morality. If artificial intelligence comes to exceed human intelligence, it invites discussion about the essence of consciousness and what it means to be human, and compels stakeholders to consider the implications for human identity. One optimistic view holds that the singularity could lead toward a utopian future in which humans and machines collaborate seamlessly, enhancing each individual’s potential. This scenario calls for ethical frameworks that prioritize the well-being of all beings, human and non-human alike.

Conversely, a dystopian outcome cannot be ruled out. As technology progresses, it risks creating unequal power dynamics between those who possess advanced technologies and those who do not, which could worsen income inequality and strain ethical governance. When autonomous systems make decisions, questions of accountability and moral responsibility arise. There is a legitimate concern that an AI-driven society could undermine human agency, reducing individuals to spectators in their own lives while ceding critical decisions to machines.

These varied scenarios present a complex landscape where the stakes are extremely high. The ethical responsibility placed upon developers, policymakers, and society at large is immense. Fostering dialogue around these philosophical implications is essential not only for understanding the nature of potential futures but also for guiding the development of AI technologies in a responsible manner. Each step toward the singularity must be taken with careful consideration of its impact on the moral fabric of society, ensuring that human dignity remains at the forefront of technological progress.

Scenarios for Future Development

The technological singularity is often characterized as a point where artificial intelligence (AI) surpasses human intelligence, triggering rapid technological growth. To understand how we might reach this pivotal point, it is essential to consider several plausible scenarios regarding future technological developments.

One potential scenario is the gradual advancement of AI through incremental improvements. Steady progress in machine learning, natural language processing, and robotics could lead to intelligent systems being seamlessly integrated into many aspects of daily life. For instance, autonomous vehicles could come to dominate transportation, potentially reducing accidents and making logistics more efficient. Over the next decade, the workforce may shift as smarter automation reallocates jobs toward more creative and complex problem-solving roles.

Another scenario involves a sudden breakthrough in quantum computing that lets AI solve certain classes of problems far faster than classical computers can. This could drive significant advances in fields such as drug discovery and climate modeling, yielding solutions to problems that have so far resisted human effort. Such leaps could transform both the economy and social structures as businesses adapt to a landscape dominated by hyperintelligent systems.

A more dystopian view posits that rapid advancements in technology may outpace ethical considerations, resulting in unintended consequences. For instance, unchecked AI may lead to significant disparities in wealth distribution or the erosion of individual privacy. Thus, societies will need to adapt not only technologically but also ethically. Balancing innovation with a framework of governance and ethical standards will be critical to ensuring that advancements benefit humanity as a whole.

Overall, the path to the singularity, if it comes, will likely blend these scenarios, reflecting the interconnectedness of technological progress, societal transformation, and economic evolution.

Conclusion: The Future We Face

The analysis of the technological singularity has brought to light a variety of perspectives on its potential timeline and implications. As we have discussed, the singularity refers to a point in the future when artificial intelligence surpasses human intelligence, leading to rapid advancements that could fundamentally alter society. Predictions regarding the year of the singularity often differ, ranging from a few decades to many centuries, reflecting the uncertainty inherent in technological progress. This divergence in viewpoints underscores the complexity of forecasting such a pivotal event.

Understanding the technological singularity is not merely an academic exercise but a critical necessity for society as we advance. The discussion around this subject highlights the importance of preparedness and adaptability in the face of unprecedented changes. As technologies continue to evolve at a rapid pace, it is essential to engage in thoughtful dialogue about the ethical, economic, and societal impacts of advanced AI. This will enable individuals, organizations, and governments to navigate the uncertainties and challenges that lie ahead effectively.

Ultimately, while the exact timeline for the singularity remains uncertain, the importance of preparing for it cannot be overstated. A thorough understanding of the phenomenon allows proactive measures to mitigate potential risks and to maximize the benefits such advancements could bring. The conversation about the technological singularity must continue, because it raises critical questions about the future of humanity and the fabric of our society. As we face this horizon of possibilities, it is our responsibility to engage with these concepts thoughtfully and constructively.