Introduction to Continual Learning and Catastrophic Forgetting

Continual learning is a domain within machine learning that focuses on developing algorithms capable of learning from a stream of data over time. This approach mimics human cognitive abilities, allowing systems to adapt to new information and tasks without the need for retraining from scratch. As a result, continual learning is increasingly relevant in various applications, from robotics to natural language processing, where models must continuously evolve to meet dynamic environments and requirements.

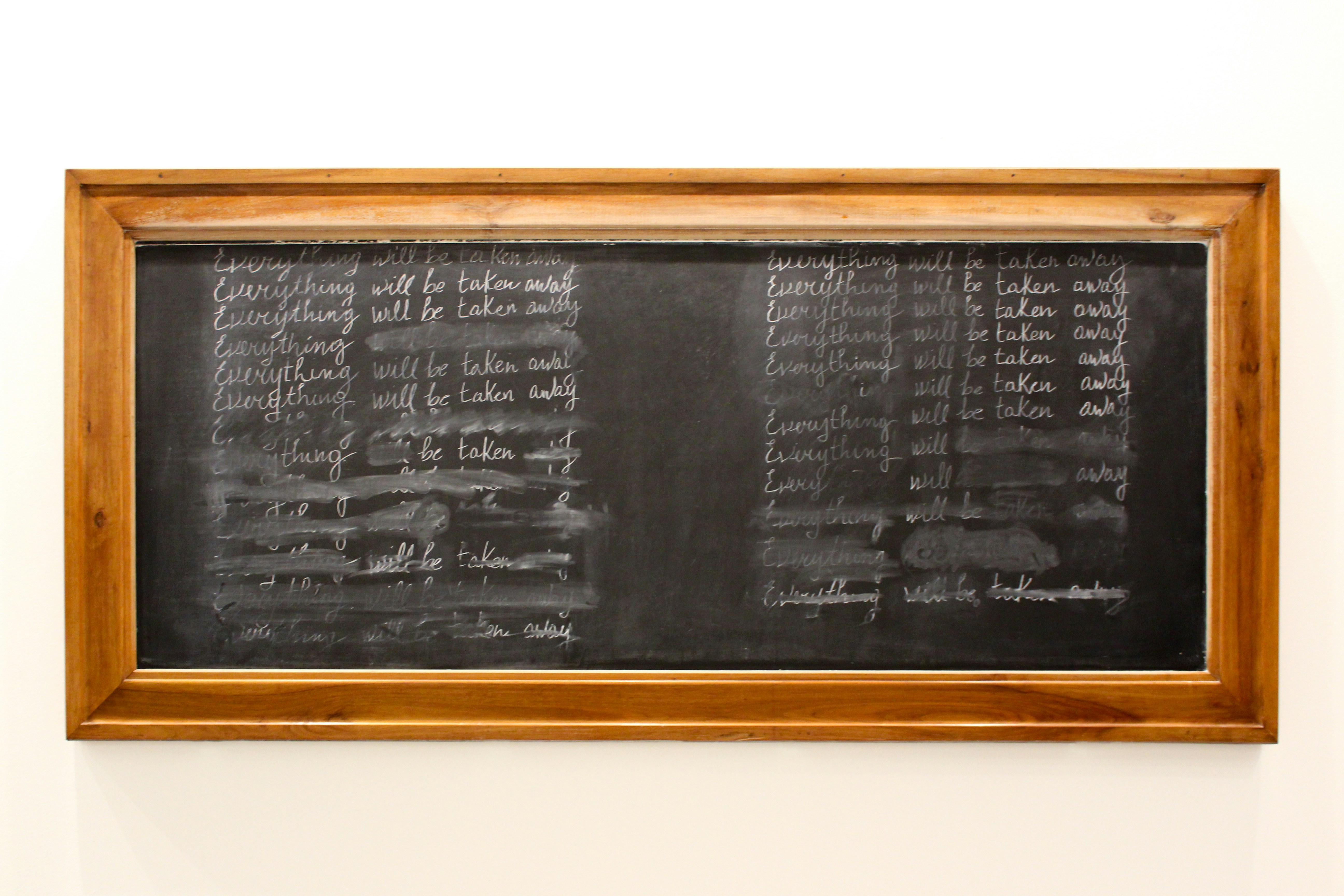

One of the significant challenges in continual learning is the phenomenon known as catastrophic forgetting. This term describes the tendency of neural networks to suddenly lose previously acquired knowledge when they are exposed to new data or tasks. As a neural network learns to solve a new task, its parameters may shift, inadvertently disrupting the learned representations corresponding to earlier tasks. This unintentional erasure of knowledge can hinder the model’s overall performance and adaptability, particularly in scenarios where data is sequentially presented.

Catastrophic forgetting is usually attributed to the way standard neural networks store knowledge: every task shares the same parameters, and gradient-based training on the current task updates them with no regard for what earlier tasks need. When trained on multiple tasks sequentially, these networks therefore have difficulty retaining previously learned information, leading to significant performance drops on earlier tasks. Understanding these mechanisms is crucial for developing effective mitigation strategies. Researchers have explored various approaches, ranging from architectural modifications to regularization techniques that encourage memory retention while optimizing performance on new tasks.

By addressing the challenges posed by catastrophic forgetting, researchers can create more robust and flexible machine learning models that truly embody the principles of continual learning. This exploration sets the foundation for more detailed discussions and advancements in the field, paving the way for intelligent systems that can retain valuable knowledge over prolonged interactions with varied data sources.

The Mechanisms of Catastrophic Forgetting

Catastrophic forgetting, a significant challenge in continual deep learning, occurs when neural networks rapidly lose previously acquired knowledge upon learning new information. This phenomenon is primarily attributed to the mechanisms of weight updates within these networks, which inadvertently overwrite existing parameters corresponding to earlier learned tasks.

In standard deep learning architectures, the weights are adjusted based on the gradients computed during backpropagation. When a model is trained on a new task, the optimization process modifies the weights to minimize the loss on the new data alone. Nothing in that objective accounts for earlier tasks, so the readjustment can degrade performance on them, leading to the loss of information that was previously encoded in the network.
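The effect can be reproduced at toy scale. The sketch below is an illustration only, not a realistic benchmark: it assumes a one-parameter linear model and two made-up regression tasks (`task_a`, `task_b`) whose ideal weights conflict, and it trains on them one after the other with plain gradient descent.

```python
# Toy illustration: a single weight w, model y = w * x, trained sequentially.
# The tasks and hyperparameters are hypothetical and chosen only for clarity.

def mse(w, data):
    """Mean squared error of the model y = w * x on a list of (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def train(w, data, lr=0.1, epochs=50):
    """Full-batch gradient descent on the MSE of the given task only."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

xs = [-1.0, -0.5, 0.5, 1.0]
task_a = [(x, 2.0 * x) for x in xs]   # task A is solved by w = 2
task_b = [(x, -1.0 * x) for x in xs]  # task B is solved by w = -1

w = train(0.0, task_a)
loss_a_before = mse(w, task_a)  # near zero: task A has been learned

w = train(w, task_b)            # the objective now only mentions task B
loss_a_after = mse(w, task_a)   # much larger: task A has been overwritten

print(f"loss on A after training on A: {loss_a_before:.6f}")
print(f"loss on A after training on B: {loss_a_after:.6f}")
```

Because the single weight cannot equal 2 and -1 at once, training on task B pulls it away from the task A solution. Real networks have far more parameters, but the same pull applies to every weight the two tasks share.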

One limitation of conventional neural networks in continual learning is their fixed structure, which has no dedicated capacity for new information and so cannot absorb it without either overwriting existing weights or retraining on all of the data. This rigidity stems from the reliance on a single shared set of weights that must represent the features of every task at once. As more tasks are introduced, the gradients from the most recent task dominate the weight updates, and previously learned concepts can be overshadowed or completely forgotten.

Furthermore, interference among tasks exacerbates this problem. Every task is served by the same set of weights, and the values that suit a new task may conflict with those that suited earlier ones. This conflict can lead to incorrect predictions, as the network struggles to balance what it has learned against new requirements. As a result, exposing the model to a sequence of tasks without an effective retention mechanism typically yields a decline in performance on earlier tasks, which highlights the critical need for strategies that can mitigate catastrophic forgetting.
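To make "decline in performance" measurable, one common convention is to record an accuracy matrix, where entry `acc[i][j]` is the accuracy on task `j` after training has finished on task `i`, and to summarize it with a final average accuracy and an average forgetting score. The sketch below follows that convention. The function names and the numbers in `acc` are hypothetical and serve only as an illustration.

```python
def final_average_accuracy(acc):
    """Mean accuracy over all tasks after training on the last task."""
    last = acc[-1]
    return sum(last) / len(last)

def average_forgetting(acc):
    """Mean drop from each earlier task's best past accuracy to its final accuracy."""
    num_tasks = len(acc)
    drops = []
    for j in range(num_tasks - 1):  # the last task has had no chance to be forgotten
        # Best accuracy on task j from the moment it was learned up to
        # (but not including) the final training stage.
        best_before = max(acc[i][j] for i in range(j, num_tasks - 1))
        drops.append(best_before - acc[-1][j])
    return sum(drops) / len(drops)

# Made-up numbers: row i = after training on task i, column j = accuracy on task j.
acc = [
    [0.95, 0.10, 0.12],
    [0.60, 0.93, 0.11],
    [0.40, 0.70, 0.94],
]

avg_acc = final_average_accuracy(acc)
forgetting = average_forgetting(acc)
print(f"final average accuracy: {avg_acc:.2f}")
print(f"average forgetting:     {forgetting:.2f}")
```

A model that retained everything would score zero forgetting. Larger values mean earlier tasks lost more accuracy as later ones were learned.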

Factors Influencing the Severity of Catastrophic Forgetting

Catastrophic forgetting arises when a neural network is trained sequentially on multiple tasks, causing it to lose previously acquired knowledge. Several factors influence the extent of this phenomenon, primarily revolving around the nature of the training data, architectural choices in the model, the training protocols, and hyperparameter settings.

Firstly, the nature of the training data plays a critical role in knowledge retention. Forgetting tends to be more severe when consecutive tasks draw on very different data, or when the data arrives in a skewed order so that the model sees only a narrow slice of the overall distribution at any one time. In both cases the model struggles to consolidate what it has learned. Conversely, tasks whose datasets share similar characteristics can mitigate this risk.

Secondly, architectural choices affect how a model retains information. Recurrent neural networks (RNNs) and long short-term memory (LSTM) networks carry information across the steps of a single input sequence, but that within-sequence memory does not by itself protect their weights from being overwritten when a new task is learned. Mechanisms such as attention or memory augmentation may enhance a model’s capacity to remember previous tasks, reducing the likelihood of forgetting.

Moreover, the training protocols adopted during model training can greatly dictate performance in the face of continual learning. For instance, utilizing rehearsal strategies, where past experiences are reintroduced during training, can improve the model’s ability to retain knowledge. Likewise, varying the frequency and order of task presentation is crucial in managing the severity of catastrophic forgetting.

Lastly, hyperparameter settings, such as the learning rate, batch size, and regularization strength, influence how well the model adapts to new tasks without losing previous knowledge. Tuning these parameters for the task sequence at hand can lead to more robust performance against catastrophic forgetting.

The Role of Neural Network Architecture

In the realm of continual deep learning, one of the critical factors influencing the phenomenon of catastrophic forgetting is the underlying neural network architecture. Catastrophic forgetting occurs when a model forgets previously acquired knowledge upon learning new information. Different neural network architectures exhibit varying levels of resilience against this issue, which can significantly impact a model’s effectiveness in learning over time.

Recurrent Neural Networks (RNNs), designed for sequence data, process information in a temporal context, which allows them to maintain some degree of memory within a sequence. However, RNNs can struggle with long-term dependencies, and this within-sequence memory is distinct from retention across tasks. The architecture remains susceptible to catastrophic forgetting, particularly when exposed to a sequence of diverse tasks: the recurrent weights that were tuned for earlier tasks are overwritten as new tasks are learned, leading to a significant erosion of previously learned representations.

Convolutional Neural Networks (CNNs), by contrast, may show some robustness to catastrophic forgetting owing to their hierarchical structure and localized receptive fields. CNNs are primarily used in image processing tasks, where they learn spatial hierarchies of features. Low-level features learned by early convolutional layers, such as edges and textures, are often reusable across visual tasks, so CNNs can retain useful representations when tasks are related. They are not immune, however, and still forget when task distributions differ markedly.

Moreover, Transformers have gained traction in continual learning due to their self-attention mechanism, which allows for better contextual understanding. Transformers weigh the significance of different inputs dynamically, which may help them retain essential information from diverse tasks more effectively than earlier architectures. This capability may reduce the incidence of catastrophic forgetting, although Transformers are trained with the same gradient-based weight updates as other networks and are not exempt from it.

In summary, the choice of neural network architecture plays a pivotal role in determining the extent of catastrophic forgetting in continual deep learning. RNNs, CNNs, and Transformers each present their strengths and weaknesses in this regard, influencing how models adapt and retain knowledge across tasks.

Strategies to Mitigate Catastrophic Forgetting

Catastrophic forgetting remains a significant challenge in continual deep learning, leading to the degradation of previously learned knowledge when new tasks are introduced. Researchers have developed several strategies to address this issue effectively.

One prominent approach is the use of regularization techniques. These techniques aim to stabilize the learning process by constraining the parameters of the model to prevent drastic changes that could lead to the loss of previously acquired skills. Examples include Elastic Weight Consolidation (EWC) and Synaptic Intelligence (SI), where the importance of certain weights is maintained to retain learned information while accommodating new data.
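As an illustration of the regularization idea, EWC adds a quadratic penalty to the new-task loss that pulls each weight back toward its value after the previous task, scaled by an estimate of how important that weight was (in the original method, a diagonal Fisher information estimate). The sketch below assumes the importance values are already available. The function names, the flat parameter lists, and the numbers are hypothetical simplifications for illustration.

```python
def ewc_penalty(params, anchor_params, importance, lam):
    """Quadratic EWC-style penalty: (lam / 2) * sum_i F_i * (theta_i - theta_star_i)^2."""
    return 0.5 * lam * sum(
        f * (p - a) ** 2
        for p, a, f in zip(params, anchor_params, importance)
    )

def regularized_loss(new_task_loss, params, anchor_params, importance, lam=1.0):
    """Loss on the new task plus the penalty for drifting from the old solution."""
    return new_task_loss + ewc_penalty(params, anchor_params, importance, lam)

# Weights after the old task, and their (assumed, precomputed) importance.
anchor = [1.0, 1.0]
importance = [10.0, 0.1]  # the first weight mattered a lot, the second barely

# Moving the unimportant weight by 2 is cheap...
cheap = ewc_penalty([1.0, 3.0], anchor, importance, lam=2.0)
# ...while moving the important weight by the same amount is expensive.
costly = ewc_penalty([3.0, 1.0], anchor, importance, lam=2.0)

print(f"penalty for moving the unimportant weight: {cheap:.2f}")
print(f"penalty for moving the important weight:   {costly:.2f}")
```

The hyperparameter `lam` sets the trade-off: larger values protect old tasks more strongly but leave less freedom to fit the new one.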

Memory-augmented networks are another innovative solution. These architectures incorporate external memory components that allow the model to store and retrieve useful information across different tasks, effectively reducing the risk of forgetting. By leveraging these memory mechanisms, models can access relevant experiences from past tasks when learning new ones.

Rehearsal strategies are also effective in tackling catastrophic forgetting. They involve continually revisiting examples from old tasks during training, either by saving a subset of previous examples or by using generated data. Mixing these examples into training on the new task reinforces the neural network’s memory of earlier tasks, thus limiting the loss of knowledge as it adapts to new challenges.
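A minimal sketch of the stored-example variant is shown below. It assumes a fixed-size buffer filled by reservoir sampling, which is one common way to keep a uniform sample of everything seen so far. The class name `ReplayBuffer`, the helper `mixed_batch`, and the tuple-shaped examples are hypothetical choices for illustration.

```python
import random

class ReplayBuffer:
    """Fixed-size memory of past examples, filled by reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0                  # total number of examples offered so far
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Keep the new example with probability capacity / seen,
            # replacing a uniformly chosen stored example.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k):
        """Draw up to k stored examples without replacement."""
        return self.rng.sample(self.items, min(k, len(self.items)))

def mixed_batch(new_examples, buffer, replay_size):
    """Combine current-task examples with rehearsed examples from the buffer."""
    return list(new_examples) + buffer.sample(replay_size)

# While training on the first task, offer every example to the buffer.
buffer = ReplayBuffer(capacity=5)
for i in range(100):
    buffer.add(("task_a", i))

# While training on the second task, each batch also rehearses stored examples.
batch = mixed_batch([("task_b", 0), ("task_b", 1)], buffer, replay_size=3)
print(batch)
```

The buffer size controls the trade-off between memory cost and retention. The training loop itself is omitted, since it would simply compute the usual loss on `batch`.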

Additionally, k-nearest neighbor (k-NN) methods can be integrated to retain information from prior tasks by using similarity measures to categorize new input data. This approach allows models to reference old knowledge more effectively, merging it with new learnings.

Finally, generative replay has emerged as a powerful technique for combating catastrophic forgetting. By generating synthetic examples from previously learned distributions, a model can rehearse old tasks without needing to retain all past data explicitly. This method effectively creates a continual learning environment where the model can adapt to new tasks while still recalling the old.

The Influence of Data Distribution and Task Differences

Catastrophic forgetting remains a significant challenge in continual deep learning, particularly when considering the impact of data distribution and the nature of tasks involved. The effectiveness of a model’s ability to retain knowledge is intricately linked to how similar or disparate the data distributions are across various tasks. When a model is trained on tasks that exhibit vastly different data characteristics, it may struggle to maintain previously acquired knowledge, resulting in substantial forgetting.

Specifically, the more the data distributions differ, the more likely catastrophic forgetting becomes. For instance, if a deep learning model is trained on a dataset containing images of animals and is subsequently trained on a dataset focused solely on vehicles, the model’s performance on the animal classification task may decline significantly. This happens because learning the new task overwrites weights that the earlier task relied on, leading to reduced performance on that task.

Conversely, tasks that share similar data characteristics may facilitate better knowledge retention. When tasks are closely related, such as classifying different groups of animals, the model can leverage shared features to reinforce its learning, thereby mitigating the effects of forgetting. The choice and ordering of tasks can therefore influence the model’s overall stability and its retention of learned knowledge.

In addressing these challenges, researchers have explored various strategies, such as task-specific architectures or the use of memory-based mechanisms that help preserve critical information from previous tasks. Understanding the interplay between data distribution and task differences will be crucial for developing methodologies that promote effective knowledge retention in deep learning models, as well as for mitigating the adverse effects of catastrophic forgetting.

Experimental studies on catastrophic forgetting in continual deep learning have provided crucial insights into its dynamics and implications. One notable experiment, conducted by Kirkpatrick et al. (2017) in their seminal paper titled “Overcoming Catastrophic Forgetting in Neural Networks,” introduced the Elastic Weight Consolidation (EWC) technique. This approach helps models retain previously learned information while adapting to new tasks, thereby reducing the severity of catastrophic forgetting. The researchers demonstrated that EWC can significantly enhance the performance of neural networks across sequential tasks.

Another influential study by Lopez-Paz and Ranzato (2017), “Gradient Episodic Memory for Continual Learning,” introduced Gradient Episodic Memory (GEM). GEM stores a small episodic memory of examples from earlier tasks and constrains each gradient update so that the loss on those stored examples does not increase. Their experiments indicated a marked improvement in retention of older tasks, showing that GEM can address the challenges posed by catastrophic forgetting in continual learning environments.
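The core idea behind this kind of gradient constraint can be sketched for the simplest case. GEM itself solves a small quadratic program with one constraint per previous task. The sketch below is a simplification, not the published algorithm: it assumes a single reference gradient computed on the episodic memory, and removes the conflicting component of the new-task gradient whenever the two point in opposing directions. The function names and the vectors are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length vectors given as lists."""
    return sum(a * b for a, b in zip(u, v))

def project_gradient(grad, ref_grad):
    """Return grad unchanged if it does not conflict with ref_grad.

    Otherwise remove the component of grad that opposes ref_grad, so that
    the update no longer increases the memory loss to first order.
    """
    inner = dot(grad, ref_grad)
    if inner >= 0:
        return list(grad)  # no conflict: the update does not hurt the memory
    scale = inner / dot(ref_grad, ref_grad)
    return [g - scale * r for g, r in zip(grad, ref_grad)]

new_task_grad = [1.0, -2.0]  # gradient of the loss on the current task
memory_grad = [0.0, 1.0]     # gradient of the loss on stored past examples

projected = project_gradient(new_task_grad, memory_grad)
print(projected)
print(dot(projected, memory_grad))
```

After projection the inner product with the memory gradient is no longer negative, so a small step along the projected direction should not, to first order, raise the loss on the stored examples.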

In the exploration of generative models, a study by Nguyen et al. (2019) demonstrated how certain architectures could inherently mitigate forgetting. Their paper, “Generative Memory Networks for Continual Learning,” illustrated that generative methods could be employed to synthesize previous data, thereby allowing models to refresh their memory of earlier tasks while learning new ones. This finding emphasizes how integrating generative techniques into continual learning frameworks can diminish the impact of catastrophic forgetting.

Moreover, the survey by Parisi et al. (2019) presented a comprehensive review of these strategies, covering methodologies such as regularization techniques, memory systems, and architectural approaches. Their review underscored the complex nature of catastrophic forgetting and the ongoing need for robust solutions that integrate new and old information without significant loss.

Future Directions in Research

The issue of catastrophic forgetting remains one of the central challenges in the field of continual deep learning. As researchers aim to develop more robust models capable of incremental learning, several promising directions are emerging. One significant avenue is the exploration of innovative model architectures specifically designed to mitigate forgetting. For instance, modular neural networks, where different modules can specialize in various tasks, have the potential to enhance retention of learned information without compromising learning capacity.

Another essential direction involves the development of novel training paradigms. Techniques such as rehearsal-based methods, where previously learned information is periodically revisited during training, appear to be effective. Additionally, employing generative models to simulate past experiences can aid in reducing the effects of catastrophic forgetting by reinforcing previously acquired knowledge. Researchers are also investigating approaches like meta-learning, where models learn to learn, potentially making them more adaptable to new information without overwriting existing knowledge.

Interdisciplinary collaboration will play a crucial role in addressing the complexities associated with catastrophic forgetting. Insights from cognitive science and neuroscience, for instance, could inform more biologically inspired models that handle the retention and integration of new information more effectively. Moreover, engaging with fields such as psychology can provide deeper insight into how humans manage learning and memory, which could inspire novel algorithms.

As technology evolves and the demand for intelligent systems that can navigate dynamic environments increases, the challenge of catastrophic forgetting in continual learning will remain paramount. Future research efforts that focus on these emerging architectures and training strategies, alongside interdisciplinary collaborations, will be vital in paving the way for advancements in this area, potentially leading to more effective and resilient deep learning models.

Conclusion: The Implications of Catastrophic Forgetting in AI Development

As we have explored throughout this discussion, catastrophic forgetting poses a significant challenge in the domain of continual deep learning. This phenomenon occurs when a neural network, while acquiring new information, inadvertently diminishes its performance on previously learned tasks. This effect has considerable implications for the development of artificial intelligence systems that require adaptability and longevity in their learning processes.

The ramifications of catastrophic forgetting extend across various fields, including healthcare, autonomous driving, and natural language processing, where sustained performance across diverse tasks is critical. Without robust mechanisms to mitigate this issue, AI systems may struggle in applications requiring cumulative knowledge, thereby hindering advancement in real-world scenarios where continuous learning is vital.

Furthermore, the exploration of catastrophic forgetting underscores the importance of ongoing research in this area. Understanding the mechanisms that lead to this type of forgetting can inform the creation of more resilient learning algorithms. Implementing strategies such as rehearsal methods, architectural adaptations, or memory augmentation can help ensure that AI systems retain previously acquired knowledge while incorporating new information seamlessly.

In conclusion, as AI technology advances towards greater autonomy and capability, addressing catastrophic forgetting will remain a crucial aspect of ensuring the effectiveness of deep learning models. Stakeholders in AI research and development must prioritize investigations into novel approaches that enhance long-term learning capabilities. By doing so, they can enable the creation of AI systems that not only adapt but thrive in ever-evolving environments, facilitating significant progress in the future of artificial intelligence.