Introduction to Transformers in Circuit Learning

Transformers are a machine learning architecture originally introduced in 2017 for natural language processing, specifically machine translation. They are built around self-attention, a mechanism that lets each element of the input weigh the relevance of every other element when computing its representation, which is particularly useful for data with complex internal structure. This capability makes transformers relevant to electronic circuit learning, where the parameters of many components and the relationships among them must be understood and modeled accurately.
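
A minimal sketch of the self-attention computation, softmax(Q K^T / sqrt(d)) V, may help make "weighing the significance of components" concrete. It is a simplification under stated assumptions:

- It uses a single head with identity query, key, and value projections. Real layers learn separate weight matrices.
- The two-dimensional feature vectors for three circuit components are made up for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Single-head scaled dot-product self-attention.

    Simplifying assumption: queries, keys, and values are all x itself
    (identity projections). Returns (outputs, weights), where weights[i][j]
    is how much element i attends to element j.
    """
    d = len(x[0])
    weights, outputs = [], []
    for q in x:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(w[j] * x[j][c] for j in range(len(x))) for c in range(d)])
    return outputs, weights

# Hypothetical features for three components (e.g., a resistor, a capacitor,
# and a voltage source); the first two are deliberately similar.
components = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
out, attn = self_attention(components)
for row in attn:
    print([round(w, 3) for w in row])
```

Each row of weights sums to 1. The first component places more weight on the second, whose features resemble its own, than on the third.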

The original transformer architecture consists of an encoder and a decoder. The encoder processes the input data and generates context-sensitive representations, and the decoder translates these representations into the desired output format. Many later models keep only the encoder or only the decoder. Attention links every pair of positions directly, so transformers can capture dependencies between data points no matter how far apart they appear in the input. This helps them model intricate structures such as circuits.
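
The encoder/decoder distinction shows up most clearly in the attention masks. The sketch below builds two masks with a hypothetical helper, `attention_mask`:

- the unrestricted mask used in encoder self-attention, where every position sees every other;
- the causal mask used in decoder self-attention, where each output position may see only itself and earlier positions.

```python
def attention_mask(n, causal):
    """Return an n x n boolean mask; mask[i][j] is True if position i may attend to j.

    Encoder self-attention is unrestricted, which is what lets it relate
    elements regardless of where they appear. Decoder self-attention is
    causal (j <= i), so outputs such as a generated netlist are produced
    one token at a time, left to right.
    """
    return [[(not causal) or j <= i for j in range(n)] for i in range(n)]

encoder_mask = attention_mask(4, causal=False)
decoder_mask = attention_mask(4, causal=True)

# Visualize the causal mask: "x" = allowed, "." = blocked.
for row in decoder_mask:
    print("".join("x" if allowed else "." for allowed in row))
```

The printed causal mask is lower-triangular. Position 0 sees only itself, and the last position sees the whole prefix.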

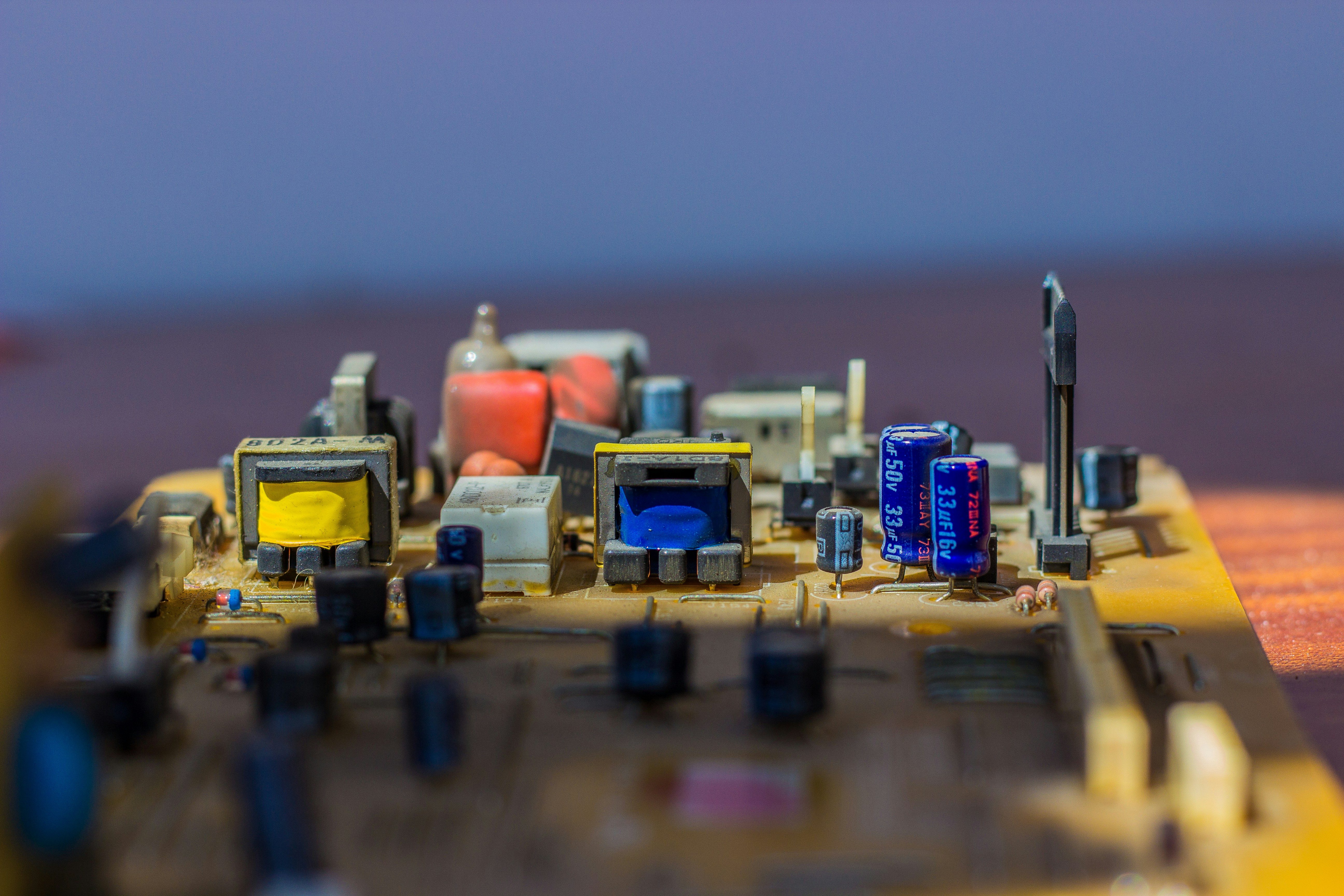

In circuit learning, transformers can analyze the components of electronic circuits, their connections, and their overall behavior. This opens avenues for automated design, fault detection, and circuit optimization that draw on large datasets of existing designs and their behavior. Several challenges persist, however. The complexity of the task often leads to overfitting, and models can scale poorly to large volumes of circuit data.
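
Before a transformer can analyze a circuit, the circuit has to be turned into a sequence of tokens. One simple option, assumed here for illustration, is to split a SPICE-style netlist into element names, net names, and values. The netlist, the `<sep>` separator token, and the helper functions are all hypothetical; practical systems may use richer encodings.

```python
# A hypothetical SPICE-style netlist for an RC low-pass filter:
# each line is <element> <node> <node> <value>.
NETLIST = """\
V1 in 0 5
R1 in out 1k
C1 out 0 1u
"""

def tokenize(netlist):
    """Split each element line into tokens, adding a separator after each element."""
    tokens = []
    for line in netlist.strip().splitlines():
        tokens.extend(line.split())
        tokens.append("<sep>")
    return tokens

def build_vocab(tokens):
    """Map each distinct token to an integer ID in order of first appearance."""
    vocab = {}
    for t in tokens:
        vocab.setdefault(t, len(vocab))
    return vocab

tokens = tokenize(NETLIST)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]
print(tokens)
print(ids)
```

A net name such as `in` maps to the same ID wherever it appears. As a result, the connections between components remain visible to attention layers even in this flat encoding.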

Moreover, the need for thorough training on labeled datasets cannot be overstated, since models must learn to distinguish among varied circuit designs and their expected outcomes. Despite these hurdles, transformers have considerable potential in circuit learning, which warrants further research into refining their application in this domain. This discussion lays the groundwork for exploring how transformer-based models can be optimized to learn electrical circuits more effectively.

Understanding Circuit Learning and Its Importance

Circuit learning refers to the methodology through which algorithms and machine learning models acquire the capability to recognize, analyze, and interpret electrical circuits. This process involves sophisticated techniques that enable these systems to identify patterns within circuit designs and operational behaviors. As our reliance on automation and advanced technologies escalates, understanding circuit learning becomes increasingly critical across a myriad of domains, including electronics design, industrial automation, and robotics.

In the realm of electronics design, effective circuit learning can significantly streamline the development process. By leveraging algorithms that have the ability to learn from extensive datasets of circuit schematics, designers can enhance efficiency and reduce the time required to innovate and implement new circuits. Furthermore, these intelligent systems can aid in optimizing circuit performance by predicting potential issues and suggesting improvements based on learned patterns.

Within the field of automation, the ability of algorithms to adapt and refine their understanding of circuit configurations allows for the development of more responsive and flexible automated systems. Advanced circuit learning capabilities enable machines to self-adapt to new circuit inputs, fostering increased reliability and efficiency in operational settings.

In robotics, where robots must process and respond to electrical signals from various sensors, the principles of circuit learning are essential. Robots equipped with these learning capabilities can efficiently manage complex tasks, such as navigation and task execution, by interpreting and acting upon the feedback received from their electronic components.

Ultimately, the significance of efficient circuit learning lies in its potential to revolutionize the design and functionality of electronic systems. As these technologies evolve, the implications for productivity and innovation across diverse sectors are profound, encouraging further investigation into how we can better train transformers and other models to learn effective circuits.

Current Approaches to Teaching Transformers Circuit Patterns

In artificial intelligence, transformers have emerged as a powerful architecture for many applications, including learning and predicting circuit patterns. Existing methods for training transformers in this domain rely mainly on supervised and unsupervised learning.

Supervised learning relies on labeled datasets, in which each example is paired with a target output. This approach is instrumental when specific circuit patterns must be recognized and categorized. Practitioners train the transformer on a substantial amount of labeled data representing diverse circuit patterns, which guides the model to learn associations between input features and their labels. A loss function measures how far the model's predictions fall from those labels. Minimizing it with gradient-based optimization gradually improves the model's accuracy in distinguishing intricate circuit designs.
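
For a classification task, a common choice of loss is cross-entropy: the negative log-probability the model assigns to the correct label. The sketch below uses a hypothetical three-class circuit-pattern classifier with made-up logits (raw scores). It shows that a confident wrong prediction is penalized far more than a confident right one. Training minimizes the average of this loss over the labeled dataset.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]

def cross_entropy(logits, label):
    """Negative log-probability the model assigns to the correct class."""
    return -math.log(softmax(logits)[label])

# Hypothetical classes for a circuit-pattern classifier.
CLASSES = ["low-pass filter", "high-pass filter", "voltage divider"]

label = CLASSES.index("low-pass filter")  # true class for this example: 0
confident_right = [3.0, 0.5, 0.2]  # highest score on the true class
confident_wrong = [0.2, 0.5, 3.0]  # highest score on "voltage divider"
print(f"loss when confidently right: {cross_entropy(confident_right, label):.3f}")
print(f"loss when confidently wrong: {cross_entropy(confident_wrong, label):.3f}")
```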

Unsupervised learning methods, by contrast, do not require labeled data, so transformers can discover patterns and structures in circuit data on their own. Techniques such as clustering and dimensionality reduction, often applied to the representations a model has learned, help reveal hidden features and circuit relationships without direct human labeling. Self-supervised learning has further blurred the line between the two approaches. It exploits large unlabeled datasets by deriving training targets, or pseudo-labels, from the data itself, which improves learning efficiency.
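
A minimal sketch of one self-supervised scheme, BERT-style masked-token pretraining, shows how targets can come from the data itself. Applied to hypothetical netlist tokens:

1. A random subset of tokens is hidden.
2. The hidden originals become the targets the model must predict.

This simplifies BERT's recipe, which also sometimes substitutes a random token or leaves a chosen token unchanged. The helper name and the masking probability are illustrative assumptions.

```python
import random

MASK = "<mask>"

def make_masked_example(tokens, mask_prob=0.15, seed=None):
    """Create a (masked input, targets) pair from unlabeled tokens.

    Each token is replaced by <mask> with probability mask_prob. targets holds
    the original token at masked positions and None elsewhere, so the training
    labels come from the data rather than from human annotation.
    """
    rng = random.Random(seed)
    masked, targets = [], []
    for t in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            targets.append(t)
        else:
            masked.append(t)
            targets.append(None)
    return masked, targets

tokens = "R1 in out 1k <sep> C1 out 0 1u <sep>".split()
masked, targets = make_masked_example(tokens, mask_prob=0.3, seed=0)
print(masked)
print(targets)
```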

In addition to these traditional approaches, researchers are exploring advancements such as adaptive learning rate schedules and novel architecture modifications that are tailored specifically to the unique challenges posed by circuit pattern recognition. These innovations aim to enhance the capabilities of transformers, allowing them to learn more sophisticated circuit patterns effectively.
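
As one concrete example of a learning-rate schedule, the original Transformer paper used a linear warmup followed by inverse-square-root decay. Two caveats apply:

- This is a fixed schedule rather than an adaptive optimizer, and the text above does not say which schedules circuit-learning researchers use.
- `d_model=512` and `warmup_steps=4000` are that paper's base-model settings, used here only as illustration.

```python
def transformer_lr(step, d_model=512, warmup_steps=4000):
    """Warmup-then-decay schedule from the original Transformer paper:

    lr = d_model**-0.5 * min(step**-0.5, step * warmup_steps**-1.5)

    The rate grows linearly for warmup_steps steps, peaks, then decays
    as 1/sqrt(step).
    """
    step = max(step, 1)  # avoid division by zero at step 0
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)

for s in (1, 1000, 4000, 16000, 64000):
    print(s, f"{transformer_lr(s):.6f}")
```

The warmup keeps early updates small while the model's statistics are still unstable. Quadrupling the step count after the peak halves the learning rate.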

Challenges in Forcing Transformers to Learn Circuits

Training transformers to gain a deeper understanding of circuits presents several notable challenges that limit their efficiency and effectiveness. One significant limitation is the scarcity of relevant data. Transformers perform best with large datasets, yet high-quality circuit datasets may be limited because of privacy concerns, proprietary restrictions, or the difficulty of assembling such data. This scarcity can lead to inadequate training and a poor grasp of circuit behavior.

Another challenge lies in the intricate nature of circuits themselves. Circuits often involve complex interdependencies, nonlinear behavior, and a wide variety of components, each of which adds difficulty to the learning process. Transformers are designed to capture relationships within data. Translating those relationships into practical circuit understanding, however, requires interpretations that are not always clear-cut. As a result, models may perform poorly when tasked with circuit analysis.

Additionally, the computational expense of training transformers cannot be overlooked. Self-attention compares every element of the input with every other element, so its compute and memory requirements grow quadratically with input length. Training therefore demands significant processing power, memory, and time, especially for extensive or intricate circuit layouts. Organizations must weigh these costs against the benefits of deploying such models, and the costs can become prohibitive in resource-constrained environments.
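
A quick calculation shows how fast this cost grows. Self-attention builds an n x n score matrix per head, so the memory for those scores grows with the square of the sequence length. The sketch assumes 8 heads, a batch of one, and 16-bit values. These are illustrative defaults, not figures from any particular circuit model.

```python
def attention_matrix_bytes(seq_len, num_heads=8, batch_size=1, bytes_per_value=2):
    """Memory for the attention-score matrices of one layer.

    Each head materializes a seq_len x seq_len matrix, so the cost grows
    quadratically with the number of tokens (e.g., netlist tokens).
    bytes_per_value=2 assumes 16-bit floats.
    """
    return batch_size * num_heads * seq_len * seq_len * bytes_per_value

for n in (1_000, 10_000, 100_000):
    gib = attention_matrix_bytes(n) / 2**30
    print(f"{n:>7} tokens -> {gib:,.2f} GiB per layer")
```

Multiplying the token count by 10 multiplies this memory by 100. Fused kernels such as FlashAttention avoid storing the full matrix, but the compute still grows quadratically.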

Finally, there’s the prevalent issue of overfitting. Given the limited availability of training data, transformers might end up memorizing the training samples rather than genuinely learning from them, which can lead to poor generalization when encountering unseen data. Striking a balance between model complexity and generalization remains a critical challenge in this context. In summary, the challenges of limited data availability, model complexity, computational demands, and the risk of overfitting must be systematically addressed to enhance the ability of transformers to learn and interpret circuits effectively.
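
A standard guard against overfitting is early stopping. The idea is to monitor loss on held-out validation data and keep the weights from the epoch where that loss was lowest. The sketch below applies patience-based stopping to made-up validation losses. The `patience` value of 3 is an arbitrary assumption.

```python
def early_stopping_epoch(val_losses, patience=3):
    """Return the epoch whose weights to keep under patience-based early stopping.

    Training stops once validation loss has failed to improve for `patience`
    consecutive epochs; the best epoch seen so far is returned.
    """
    best_epoch, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss, waited = epoch, loss, 0
        else:
            waited += 1
            if waited >= patience:
                break  # no improvement for `patience` epochs in a row
    return best_epoch

# Illustrative (made-up) validation losses: steady improvement, then overfitting.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61, 0.67]
print(early_stopping_epoch(val_losses))
```

Here validation loss bottoms out at epoch 3 and then rises for three epochs. That pattern is the typical signature of a model starting to memorize its training set.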

Evaluating Transformer Performance in Circuit Learning

Evaluating the performance of transformers in the realm of circuit learning is a critical aspect of understanding their capabilities and limitations. In this context, several criteria and metrics are employed to provide an objective assessment of their effectiveness. The primary focus is on how well these models can learn and represent circuit designs, which require complex reasoning and precision.

One of the most common metrics for evaluating transformer performance is accuracy, the proportion of the model's predictions that are correct. In circuit learning, accuracy is often assessed by the model's ability to generate correct netlists or schematic representations from a set of design specifications. This metric alone can be misleading, however, especially when classes are imbalanced (for example, when faulty designs are rare). Precision, recall, and F1-score are therefore frequently reported as well, to give a fuller picture of the model's proficiency across tasks.
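
The sketch below computes these metrics for a hypothetical fault-detection task with imbalanced classes, where 1 marks a faulty design. The labels are invented to show why accuracy alone can mislead: the model is 90% accurate yet catches only half of the faulty designs.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 with respect to one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return accuracy, precision, recall, f1

# Hypothetical labels: 1 = faulty design, 0 = correct design.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
y_pred = [0, 0, 0, 0, 0, 0, 0, 0, 0, 1]
print(classification_metrics(y_true, y_pred))
```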

Benchmark datasets also play a crucial role in evaluation. Collections such as the ISPD (International Symposium on Physical Design) contest benchmarks and the open-source hardware designs hosted on OpenCores can be used to compare different transformer models. They span designs from simple to complex, so they allow meaningful comparison of model architectures and configurations. Note, though, that they were not originally created for evaluating machine learning models.

Another important factor in evaluating transformer performance is generalization. A model that performs well on training data but poorly on unseen data is likely overfitting, a significant challenge in machine learning. Generalization measures, such as the gap between performance on training data and on held-out test data, help assess the robustness of what a transformer has learned. They also indicate whether the model can apply that knowledge to a variety of circuit design scenarios.

Investment in developing these evaluation metrics not only enhances our understanding of transformer models in circuit learning but also provides a framework for continuous improvement and innovation in this field.

Recent Advances and Innovations in Transformer Models

The field of transformer models has experienced significant advancements in recent years, impacting their performance and efficiency in various applications. Researchers have been focusing on enhancing transformer architectures and developing innovative training strategies to improve learning capabilities, especially in specialized areas such as circuit learning.

One notable development is the introduction of sparse transformer architectures. Instead of letting every position attend to every other, these models restrict each position to a subset of the input. This concentrates computation on the most relevant parts and improves both speed and memory efficiency. Their cost grows far more slowly with input length than that of dense attention, so sparse transformers can handle larger inputs and more complex learning tasks without the quadratic growth in computational cost.
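
Sparse attention comes in several patterns. One of the simplest is a sliding window, in which each position attends only to neighbours within a fixed distance. The sketch below counts how many query-key pairs a window of 16 evaluates compared with dense attention over 1,024 tokens; both sizes are arbitrary assumptions. Published sparse transformers also use other patterns, such as strided or global tokens. Efficient implementations never build the mask as an explicit list.

```python
def local_attention_mask(n, window):
    """Sliding-window sparse attention: position i may attend to j only if |i - j| <= window."""
    return [[abs(i - j) <= window for j in range(n)] for i in range(n)]

def attended_pairs(mask):
    """Number of (query, key) pairs the attention layer actually scores."""
    return sum(sum(row) for row in mask)

n = 1024
dense = n * n
sparse = attended_pairs(local_attention_mask(n, window=16))
print(dense, sparse, f"{sparse / dense:.1%}")
```

The sliding window scores about 3% of the pairs. Its cost grows linearly with sequence length rather than quadratically.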

Another approach integrates attention mechanisms that adaptively prioritize information. The attention scores are computed dynamically from each input, so these transformers can learn nuanced relationships within the data that improve their understanding of circuit structures. This adaptability helps the models discern the intricate patterns characteristic of effective circuit designs.

The exploration of hybrid models that combine transformers with other neural architectures, like convolutional neural networks (CNNs), has shown promise as well. Such hybrids capitalize on the strengths of both model types; the convolutional layers excel at extracting spatial hierarchies, while the transformer layers retain the ability to model long-range dependencies. This synergy could potentially lead to breakthroughs in how transformers learn and generalize complex circuit designs.
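
To illustrate the "local" half of such a hybrid, here is a plain one-dimensional convolution over a hypothetical per-position signal. Each output depends only on a small neighbourhood, which is the locality that convolutional layers contribute. In a hybrid model, features like these would then pass to attention layers that relate distant positions. Circuit layouts are usually two-dimensional, so this one-dimensional case is a simplification.

```python
def conv1d(signal, kernel):
    """'Valid' 1-D convolution, implemented as cross-correlation (as most
    deep-learning libraries do). Each output mixes only len(kernel)
    neighbouring inputs, i.e., it is a local feature.
    """
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

# Hypothetical per-position feature (e.g., a value sampled along a trace).
signal = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0]
edge_detector = [-1.0, 1.0]  # responds where the value steps up or down
local_features = conv1d(signal, edge_detector)
print(local_features)
```

The kernel here is hand-picked; in a trained CNN the kernel weights are learned.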

Additionally, advances in self-supervised learning have been pivotal in transformer training. By pre-training models on unlabeled data, researchers have made transformers more versatile. This self-supervised approach can help models develop a robust understanding of circuit structure before they are fine-tuned for downstream applications.

Case Studies: Successful Implementations of Transformers in Circuit Learning

Transformers have emerged as a robust tool for various applications in machine learning, and their implementation in circuit analysis and design represents a significant advancement in the field. One prominent case study involved the use of transformers to analyze complex electronic circuits in the semiconductor industry. Researchers implemented a transformer model to predict the operational efficiency of various circuit configurations, which ultimately led to enhanced performance metrics. By utilizing historical data, the model was able to identify patterns and correlations that human engineers might overlook, thus streamlining the optimization process.

Another noteworthy example comes from the realm of integrated circuit (IC) design. Here, transformers were applied to automate the design verification process. Traditionally a labor-intensive task requiring extensive human oversight, the integration of transformers allowed for a more efficient verification workflow. In this case, the model was trained on extensive datasets containing design specifications alongside their verification outcomes. As a result, the transformer significantly reduced the time taken to validate circuit designs, demonstrating not only time efficiency but also an improved accuracy rate in identifying potential design flaws.

A further illustration of transformers in circuit learning can be observed in the development of adaptive filtering systems for communication applications. In this instance, researchers leveraged a transformer to enhance the signal processing capabilities of circuits used in wireless communications. By modeling the adaptive filtering process, the transformer learned to adjust filter parameters in real time, leading to improved signal quality and reduced interference. This case study highlights the transformer's versatility and its potential to significantly elevate circuit performance across various applications.

These case studies provide crucial insights into the practical capabilities of transformers in learning and improving circuit designs. The successes attained in these implementations not only showcase the efficacy of transformers but also pave the way for future explorations in this promising area of research.

Future Directions for Research in Transformer Circuit Learning

The exploration of transformer architectures in the domain of circuit learning presents an exciting frontier for both machine learning and electrical engineering. As the capabilities of transformer models evolve, researchers are increasingly focused on fine-tuning their performance in various applications related to circuit design and analysis. One promising direction for future research involves enhancing the ability of transformers to interpret and generate circuit schematics. By integrating graphical data with numerical features, transformers could become adept at understanding complex relationships within circuits, leading to potential breakthroughs in automated circuit design.
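
One way to combine graph structure with a transformer, sketched here under assumptions, is to use the circuit's connectivity as an attention mask. Each component then attends only to components that share a net with it. The netlist dictionary and the `adjacency_mask` helper are hypothetical. In practice, heavily shared nets such as ground may need special handling because they connect nearly everything.

```python
from collections import defaultdict

# Hypothetical netlist: each component lists the nets its terminals connect to.
NETLIST = {
    "V1": ["in", "0"],
    "R1": ["in", "out"],
    "C1": ["out", "0"],
    "R3": ["out", "load"],
}

def adjacency_mask(netlist):
    """mask[a][b] is True when components a and b share a net (or a == b).

    Used as an attention mask, this restricts each component to attend only
    to its electrical neighbours, injecting the circuit graph into attention.
    """
    by_net = defaultdict(set)
    for comp, nets in netlist.items():
        for net in nets:
            by_net[net].add(comp)
    names = list(netlist)
    mask = {a: {b: a == b for b in names} for a in names}
    for comps in by_net.values():
        for a in comps:
            for b in comps:
                mask[a][b] = True
    return mask

mask = adjacency_mask(NETLIST)
for a, row in mask.items():
    print(a, [b for b, ok in row.items() if ok])
```

`V1` and `R3` share no net, so neither may attend to the other. Both still reach `R1` and `C1` directly, and can reach each other indirectly across stacked layers.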

Moreover, the quest for improving the learning efficiency of transformers can benefit from incorporating hybrid models that leverage both supervised and unsupervised learning strategies. Combining transformer architecture with techniques like reinforcement learning may yield more adaptable and smarter models, capable of solving intricate circuit learning tasks. Additionally, expanding the dataset size and diversity may enhance the model performance, allowing transformers to capture a wider range of circuit behaviors and operational conditions.

In light of these advancements, the implications of transformer circuit learning extend far beyond circuit design. Such innovations could significantly impact the development of intelligent systems that can adapt to changing electrical environments, improve reliability in circuit functionality, and reduce the need for extensive human intervention in engineering processes. Furthermore, examining how transformers can assist in predictive maintenance of circuit systems is an area ripe for exploration. Ultimately, the future of transformer circuit learning promises not only to redefine research methodologies in machine learning but also to revolutionize practices within electrical engineering, leading to more efficient and reliable circuit designs.

Conclusion: The Potential of Transformers in Circuit Analysis

Throughout this discussion, the role of transformers in improving circuit analysis has been underscored. As machine learning models, transformers offer robust methods for building richer representations of complex circuit patterns. Their self-attention mechanisms let the model focus on relevant features, streamlining the learning process in circuit analytics.

Despite the promise that transformers hold, it is crucial to acknowledge existing challenges. For instance, incorporating transformers effectively into traditional circuit design methodologies may require significant adaptation of existing frameworks. Additionally, the complexity and scale of data encountered in real-world circuit analysis can pose obstacles, necessitating the development of more efficient training protocols and computational resource management.

Looking ahead, researchers are increasingly optimistic about addressing these challenges. As advancements in transformer architectures continue to emerge, there is potential for significant improvements in circuit analysis applications. Future studies may lead to novel hybrid models that integrate transformers with other machine learning techniques, thereby capitalizing on their strengths while mitigating limitations. Moreover, interdisciplinary collaborations may pave the way for transformative insights at the intersection of machine learning and electrical engineering.

In conclusion, while hurdles remain, the future of transformer models in circuit learning is bright. Continued exploration in this domain promises to unlock new methodologies, enabling engineers to design more efficient and effective circuits. This journey into enhancing the intersection between transformers and circuit analysis could lead to groundbreaking developments, setting the stage for advancements that benefit both academia and industry.