Introduction to Chain-of-Thought Distillation

Chain-of-thought distillation is a training technique in artificial intelligence (AI) and machine learning that aims to improve a model's reasoning by teaching it to work through problems step by step, in a way that mimics human-like reasoning. The approach grew out of the need for AI systems that execute tasks more efficiently and effectively, particularly in complex scenarios that require multi-step reasoning.

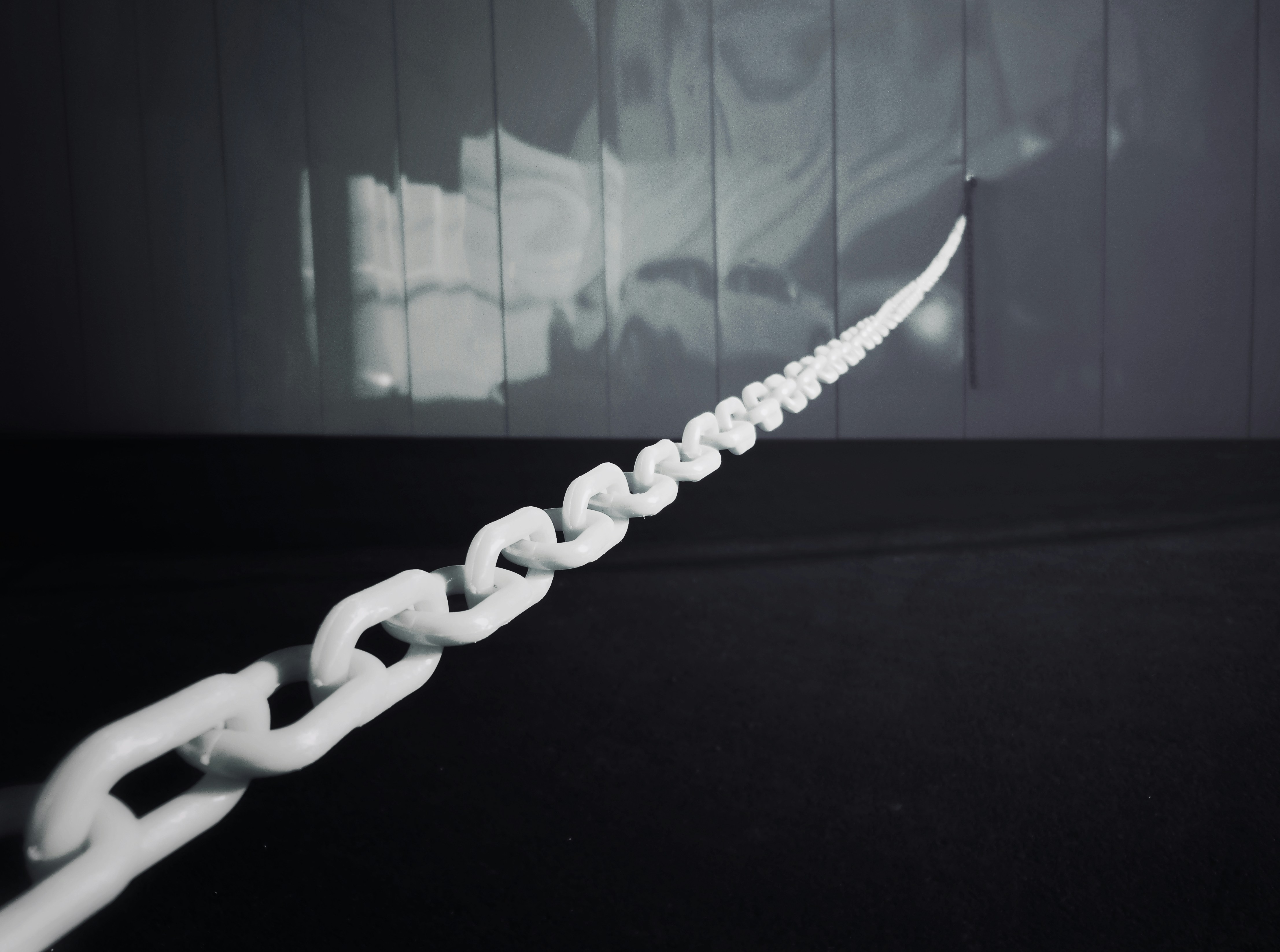

The core idea of chain-of-thought distillation is to instill explicit reasoning chains in AI models. During training, a model learns to reproduce sequential reasoning traces, step-by-step explanations written by human experts or generated by a larger teacher model, rather than only final answers. Because each trace breaks a complicated task into manageable steps, the model learns from the kind of structured reasoning that humans naturally employ.
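To make the training setup concrete, here is a minimal Python sketch of how question, reasoning-trace, and answer triples might be turned into student training pairs. It assumes the teacher's outputs are already available (the `TeacherOutput` records below are hand-written stand-ins, not real model output) and applies one common heuristic: discarding traces whose final answer disagrees with the known correct answer. The prompt format and all names are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Example:
    question: str
    gold_answer: str  # known correct answer, used only for filtering


@dataclass
class TeacherOutput:
    rationale: str  # step-by-step reasoning produced by the teacher
    answer: str     # the teacher's final answer


def build_distillation_set(examples, teacher_outputs):
    """Turn teacher reasoning traces into (prompt, target) pairs for a student.

    Traces whose final answer disagrees with the gold answer are dropped,
    so the student only imitates chains that reach a correct conclusion.
    """
    dataset = []
    for ex, out in zip(examples, teacher_outputs):
        if out.answer.strip() != ex.gold_answer.strip():
            continue  # discard reasoning that ends in a wrong answer
        prompt = f"Question: {ex.question}\nReason step by step."
        # The target contains the full chain, not just the answer.
        target = f"{out.rationale}\nAnswer: {out.answer}"
        dataset.append({"prompt": prompt, "target": target})
    return dataset


# Hypothetical usage with hand-written stand-ins for teacher output.
examples = [
    Example("What is 3 + 4 * 2?", "11"),
    Example("What is 10 / 2 + 1?", "6"),
    Example("What is 9 - 3 * 2?", "3"),
]
teacher_outputs = [
    TeacherOutput("4 * 2 = 8. 3 + 8 = 11.", "11"),
    TeacherOutput("10 / 2 = 5. 5 + 1 = 6.", "6"),
    TeacherOutput("9 - 3 = 6. 6 * 2 = 12.", "12"),  # wrong order of operations
]
distillation_set = build_distillation_set(examples, teacher_outputs)
for pair in distillation_set:
    print(pair["target"])
```

The student would then be fine-tuned to generate `target` given `prompt`, so it learns to emit the intermediate steps as well as the answer. Filtering on answer correctness is a design choice: it removes some flawed chains, although a chain can still reach the right answer through faulty steps.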

In practice, chain-of-thought distillation improves a model's capabilities by helping it capture the logical connections and dependencies inherent in a task. When faced with a problem, the model is trained to work through a sequence of intermediate steps, leading to conclusions that are coherent and relevant. This not only improves performance on straightforward tasks but can also substantially strengthen the model's ability to tackle more sophisticated challenges.

This approach matters because it addresses two critical limitations of current AI technologies: limited interpretability and shallow reasoning. By integrating human-like reasoning patterns into AI models, chain-of-thought distillation moves machine learning a significant step toward systems that can operate more autonomously and intelligently.

Understanding World Models

World models are the internal representations that artificial intelligence systems build to simulate the external environment. They act as a cognitive framework that lets an AI system interact effectively with the world, much as humans rely on an internal picture of their surroundings to perceive and navigate them. The concept covers a range of methods, particularly in machine learning and robotics, whose shared objective is to let an AI system predict future states from past and current observations.

At their core, world models learn from data gathered through various inputs, such as sensors and past interactions, to build an internal map of the environment. This involves capturing the critical relationships and dynamics that govern how objects and phenomena in the environment behave. Through continuous learning and adaptation, the model is updated as new observations arrive, refining its understanding over time. This adaptive framework is what allows AI systems to make informed predictions and decisions.
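As a deliberately tiny illustration of learning dynamics from observations, the sketch below fits a one-dimensional linear transition model, s[t+1] ≈ a·s[t] + b·u[t], to a logged trajectory using ordinary least squares. Real world models are usually large neural networks over high-dimensional observations; the linear form, the function names, and the synthetic data are assumptions chosen only to show the predict-from-past-observations idea.

```python
def fit_linear_dynamics(states, actions):
    """Least-squares fit of s[t+1] = a * s[t] + b * u[t] from one trajectory.

    `states` has one more entry than `actions`: states[t + 1] is the
    observation that followed applying actions[t] in states[t].
    """
    if len(states) != len(actions) + 1:
        raise ValueError("need exactly one more state than actions")
    xs, ys = states[:-1], states[1:]
    us = actions
    # Entries of the 2x2 normal equations for the unknowns (a, b).
    sxx = sum(x * x for x in xs)
    suu = sum(u * u for u in us)
    sxu = sum(x * u for x, u in zip(xs, us))
    sxy = sum(x * y for x, y in zip(xs, ys))
    suy = sum(u * y for u, y in zip(us, ys))
    det = sxx * suu - sxu * sxu
    if abs(det) < 1e-12:
        raise ValueError("data do not separate the effect of state and action")
    # Solve the 2x2 system with Cramer's rule.
    a = (sxy * suu - suy * sxu) / det
    b = (sxx * suy - sxu * sxy) / det
    return a, b


def predict_next(state, action, a, b):
    """One-step prediction from the fitted model."""
    return a * state + b * action


# Synthetic log generated by assumed "true" dynamics a=0.9, b=0.5.
actions = [1.0, -0.5, 0.3, 0.0, 2.0, -1.0]
states = [1.0]
for u in actions:
    states.append(0.9 * states[-1] + 0.5 * u)

a_hat, b_hat = fit_linear_dynamics(states, actions)
print(round(a_hat, 6), round(b_hat, 6))
print(predict_next(states[-1], 1.0, a_hat, b_hat))
```

Because the synthetic data are noise-free, the fit recovers the generating parameters almost exactly; with real, noisy observations the estimates would only approximate them, and refitting as new data arrive is one simple way such a model refines its understanding over time.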

The predictive capabilities of world models are particularly pertinent in applications such as autonomous driving and robotic manipulation, where understanding the environment is crucial for safe and effective operation. By simulating various scenarios, AI can foresee potential obstacles or changes in the environment, allowing it to respond proactively rather than reactively. This foresight contributes to increased efficiency and safety in performing complex tasks.
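The value of simulating ahead can be shown with a toy rollout. In the Python sketch below, a hypothetical learned transition function `step` predicts how a vehicle's position and velocity evolve under a chosen acceleration, and `first_predicted_collision` runs a planned action sequence entirely inside the model to see whether, and when, it would reach an obstacle. The simple kinematic model, the time step, and the numbers are assumptions made for illustration.

```python
def step(state, accel, dt=0.1):
    """Hypothetical learned transition: (position, velocity) -> next state."""
    pos, vel = state
    return (pos + vel * dt, vel + accel * dt)


def first_predicted_collision(state, plan, obstacle_pos, dt=0.1):
    """Roll the model forward over `plan` without acting in the real world.

    Returns the index of the first step whose predicted position reaches
    `obstacle_pos`, or None if the whole plan is predicted to be safe.
    """
    for t, accel in enumerate(plan):
        state = step(state, accel, dt)
        if state[0] >= obstacle_pos:
            return t
    return None


start = (0.0, 10.0)  # position 0 m, velocity 10 m/s
cruise = [0.0] * 10
brake = [-50.0, -50.0] + [0.0] * 8
print(first_predicted_collision(start, cruise, obstacle_pos=3.0))
print(first_predicted_collision(start, brake, obstacle_pos=3.0))
```

Because the rollout flags the cruising plan before it is executed, the system can switch to the braking plan proactively instead of reacting after the fact.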

Chain-of-thought distillation can further improve world models. By distilling reasoning processes into more streamlined forms, an AI system can make better use of its internal model, which can lead to more accurate predictions. This combination of world models and explicit reasoning techniques is a promising development in the pursuit of more autonomous and intelligent AI systems.

The Link Between Chain-of-Thought Distillation and World Models

Chain-of-thought distillation is a machine learning process that transfers complex reasoning behavior from a large model (the teacher) to a smaller, more efficient one (the student). The technique has attracted significant attention for its potential to enhance world models, enabling them to better understand and simulate their environments.

The primary function of world models is to create internal representations of the environment that can be used for tasks such as decision-making, navigation, and responding to external stimuli. Integrating chain-of-thought distillation into the training process can improve these models in several ways. First, distillation compresses the knowledge captured by larger models into more compact versions that run with less computational power while aiming to retain the larger model's critical insights.
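One way to express "transferring knowledge from a larger model into a compact one" as a training objective is the soft-label distillation loss sketched below: the student is penalized by the KL divergence between the teacher's and its own temperature-softened output distributions. Many chain-of-thought distillation setups instead fine-tune the student with ordinary cross-entropy on the teacher's generated text, so treat this as one possible objective, applied for example at each token position of a reasoning chain when teacher logits are available. The logits below are made-up numbers.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) between softened distributions, times T**2.

    The T**2 factor is the usual convention for keeping gradient magnitudes
    comparable when the temperature changes.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


teacher = [4.0, 1.0, 0.5]  # hypothetical logits over three next tokens
close_student = [3.8, 1.1, 0.4]
far_student = [0.5, 1.0, 4.0]
print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

A student that already ranks the tokens like the teacher incurs a small loss, while one that prefers a different token incurs a much larger one.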

Second, chain-of-thought distillation encourages distilled models to internalize reasoning patterns similar to human cognition. This alignment with human-like thought processes can make world models more interpretable and reliable, and better able to handle complex situations. With an emphasis on stepwise reasoning and logical progression, world models become better equipped to forecast outcomes from previous experience, improving their predictive capabilities.
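The link between stepwise reasoning and forecasting can be sketched by making a world model's rollout explicit. The hypothetical helper below advances a state one step at a time and records each intermediate prediction as a line of text, producing a forecast together with a readable reasoning chain. Whether such traces help when used as distillation targets for a world model is an assumption of this sketch, not a result reported here; the additive `toy_transition` stands in for a learned model.

```python
def rollout_with_trace(state, plan, transition):
    """Forecast by applying `transition` step by step, logging each step.

    Returns the final predicted state and a newline-joined reasoning trace.
    """
    lines = []
    for t, action in enumerate(plan, start=1):
        next_state = transition(state, action)
        lines.append(f"Step {t}: from {state}, apply {action} -> expect {next_state}")
        state = next_state
    lines.append(f"Forecast: {state}")
    return state, "\n".join(lines)


def toy_transition(state, action):
    """Stand-in for a learned model: the action is simply added to the state."""
    return state + action


final_state, trace = rollout_with_trace(0, [1, 2, 3], toy_transition)
print(trace)
```

Each line exposes one intermediate prediction, so an error in the forecast can be traced back to the step where the model first went wrong.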

Additionally, adaptive distillation methods can help world models keep learning in dynamic environments, which is crucial in real-world applications where the context may shift rapidly. By combining chain-of-thought distillation with world models, researchers are working toward more robust and responsive systems that reason in a more human-like way and perform well across diverse scenarios.

Key Benefits of Chain-of-Thought Distillation for World Models

Chain-of-thought distillation is a relatively new approach that can substantially improve the performance and capabilities of world models. It extracts key reasoning patterns from large, complex neural networks and transfers them into simpler models while aiming to preserve their depth of understanding. The foremost benefit is improved reasoning: world models trained with chain-of-thought distillation can process information more efficiently and draw logical conclusions from the distilled reasoning patterns. This can lead to more accurate representations and more effective simulation of different scenarios.

Another key advantage lies in decision-making. Using distilled reasoning chains, world models can evaluate the outcomes of multiple alternatives under different inputs before acting, which supports adaptive, informed decisions. This adaptability is particularly valuable in dynamic environments that demand quick and rational responses. As a result, models trained this way can outperform conventional models on tasks where effective decision-making is crucial.
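Evaluating multiple outcomes before committing can be illustrated with a very simple model-based planner: simulate each candidate action for a few steps with the world model, score the predicted end state, and pick the best. The sketch below uses exhaustive search over a small discrete action set; the `transition` and `score` functions, the horizon, and the target value are illustrative assumptions, and practical systems typically use more sophisticated planners.

```python
def choose_action(state, candidates, transition, score, horizon=3):
    """Return the candidate whose simulated end state scores highest.

    Each candidate action is held constant for `horizon` model steps;
    only the world model is queried, never the real environment.
    """
    best_action, best_value = None, float("-inf")
    for action in candidates:
        predicted = state
        for _ in range(horizon):
            predicted = transition(predicted, action)
        value = score(predicted)
        if value > best_value:
            best_action, best_value = action, value
    return best_action


def transition(position, velocity):
    """Toy dynamics: move by `velocity` units per step."""
    return position + velocity


def score(position, target=5):
    """Prefer end states close to the target position."""
    return -abs(position - target)


best = choose_action(0, [-1, 0, 1, 2, 3], transition, score)
print(best)
```

Here holding velocity 2 for three steps is predicted to end at position 6, the closest of the candidates to the target of 5, so it is selected.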

Chain-of-thought distillation can also improve environmental prediction. The distilled knowledge embedded in the models strengthens their ability to forecast future states from current observations. Better predictive accuracy allows more faithful simulation of complex phenomena and helps models adapt to evolving conditions, increasing their reliability in practical applications. Organizations that build world models with this technique may therefore gain more nuanced insights and better outcomes from their predictive analyses.
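Claims of improved predictive accuracy are easiest to check against a measured baseline. The minimal Python sketch below scores multi-step forecasts: it reports the error at each step of the horizon (errors often grow as predictions compound) and the overall mean squared error, so two models can be compared on the same observed trajectory. The trajectories and model labels are invented for illustration.

```python
def squared_errors_by_step(predicted, observed):
    """Squared error at each forecast step (index 0 = one step ahead)."""
    if len(predicted) != len(observed) or not observed:
        raise ValueError("trajectories must be non-empty and the same length")
    return [(p - o) ** 2 for p, o in zip(predicted, observed)]


def mean_squared_error(predicted, observed):
    """Average of the per-step squared errors over the whole horizon."""
    errors = squared_errors_by_step(predicted, observed)
    return sum(errors) / len(errors)


observed = [1.0, 2.5, 4.0, 6.0]
model_a_forecast = [1.0, 2.0, 3.0, 4.0]  # hypothetical forecasts
model_b_forecast = [1.0, 2.5, 3.5, 5.5]
print(squared_errors_by_step(model_a_forecast, observed))
print(mean_squared_error(model_a_forecast, observed))
print(mean_squared_error(model_b_forecast, observed))
```

Looking at the per-step errors, not only the average, shows whether a model's advantage holds at longer horizons, which is where forecasting typically degrades.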

In essence, the incorporation of chain-of-thought distillation into world models results in improved reasoning, informed decision-making, and enhanced prediction capabilities, all of which contribute to the overall efficacy of these systems in various real-world applications.

Challenges in Implementing Chain-of-Thought Distillation

Implementing chain-of-thought distillation in world models presents several prominent challenges that must be addressed to realize its potential. One of the primary obstacles is computational cost. The teacher model must be run over large datasets to generate reasoning traces, which are then filtered and refined before the student is trained on them, and each of these stages consumes substantial compute. As models grow in size and complexity, hardware and energy requirements escalate, making the process cost-prohibitive for many practitioners.
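The scale of these costs can be estimated with back-of-envelope arithmetic. The sketch below uses two widely used rules of thumb for dense transformers: roughly 2 × N FLOPs per generated token for inference and roughly 6 × N FLOPs per token for training, where N is the parameter count. These approximations ignore attention overhead, prompt tokens, and hardware efficiency, and every number in the example (dataset size, traces per question, trace length, model sizes) is a hypothetical input rather than a measurement.

```python
def distillation_flops(num_questions, traces_per_question, tokens_per_trace,
                       teacher_params, student_params, epochs=1):
    """Rough FLOP estimate for chain-of-thought distillation.

    Teacher generation: ~2 * N_teacher FLOPs per generated token.
    Student training:   ~6 * N_student FLOPs per training token per epoch,
    assuming every generated trace is kept for training.
    """
    generated_tokens = num_questions * traces_per_question * tokens_per_trace
    teacher_flops = 2 * teacher_params * generated_tokens
    student_flops = 6 * student_params * generated_tokens * epochs
    return teacher_flops, student_flops


teacher_cost, student_cost = distillation_flops(
    num_questions=100_000,
    traces_per_question=4,
    tokens_per_trace=500,
    teacher_params=70_000_000_000,
    student_params=7_000_000_000,
)
print(f"teacher: {teacher_cost:.1e} FLOPs, student: {student_cost:.1e} FLOPs")
```

With these illustrative inputs, generating the traces with the larger teacher costs more than training the student, which is one reason the number and length of traces are worth budgeting carefully.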

Additionally, the success of chain-of-thought distillation depends heavily on the quality and quantity of the training data. Insufficient or biased data can produce incomplete or skewed reasoning chains, which in turn reduce the effectiveness of the distilled model. Data requirements are not only large but also varied: the data must cover diverse scenarios so that the learned reasoning chains reflect a broad spectrum of knowledge. This makes rigorous data collection and curation necessary, which can be time-consuming and resource-intensive.
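One inexpensive curation step is checking whether the distillation set covers the scenarios the model is expected to handle. The Python sketch below flags scenario categories whose share of the data falls below a chosen threshold, including categories that are missing entirely. The category labels and the 5% threshold are hypothetical choices.

```python
from collections import Counter


def underrepresented_scenarios(scenario_labels, expected, min_fraction=0.05):
    """Return expected scenario categories whose share of the data is below
    `min_fraction`; categories that never appear count as zero share."""
    if not scenario_labels:
        return sorted(expected)
    counts = Counter(scenario_labels)  # missing keys count as 0
    total = len(scenario_labels)
    return sorted(s for s in expected if counts[s] / total < min_fraction)


labels = ["highway"] * 60 + ["urban"] * 37 + ["snow"] * 3
expected = ["highway", "urban", "snow", "night"]
print(underrepresented_scenarios(labels, expected))
```

Passing the list of expected categories explicitly matters: a plain count of the data can only report what is present, never what is absent.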

Biases embedded in the source models pose another challenge. If the teacher models harbor specific biases, these can be propagated, and even amplified, during distillation. This can introduce systematic errors into the reasoning chains and undermine the reliability of the conclusions drawn. Addressing these biases requires sustained monitoring of data quality and techniques that promote fairness and equity in AI outputs.
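Whether distillation propagates or amplifies a teacher's biases can be checked empirically by comparing error rates per group on the same evaluation set. The sketch below computes per-group error rates for teacher and student and flags groups where the student is worse by more than a tolerance. The record format, group names, and tolerance are assumptions; a real audit would also need enough examples per group for the differences to be meaningful.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """records: iterable of (group, prediction, label) -> {group: error rate}."""
    totals, errors = defaultdict(int), defaultdict(int)
    for group, prediction, label in records:
        totals[group] += 1
        errors[group] += int(prediction != label)
    return {g: errors[g] / totals[g] for g in totals}


def amplified_groups(teacher_records, student_records, tolerance=0.05):
    """Groups where the student's error rate exceeds the teacher's by more
    than `tolerance`. A group the teacher was never evaluated on is compared
    against 0.0, so it is flagged if the student's error there exceeds the
    tolerance."""
    teacher_rates = error_rate_by_group(teacher_records)
    student_rates = error_rate_by_group(student_records)
    return sorted(
        g for g, rate in student_rates.items()
        if rate - teacher_rates.get(g, 0.0) > tolerance
    )


teacher_eval = [("A", 1, 1), ("A", 0, 0), ("A", 1, 0), ("A", 0, 0),
                ("B", 1, 1), ("B", 0, 0), ("B", 1, 0), ("B", 0, 0)]
student_eval = [("A", 1, 1), ("A", 0, 0), ("A", 1, 0), ("A", 0, 0),
                ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 0)]
print(error_rate_by_group(student_eval))
print(amplified_groups(teacher_eval, student_eval))
```

In this toy data the student matches the teacher on group A but doubles its error rate on group B, which is the pattern of amplification the audit is meant to surface.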

In the face of these challenges, a strategic approach to overcoming computational limitations, ensuring high-quality data, and actively addressing biases is essential for the successful integration of chain-of-thought distillation into world models.

Case Studies: Successful Applications of This Methodology

Chain-of-thought distillation has emerged as a powerful technique to refine world models across various domains, resulting in improved performance and accuracy. This section delves into several noteworthy case studies that illustrate the practical application of this methodology.

In the field of autonomous vehicles, developers have successfully employed chain-of-thought distillation to enhance decision-making algorithms. By systematically analyzing the reasoning processes behind complex driving scenarios, researchers were able to train models that not only predict the behavior of other road users but also adapt to dynamic environments more effectively. This enhanced capability has led to significant advancements in safety and efficiency for autonomous systems.

Another compelling example can be found in healthcare, specifically in the realm of diagnostics. Machine learning models have been refined through chain-of-thought distillation to better interpret medical imaging data. By integrating expert reasoning patterns during the training phase, these models achieved higher diagnostic accuracy and reduced false positives. This success story underscores how chain-of-thought methodologies can directly impact patient outcomes and streamline clinical workflows.

Additionally, in the financial sector, the application of chain-of-thought distillation has been transformative in fraud detection systems. By enabling algorithms to replicate human-like reasoning, financial institutions have significantly reduced their vulnerability to fraudulent activities. Incorporating historical patterns and expert insights, these systems now provide a higher level of security, showcasing the importance of innovative model training methods.

These case studies collectively emphasize the versatility and effectiveness of chain-of-thought distillation in enhancing world models. Across diverse fields such as transportation, healthcare, and finance, the integration of this methodology has proven instrumental in driving advancements and improving decision-making processes.

Future Directions and Research Opportunities

The field of chain-of-thought distillation is poised for significant advancements, particularly in its application to world models within artificial intelligence. As researchers continue to explore the intricate dynamics of thought processes and their representations, several emerging trends have surfaced that may shape future developments. First and foremost, enhancing the interpretability of models through chain-of-thought distillation is gaining traction. This focus aims to produce models that not only perform well but also offer insight into their decision-making processes, thus fostering a better understanding of AI behavior in complex scenarios.

Furthermore, the integration of chain-of-thought distillation into reinforcement learning environments is an area ripe for exploration. By leveraging this approach, researchers could create more robust world models that are capable of understanding and predicting different agent behaviors in diverse settings. This capability will be crucial as we move towards general-purpose AI systems that need to navigate real-world challenges effectively.

Another promising direction revolves around the collaborative aspect of chain-of-thought distillation, particularly in multi-agent systems. By incorporating mechanisms that allow for knowledge sharing among agents, we can enhance the collective intelligence of artificial systems. This could lead to dramatic improvements in task efficiency and adaptability, fostering environments where collaborative learning among AI agents will shape future applications.

Moreover, approaches that draw on the study of human cognitive processes could bring new insights to the distillation framework. Understanding how humans think and reason can inform the design of more sophisticated world models that capture nuanced human thought patterns, paving the way for ethical AI development. Overall, chain-of-thought distillation holds considerable potential, and continued research in this domain could significantly influence the trajectory of AI technology.

Ethical Considerations and Implications

The implementation of chain-of-thought distillation in world models raises significant ethical concerns that warrant thorough examination. As AI technologies evolve, their integration into society poses challenges that require careful navigation to mitigate potential negative impacts. One of the foremost ethical considerations involves transparency. The process by which AI systems arrive at conclusions or predictions should be understandable to users, especially when these systems influence critical decision-making processes in areas such as healthcare, finance, and law enforcement.

Societal perceptions play a crucial role in shaping the acceptability of chain-of-thought distillation. Trust in AI systems can easily be undermined if people perceive that decisions are made without adequate explanation. Furthermore, the risk of biases being reinforced through poorly designed models poses significant ethical dilemmas. Models trained on biased data can propagate unfair outcomes, exacerbating existing inequalities in society. Thus, ensuring robust training on diverse and representative datasets is essential to address potential issues related to fairness and discrimination.

Additionally, the potential for misuse of chain-of-thought distillation technology presents another layer of ethical challenges. As AI systems become more sophisticated, there exists a risk that malicious actors may exploit these technologies for deceptive practices, manipulation, or misinformation. This highlights the importance of establishing strict ethical standards and regulations governing the development and deployment of such systems.

Responsible usage of advanced AI technologies entails ongoing collaboration among developers, policymakers, and the public to foster an environment that prioritizes ethical considerations. Proactive measures must be taken to ensure that the benefits of chain-of-thought distillation in world models do not come at the cost of societal trust and well-being. Balancing innovation with accountability is imperative in navigating the ethical landscape associated with these advancements.

Conclusion: The Transformative Potential of Chain-of-Thought Distillation

Taken together, the advances discussed here suggest that chain-of-thought distillation can significantly improve how world models are built and refined. Leveraging sequential reasoning processes allows models to extract deeper insights and develop a more nuanced understanding of complex scenarios. As discussed throughout this article, chain-of-thought distillation not only supports the learning process but also promotes greater accuracy and reliability in the outputs of AI systems.

One of the most important points is the improved capacity of these models to predict and simulate real-world situations, thanks to the structured reasoning this methodology provides. By focusing on the layers of reasoning that guide decision-making, chain-of-thought distillation equips AI systems to analyze data more holistically. This leads to more sophisticated interactions and responses in applications ranging from natural language processing to robotic automation.

The implications for future AI development are also significant. Integrating chain-of-thought distillation into existing AI architectures could be a major step toward stronger reasoning capabilities in machines. As these systems mature, their impact on industries such as education, healthcare, and manufacturing could be transformative. Realizing this potential responsibly also requires attention to the ethical considerations surrounding its implementation, so that these advancements contribute positively to society.

Overall, chain-of-thought distillation stands as a pivotal element in the ongoing quest for improving world models. Its emphasis on structured reasoning promises to redefine the boundaries of what artificial intelligence can accomplish, ultimately guiding us toward more intelligent and adaptive systems.