Introduction to Loss Geometry

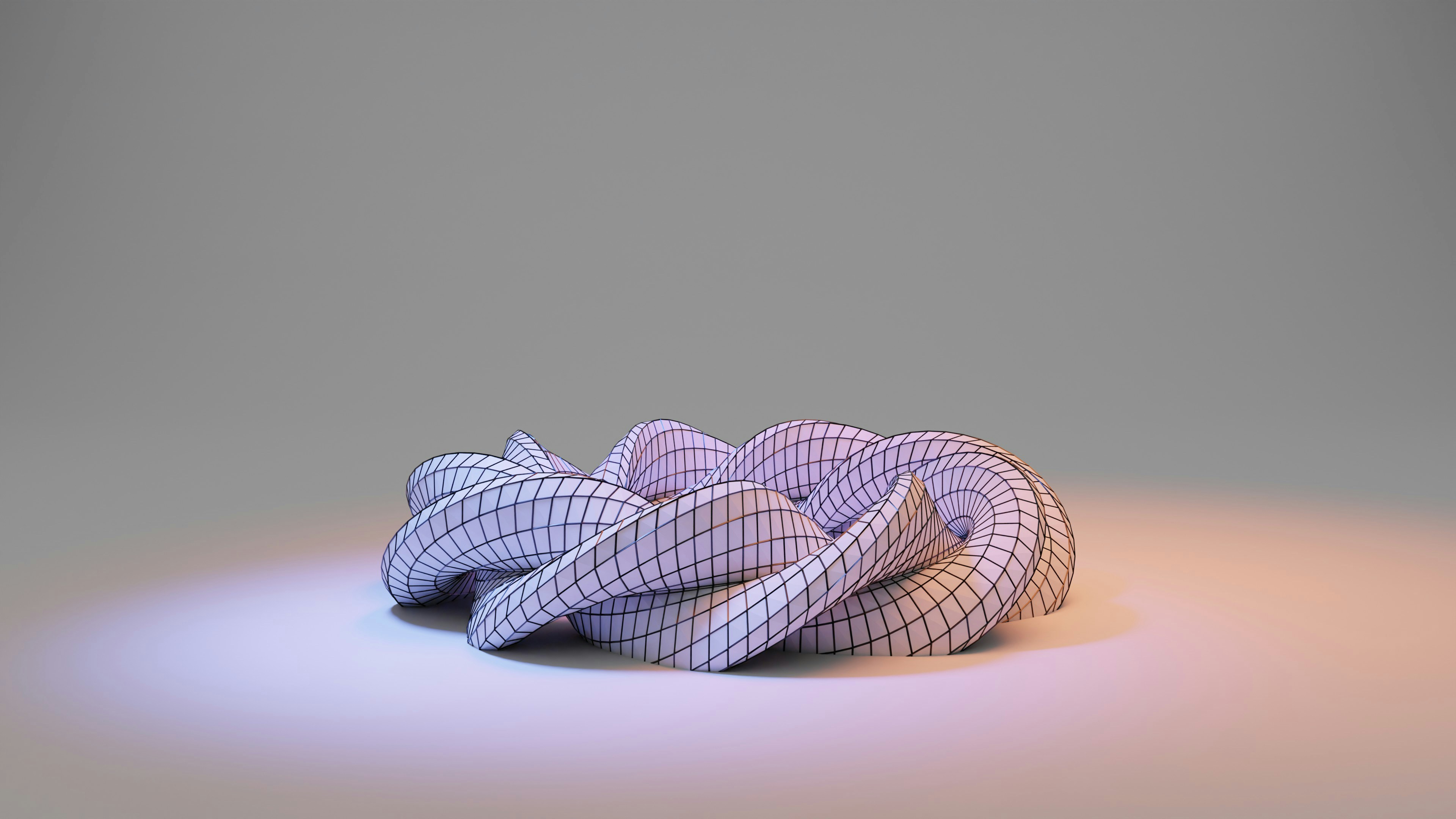

Loss geometry is a central concept in machine learning, particularly in the context of optimization algorithms. It refers to the geometric structure of the loss landscape: how the loss function varies as the model parameters change. Understanding this structure is crucial for analyzing optimization methods such as gradient descent, including whether they converge and what kind of solution they converge to.

In essence, the shape of the loss landscape can significantly affect the training process of a machine learning model. For instance, a loss surface with wide, gently curved basins tends to be easier to optimize, because the optimizer can take larger steps without overshooting. Conversely, sharp valleys and narrow ravines create challenges: the gradient points mostly across the ravine rather than along it, so the optimizer oscillates between the walls and makes slow progress, and it may settle in a poor local minimum. The study of loss geometry sheds light on why certain algorithms perform better than others depending on the nature of the loss landscape.
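To make the ravine picture concrete, here is a minimal sketch of plain gradient descent on a toy two-parameter loss. The function `0.5 * (x**2 + 100 * y**2)`, the learning rate, and all names are illustrative assumptions, not something taken from a real model.

```python
def ravine_grad(x, y, steep=100.0):
    """Gradient of f(x, y) = 0.5 * (x**2 + steep * y**2), a narrow ravine along x."""
    return x, steep * y


def descend(x, y, lr, steps):
    """Plain gradient descent; returns the list of visited (x, y) points."""
    path = [(x, y)]
    for _ in range(steps):
        gx, gy = ravine_grad(x, y)
        x, y = x - lr * gx, y - lr * gy
        path.append((x, y))
    return path


# The learning rate is chosen close to the stability limit of the steep direction.
path = descend(x=1.0, y=1.0, lr=0.019, steps=50)
for step in (0, 1, 2, 3, 50):
    print(step, path[step])
```

In this toy run the `y` coordinate flips sign on every step (the optimizer bounces between the ravine walls), while `x`, the direction along the ravine floor, shrinks only slowly.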

Moreover, the dimensionality of the parameters also plays an important role in loss geometry. High-dimensional spaces are common in modern machine learning models, and navigating these intricate landscapes can be complex. Visualizing the loss surface along one or two directions in parameter space helps build intuition about how specific points relate to the overall landscape, while optimizers such as SAM (Sharpness-Aware Minimization) use information about the local geometry directly during training.
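One simple way to look at a high-dimensional loss surface is to evaluate the loss along a single random direction through a point of interest. The sketch below does this for a stand-in loss; `toy_loss`, the ten-parameter setup, and the helper names are hypothetical and only meant to show the mechanics.

```python
import random


def toy_loss(params):
    """Hypothetical stand-in for a model's loss: a simple anisotropic quadratic."""
    return sum(0.5 * (i + 1) * p * p for i, p in enumerate(params))


def random_unit_direction(dim, rng):
    """Sample a random direction and normalize it to unit length."""
    d = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = sum(v * v for v in d) ** 0.5
    return [v / norm for v in d]


def loss_slice(loss_fn, params, direction, alphas):
    """Evaluate the loss along the line params + alpha * direction."""
    return [
        loss_fn([p + a * d for p, d in zip(params, direction)])
        for a in alphas
    ]


rng = random.Random(0)
params = [0.0] * 10                         # pretend these are trained weights
direction = random_unit_direction(len(params), rng)
alphas = [i / 10 for i in range(-10, 11)]   # -1.0 ... 1.0
curve = loss_slice(toy_loss, params, direction, alphas)
for a, value in zip(alphas, curve):
    print(f"{a:+.1f}  {value:.4f}")
```

Plotting `curve` against `alphas` gives a one-dimensional cross-section of the landscape. A single slice can hide structure in other directions, so it is best read as a rough picture rather than a faithful map.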

Thus, grasping the concept of loss geometry is essential in machine learning. It informs the development and selection of optimization algorithms, helping to optimize model training and improve convergence behaviors.

What is the SAM (Sharpness-Aware Minimization) Optimizer?

The Sharpness-Aware Minimization (SAM) optimizer aims to improve how well machine learning models generalize by changing how parameter updates are computed. Traditional optimizers minimize the loss at the current parameters only; SAM also takes into account the loss in a small neighborhood around them. As a result, SAM seeks solutions that not only have low loss but also remain at low loss under small perturbations in the parameter space.

The core principle of SAM revolves around the idea of sharpness. A ‘sharper’ minimum is one where small changes in model parameters lead to significant increases in loss, which is associated with a higher risk of overfitting. SAM mitigates this risk by steering training toward flatter minima, which are believed to generalize better on unseen data. Each update is a two-step process: first, SAM computes the gradient of the loss at the current parameters and uses it to move a small, fixed distance in the direction that increases the loss the most; then it computes the gradient at that perturbed point and applies it, through the base optimizer, to the original parameters.
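The two-step update can be sketched in a few lines of plain Python. This is a minimal illustration with plain gradient descent as the base optimizer, not a reference implementation: the toy loss, the names `sam_step`, `lr` and `rho` (the neighborhood radius), and their default values are all assumptions made for the example.

```python
import math


def loss(w):
    """Toy loss: 0.5 * (w0**2 + 10 * w1**2)."""
    return 0.5 * (w[0] ** 2 + 10.0 * w[1] ** 2)


def grad(w):
    """Analytic gradient of the toy loss."""
    return [w[0], 10.0 * w[1]]


def sam_step(w, grad_fn, lr=0.01, rho=0.05):
    """One SAM update with plain gradient descent as the base optimizer."""
    # Step 1: the gradient at the current weights gives the ascent direction.
    g = grad_fn(w)
    g_norm = math.sqrt(sum(v * v for v in g))
    eps = [rho * v / (g_norm + 1e-12) for v in g]
    # Step 2: gradient at the perturbed weights, applied to the ORIGINAL weights.
    g_perturbed = grad_fn([wi + ei for wi, ei in zip(w, eps)])
    return [wi - lr * gi for wi, gi in zip(w, g_perturbed)]


w = [1.0, 1.0]
initial = loss(w)
for _ in range(200):
    w = sam_step(w, grad)
print(initial, loss(w))
```

Note that the perturbed weights are only used to evaluate the second gradient; the update itself starts from the unperturbed weights.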

Traditional optimization methods such as Stochastic Gradient Descent (SGD) or Adam adjust parameters based solely on the gradient at the current point. SAM is typically used as a wrapper around such a base optimizer. It remains a first-order method and does not compute curvature explicitly, but by evaluating the gradient at a worst-case nearby point it becomes sensitive to how quickly the loss rises around the current parameters. This distinction matters in contexts where the landscape is complex and may involve numerous local minima. Applications of SAM have shown promising results in deep learning, particularly in tasks involving neural networks where robustness and generalization to new data are paramount.

Understanding the Challenges of Complex Loss Landscapes

The optimization of machine learning models is often hindered by the complexities of loss landscapes. A complex loss landscape, characterized by sharp minima, saddle points, and plateaus, creates significant challenges for the optimizer. These features contribute to the overall difficulty in finding optimal parameters, which can consequently affect model performance.

Optimizers can converge to sharp minima, and the resulting solutions are highly sensitive to small perturbations in the parameters. Because the loss surface on unseen data rarely matches the training loss surface exactly, a solution in a sharp minimum may fit the training data well yet generalize poorly, ultimately compromising the robustness of the model. The steep walls around such a minimum can also make it difficult for optimization algorithms to escape and explore other, potentially better solutions.
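Sensitivity to parameter perturbations can be measured directly. The sketch below estimates sharpness as the largest loss increase seen over random perturbations of a fixed norm, and compares a sharp and a flat quadratic minimum. The quadratic losses, the radius `rho`, and the sampling scheme are assumptions for illustration. Because it samples only a finite number of directions, the estimate is in general a lower bound on the true worst case.

```python
import random


def make_quadratic(curvature):
    """Return a loss 0.5 * curvature * ||w||^2 whose minimum sits at w = 0."""
    return lambda w: 0.5 * curvature * sum(v * v for v in w)


def sharpness(loss_fn, w, rho, n_samples=100, seed=0):
    """Largest loss increase seen over random perturbations of norm rho."""
    rng = random.Random(seed)
    base = loss_fn(w)
    worst = 0.0
    for _ in range(n_samples):
        d = [rng.gauss(0.0, 1.0) for _ in w]
        norm = sum(v * v for v in d) ** 0.5
        perturbed = [wi + rho * di / norm for wi, di in zip(w, d)]
        worst = max(worst, loss_fn(perturbed) - base)
    return worst


w_min = [0.0, 0.0, 0.0]
sharp = sharpness(make_quadratic(100.0), w_min, rho=0.05)
flat = sharpness(make_quadratic(1.0), w_min, rho=0.05)
print(sharp, flat)
```

Both minima have the same loss value of zero, yet the same perturbation raises the loss a hundred times more at the sharp one.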

Saddle points present another significant hurdle. At a saddle point the gradient is zero, just as at a local minimum, but the loss curves upward in some directions and downward in others. Gradient descent methods can slow to a crawl nearby, because the gradient is small and the component pointing along the downhill directions may be tiny at first. As a result, saddle points can prolong convergence times, imposing computational delays that hinder the efficiency of the training process.
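A classic toy saddle is `f(x, y) = x**2 - y**2`, whose gradient vanishes at the origin even though the loss decreases along `y`. The sketch below (function names and step sizes are illustrative assumptions) runs plain gradient descent from two nearby starting points.

```python
def saddle_grad(x, y):
    """Gradient of f(x, y) = x**2 - y**2, which has a saddle point at (0, 0)."""
    return 2.0 * x, -2.0 * y


def descend(x, y, lr=0.1, steps=100):
    """Plain gradient descent from (x, y)."""
    for _ in range(steps):
        gx, gy = saddle_grad(x, y)
        x, y = x - lr * gx, y - lr * gy
    return x, y


print(saddle_grad(0.0, 0.0))   # gradient at the saddle itself
print(descend(1.0, 0.0))       # starts exactly on the x-axis
print(descend(1.0, 1e-6))      # same start with a tiny offset in y
```

Started exactly on the x-axis, gradient descent walks straight into the saddle and stays there. With a tiny offset in `y` it eventually escapes, but only after the offset has been amplified over many steps, which is the slow phase described above.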

Plateau regions add yet another layer of complexity to the optimization landscape. In these flat regions the gradient is close to zero, so each update barely moves the parameters and the learning process stalls. When an optimizer encounters a plateau, it may struggle to make progress or identify an effective path towards regions of lower loss. As a result, optimization can become inefficient, potentially requiring increased training times or more sophisticated techniques to navigate through these challenging areas.
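The stalling effect is easy to reproduce on a one-dimensional toy loss with a basin in the middle and flat shoulders on either side. The function `1 - exp(-x**2)`, the learning rate, and the starting points below are assumptions chosen purely for illustration.

```python
import math


def plateau_grad(x):
    """Derivative of f(x) = 1 - exp(-x**2): nearly zero once |x| is large."""
    return 2.0 * x * math.exp(-x * x)


def descend(x, lr=0.1, steps=1000):
    """Plain gradient descent on the 1-D loss above."""
    for _ in range(steps):
        x -= lr * plateau_grad(x)
    return x


print(descend(1.0))   # starts inside the basin
print(descend(4.0))   # starts on the plateau
```

The run that starts inside the basin reaches the minimum at zero, while the run that starts on the plateau has barely moved after the same thousand steps.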

How SAM Optimizes Loss Geometry

The Sharpness-Aware Minimization (SAM) optimizer introduces a novel approach to navigating complex loss geometries by focusing on the landscape of the loss function. At its core, SAM enhances traditional optimization techniques by adjusting the trajectory of the optimization process in such a way that prioritizes not just reaching the minima but also ensuring the minima are robust and flatter.

One of the primary mechanisms SAM employs is an explicit probe of local sharpness. Rather than relying on the gradient at the current parameters alone, it estimates how much the loss can increase when the parameter values are perturbed slightly, within a fixed radius. A large increase signals a steep region and a small one a flatter region. Minimizing this worst-case nearby loss steers SAM toward flat minima, which are associated with better generalization of the model on unseen data.
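Written out, the idea of minimizing the worst-case loss in a neighborhood looks roughly as follows. The notation is an assumption introduced here for illustration: $L$ is the training loss, $w$ the parameters, $\epsilon$ the perturbation and $\rho$ the neighborhood radius. The second line is the usual first-order approximation of the inner maximization for an L2 ball.

```latex
% Objective: low loss everywhere within radius \rho of w
\min_{w} \; \max_{\|\epsilon\|_2 \le \rho} L(w + \epsilon)

% First-order approximation of the worst-case perturbation
\hat{\epsilon}(w) = \rho \, \frac{\nabla_w L(w)}{\|\nabla_w L(w)\|_2}

% Gradient actually used for the update, evaluated at the perturbed point
g_{\mathrm{SAM}} = \nabla_w L(w) \,\big|_{\, w + \hat{\epsilon}(w)}
```

Setting $\rho = 0$ makes the perturbation vanish, and the update reduces to that of the base optimizer.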

In practice, SAM does not classify regions as sharp or flat and then switch behavior. Every update uses the gradient evaluated at the perturbed point. In sharp regions, where the loss rises quickly under perturbation, this gradient differs noticeably from the ordinary one and pulls the parameters toward areas whose whole neighborhood has low loss. In flatter regions, the perturbed gradient is close to the ordinary gradient and the update resembles that of the base optimizer. The intended result is an improvement in generalization, as models are less likely to overfit to the training data.

Furthermore, by folding information about the local geometry of the loss into every update, SAM trades extra computation per step for solutions that tend to perform better on held-out data. The update remains a simple first-order rule, which is why SAM can be combined with existing optimizers and training pipelines. Ultimately, SAM’s contribution is less about making the optimization process faster and more about improving the quality of the solution it reaches.

Comparing SAM with Traditional Optimizers

In recent years, the introduction of the Sharpness-Aware Minimization (SAM) optimizer has sparked substantial interest in the field of machine learning, especially when compared to traditional optimization techniques such as Stochastic Gradient Descent (SGD) and Adam. This comparison becomes particularly significant when evaluating performance metrics like convergence rates, model accuracy, and robustness across various scenarios.

Starting with convergence rates, SAM’s advantage is not speed. Each SAM step requires two forward-backward passes instead of one, so for the same number of epochs training costs roughly twice as much as with the base optimizer alone. In deep learning tasks such as image classification, the benefit usually shows up as better final test accuracy for a given training schedule rather than as fewer iterations or lower compute.

When it comes to model accuracy, SAM is frequently reported to outperform plain SGD and Adam, particularly in high-dimensional parameter spaces. The flatter minima favored by SAM contribute to enhanced generalization capabilities of the trained models. In various benchmark tests, models optimized using SAM demonstrated a lower generalization error compared to those optimized with SGD or Adam alone. This increased accuracy is crucial, especially in applications where precision is paramount.

Moreover, robustness is another area where SAM shows advantages. Traditional optimizers may lead to solutions that are sensitive to perturbations of the parameters, whereas SAM’s focus on sharpness favors models whose loss changes little in a neighborhood of the solution. In practice this has been associated with better tolerance of noisy training labels, and some studies suggest improved resilience to distributional shifts and adversarial attacks, although these effects depend on the task and setup.

In summary, while traditional optimizers such as SGD and Adam have paved the way for advancements in machine learning, SAM presents a compelling complement to them. The evidence suggests that SAM does not accelerate convergence, and in fact costs more per step, but often improves model accuracy and robustness, making it a powerful tool in the optimization toolbox.

Case Studies of SAM in Action

The implementation of the Sharpness-Aware Minimization (SAM) optimizer has demonstrated considerable success across various domains, showcasing its versatility and efficiency in real-world applications. This section outlines several case studies where SAM has been effectively employed, highlighting the problem context, the integration of SAM, and the resulting outcomes.

One notable case study involved the training of convolutional neural networks (CNNs) for image classification tasks. In this scenario, researchers faced the challenge of overfitting, where the network performed well on training data but poorly on unseen data. By integrating SAM into the training process, the researchers adjusted the model’s weights to account for loss sharpness, ultimately improving generalization. The results were substantial, with accuracy rates increasing by over 5% compared to traditional training methods.

Another significant application of SAM occurred within natural language processing (NLP), specifically in training language models for sentiment analysis. The initial models exhibited high variance across different datasets. By employing SAM in the optimization phase, the models became less sensitive to adversarial examples, ensuring that they maintained performance consistency across a range of inputs. The outcome was a more robust sentiment analysis tool, ultimately leading to better user experiences and more reliable analytical insights.

Additionally, SAM has been utilized effectively in reinforcement learning scenarios, where agents must adapt to dynamic environments. By optimizing the agents’ learning processes with SAM, practitioners noticed improved performance in various simulations, including complex games and robotics. This was attributed to SAM’s ability to stabilize learning, thereby allowing the agents to navigate intricate environments with greater ease and precision.

Overall, these case studies exemplify the tangible benefits of implementing SAM across diverse applications, resulting in enhanced model performance and improved generalization capabilities.

Limitations and Considerations when Using SAM

The SAM optimizer, while a powerful tool for navigating complex loss landscapes in machine learning, does come with inherent limitations and considerations that practitioners must be aware of. One notable drawback is the computational overhead associated with integrating SAM into the training process. SAM needs not only the standard gradient but also a second gradient at the perturbed parameters, which means two forward-backward passes per step and roughly double the computation time of each update relative to the base optimizer. This cost is most noticeable for large-scale models and may not be feasible in scenarios with limited computational resources or tight time constraints.
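The overhead can be made visible by counting gradient evaluations. The sketch below wraps a toy gradient function in a counter and runs the same number of steps with plain gradient descent and with a SAM-style step. The class `CountingGrad`, the toy loss, and the hyperparameter values are hypothetical and serve only as an illustration.

```python
import math


class CountingGrad:
    """Wraps a gradient function and counts how often it is called."""

    def __init__(self, grad_fn):
        self.grad_fn = grad_fn
        self.calls = 0

    def __call__(self, w):
        self.calls += 1
        return self.grad_fn(w)


def quad_grad(w):
    """Gradient of the toy loss 0.5 * ||w||^2."""
    return list(w)


def sgd_step(w, grad_fn, lr=0.1):
    g = grad_fn(w)                                   # one gradient per step
    return [wi - lr * gi for wi, gi in zip(w, g)]


def sam_step(w, grad_fn, lr=0.1, rho=0.05):
    g = grad_fn(w)                                   # first gradient
    norm = math.sqrt(sum(v * v for v in g)) + 1e-12
    w_adv = [wi + rho * gi / norm for wi, gi in zip(w, g)]
    g_adv = grad_fn(w_adv)                           # second gradient
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]


sgd_grad, sam_grad = CountingGrad(quad_grad), CountingGrad(quad_grad)
w_sgd, w_sam = [1.0, -2.0], [1.0, -2.0]
for _ in range(100):
    w_sgd = sgd_step(w_sgd, sgd_grad)
    w_sam = sam_step(w_sam, sam_grad)
print(sgd_grad.calls, sam_grad.calls)   # prints: 100 200
```

In a real network each gradient evaluation is a full forward and backward pass, so the doubling in this counter translates into roughly double the time per training step.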

Moreover, there are certain situations where SAM’s performance may not align with practitioners’ expectations. It is particularly sensitive to the choice of hyperparameters, and improper tuning can lead to suboptimal results. For instance, a poorly chosen radius for the neighborhood in which perturbations are applied can result in ineffective updates: if the radius is too small, SAM behaves almost like its base optimizer, and if it is too large, the perturbed gradient no longer reflects the landscape near the current parameters. Additionally, in scenarios where the loss landscape is not particularly complex or exhibits minimal curvature, the advantages of employing SAM over simpler optimizers may diminish. Thus, it becomes imperative to assess the complexity of the task at hand before deciding to implement SAM.
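A toy experiment gives a feel for how the neighborhood radius changes behavior. The sketch below runs a SAM-style update on the one-dimensional loss `0.5 * w**2` for a few radii. Calling the radius `rho`, the chosen values, and the helper name `sam_final_loss` are assumptions for illustration, and a quadratic this simple says nothing about how to tune the radius for a real network.

```python
def sam_final_loss(rho, lr=0.1, steps=500, w=1.0):
    """Run a SAM-style update on the 1-D loss 0.5 * w**2 and return the final loss."""
    for _ in range(steps):
        g = w                                    # gradient of 0.5 * w**2
        eps = rho * g / abs(g) if g != 0.0 else 0.0
        w -= lr * (w + eps)                      # gradient taken at w + eps
    return 0.5 * w * w


for rho in (0.0, 0.05, 0.5):
    print(rho, sam_final_loss(rho))
```

With a radius of zero the update is identical to plain gradient descent and the loss goes essentially to zero. With a fixed positive radius the perturbed gradient never vanishes, so the iterate keeps hovering around the minimum, and the residual loss grows with the radius.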

Another key consideration is model architecture. Certain architectures may inherently benefit from SAM, while others may not see significant improvements. For example, convolutional neural networks (CNNs) may leverage SAM’s strengths more effectively than simpler architectures due to their complex loss surfaces. Therefore, conducting preliminary experiments to determine the optimizer’s effectiveness on specific models is advisable.

In summary, while SAM offers promising advantages in optimizing complex loss geometries, practitioners should carefully weigh its computational demands, sensitivity to hyperparameters, and compatibility with model architecture. Employing SAM effectively requires thoughtful consideration of these factors to ensure the best possible outcomes in machine learning endeavors.

Future Directions in Loss Landscape Exploration

The exploration of loss landscapes is a rapidly evolving domain in machine learning research, particularly regarding optimization techniques such as the Sharpness-Aware Minimization (SAM) optimizer. As researchers deepen their understanding of the intricate topology that characterizes loss landscapes, several potential directions emerge that could significantly augment the efficacy of model training.

Firstly, one promising area of inquiry involves the integration of advanced visualization techniques, which can provide insights into the geometry of loss landscapes. By employing tools such as topological data analysis or dimensionality reduction methods, researchers could uncover hidden structure within the loss landscape, leading to improved optimization strategies. Enhanced visualization methods may allow developers to identify regions of the landscape that are prone to sharp minima, guiding the selection of more robust optimization paths.

Another avenue for improvement lies in the theoretical underpinnings of existing optimization techniques. Further developing the mathematical foundation of SAM could yield new methodologies that reduce sensitivity to hyperparameters, ultimately making training more efficient. There is also potential in exploring connections between SAM and other optimization strategies, such as momentum-based optimizers or adaptive learning rate methods. Innovations in algorithmic design could reduce computational overhead while preserving or enhancing training outcomes.

Moreover, connecting loss landscape exploration with contemporary advancements in neural network architectures may lead to synergies that influence future practices. As neural networks, particularly deep learning models, become increasingly complex, a better understanding of how architecture interacts with the loss landscape could inform both model design and optimization approaches.

In conclusion, the future of loss landscape exploration holds significant promise, with the potential for elucidating critical aspects of model training. By embracing these emerging trends and integrating fresh perspectives, researchers can enhance the overall efficacy and reliability of optimization techniques, including SAM, in developing artificial intelligence systems that are both powerful and adaptable.

Conclusion

In this blog post, we have explored the intricate world of loss geometry and its significance in optimizing machine learning models. The concept of loss geometry facilitates a better understanding of how different optimization techniques behave in navigating the loss landscape. A core portion of our discussion revolved around the advantages provided by the Sharpness-Aware Minimization (SAM) optimizer. The SAM approach enhances the model’s ability to generalize by identifying flatter regions in the loss landscape, which can lead to improved performance on unseen data.

The intricacies of loss geometry comprise several dimensions, including the shape and curvature of the loss function, which can greatly influence the effectiveness of various optimization strategies. It is vital for data scientists and machine learning practitioners to comprehend these aspects to select the right tools and methodologies effectively. SAM’s innovative approach allows it to maintain robustness amidst the complexities that arise in non-convex optimization problems.

By actively engaging with the loss geometry of the models we work with, we can better grasp the implications of optimizers like SAM. This understanding not only aids in achieving higher accuracy on held-out data but also in fostering more reliable prediction capabilities. Overall, as we continue to advance in the field of machine learning, recognizing the relevance of loss geometry and leveraging optimizers such as SAM, where their extra cost is justified, will remain important for driving progress and innovation in model optimization.