Introduction to Catastrophic Forgetting

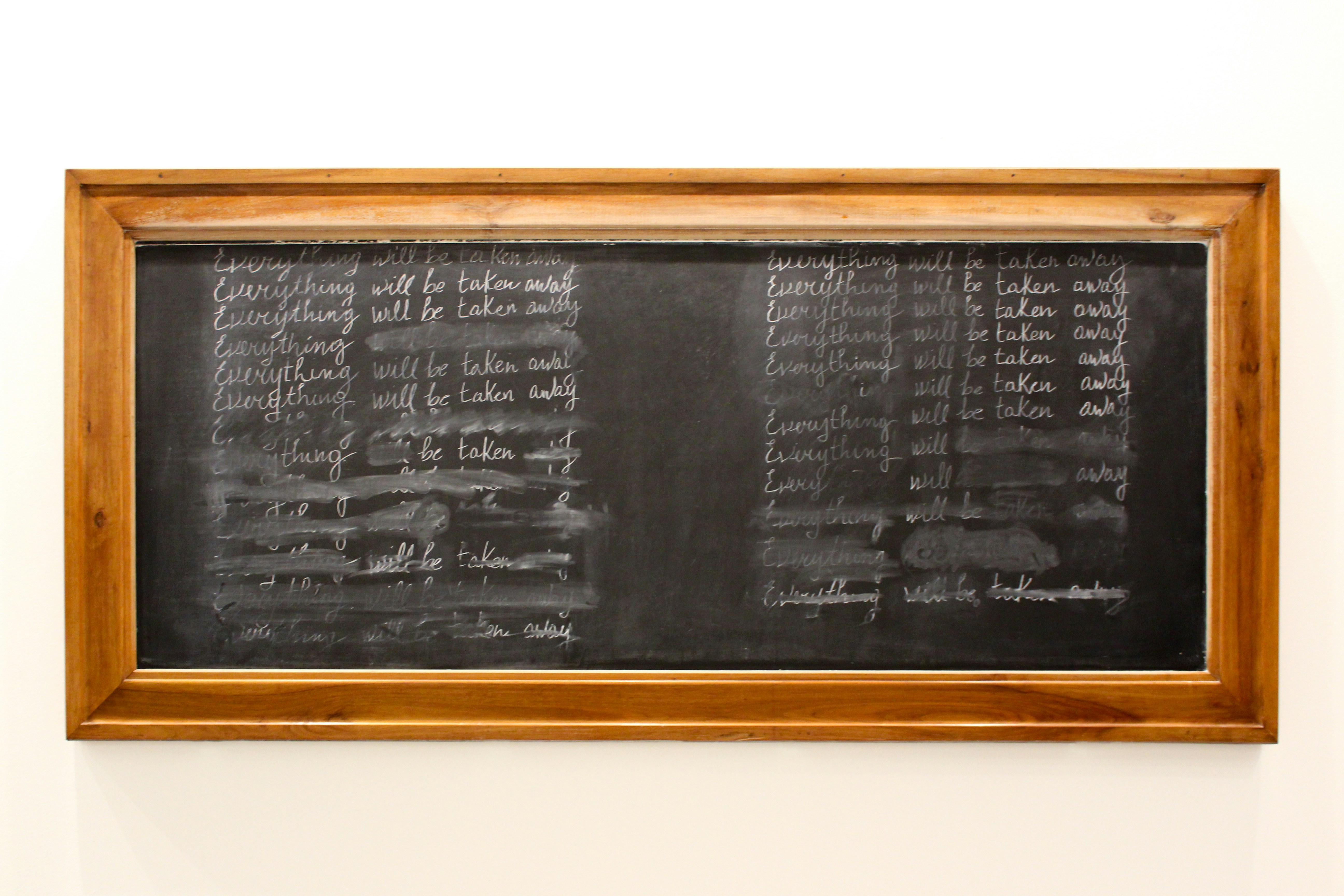

Catastrophic forgetting is the phenomenon in which a machine learning model, typically a neural network, loses previously acquired knowledge when it learns new information. The issue is most prominent in continual training, where a model is expected to learn sequentially from multiple tasks or datasets without forgetting the earlier ones. Understanding catastrophic forgetting matters because it limits how well artificial intelligence systems can adapt over time while retaining essential information.

The challenges posed by continual training are manifold. As a model learns a new task, its gradient updates can overwrite weights that encoded earlier tasks, which degrades performance on those tasks. This loss of information can severely reduce the model’s usefulness, making it crucial to investigate methods that mitigate forgetting. Addressing catastrophic forgetting is therefore essential for applications that require long-term accumulation of knowledge, such as autonomous driving, personalized recommendations, and natural language processing.

Gaining insights into the mechanisms of catastrophic forgetting can aid in the advancement of better training frameworks and architectures. It drives the development of techniques such as regularization strategies and memory-based approaches designed to preserve the integrity of earlier learnings. Understanding this phenomenon not only contributes to improving the performance of AI systems but also enhances their ability to function in dynamic environments where continuous learning and adaptation are essential. Therefore, a thorough exploration of catastrophic forgetting is integral to the ongoing evolution of machine learning and neural network design.

The Mechanism of Catastrophic Forgetting

Catastrophic forgetting occurs when a neural network loses previously acquired knowledge as it is trained on new tasks. This behavior is particularly problematic in continual training, where models must adapt to a series of different tasks over time. The underlying mechanism is closely tied to how the network stores knowledge in its weights and to the methods used for training.

A primary factor contributing to catastrophic forgetting is the way neural models update their weights during training. As new information is integrated, the updates intended to optimize performance on the current task can inadvertently disrupt weights that encode information relevant to earlier tasks. This interference occurs primarily because the training objective at each stage covers only the current task, so nothing in the loss discourages changes that damage earlier tasks.
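The interference described above can be reproduced in a few lines. The following is a minimal sketch, not a realistic benchmark: it assumes a two-parameter linear model, two synthetic regression tasks whose target weights (`w_task_a`, `w_task_b`) are made up for illustration, and plain full-batch gradient descent.

```python
import numpy as np

def mse(w, X, y):
    """Mean squared error of the linear model X @ w against targets y."""
    return float(np.mean((X @ w - y) ** 2))

def train(w, X, y, lr=0.1, steps=200):
    """Full-batch gradient descent on the MSE loss of a single task."""
    for _ in range(steps):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w = w - lr * grad
    return w

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 2))

# Hypothetical target weights: the two tasks want conflicting solutions.
w_task_a = np.array([1.0, 2.0])
w_task_b = np.array([-2.0, 0.5])
y_a, y_b = X @ w_task_a, X @ w_task_b

# Stage 1: train on task A only.
w = train(np.zeros(2), X, y_a)
loss_a_before = mse(w, X, y_a)

# Stage 2: keep training the same weights, but on task B only.
w = train(w, X, y_b)
loss_a_after = mse(w, X, y_a)

print(f"task A loss after stage 1: {loss_a_before:.6f}")
print(f"task A loss after stage 2: {loss_a_after:.6f}")
```

Because the stage 2 loss never mentions task A, the weights move to task B’s solution and the task A loss rises sharply, even though the model had fitted task A almost perfectly beforehand.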

Memory retention mechanisms play a crucial role in this context. In traditional neural network architectures, memory is stored in the weights of the model. When a network processes new data, it tends to overwrite existing connections that are associated with previous data. As a result, the system reconfigures itself to prioritize the new information, which improves accuracy on the most recent task at the expense of older knowledge.

Furthermore, the phenomenon can be exacerbated by the lack of a structured approach to memory allocation. Without sufficient mechanisms to maintain distinct representations for past tasks, the neural network may struggle to balance between learning new tasks and retaining previously learned ones. Overall, these processes illustrate why catastrophic forgetting poses significant challenges in the realm of continual training, emphasizing the need for novel strategies and approaches that can enhance memory retention while accommodating continuous learning.

Factors Influencing the Acceleration of Catastrophic Forgetting

Catastrophic forgetting represents a significant challenge in the realm of continual learning, where neural networks are expected to adapt to new information without overly compromising previously acquired knowledge. Understanding the factors that accelerate this phenomenon is crucial for the development of more resilient models.

One primary factor is the learning rate. If the learning rate is set too high, the model updates its parameters too aggressively, which rapidly erodes previously learned information. The learning rate therefore involves a trade-off: a lower rate helps retain past knowledge, but it can also slow the model’s ability to assimilate new information. Finding a suitable learning rate is vital for balancing the learning of new tasks against the preservation of old knowledge.
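The effect of the learning rate can be illustrated with a deliberately tiny example. This sketch assumes a single scalar weight and two hypothetical quadratic losses, `(w - 1)^2` for task A and `(w + 1)^2` for task B; the specific rates and step count are arbitrary choices for illustration.

```python
def task_a_loss_after_b(lr, steps=5):
    """Start at task A's optimum, take a few gradient steps on task B,
    and report how much the task A loss has grown."""
    w = 1.0  # optimum of task A, where loss_A = (w - 1)^2 is zero
    for _ in range(steps):
        grad_b = 2.0 * (w + 1.0)  # gradient of loss_B = (w + 1)^2
        w -= lr * grad_b
    return (w - 1.0) ** 2

losses = {lr: task_a_loss_after_b(lr) for lr in (0.01, 0.05, 0.2)}
for lr, loss in losses.items():
    print(f"lr={lr}: task A loss after 5 steps on task B = {loss:.4f}")
```

For the same number of updates on task B, the larger learning rates move the weight further from task A’s optimum, so the task A loss grows faster. The smallest rate retains the most, but it has also made the least progress on task B.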

Another influential aspect is the model architecture. Certain architectures may inherently be more prone to catastrophic forgetting than others. For instance, models in which all tasks share the same tightly coupled weights often struggle more with the conflicting signals from new tasks than models designed with modularity in mind. Exploring architectural modifications, such as using separate pathways for different tasks or employing memory-augmented networks, can help mitigate this issue.

Lastly, the nature of the training data plays a significant role. If subsequent tasks are highly dissimilar to previous ones, the likelihood of forgetting increases. Conversely, training data that maintains a level of similarity can help reinforce prior learning. The presence of redundancy in the data can aid retention, while significant shifts in distribution may provoke memory loss. Overall, the interplay of these factors necessitates careful consideration and analysis when designing continual training systems to combat catastrophic forgetting effectively.

Comparison with Standard Machine Learning Practices

Continual training, distinct from standard machine learning methods, presents unique challenges and opportunities regarding the phenomenon known as catastrophic forgetting. In traditional training settings, a model is trained on a fixed dataset. This approach typically allows the model to effectively learn and generalize from the training data without the risk of forgetting, as the entire dataset remains available throughout the training process.

In contrast, continual training involves incrementally training models on a sequence of tasks, often without access to the data from earlier tasks once new tasks are introduced. This incremental learning can lead to catastrophic forgetting, where the model effectively erases knowledge gained from previous tasks. Standard machine learning practices do not encounter this issue, because the complete dataset stays available and allows for a more stable learning trajectory.

Moreover, standard training practices such as batch learning and data shuffling mean that every example keeps reappearing throughout training, which continually reinforces what the model has already learned. Continual training has no such safeguard, so regularization techniques or replay strategies are needed to mitigate forgetting. For instance, experience replay revisits past data during the training of new tasks and thereby helps preserve earlier learning. Other approaches use dynamic architectures that adapt to the requirements of each task.

Thus, while traditional machine learning may inadvertently sidestep the challenges associated with catastrophic forgetting, continual training necessitates the incorporation of specific strategies designed to maintain learned knowledge. This difference in approach significantly influences how models are developed and demonstrates the need for careful consideration when designing systems intended for lifelong learning.

Implications for AI Development

Catastrophic forgetting poses significant challenges for the development and deployment of Artificial Intelligence (AI) systems, particularly in environments requiring continual learning. As AI technologies are integrated into various applications, the risk of accelerated forgetting can undermine system reliability and operational efficiency. This phenomenon occurs when an AI model abruptly loses previously acquired knowledge upon learning new information. Such a scenario can have severe implications for sectors like healthcare, autonomous driving, and financial services, where decision-making accuracy is essential.

One of the primary risks associated with deploying AI systems that suffer from catastrophic forgetting is the potential compromise of safety. For instance, an autonomous vehicle trained to recognize various road conditions may forget critical driving cues when encountering new data scenarios, such as weather changes or unexpected obstacles. This could lead to erratic behavior, increased accident rates, and loss of public trust in autonomous systems.

Moreover, in the healthcare domain, AI systems are often trained on patient data and medical histories. If these systems experience accelerated forgetting, they might fail to apply what they previously learned about treatments and their outcomes. This gap in knowledge can lead to misdiagnoses and adversely affect patient health outcomes.

Additionally, businesses relying on AI tools for customer relations can suffer if these systems fail to retain customer interactions and preferences. The loss of such data can impede personalized service delivery, damaging customer satisfaction and trust.

Addressing the implications of catastrophic forgetting is vital for the continued advancement and ethical deployment of AI technologies. Developers must focus on creating robust architectures that incorporate mechanisms for knowledge retention, thereby ensuring AI systems maintain reliability and effectiveness as they evolve through continual training.

Approaches to Mitigating Catastrophic Forgetting

Catastrophic forgetting is a significant challenge in continual training, where learning new information can cause a model to lose previously acquired knowledge. Various strategies have been developed to tackle this issue, allowing a model to learn new tasks without sacrificing earlier ones. One of the most promising methods is the application of regularization techniques, which restrict the changes made to important weights during training. Techniques like Elastic Weight Consolidation (EWC) add a penalty term to the loss function that discourages changes to the weights estimated to matter most for earlier tasks, balancing the learning of new tasks with the retention of prior knowledge.
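As a rough illustration of such a penalty, the sketch below implements a quadratic term of the kind used by EWC, where each weight’s deviation from its old value is scaled by an importance estimate. The importance values in `fisher` are made up here; in practice they would be estimated from data on the earlier task (EWC uses the diagonal of the Fisher information). The function names and the strength parameter `lam` are assumptions for this example.

```python
import numpy as np

def ewc_penalty(w, w_old, fisher, lam=1.0):
    """Quadratic penalty: (lam / 2) * sum_i fisher_i * (w_i - w_old_i)^2."""
    return 0.5 * lam * float(np.sum(fisher * (w - w_old) ** 2))

def ewc_penalty_grad(w, w_old, fisher, lam=1.0):
    """Gradient of the penalty with respect to w."""
    return lam * fisher * (w - w_old)

def total_loss(new_task_loss, w, w_old, fisher, lam=1.0):
    """Loss on the new task plus the penalty that protects the old task."""
    return new_task_loss + ewc_penalty(w, w_old, fisher, lam)

w_old = np.array([1.0, 2.0])     # weights after the earlier task
fisher = np.array([10.0, 0.1])   # hypothetical importances: weight 0 matters most
w_new = np.array([1.5, 3.0])     # candidate weights while learning the new task

penalty = ewc_penalty(w_new, w_old, fisher, lam=2.0)
grad = ewc_penalty_grad(w_new, w_old, fisher, lam=2.0)
print(penalty, grad)
```

The first weight moved less than the second, yet it contributes most of the penalty because its importance is far higher. That asymmetry is what lets unimportant weights stay free to learn the new task.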

An alternative approach is rehearsal, in which previously learned examples are revisited during the training of new tasks. This can take the form of explicit rehearsal, where samples from previous tasks are stored and reintroduced into the training process, or pseudo-rehearsal, where synthetic examples stand in for the stored data. By maintaining a connection to earlier learning, these methods help reduce the instability observed when network weights adapt to new tasks.
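Explicit rehearsal is often implemented with a fixed-size buffer of past examples. The sketch below is one possible version, assuming reservoir sampling to decide which examples to keep; the class name `ReplayBuffer`, the helper `mixed_batch`, and the tuple-shaped examples are all hypothetical choices for illustration.

```python
import random

class ReplayBuffer:
    """Fixed-size store of past examples, filled by reservoir sampling so that
    every example seen so far has the same chance of being kept."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Keep the new example with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k):
        """Draw up to k stored examples without replacement."""
        return self.rng.sample(self.items, min(k, len(self.items)))

def mixed_batch(new_examples, buffer, n_replay):
    """Combine examples from the current task with replayed old ones."""
    return list(new_examples) + buffer.sample(n_replay)

buffer = ReplayBuffer(capacity=5)
for i in range(100):
    buffer.add(("task_a", i))

batch = mixed_batch([("task_b", 0), ("task_b", 1)], buffer, n_replay=3)
print(batch)
```

Each training batch for the new task then carries a few old examples, so the loss keeps a term that reflects earlier tasks. The buffer size and the replay ratio are tuning choices that trade memory for retention.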

Another avenue worth exploring involves advanced architectures such as memory-augmented networks, which inherently possess mechanisms for storing and retrieving information akin to human memory systems. These architectures can employ external memory stores that enable the model to access previous experiences more effectively, mitigating the effects of catastrophic forgetting. By utilizing a combination of existing techniques alongside such innovative architectures, researchers can significantly enhance the capabilities of continual learning systems.
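The idea of an external memory store can be sketched as a small key-value table read by similarity. This is a toy illustration under stated assumptions, not a full memory-augmented network: the class `KeyValueMemory`, its cosine-similarity read, and the `temperature` parameter are choices made for this example, and the keys would normally come from a learned encoder.

```python
import numpy as np

class KeyValueMemory:
    """Toy external memory: stores (key, value) rows and reads by soft attention."""

    def __init__(self, key_dim, value_dim):
        self.keys = np.empty((0, key_dim))
        self.values = np.empty((0, value_dim))

    def write(self, key, value):
        # Append a new slot; nothing already stored is modified.
        self.keys = np.vstack([self.keys, key])
        self.values = np.vstack([self.values, value])

    def read(self, query, temperature=0.1):
        # Cosine similarity between the query and every stored key.
        norms = np.linalg.norm(self.keys, axis=1) * np.linalg.norm(query)
        sims = self.keys @ query / (norms + 1e-12)
        # A softmax turns similarities into attention weights over the slots.
        logits = sims / temperature
        weights = np.exp(logits - logits.max())
        weights /= weights.sum()
        return weights @ self.values

memory = KeyValueMemory(key_dim=2, value_dim=1)
memory.write(np.array([1.0, 0.0]), np.array([10.0]))   # experience from an earlier task
memory.write(np.array([0.0, 1.0]), np.array([-10.0]))  # experience from a later task

recalled = memory.read(np.array([1.0, 0.0]))
print(recalled)
```

Writing the second entry does not alter the first, so a query that matches the earlier key still retrieves a value close to the one stored for it. This separation between storage and the trained weights is what makes such designs attractive for continual learning.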

In conclusion, addressing catastrophic forgetting requires a multifaceted approach that integrates various strategies and architectural changes. The continuous exploration and implementation of these methodologies will pave the way for more efficient learning systems capable of retaining knowledge over time while adapting to new information seamlessly.

Case Studies of Catastrophic Forgetting

Catastrophic forgetting has become a significant topic of interest in machine learning and artificial intelligence, particularly in applications involving continual learning. Several key case studies illustrate how this phenomenon manifests in various contexts. One prominent example is research on deep neural networks, especially for image recognition tasks.

In a notable experiment, a neural network trained on a set of image classes was introduced to a new subset of classes without retraining on the original dataset. As anticipated, the performance on the initial classes significantly deteriorated. This loss of knowledge demonstrated a clear instance of catastrophic forgetting, highlighting that without proper mitigation strategies, neural networks can easily overwrite previously acquired knowledge when exposed to new information.

Another case study focuses on natural language processing. Continuous updates to language models with new corpora often result in the models losing competency in previously learned linguistic patterns. For instance, a model fine-tuned on recent articles showed performance declines on older, less frequently encountered texts, and researchers concluded that it had forgotten key elements of its earlier training. This result highlighted the need for robust continual learning frameworks that prevent or minimize the effect.

A third compelling example comes from reinforcement learning, particularly in robotic systems. One experiment showed that robots trained sequentially to perform different tasks experienced substantial drops in performance due to catastrophic forgetting. When the robots were switched from one task to another, they often reverted to less effective behaviors on the earlier task, because learning the new task destabilized the knowledge acquired previously. Observations from this case underscored the importance of techniques such as elastic weight consolidation (EWC) for safeguarding against these knowledge losses.

These case studies collectively emphasize that understanding the mechanisms behind catastrophic forgetting is crucial for developing more resilient models in continual learning environments. Careful consideration of training methodologies and strategic knowledge retention techniques can aid in mitigating these challenges, allowing for the progression of AI capabilities without loss of prior learning.

Future Directions for Research

As the field of artificial intelligence progresses, understanding catastrophic forgetting remains a crucial area for research, particularly in the context of continual training. Future research directions may focus on enhancing the learning algorithms to better manage the trade-off between stability and plasticity. One promising avenue is the exploration of dynamic architectures that adaptively adjust their complexity based on incoming data, potentially minimizing the effects of catastrophic forgetting.

Additionally, integrating approaches from neuroscience could yield valuable insights into how biological systems retain information over time. Investigating brain-inspired learning mechanisms, such as synaptic plasticity, could lead to novel frameworks for developing more robust continual learning systems. Understanding biological underpinnings might also facilitate the design of systems that can effectively consolidate knowledge across multiple tasks without significant degradation of previously learned information.

Another vital area for exploration is the incorporation of memory-augmented networks. Techniques such as external memory components may present opportunities to counteract forgetting by providing a means to store and retrieve past experiences efficiently. Research that combines memory mechanisms with traditional learning models has the potential to create systems that are less prone to catastrophic forgetting.

Furthermore, a better understanding of how task similarity affects learning can provide a clearer picture of how various learning scenarios impact retention. Investigating how different tasks shape a model’s internal representations, or whether certain task sequences exacerbate forgetting, could prove beneficial.

Finally, developing standardized benchmarks is essential for assessing the performance of continual learning algorithms in the presence of catastrophic forgetting. A consistent set of metrics would facilitate comparative studies and enable researchers to identify the most effective strategies for mitigating forgetting.
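To make the idea of consistent metrics concrete, the sketch below computes three quantities often reported in continual learning work: final average accuracy, backward transfer, and average forgetting. It assumes an accuracy matrix where `acc[i, j]` is the accuracy on task `j` after training on tasks `0..i`. The exact definitions vary between papers, so this is one common convention, and the numbers in the example matrix are invented.

```python
import numpy as np

def continual_metrics(acc):
    """acc[i, j] = accuracy on task j after training on tasks 0..i."""
    acc = np.asarray(acc, dtype=float)
    final = acc[-1]                 # accuracy on each task at the very end
    after_learning = np.diag(acc)   # accuracy on each task right after learning it

    # Mean accuracy over all tasks once training has finished.
    average_accuracy = float(final.mean())
    # Backward transfer: how later training changed earlier tasks (negative = forgetting).
    backward_transfer = float(np.mean(final[:-1] - after_learning[:-1]))
    # Forgetting: best accuracy ever reached on a task minus its final accuracy.
    best_earlier = acc[:-1, :-1].max(axis=0)
    forgetting = float(np.mean(best_earlier - final[:-1]))

    return {
        "average_accuracy": average_accuracy,
        "backward_transfer": backward_transfer,
        "forgetting": forgetting,
    }

# Invented results for three tasks learned in sequence.
acc = [
    [0.90, 0.10, 0.10],
    [0.60, 0.85, 0.10],
    [0.50, 0.70, 0.80],
]
metrics = continual_metrics(acc)
print(metrics)
```

Reporting the full accuracy matrix alongside these summaries lets other researchers recompute the metrics under a different convention.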

Conclusion

In summary, catastrophic forgetting is a significant challenge in continual training of artificial intelligence systems. Throughout this discussion, we have explored the mechanisms that contribute to this phenomenon, including the roles of weight updates, learning rate, training data, and neural network architecture. When an AI model is trained on new tasks or datasets, it often loses the ability to recall previously learned information, leading to a decline in performance on older tasks. Understanding this process is crucial for researchers and developers who aim to create robust AI systems capable of lifelong learning.

Furthermore, the implications of catastrophic forgetting extend beyond mere performance metrics; they touch on the fundamental capabilities of AI to adapt and evolve as new information becomes available. Addressing this challenge involves leveraging various strategies, such as regularization techniques and memory management systems, which can enhance the retention of previously acquired knowledge. As the landscape of artificial intelligence continues to progress, the exploration of new methodologies to mitigate catastrophic forgetting remains imperative.

Ultimately, grasping the dynamics of catastrophic forgetting in continual training empowers stakeholders to design more effective AI systems that can maintain high performance across a variety of tasks. This knowledge not only aids in overcoming current limitations but also lays the groundwork for future advancements in AI’s ability to learn continuously and adaptively. The future of AI hinges on our ability to understand and tackle these fundamental issues, ensuring that machines can learn from experiences without sacrificing the knowledge they have already acquired.